Getting Started with ATOM

Intended Audience

This document is intended for network administrators and operators who use ATOM to perform network management, configuration management, service automation and MOPs (Methods of Procedure).

References

- ATOM Deployment Guide – All aspects of ATOM Deployment including sizing and deployment process

- ATOM User Guide – Master [This Document]

- ATOM User Guide – Remote Agent Deployment Guide

- ATOM User Guide – Performance Management & Alerting

- ATOM User Guide – Network Configuration Compliance, Reporting & Remediation

- ATOM API Guide – Discusses all external interfaces and integration flows

- ATOM Platform Guide – Discusses Service model, Device model and Workflow development

ATOM Solution Overview

The following sections provide a brief overview of ATOM features.

Configuration Management

ATOM provides configuration management capabilities for a wide variety of devices. These include configuration archival, scheduling, trigger-driven configuration sync and configuration diff.

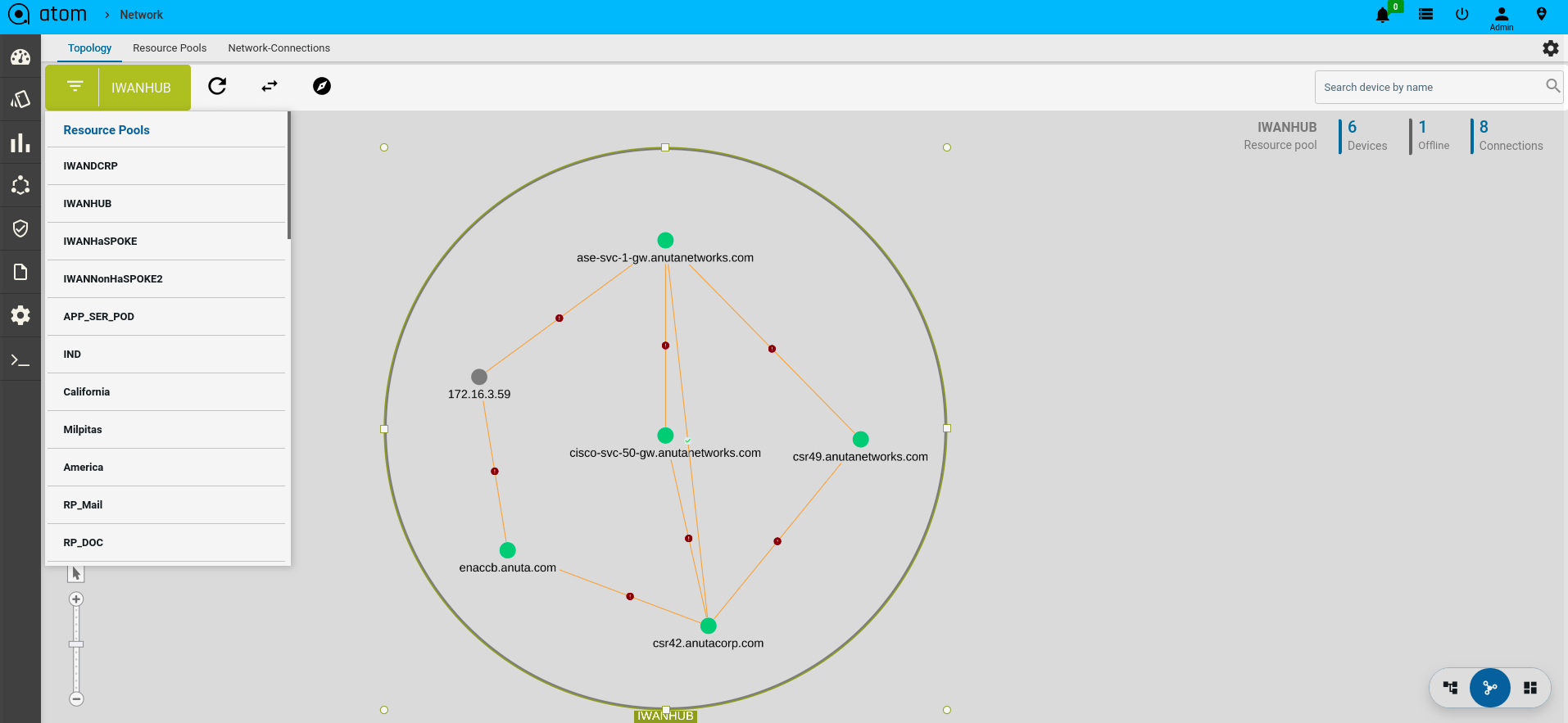

Topology

ATOM provides topology discovery through CDP and LLDP. Topology can be displayed hierarchically using Resource Pools (Device Groups). The topology view overlays alarm and performance information.

Collection & Reporting

ATOM supports collection of network operational and performance data through various protocols such as SNMP, SNMP Trap, Syslog and Telemetry. This information can be visualized in ATOM as reports or rendered in Grafana as charts. The Admin Guide discusses report customization in further detail.

Network Automation

ATOM provides model-driven network automation for stateful services. Stateful services involve a service model (YANG) and some business logic. Service model development is covered in the ATOM Platform Guide. The Admin Guide discusses how to deploy and operate a service.

Workflow & Low Code Automation

ATOM provides an intuitive graphical designer to design, deploy and execute simple or complex network operations and procedures. It allows the administrator to configure pre-checks, post-checks and approval flows. Workflow creation is covered in the ATOM Platform Guide. The Admin Guide discusses how to deploy and operate workflows.

Telemetry & Network Analytics

In today’s economy, data is the new oil. Anuta’s ATOM helps organizations collect large volumes of network data from thousands of devices and generate in-depth insights that help them deliver innovative applications and solutions to their customers. ATOM can collect network data from a variety of sources, including model-driven telemetry, SNMP and Syslog. The differing data formats of these sources are normalized to provide a single, consistent view to the administrator. Grafana is packaged as part of ATOM to view historical data, observe patterns and predict future trends. Organizations can integrate their Big Data and AI platforms with ATOM to generate business insights from network element configuration and operational state.

Procedure to Create Native Telemetry Collection

- Create a new Telemetry Collection

- Provide a name for the collection

- Choose Junos as the platform

- Select UDP as the transport; ATOM automatically selects compact GPB encoding with Dial-Out mode.

- To configure resource filtering on the device, select the Filtering tab, choose the sensor name from the drop-down and add a regex pattern.

- Select the ALL option if the same resource filter applies to all sensors.

- Once the telemetry collection is provisioned, users cannot edit the entry.

- A subscription is not required in this case.

Closed Loop Automation

Anuta ATOM allows administrators to define a baseline behavior for their network and remediation actions to be initiated on any violation of this behavior. ATOM collects a large amount of network data from multi-vendor infrastructure using Google Protobufs and stores it in a time-series database. The ATOM correlation engine constantly compares the collected data with the baseline behavior to detect deviations. On any violation, the predefined remediation action is triggered, maintaining network consistency.

The solution simplifies troubleshooting by providing the context of the entire network. Customers can define KPI metrics and corrective actions to automate SLA compliance.
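To make the baseline-and-remediation loop above concrete, here is a minimal Python sketch. It assumes a baseline is simply an acceptable low/high range per KPI metric and that remediation is a callback; `Baseline`, `check_and_remediate` and the metric names are hypothetical illustrations, not ATOM's correlation engine or API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Baseline:
    """Hypothetical baseline: the acceptable [low, high] range of one KPI metric."""
    metric: str
    low: float
    high: float


def check_and_remediate(samples: Dict[str, float],
                        baselines: List[Baseline],
                        remediate: Callable[[str, float], str]) -> List[str]:
    """Compare the latest sample of each metric with its baseline and call the
    remediation callback once for every violation found."""
    actions = []
    for b in baselines:
        value = samples.get(b.metric)
        if value is None:
            continue  # nothing collected for this metric yet
        if not b.low <= value <= b.high:
            actions.append(remediate(b.metric, value))
    return actions


baselines = [Baseline("cpu_util_pct", 0, 80), Baseline("if_errors_per_min", 0, 10)]
samples = {"cpu_util_pct": 93.5, "if_errors_per_min": 2}
actions = check_and_remediate(samples, baselines,
                              lambda m, v: f"remediate {m} (value={v})")
print(actions)  # ['remediate cpu_util_pct (value=93.5)']
```

In a real deployment the remediation callback would start a workflow or push a corrective configuration; here it only returns a string so the flow is easy to follow.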

Multi-Vendor support

Anuta ATOM has the most comprehensive vendor support. It supports thousands of devices spanning 45+ vendors and automates use cases including Data Center Automation, InterCloud, Micro-Segmentation, Security as a Service, LBaaS, Campus/Access, Branch/WAN, IP/MPLS Edge, Virtual CPE and NFV.

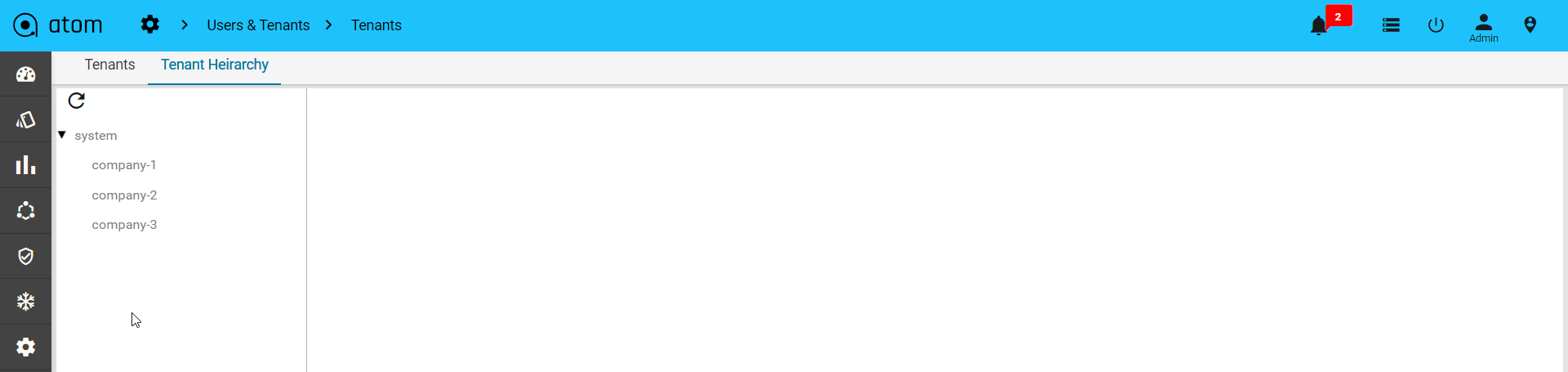

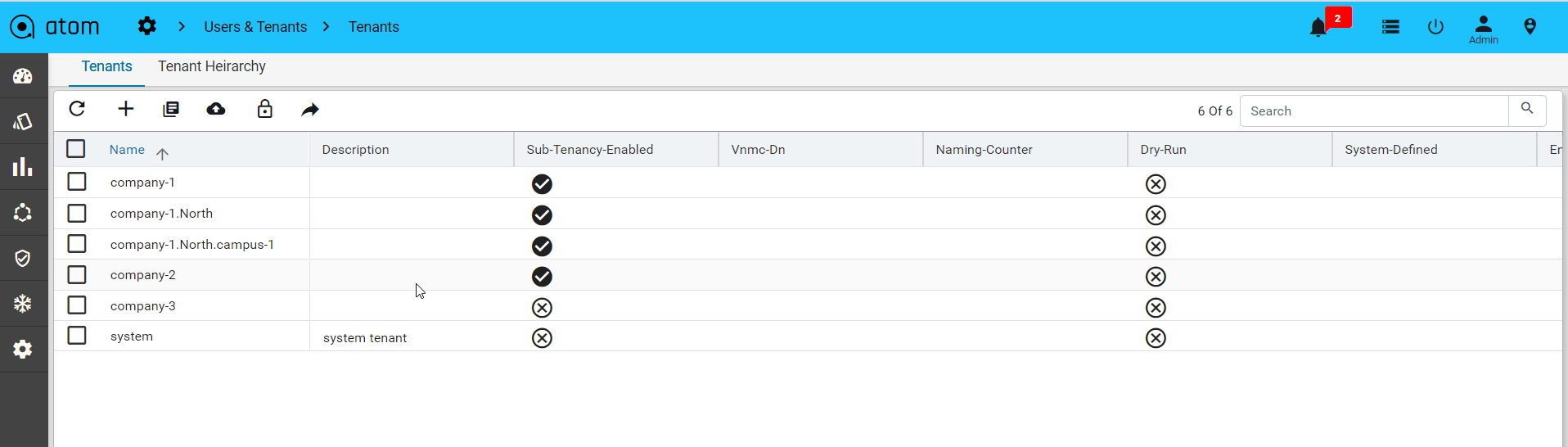

Multi Tenancy

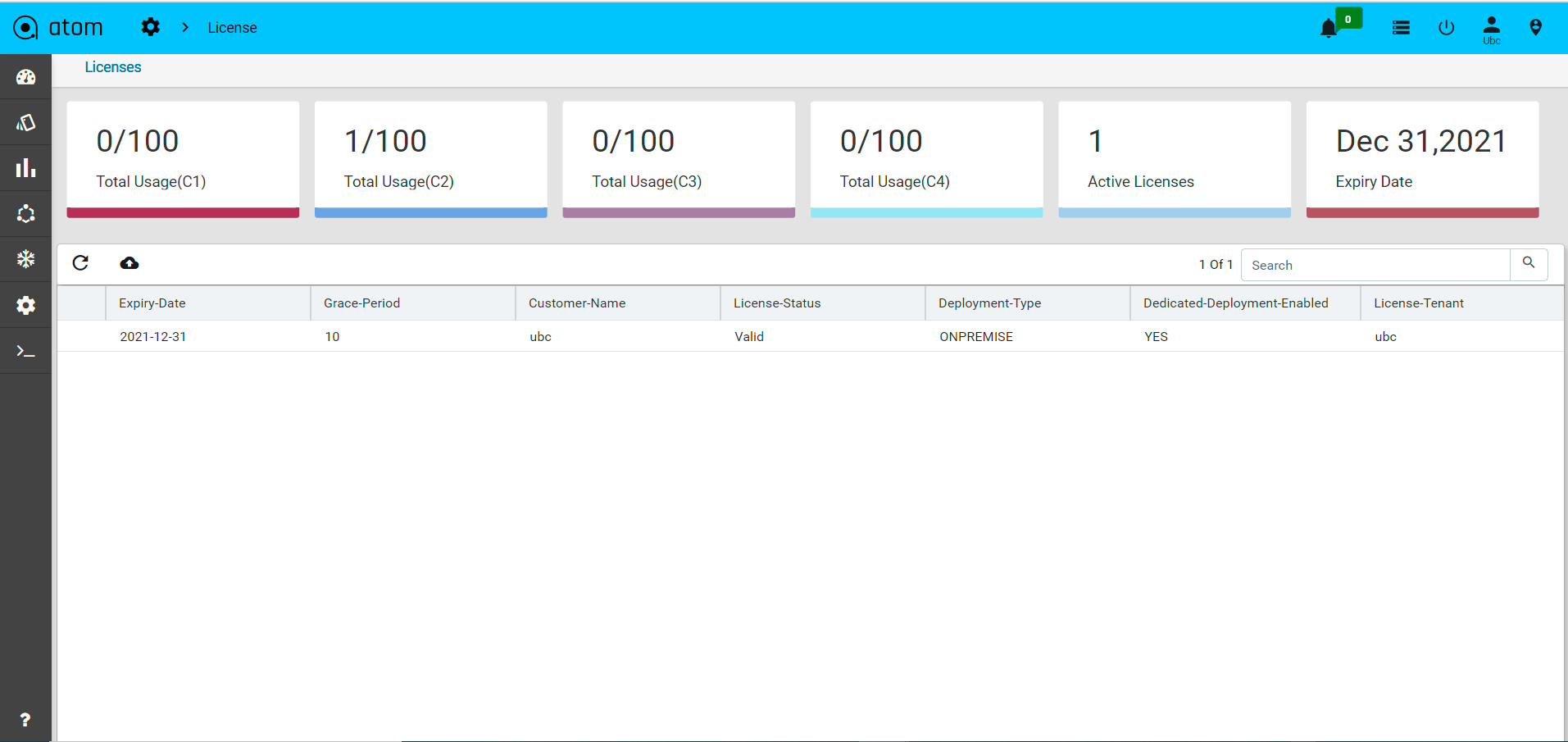

ATOM supports Multi-Tenancy across organizations and Sub-Tenancy within an Organization.

- Multi-Tenancy – Supported on ATOM On-Premises & ATOM Silo/Dedicated Deployment on ATOM Cloud.

- Tenants (for example, Coke and Pepsi) are completely isolated from each other.

- Sub-Tenancy – Supported on all ATOM deployments: On-Premises, ATOM Cloud Silo/Dedicated and ATOM Cloud Shared.

- Data sharing across sub-tenants (for example, Coke.east, Coke.west and Coke.it) is controlled by the Tenant Admin.

- By default, data at a higher-level tenant is visible to its sub-tenants.

- By default, data under a sub-tenant is visible to the tenant.

- By default, data under a sub-tenant is not visible to other sub-tenants.
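The default visibility rules above can be sketched as a small Python predicate. It assumes tenant names form a dotted hierarchy, as in the examples (Coke, Coke.east), and models explicit sharing (see the Coke.east example that follows) as a set of sub-tenant names; `is_visible` and `is_ancestor_or_self` are hypothetical helpers, not part of ATOM.

```python
from typing import Iterable


def is_ancestor_or_self(a: str, b: str) -> bool:
    """True if tenant path `a` equals `b` or is a dotted prefix of it (Coke -> Coke.east)."""
    return a == b or b.startswith(a + ".")


def is_visible(owner: str, viewer: str, shared_with: Iterable[str] = ()) -> bool:
    """Apply the default visibility rules, plus explicit sharing by the owner."""
    if owner.split(".")[0] != viewer.split(".")[0]:
        return False  # different top-level tenants are completely isolated
    if is_ancestor_or_self(owner, viewer):
        return True   # data at a higher-level tenant is visible to its sub-tenants
    if is_ancestor_or_self(viewer, owner):
        return True   # sub-tenant data is visible to the tenant above it
    return viewer in shared_with  # other sub-tenants see it only if explicitly shared


print(is_visible("Coke.east", "Coke.west"))                             # False
print(is_visible("Coke.east", "Coke.west", shared_with={"Coke.west"}))  # True
```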

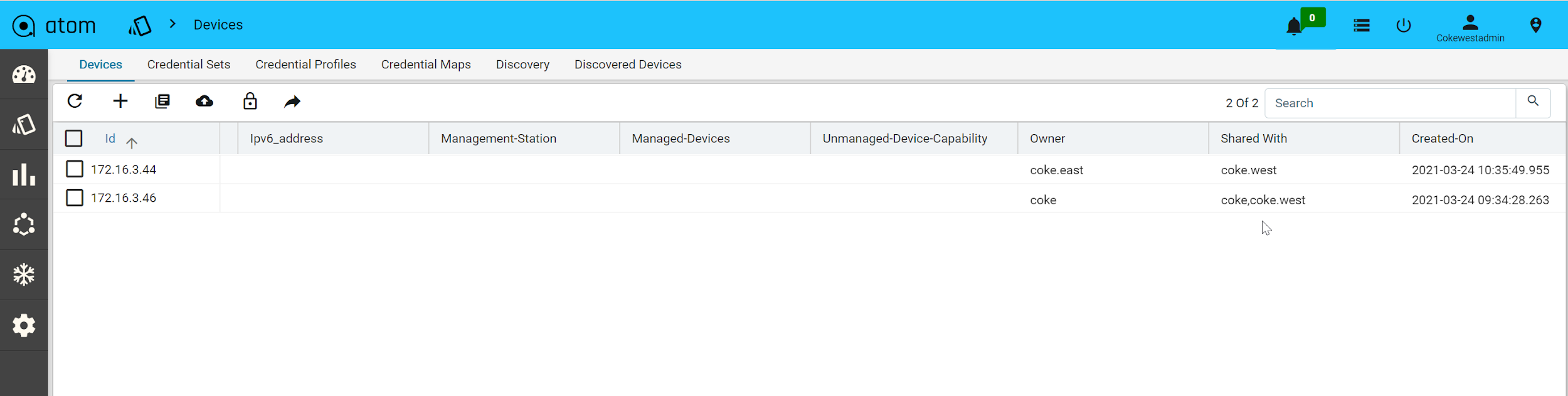

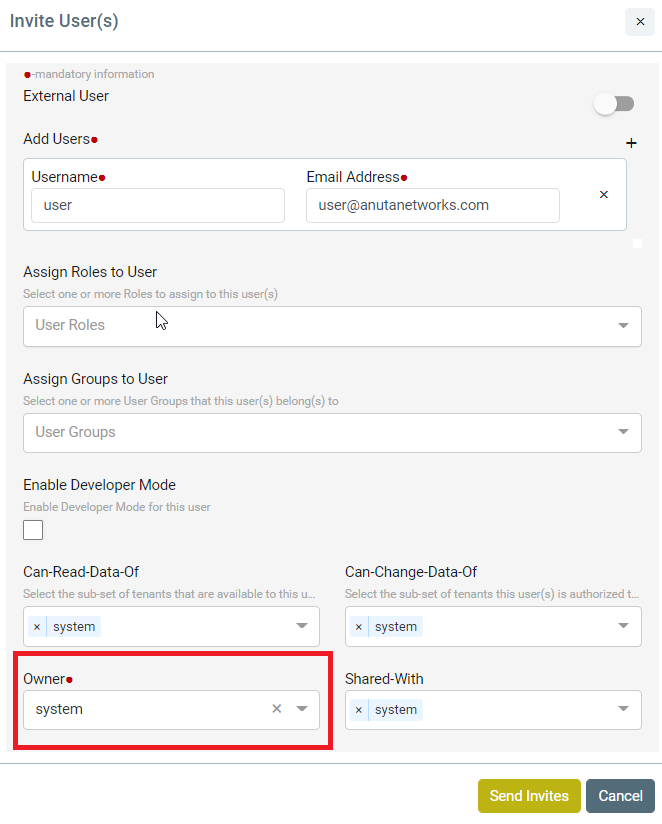

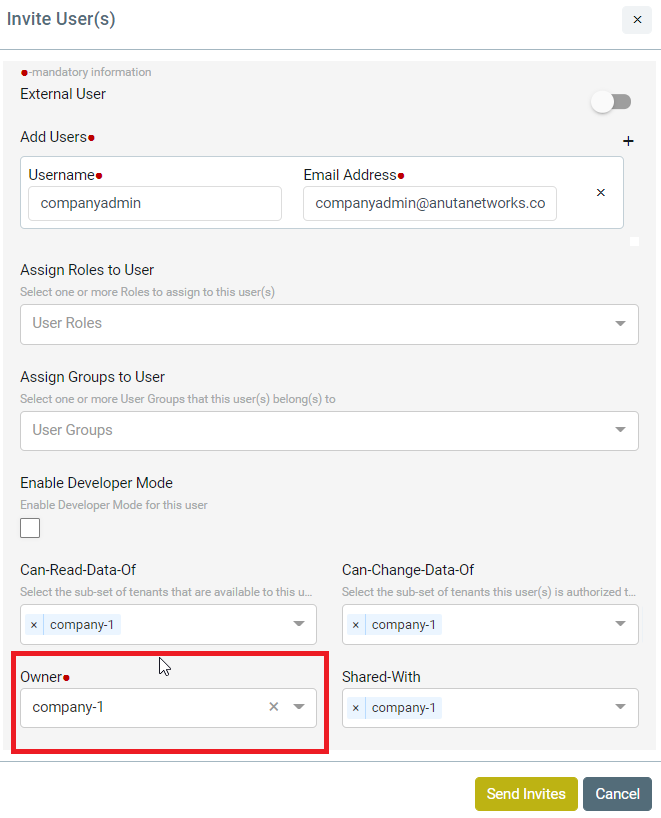

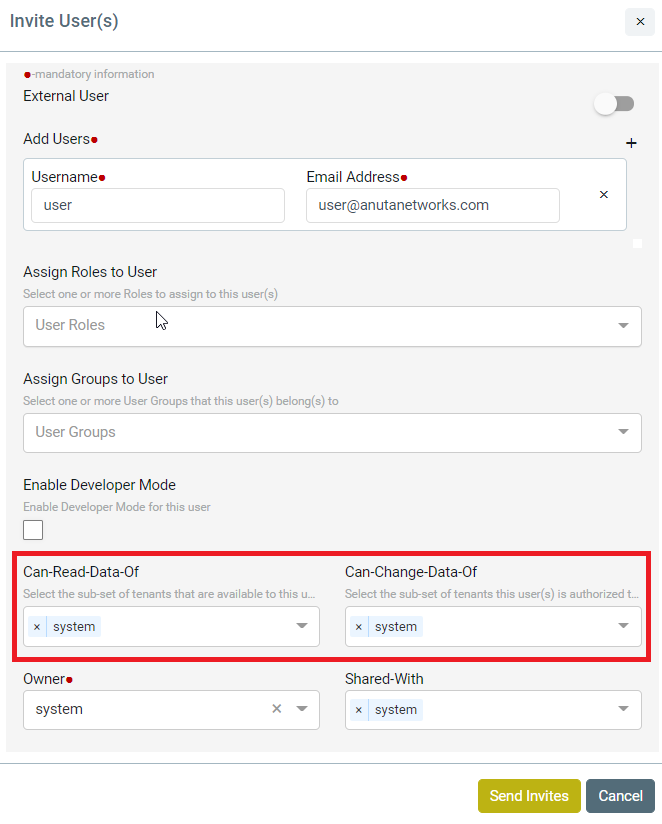

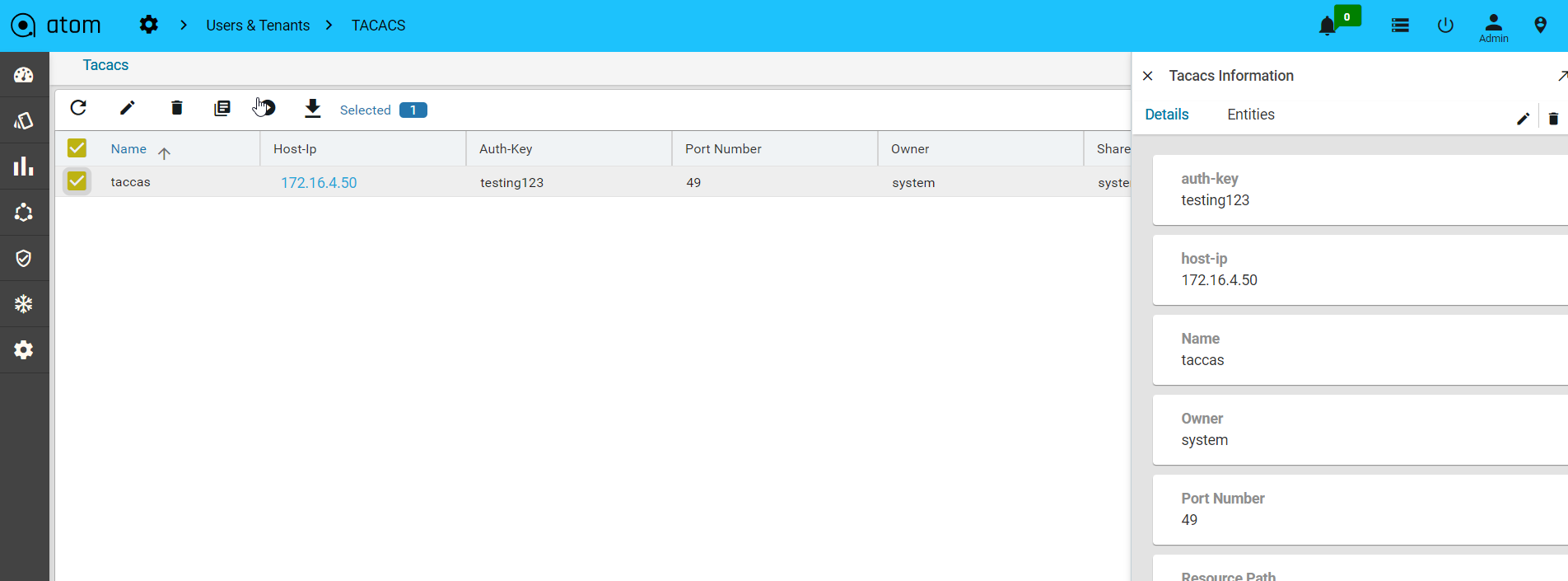

- Example – Coke.east owns a resource (a credential set, a device, etc.) and wants to share it with Coke.west but not with Coke.it. The ATOM Multi-Tenancy infrastructure allows a resource to be shared with specific sub-tenants. Once resources are shared, each ATOM user interface shows resource-sharing information as shown below. This behavior is the same for all resources in ATOM and is not discussed separately for each feature in this user guide.

Sample View of Resource being shared from Coke.east to Coke.west

Multi-Tenancy, including sharing, wildcard usage to share across multiple sub-tenants, users within a sub-tenant and more, is discussed in ATOM Multi Tenancy & Sub-Tenancy.

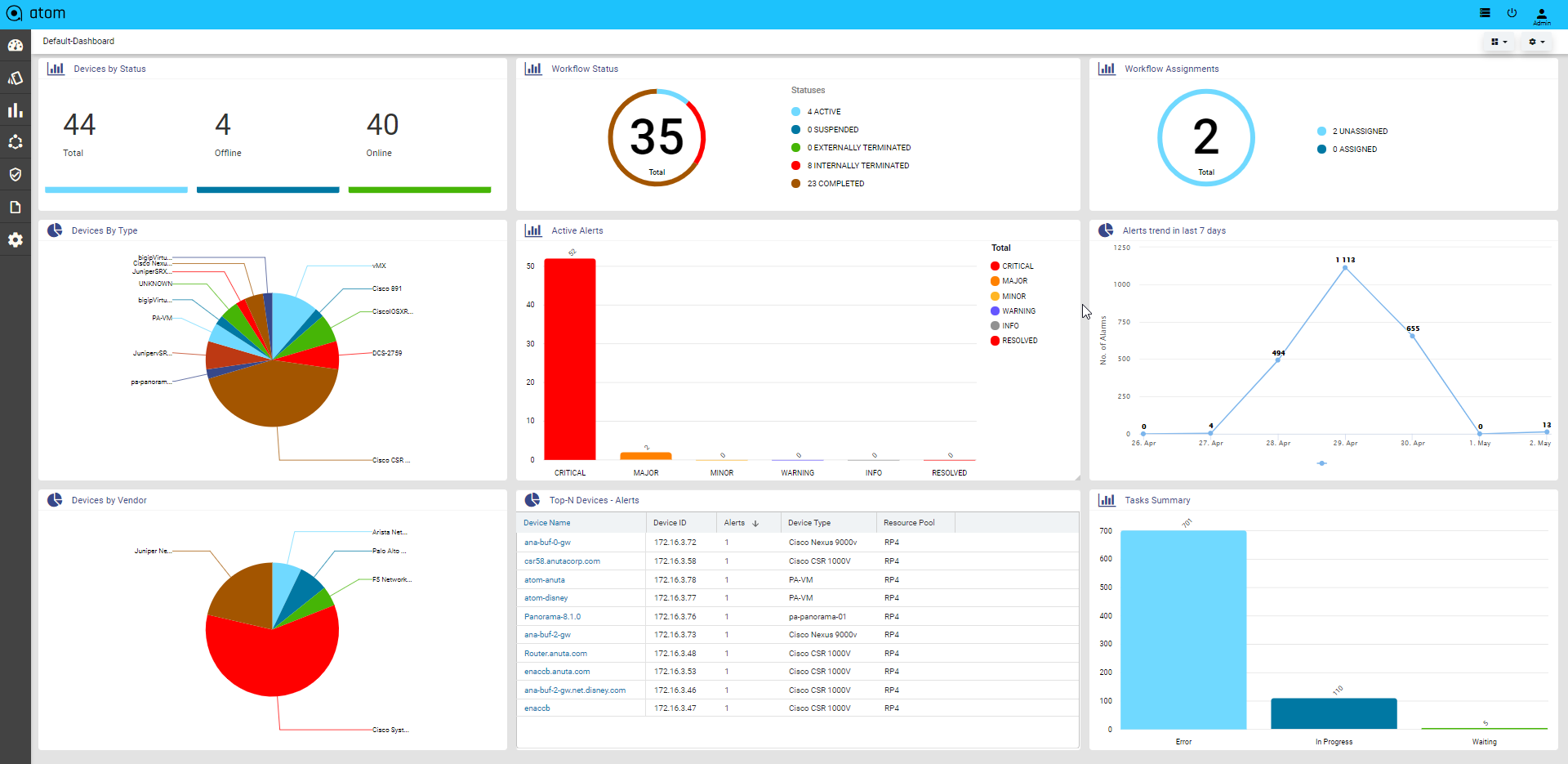

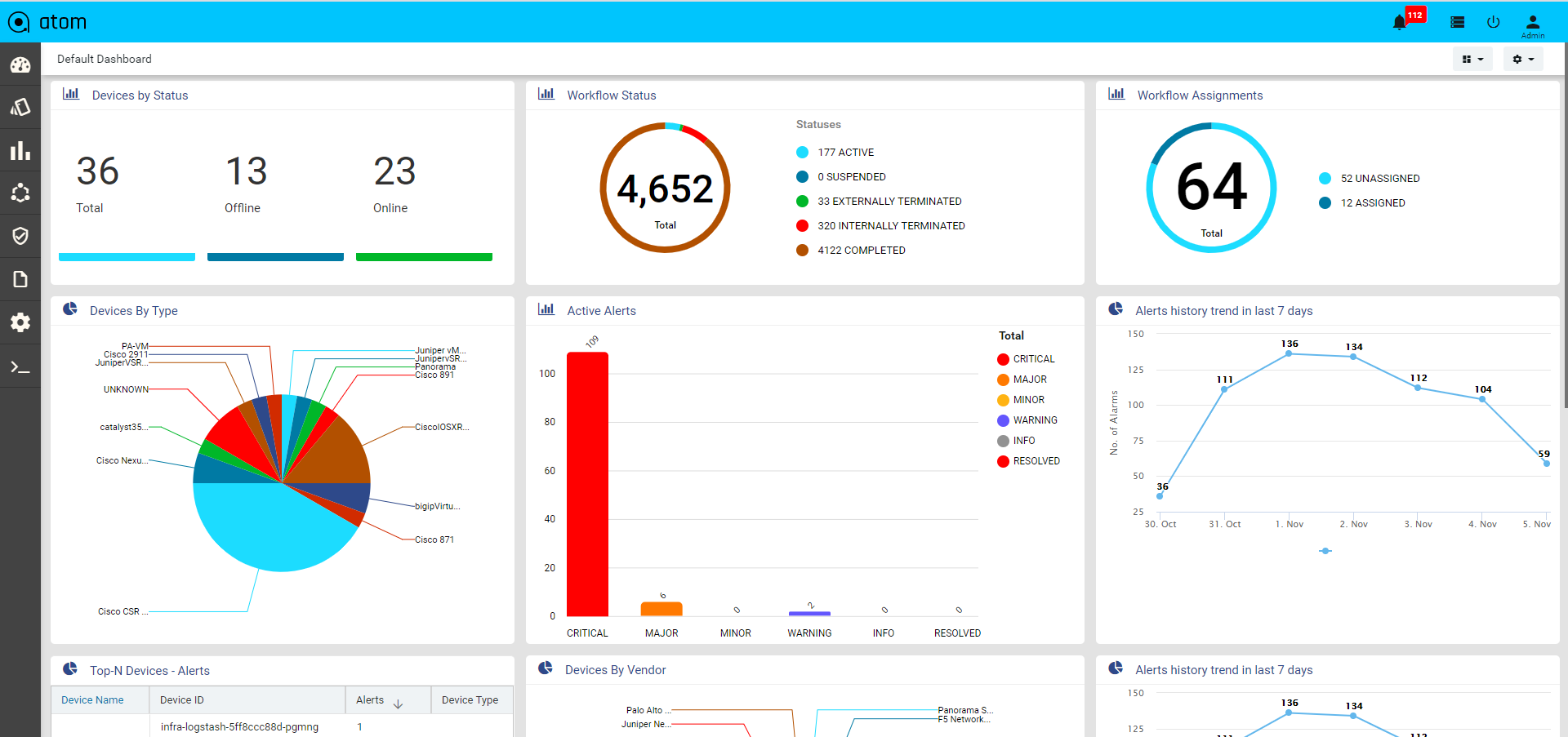

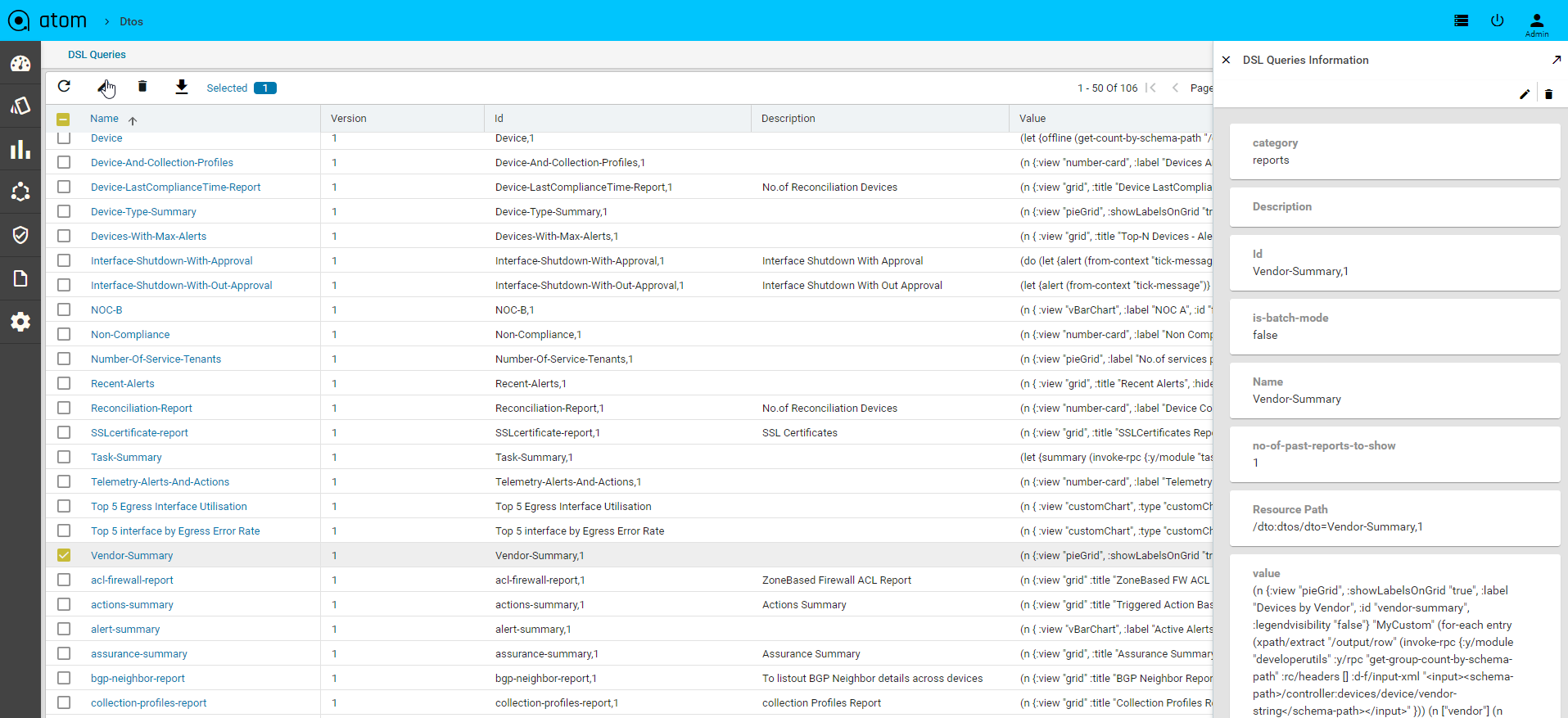

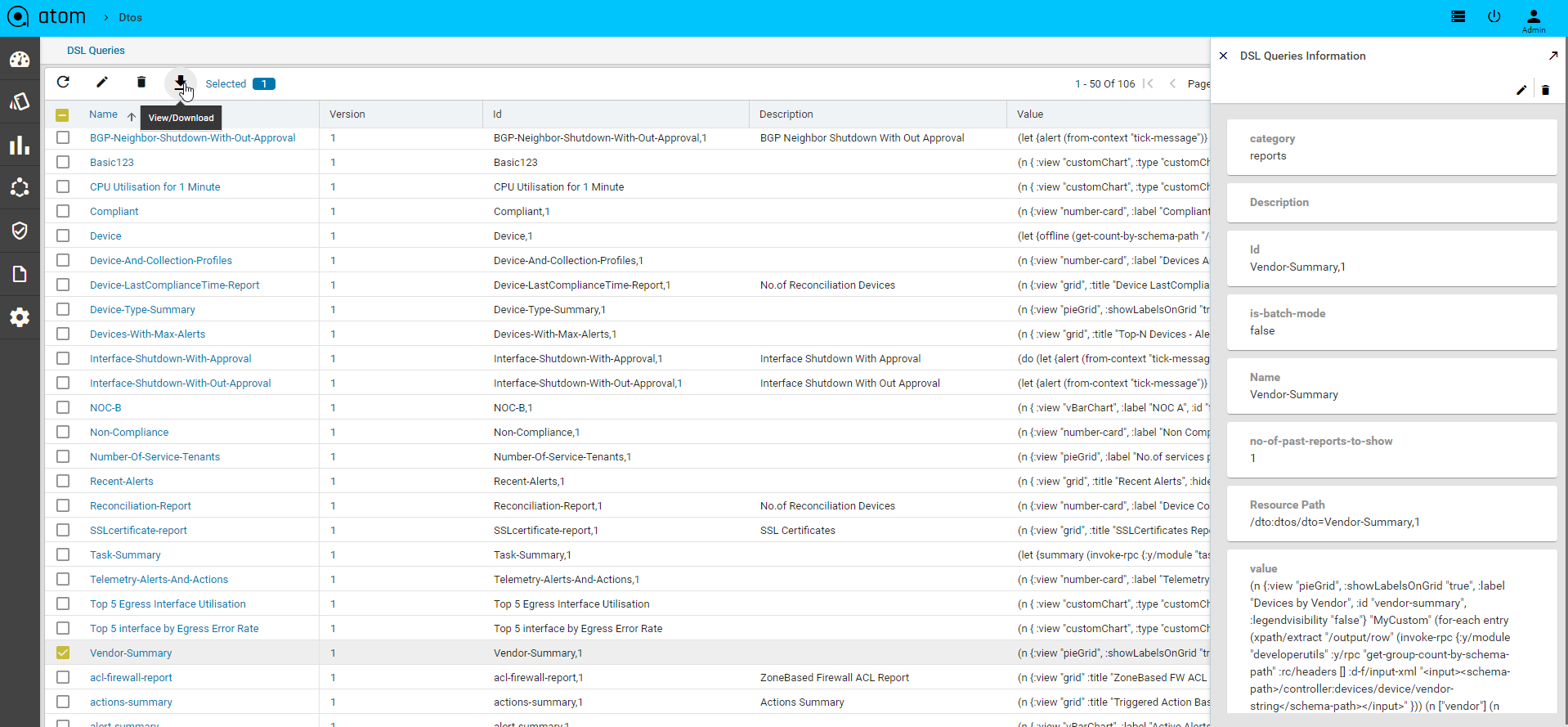

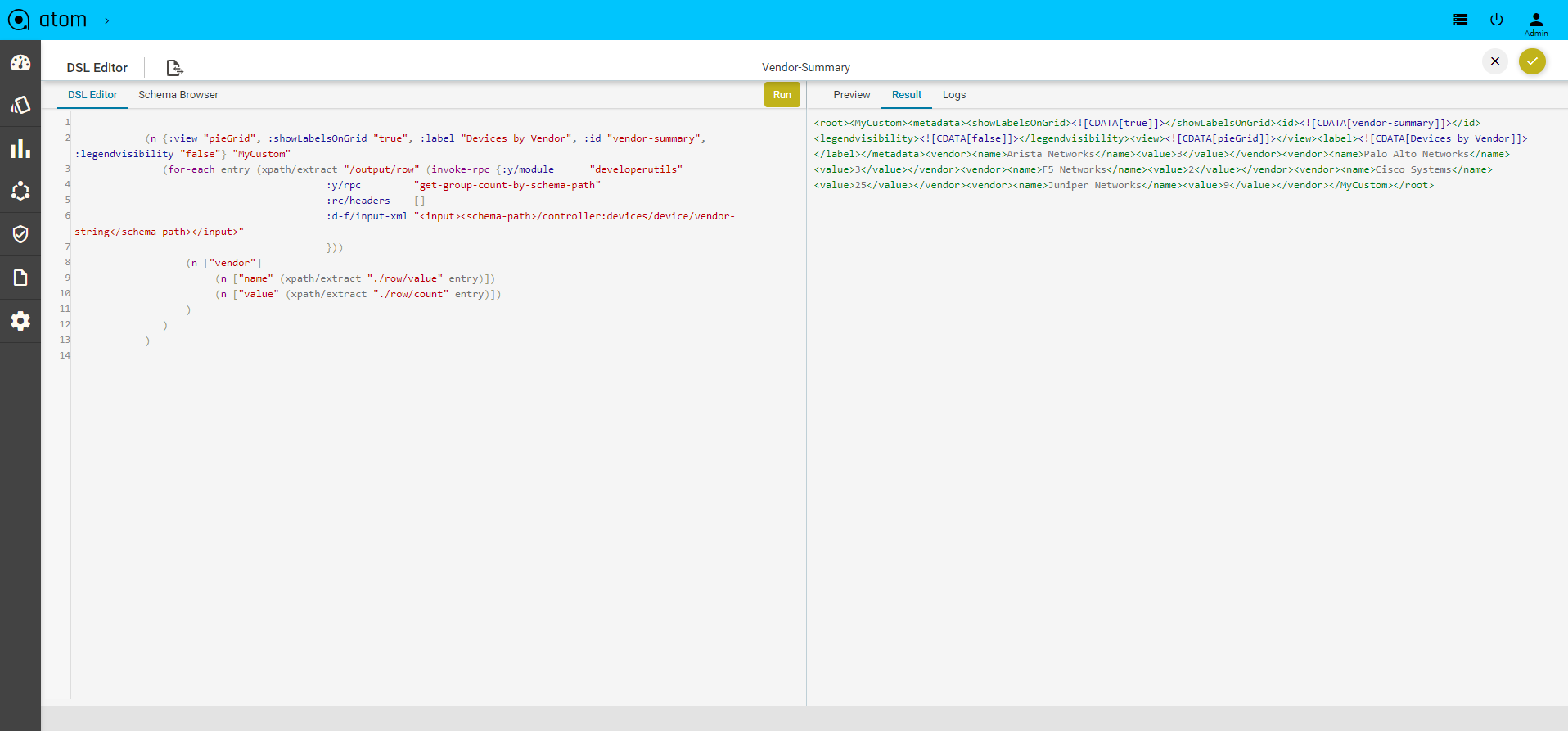

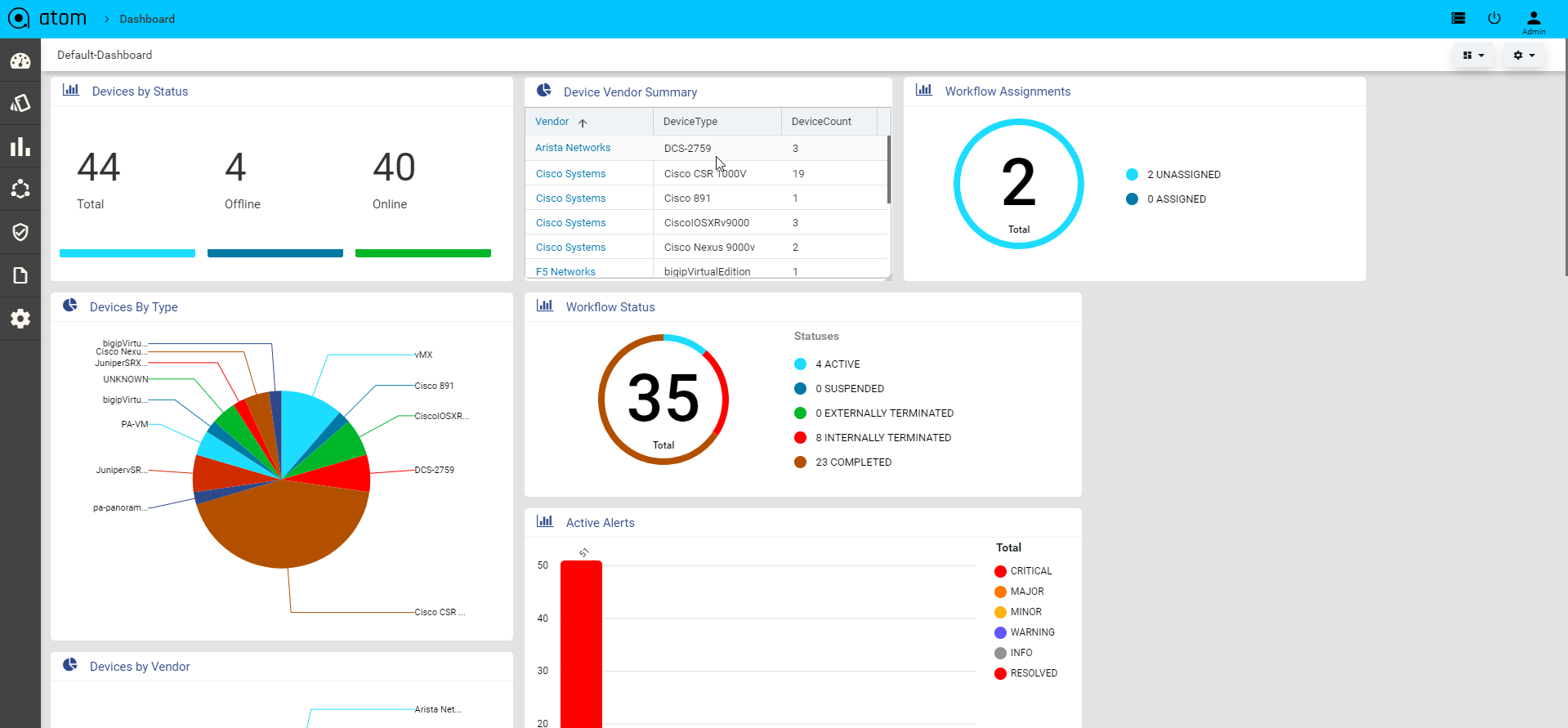

Viewing the Dashboard

The Dashboard provides a simple, integrated, comprehensive view of the data associated with the resources managed by ATOM. Information about devices, services and service approvals is available to the administrator at a glance.

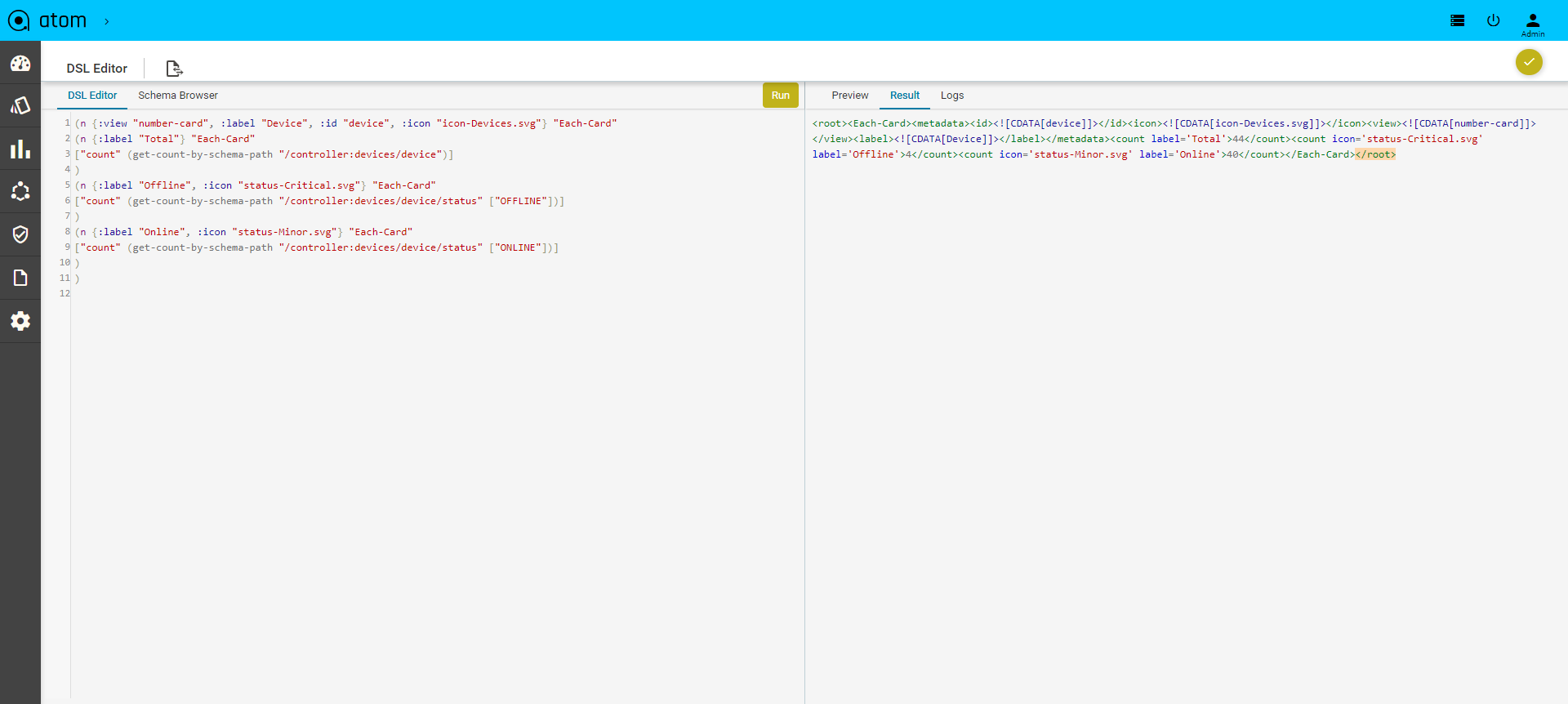

Starting from the 7.x release, the Dashboard, which is the landing page of ATOM, is organized into dashlets. A dashlet is an individual component that can be added to or removed from a dashboard. Each dashlet is a reusable unit of functionality that summarizes a feature or function supported by ATOM, and is rendered from the results of custom queries written in DSL.

You can customize the Dashboard by adding the dashlets of your choice and dragging each dashlet (by its extreme right corner) to the desired location on the dashboard.

Each dashlet contains the summary or the overview of the feature or the functionality supported by ATOM.

For example, the dashlet “Device” displays the summary of devices managed by ATOM.

Statistics of interest in this dashlet include:

- Total number of devices

- Number of online devices

- Number of offline devices

ATOM gathers these statistics and displays them in the corresponding dashlet according to the DSL query written for each one. You can save a layout containing the dashlets of your choice, arranged in a particular order.
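As an illustration of what the Device dashlet statistics compute, the Python sketch below derives total, online and offline counts from a list of device records. It does not reproduce ATOM's DSL; the record layout and the `status` values are assumptions made for the example.

```python
from collections import Counter
from typing import Dict, List


def device_dashlet_stats(devices: List[Dict[str, str]]) -> Dict[str, int]:
    """Compute total, online and offline device counts.
    Each device is a dict with a hypothetical 'status' key ('ONLINE' or 'OFFLINE')."""
    counts = Counter(d.get("status", "UNKNOWN") for d in devices)
    return {"total": len(devices), "online": counts["ONLINE"], "offline": counts["OFFLINE"]}


devices = [
    {"name": "r1", "status": "ONLINE"},
    {"name": "r2", "status": "OFFLINE"},
    {"name": "sw1", "status": "ONLINE"},
]
print(device_dashlet_stats(devices))  # {'total': 3, 'online': 2, 'offline': 1}
```

Devices with any other status still count toward the total, which is why total can exceed online plus offline.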

Resource Management

ATOM Resource Management involves device credential management, device onboarding through discovery or manual import, configuration archival, topology discovery and visualization, resource pools (device grouping) and IP Address Management (IPAM).

The following table summarizes common Resource Management activities.

| If you want to… | Navigate to… |
| --- | --- |
| Manage Credential Sets, Credential Maps and Devices | Resource Manager > Devices |
| Discover devices | Resource Manager > Devices > Discovery |
| Visualize topology | Resource Manager > Network > Topology |
| Create and visualize logical and hierarchical network device groups (resource pools) | Resource Manager > Network > Resource Pools |
| Create physical locations | Resource Manager > Locations |

Device Management

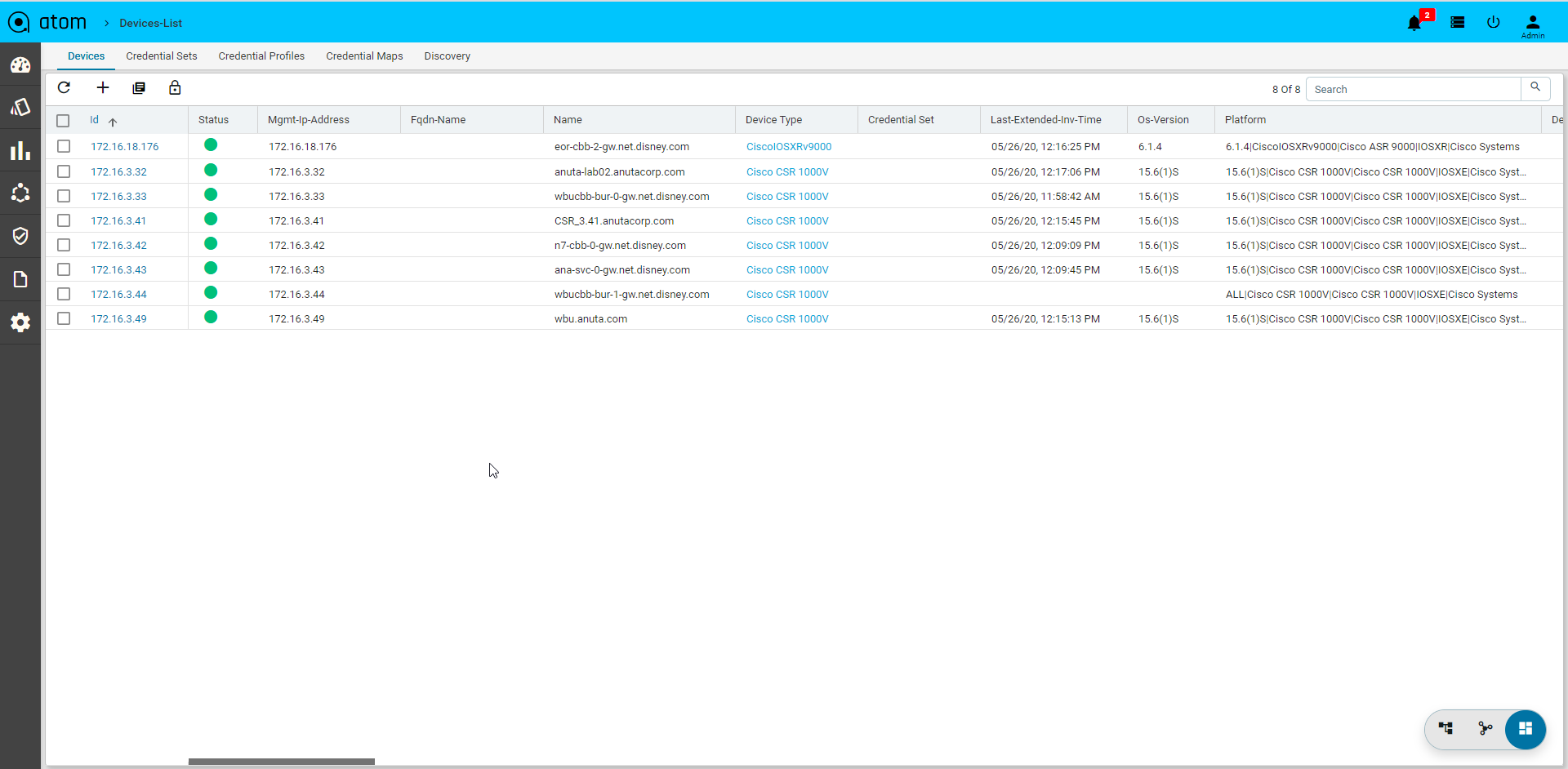

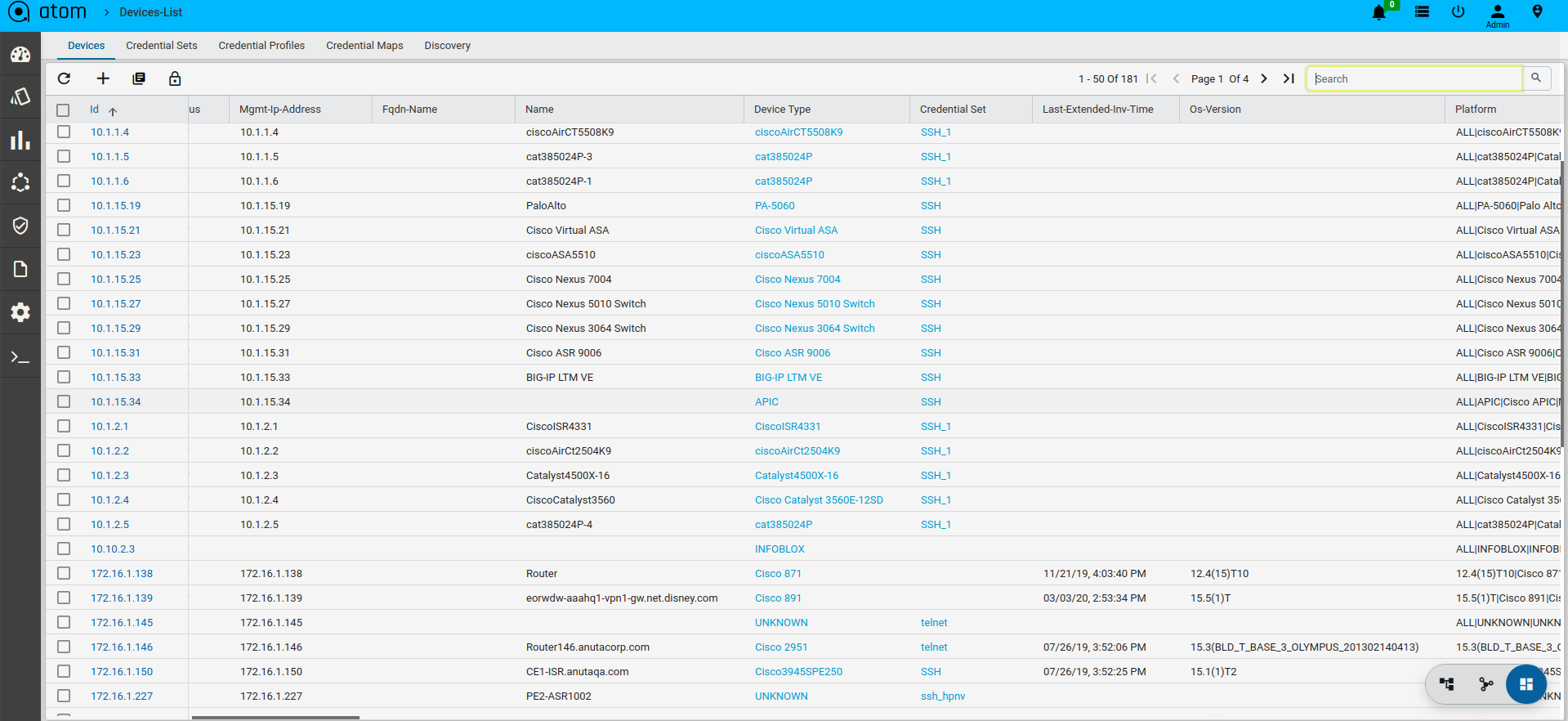

Device Management involves onboarding devices and working with device inventory, configuration, monitoring and alerts. Devices can be added manually, through an API, or through automated discovery using CDP/LLDP.

All device management activities can be performed from the Device Explorer and the Grid View. The following are the main views for a device:

- Grid View – Grid layout of all devices, with actions on one or more devices

- Tree View – Device-group-based tree view of devices that makes it easy to toggle between devices and inspect various device characteristics

- Topology View – Devices are visualized in a topology view

- Device Detail View – Clicking a device in the Tree View or Grid View presents a detailed view of that device

The Grid, Tree and Topology views can be toggled using the view selector button in the bottom right-hand corner of the page.

Credential Management

ATOM provides multiple functions, such as provisioning and inventory collection. A function such as provisioning can be performed in various ways: a payload (CLI, YANG or other) over a given transport (SSH, Telnet, HTTP(S), etc.). For example, depending on the use case, the ATOM Workflow Engine can use various payload and transport combinations to perform provisioning actions. ATOM accomplishes this using:

- Credential Sets – Define the Transport/Connectivity & Authentication to the devices

- Credential Profile – Maps Credential Sets to various functions in ATOM

This addresses various scenarios, some as follows:

- Reuse of the same SNMP credentials across the entire network, while retaining device- or vendor-specific transports for provisioning.

- Inventory collection via SNMP for one vendor or device and via telemetry for another.

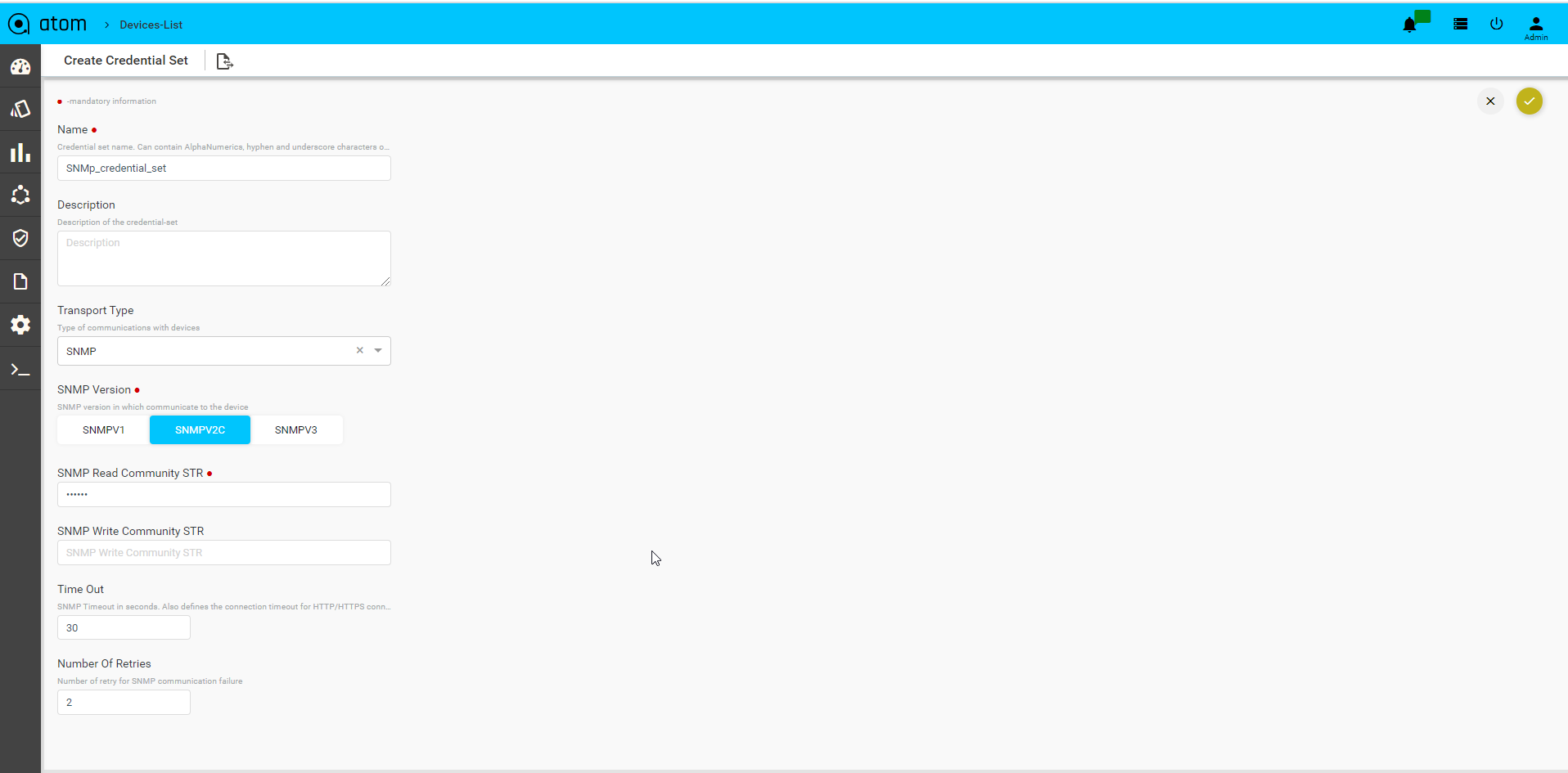

Credential Sets

The following section provides guidance on configuring device credentials in ATOM.

- Navigate to Resource Manager > Devices > Grid View(Icon) > Credential Sets

- Create/Edit a Credential Set

- Name: Enter a string that will be used to identify the Credential Set

- Description: (Optional) Enter a description of the Credential Set

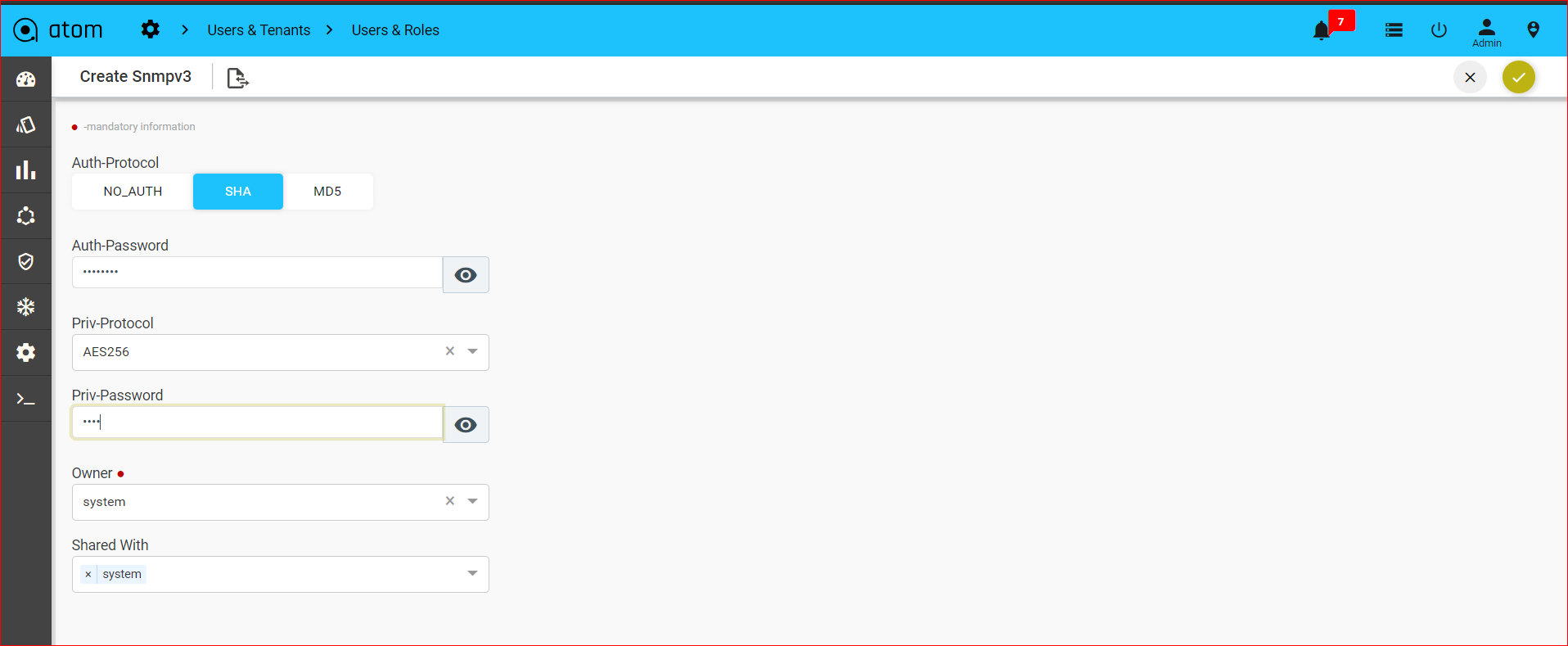

SNMP Transport credentials:

Select “SNMP” as the Transport type to view the following options:

- SNMP version: Select the version of SNMP that should be used for device communication

- SNMP Read Community String: Enter the string that the device uses to authenticate ATOM before ATOM can read data from the device

- SNMP Write Community String: Enter the string that is used by the device to authenticate ATOM while writing configuration to the device

- Timeout: Enter the time, in seconds, that ATOM waits for a response from the device before the request times out.

- Number Of Retries: Enter the number of times the SNMP request is resent after a timeout occurs.
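Timeout and Number Of Retries together bound how long ATOM can wait on an unresponsive device. The sketch below assumes each attempt (the initial request plus every retry) waits the full timeout with no backoff, which is common SNMP client behavior but is not confirmed for ATOM; `snmp_worst_case_wait` is a hypothetical helper.

```python
def snmp_worst_case_wait(timeout_s: float, retries: int) -> float:
    """Upper bound, in seconds, on how long one SNMP request can take before
    failing: the initial attempt plus `retries` resends, each waiting the full
    timeout (no exponential backoff assumed)."""
    if timeout_s <= 0 or retries < 0:
        raise ValueError("timeout must be positive and retries non-negative")
    return timeout_s * (retries + 1)


print(snmp_worst_case_wait(5, 2))  # 15
```

For example, a 5-second timeout with 2 retries means an unreachable device can hold a request for up to 15 seconds, which is worth considering when sizing collection intervals.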

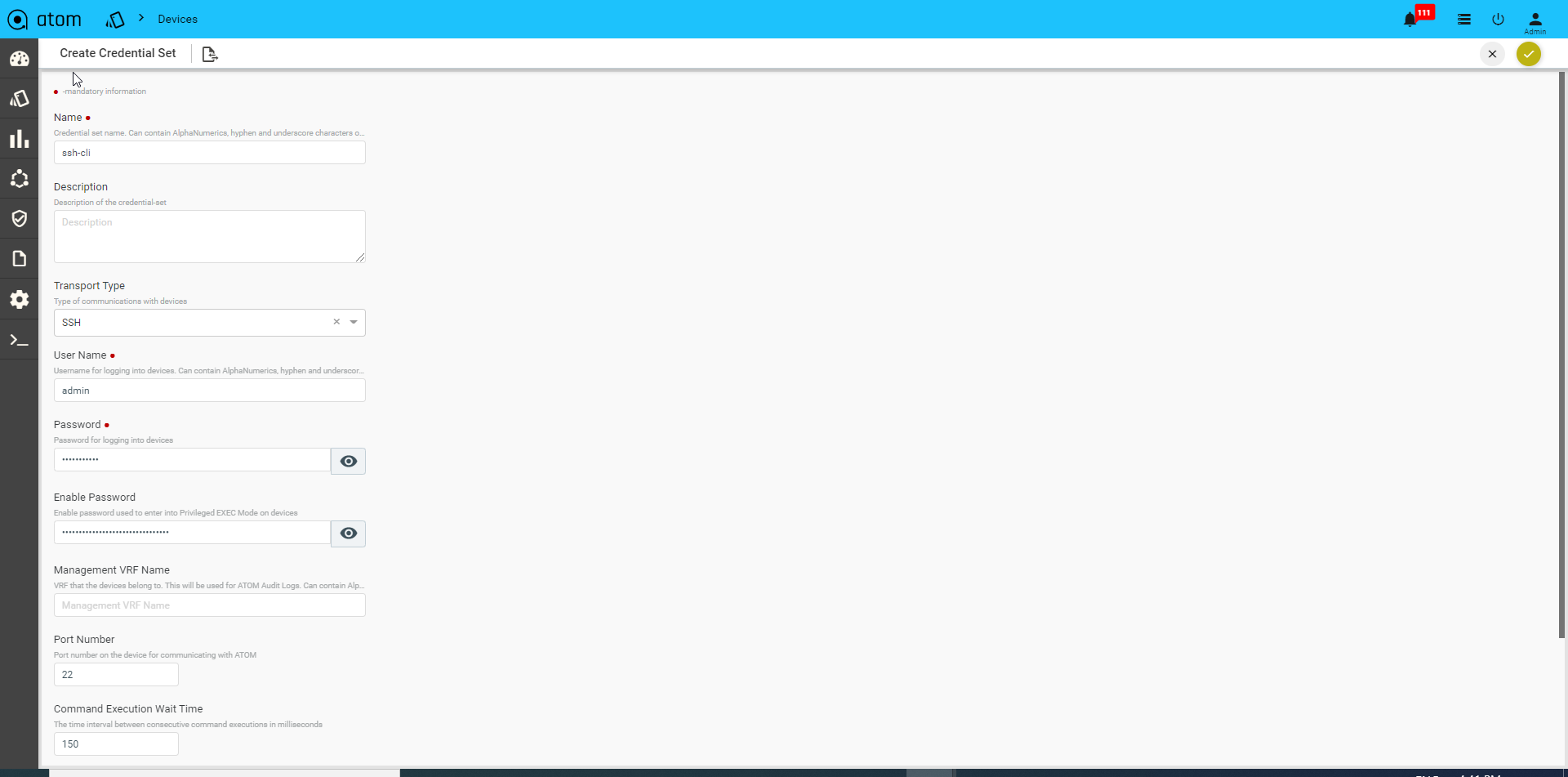

CLI Device (SSH/TELNET) Transport Credentials:

Select Transport type as “SSH/TELNET”

- User name: Enter a string that should be used to login to the device

- Password: Enter the password used to log in to the device

- Enable Password: Enter the password used to enter privileged EXEC mode on the device.

- Mgmt-VRF-Name: Enter the name of the management VRF configured on the device. This will be used by ATOM to retrieve the audit logs from the device.

- Port Number: Enter the number of the port on the device that should be used for communication with ATOM

- Command Execution Wait Time: Enter the time (in milliseconds) that ATOM should wait for consecutive commands to be executed on the device. Enter any number from 10 to 30000.

- CLI Configure Command TimeOut: Enter the time (in seconds) that ATOM should wait for the command-line prompt on the device to appear. Enter any number from 1 to 1200.

- Max Connections: Enter the maximum number of connections that can be open to a given device at any time.
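The numeric ranges above can be checked before a Credential Set is saved. This Python sketch models the SSH/TELNET fields as a hypothetical `CliTransportSettings` class; the two documented ranges come from the field descriptions, while the port and Max Connections checks are generic assumptions, not ATOM rules.

```python
from dataclasses import dataclass


@dataclass
class CliTransportSettings:
    """Hypothetical model of the SSH/TELNET Credential Set fields described above."""
    username: str
    port: int
    command_wait_ms: int       # Command Execution Wait Time, in milliseconds
    configure_timeout_s: int   # CLI Configure Command TimeOut, in seconds
    max_connections: int

    def validate(self) -> None:
        # The first two ranges come from the field descriptions above;
        # the port and max-connections checks are generic sanity checks.
        if not 10 <= self.command_wait_ms <= 30000:
            raise ValueError("Command Execution Wait Time must be 10-30000 ms")
        if not 1 <= self.configure_timeout_s <= 1200:
            raise ValueError("CLI Configure Command TimeOut must be 1-1200 s")
        if not 1 <= self.port <= 65535:
            raise ValueError("Port Number must be a valid TCP port (1-65535)")
        if self.max_connections < 1:
            raise ValueError("Max Connections must be at least 1")


CliTransportSettings("admin", 22, 500, 60, 4).validate()  # within all ranges: no error
```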

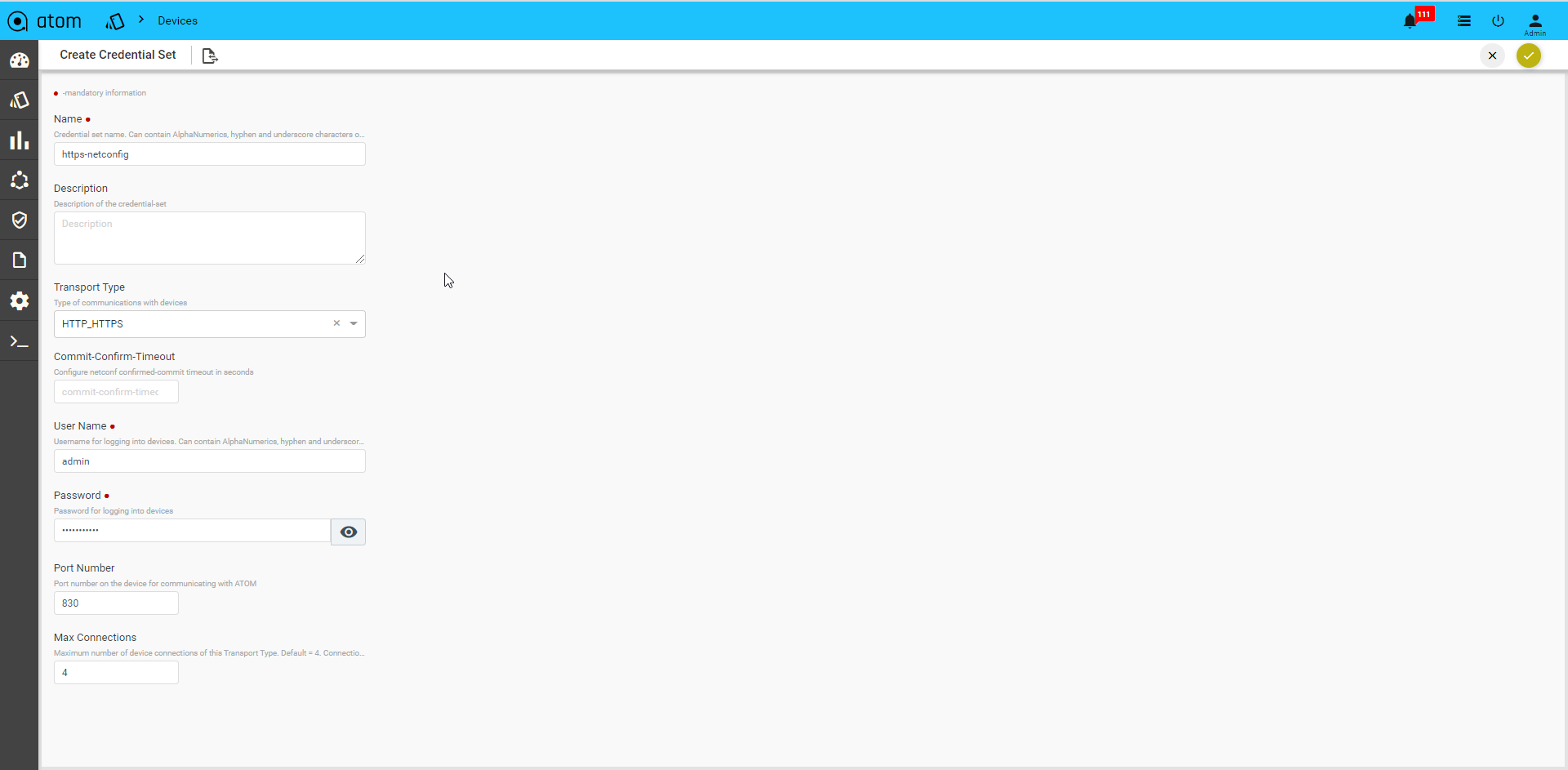

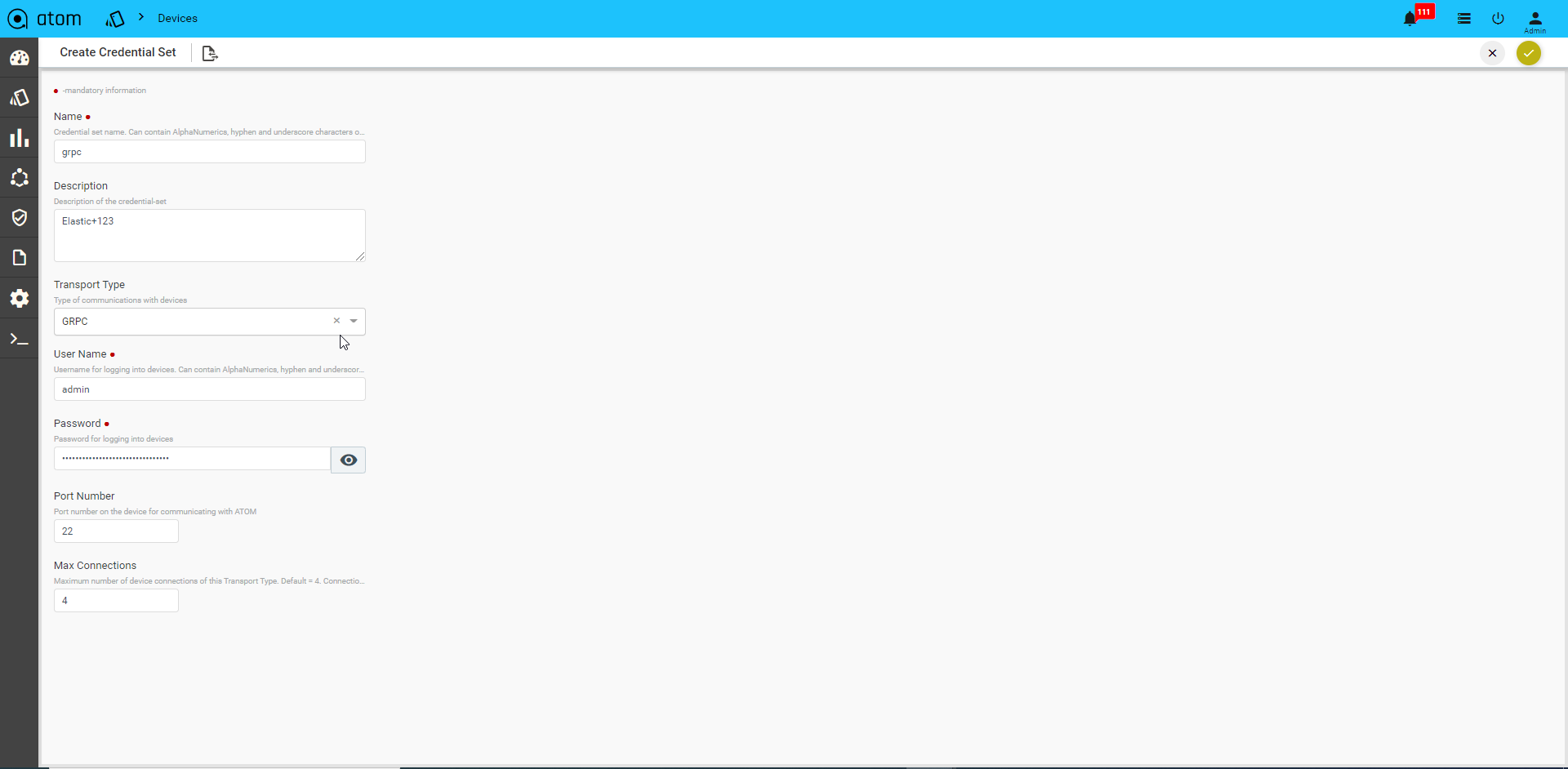

API Device Transport Credential:

Select Transport type as “HTTP_HTTPS / GRPC”

- User name: Enter a string that should be used to login to the device

- Password: Enter the password used to log in to the device

- Port Number: Enter the port number on the device that should be used for communication with ATOM.

- Max Connections: Enter the maximum number of connections that can be open to a given device at any time.

GRPC Transport credential:

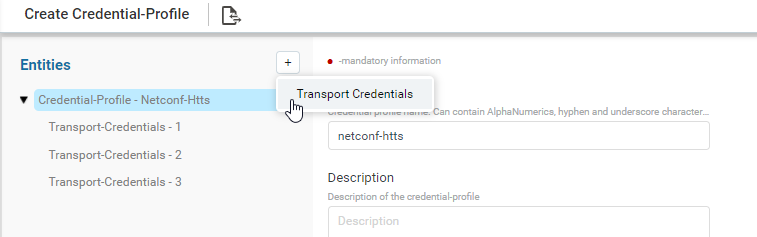

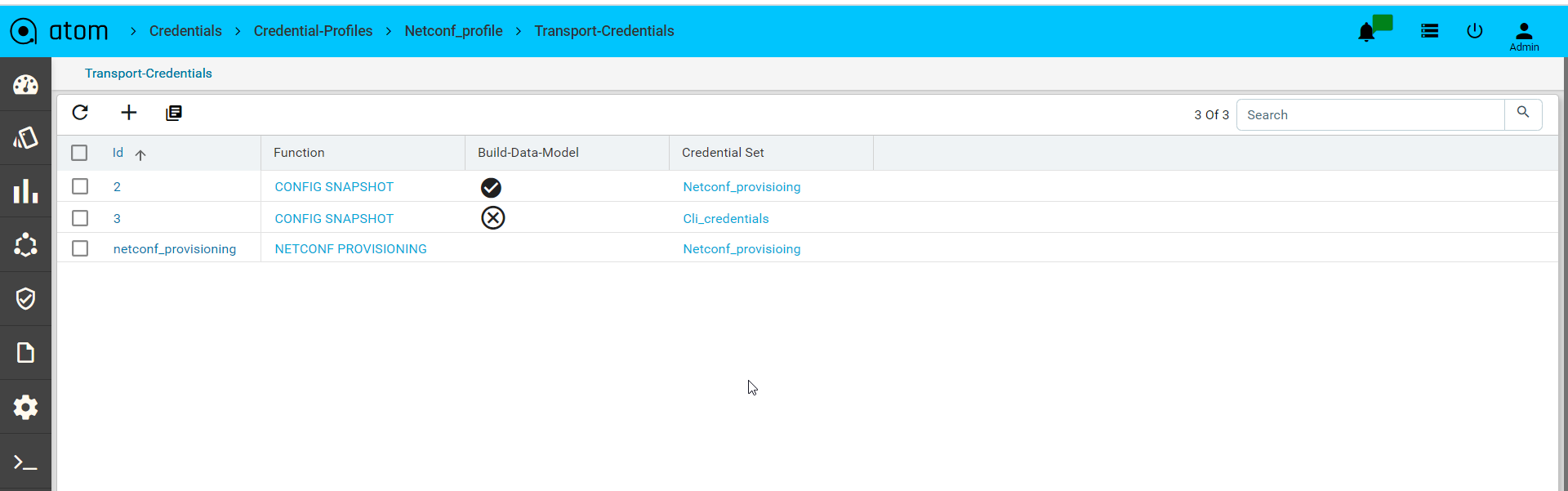

Credential Profile

ATOM provides the following out-of-the-box functions:

- Config Provisioning

- SNMP

- Telemetry

- HTTP provisioning

- NETCONF provisioning

Navigate to Resource Manager > Devices > Grid View(Icon) > Credential Profile

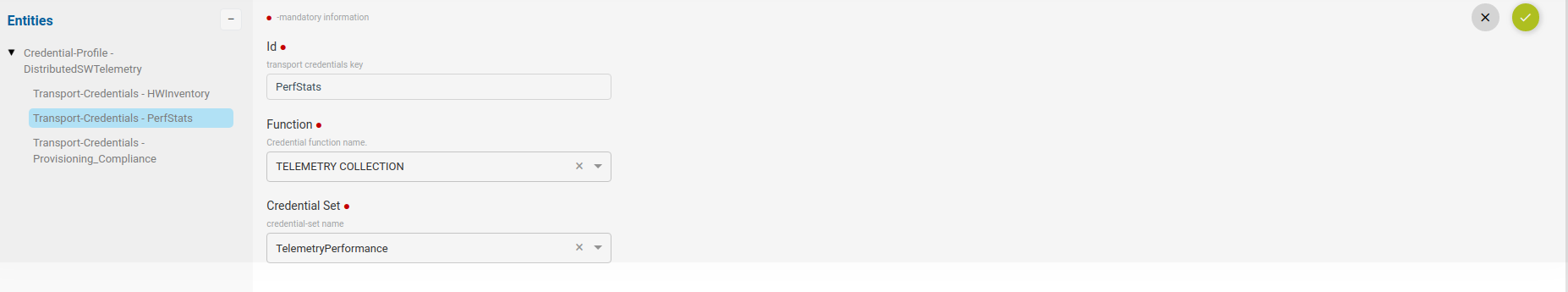

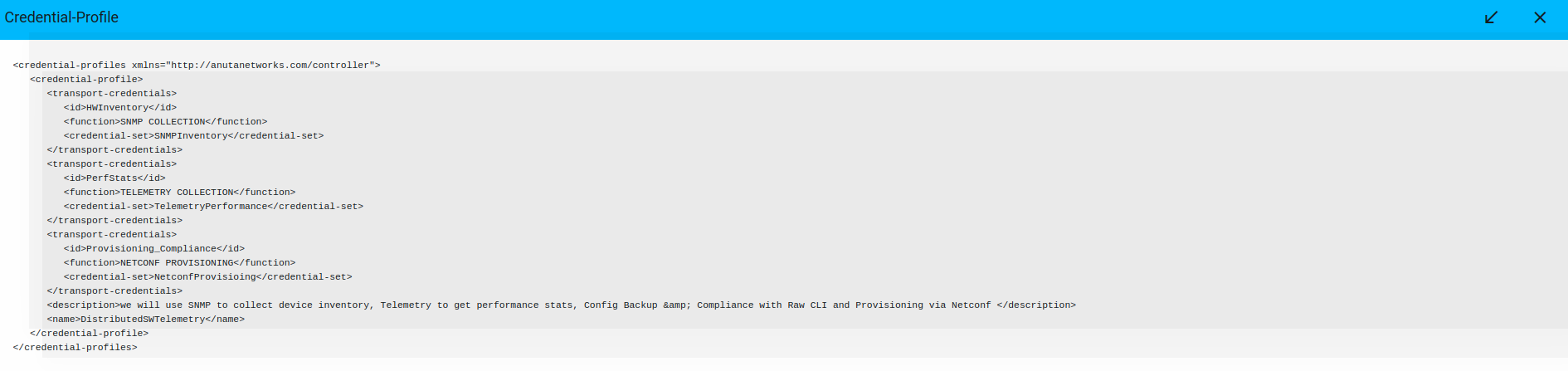

- Provide a name and description for the Credential Profile, and add transport credentials by choosing the appropriate functions.

- The snapshot shows how to attach a Credential Set to a function.
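Conceptually, a Credential Profile is a mapping from ATOM functions to Credential Sets. The Python sketch below models that mapping and the scenario of reusing one SNMP Credential Set while keeping vendor-specific provisioning transports. The function identifiers and the `CredentialProfile` class are hypothetical and do not reflect ATOM's actual schema or payload format.

```python
from dataclasses import dataclass, field
from typing import Dict

# Hypothetical identifiers for the out-of-the-box functions listed above.
FUNCTIONS = {"CONFIG_PROVISIONING", "SNMP", "TELEMETRY",
             "HTTP_PROVISIONING", "NETCONF_PROVISIONING"}


@dataclass
class CredentialProfile:
    name: str
    description: str = ""
    bindings: Dict[str, str] = field(default_factory=dict)  # function -> Credential Set name

    def bind(self, function: str, credential_set: str) -> None:
        if function not in FUNCTIONS:
            raise ValueError(f"unknown function: {function}")
        self.bindings[function] = credential_set

    def credential_set_for(self, function: str) -> str:
        if function not in self.bindings:
            raise LookupError(f"profile {self.name!r} has no Credential Set for {function}")
        return self.bindings[function]


# Same SNMP credentials everywhere, vendor-specific provisioning transport.
ios = CredentialProfile("cisco-ios")
ios.bind("SNMP", "network-wide-snmp")
ios.bind("CONFIG_PROVISIONING", "ios-ssh")

junos = CredentialProfile("juniper-junos")
junos.bind("SNMP", "network-wide-snmp")
junos.bind("NETCONF_PROVISIONING", "junos-netconf")
```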

Credential profile payload in XML:

Credential Maps

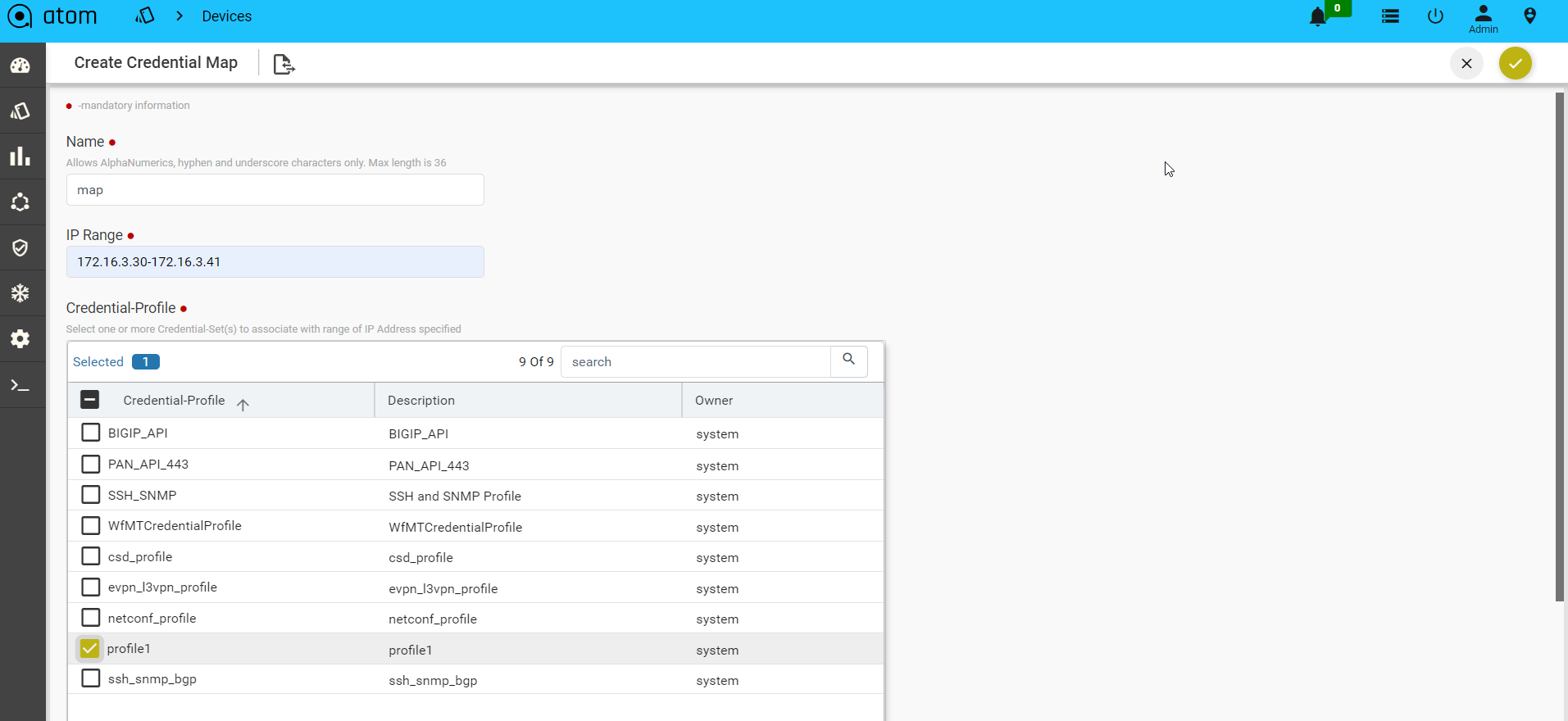

A Credential Map allows users to map multiple Credential Profiles to an IP address range. This addresses the following use cases:

- Device Discovery – When ATOM performs discovery using an SNMP sweep or CDP/LLDP, the devices are not yet onboarded, so no explicit credential assignment is available.

- Credential profile is mandatory when onboarding a device.

When ATOM needs credentials for a device and no explicit device-to-Credential-Profile assignment is available, ATOM cycles through the Credential Maps whose IP address range includes the device and uses the first Credential Profile that works. The successful Credential Profile is then mapped to the device. This process is repeated whenever ATOM fails to communicate with the device using the currently assigned Credential Profile.
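The lookup described above can be sketched in Python using the standard `ipaddress` module. This is a minimal sketch under assumptions: Credential Maps are checked in list order, profiles within a map are tried in their listed order, and `try_profile` stands in for whatever connectivity test ATOM actually performs. `CredentialMap` and `resolve_profile` are hypothetical names.

```python
import ipaddress
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class CredentialMap:
    name: str
    start_ip: str
    end_ip: str
    profiles: List[str]  # Credential Profile names, tried in the listed order

    def covers(self, ip: str) -> bool:
        """True if `ip` lies within [start_ip, end_ip], inclusive."""
        addr = ipaddress.ip_address(ip)
        start = ipaddress.ip_address(self.start_ip)
        end = ipaddress.ip_address(self.end_ip)
        if addr.version != start.version:
            return False  # IPv4 and IPv6 addresses cannot be ordered against each other
        return start <= addr <= end


def resolve_profile(ip: str, maps: List[CredentialMap],
                    try_profile: Callable[[str, str], bool]) -> Optional[str]:
    """Return the first Credential Profile that works for `ip`, checking every
    map whose range contains the address; None if no profile works."""
    for cmap in maps:
        if not cmap.covers(ip):
            continue
        for profile in cmap.profiles:
            if try_profile(ip, profile):  # e.g. an SNMP get or an SSH login attempt
                return profile
    return None


def works(ip: str, profile: str) -> bool:
    return profile == "snmp-v3-prod"  # stand-in for a real connectivity test


maps = [CredentialMap("dc1", "10.1.0.1", "10.1.0.254", ["snmp-v2-lab", "snmp-v3-prod"])]
print(resolve_profile("10.1.0.20", maps, works))  # snmp-v3-prod
print(resolve_profile("10.2.0.1", maps, works))   # None
```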

To create a Credential Map:

- Navigate to Resource Manager > Devices > Grid View(Icon) > Credential Maps

- Create/Edit a Credential Map:

- Name: Enter a name for the Credential Map

- Start-IP-address: Enter the first IP address of the range; ATOM starts the sweep for locating devices from this address.

- End-IP-address: Enter the last IP address of the range; ATOM does not continue the sweep for locating devices beyond this address.

Note: The Start and End IP addresses define the range of device IP addresses covered by the Credential Map.

- Credential Profile: Select one or more of the listed Credential Profiles.

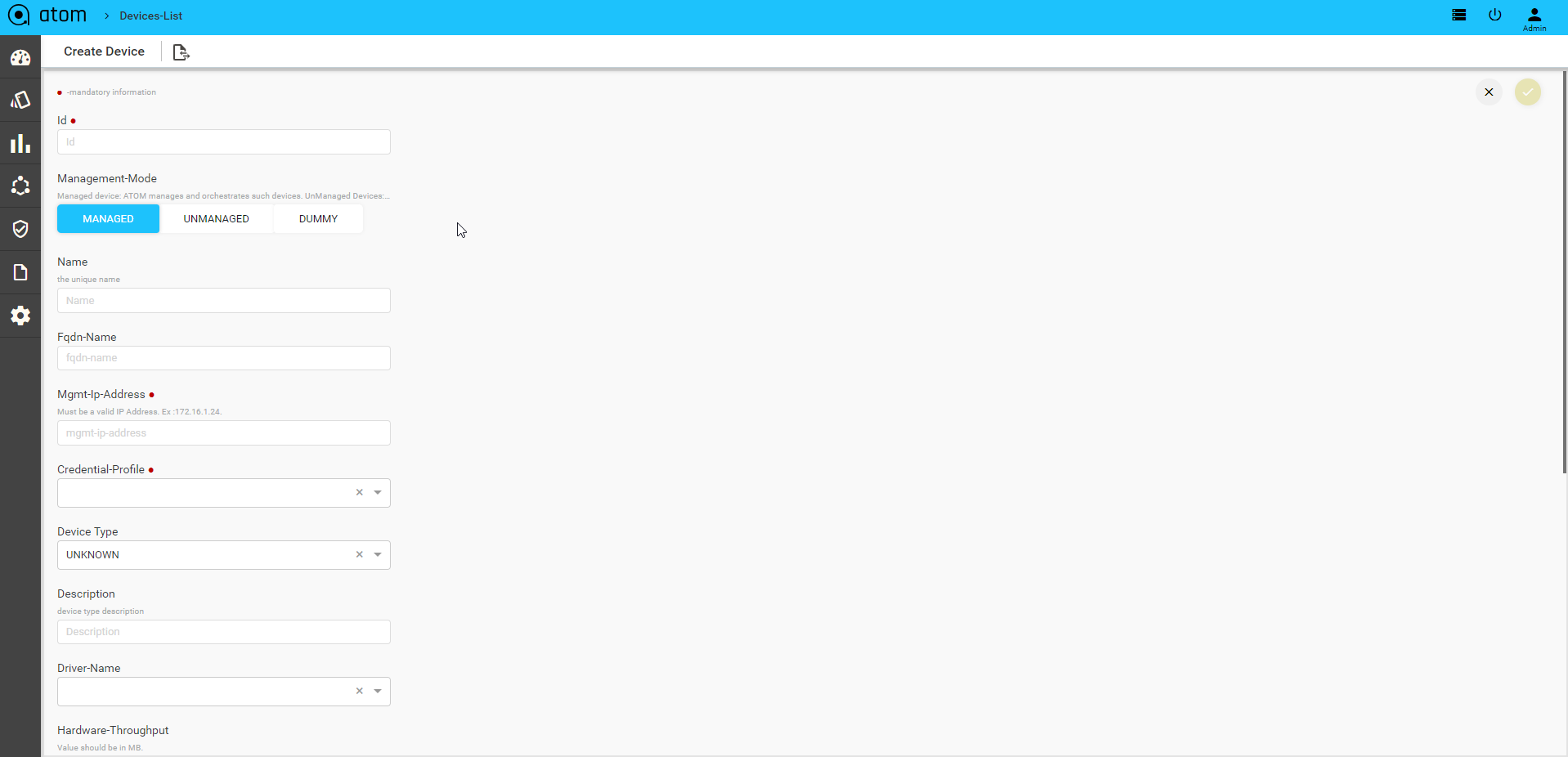

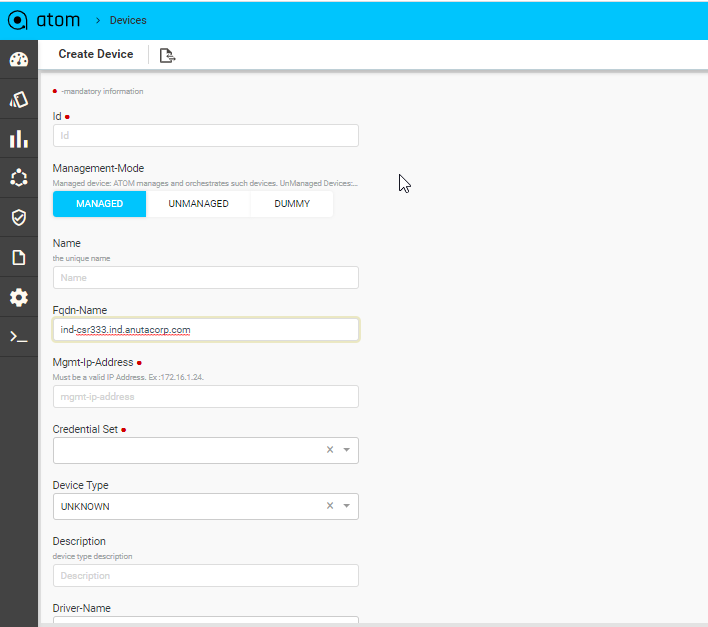

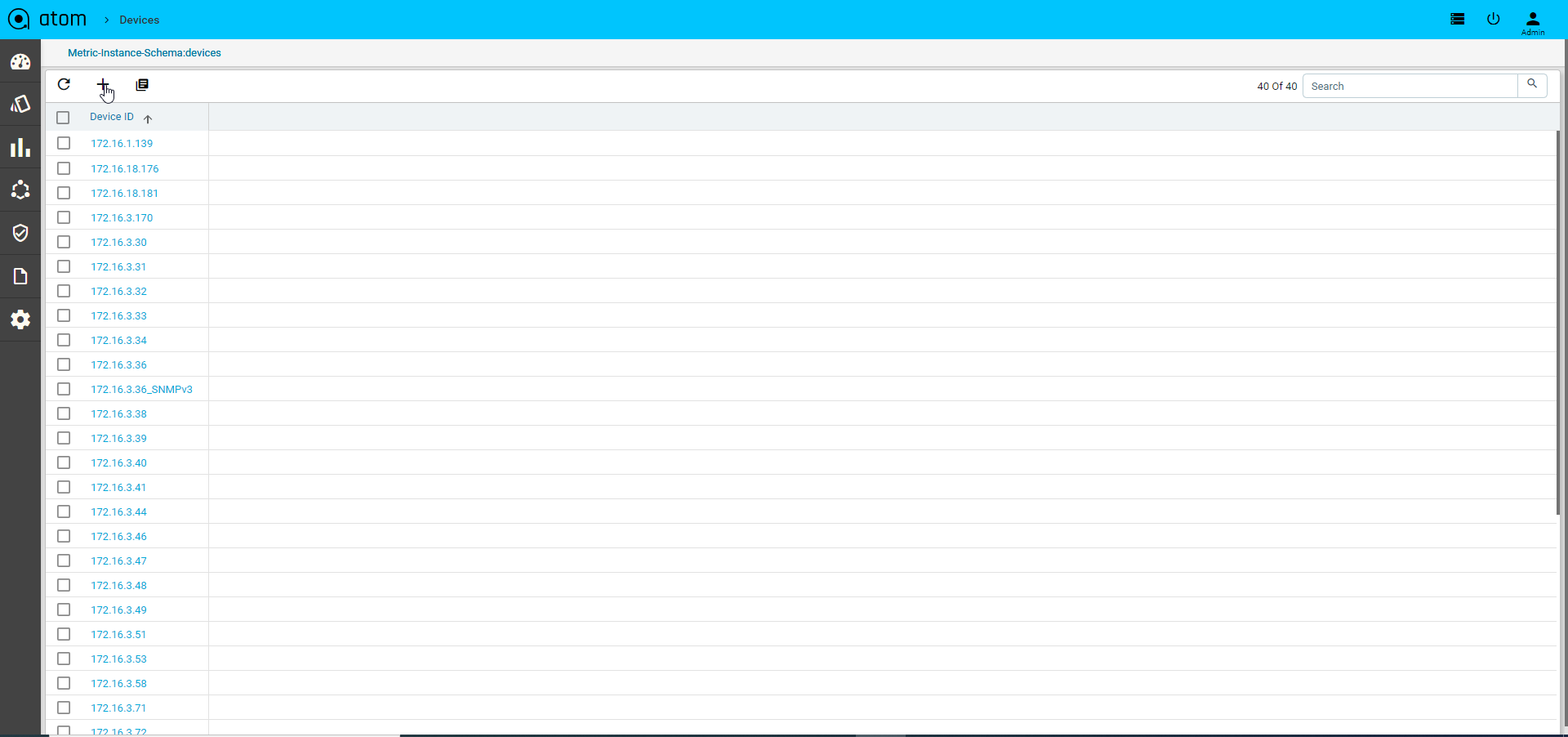

Device Onboarding

Devices can be onboarded into ATOM using an API, manually through the user interface, or through discovery using CDP/LLDP.

Discovering Devices

Device discovery is covered in the section “Device Discovery”.

Adding Device Manually

In some scenarios, device discovery is not viable, for example:

- The device does not support Layer 2 discovery

- There are operational or administrative reasons not to use LLDP/CDP

- SNMP sweep discovery is not suitable because IP address ranges are not well defined, are not contiguous, or for some other reason

Before you begin, you must define Credential Sets and Credential Profiles.

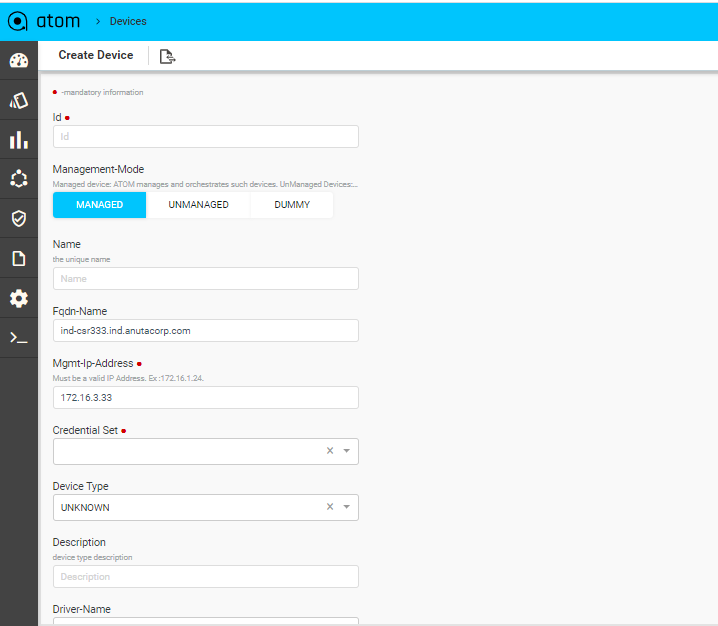

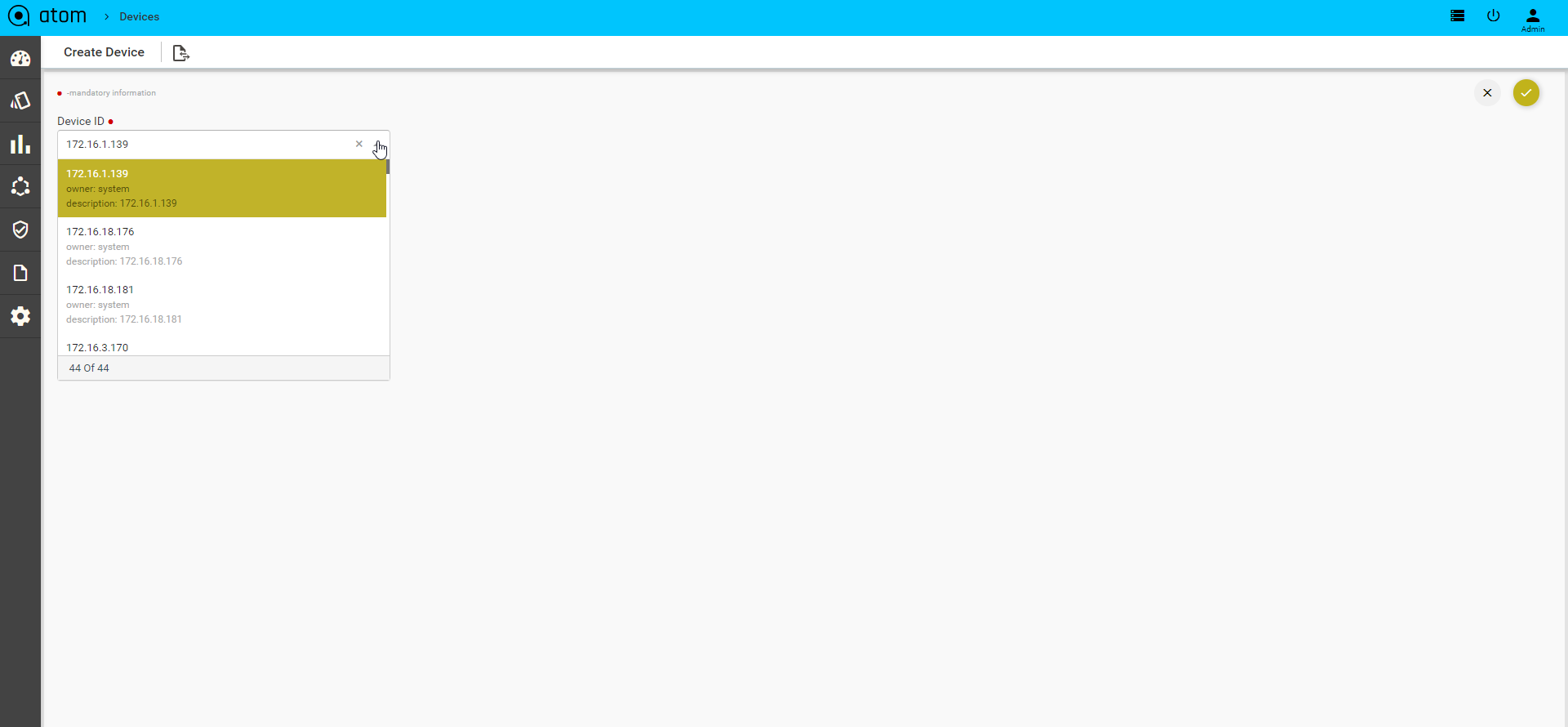

To Add/Edit a Device:

- Navigate to Resource Manager > Devices (Grid View)

- Add – Select Add action

- IP address: Enter the IP address of the device

- Credential Profile: Select the Credential Profile of the device

- Driver name: Select a driver (applicable to API devices).

- Latitude & Longitude: Enter the geographic coordinates of the device location. Latitude measures position north or south of the Equator; longitude measures position east or west of the Prime Meridian.

- Modify – Select a device and select the Edit action

- Delete – Select one or more devices and select the Delete action

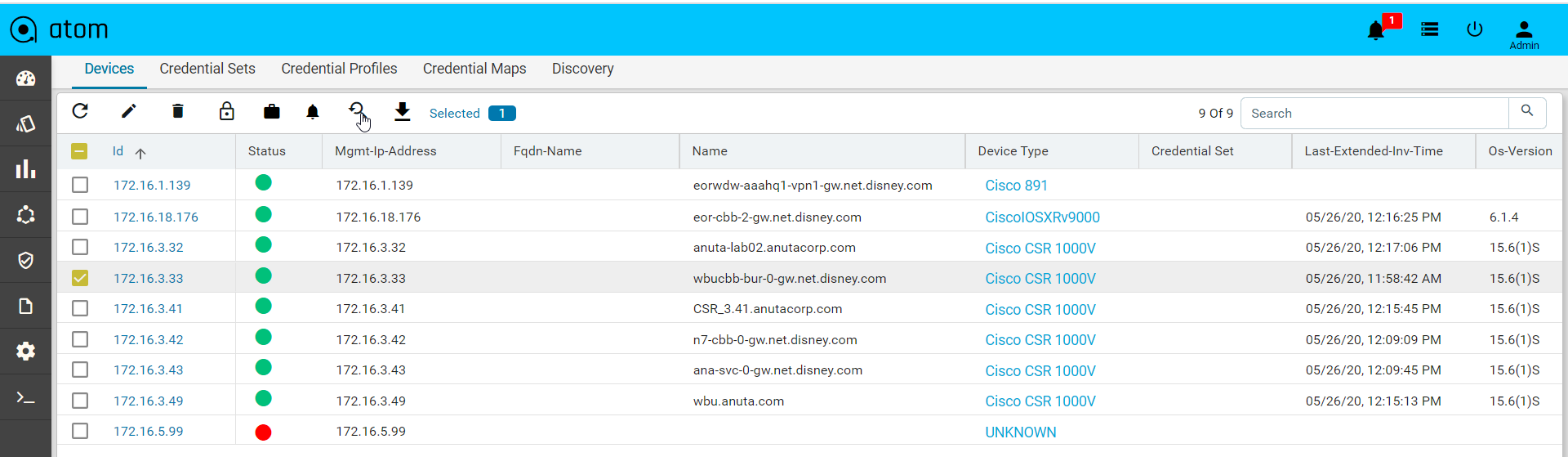

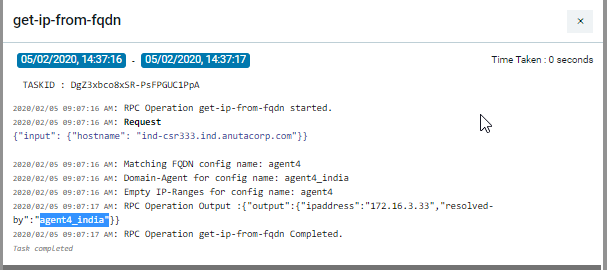

After a device is added, ATOM runs the onboarding jobs described in the section “Default Jobs”.

Added devices are shown in the Devices grid. The device status is shown in green if the device is SNMP reachable and ATOM is able to work with the device successfully.

Device Views

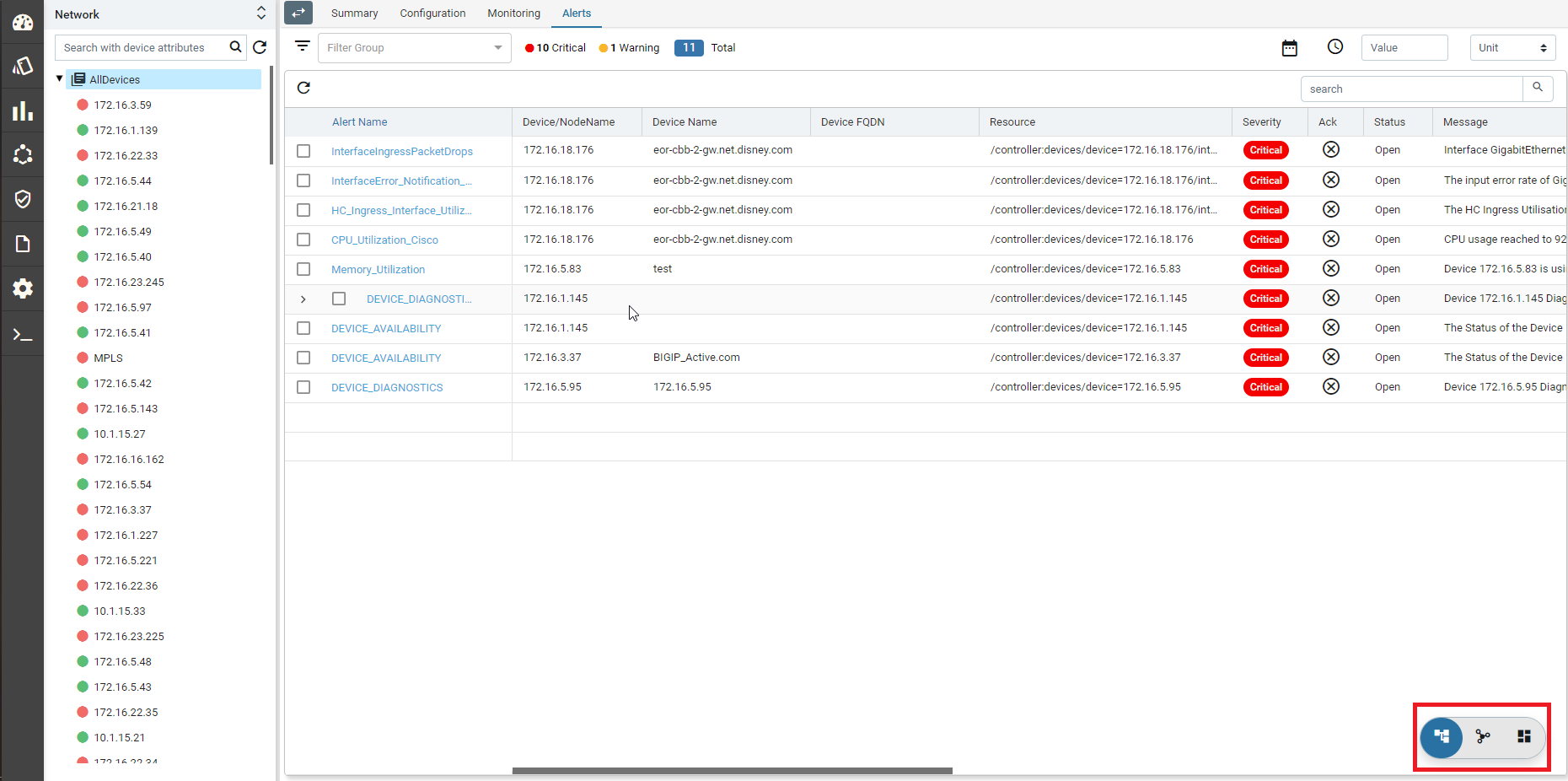

ATOM has 3 views for the devices – Tree (Device Explorer), Topology and Grid.

- Tree View

- Topology View

- Grid View

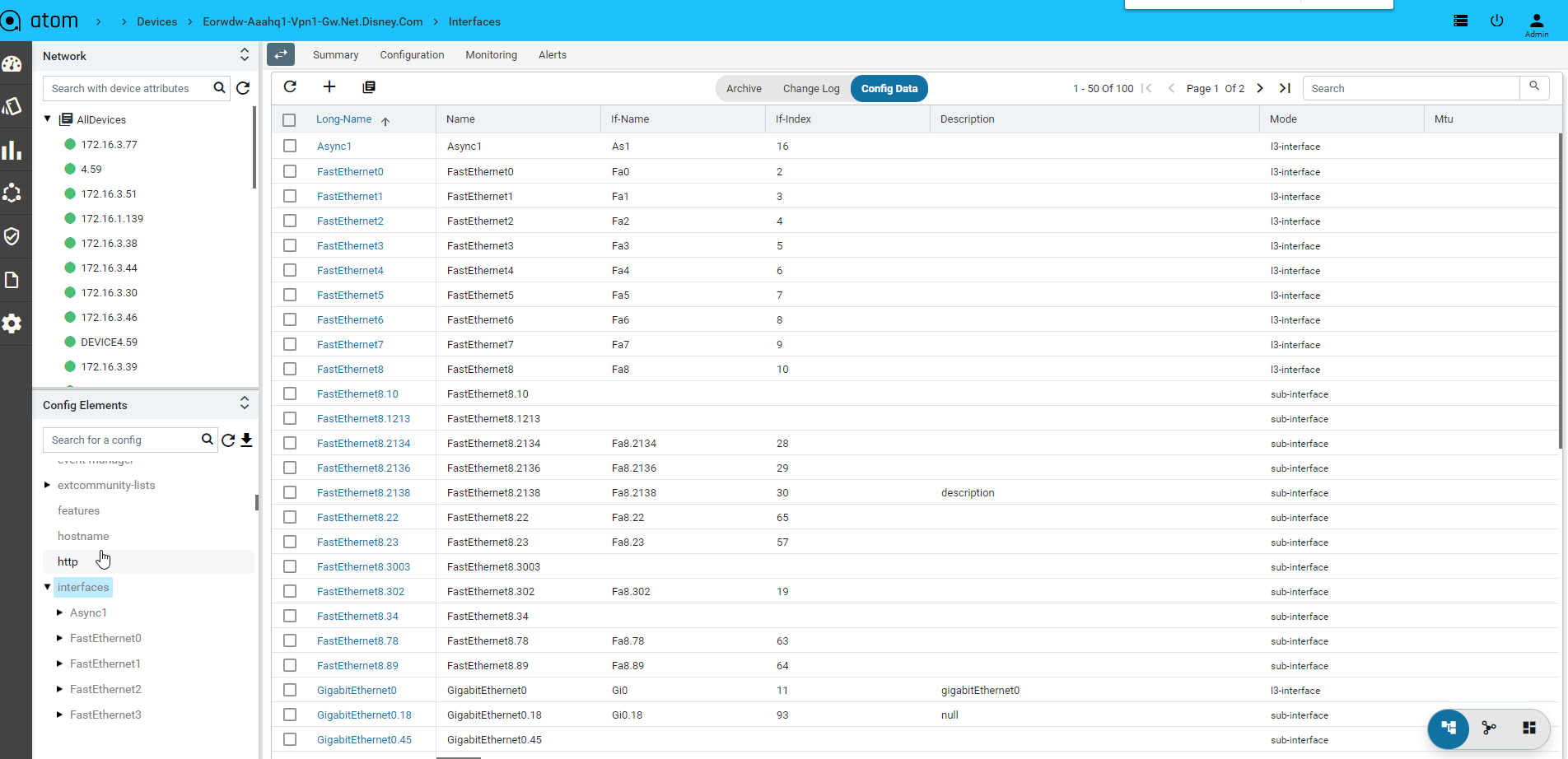

Device Explorer

The Device Explorer view presents devices and their associated configuration and observability elements in a logical hierarchy. This view contains the available device groups and their associated devices. By default, all devices are part of the AllDevices group.

A device group contains all the corresponding device details. Each group and node has the following sections:

- Summary: Provides the device platform, version, serial number, current operating OS, device hardware health, interface summary, configuration compliance violations, active alerts and recent activity.

- Configuration: Provides a summary of configuration-related operations.

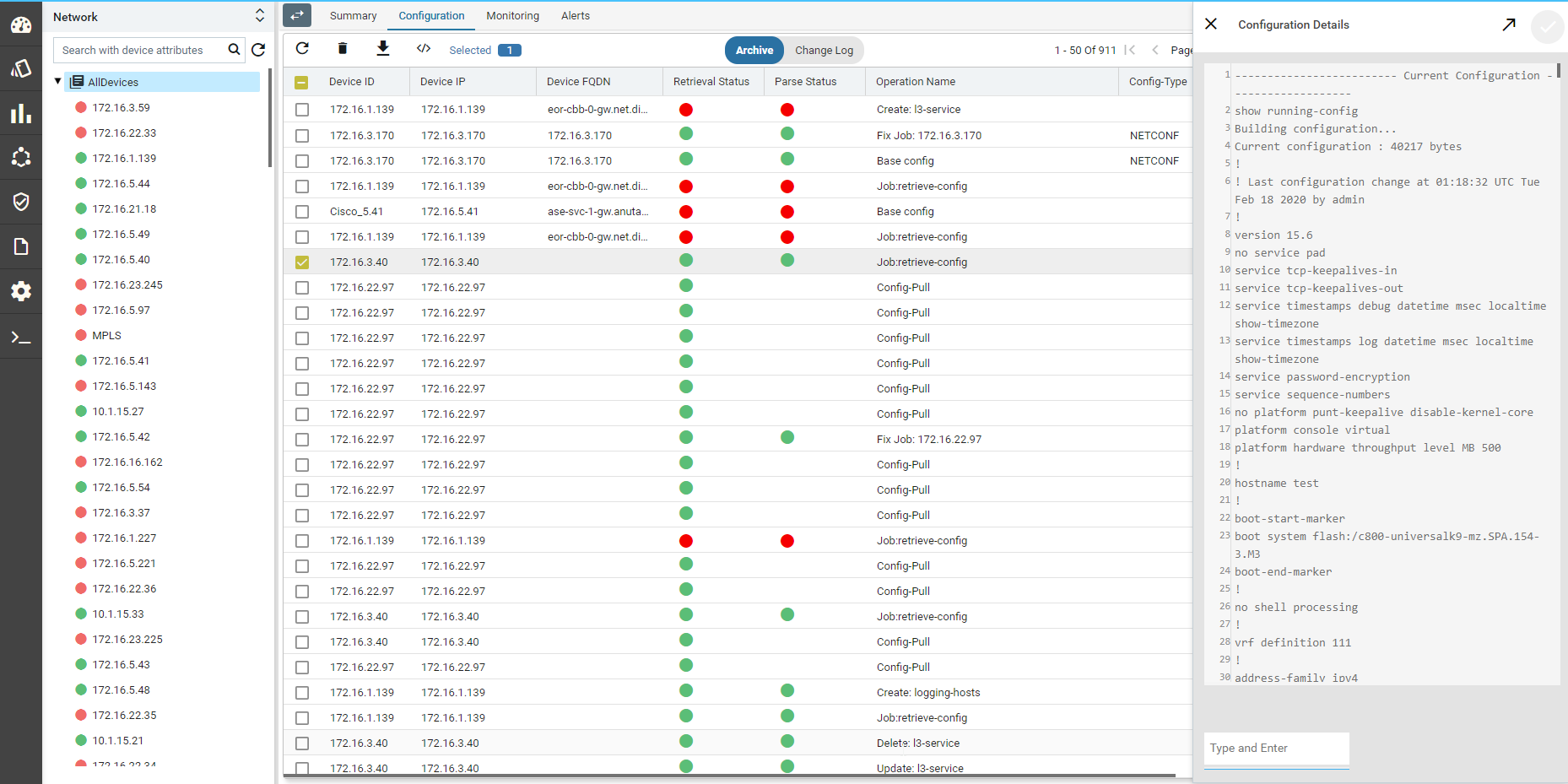

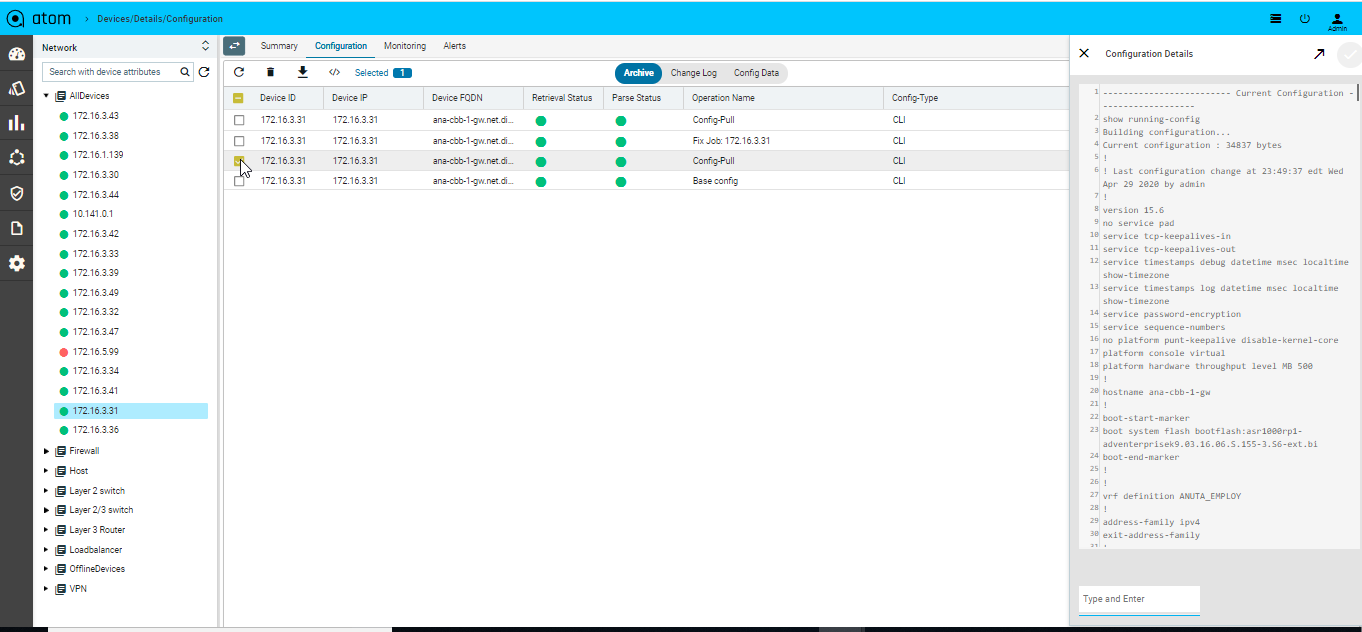

- Config Archive: Shows each configuration retrieval, with its type and its retrieval and parsing status.

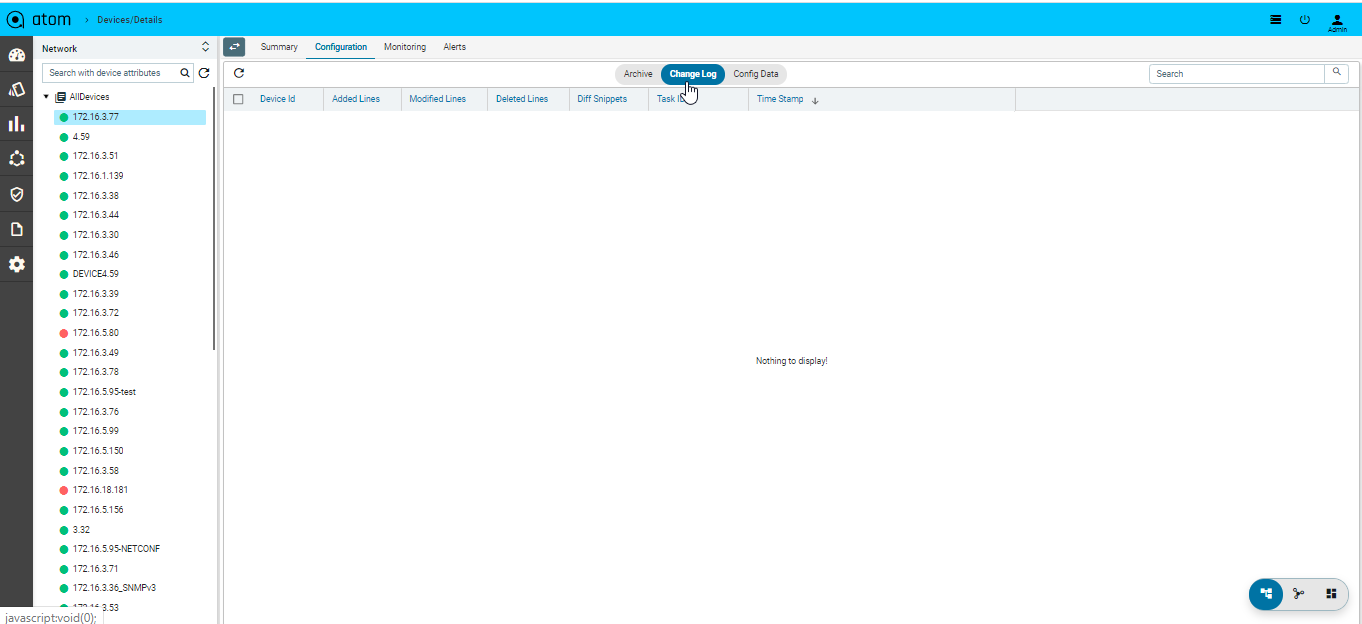

- Changelog: Summarizes configuration changes, such as the number of lines added, deleted or modified, when the changes were made, and the corresponding changes.

- Config Data: Provides the entire configuration tree by parsing the configuration into YANG models. This is not available for device groups, because a group can contain heterogeneous models depending on the grouping criteria and the provisioning interface, such as ATOM abstract device models, OpenConfig (OC) or native models.

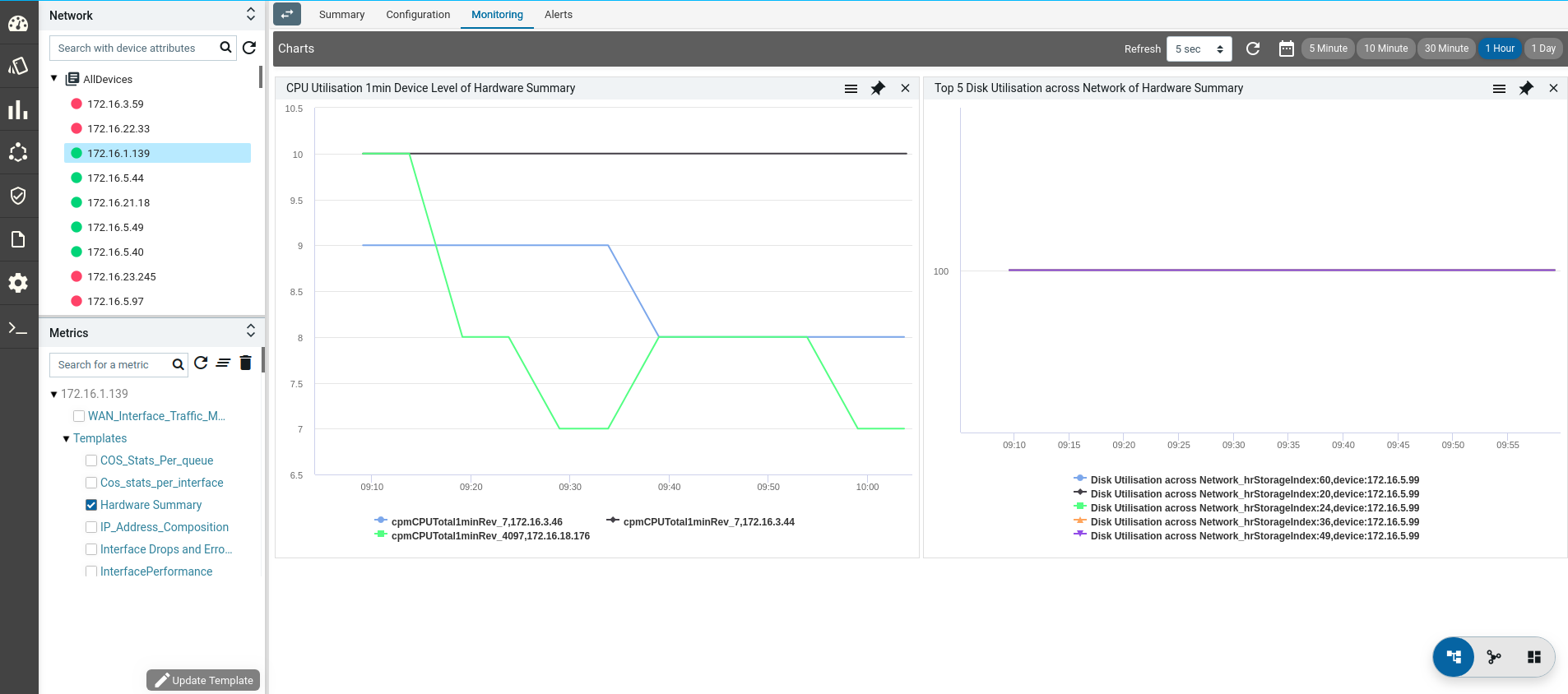

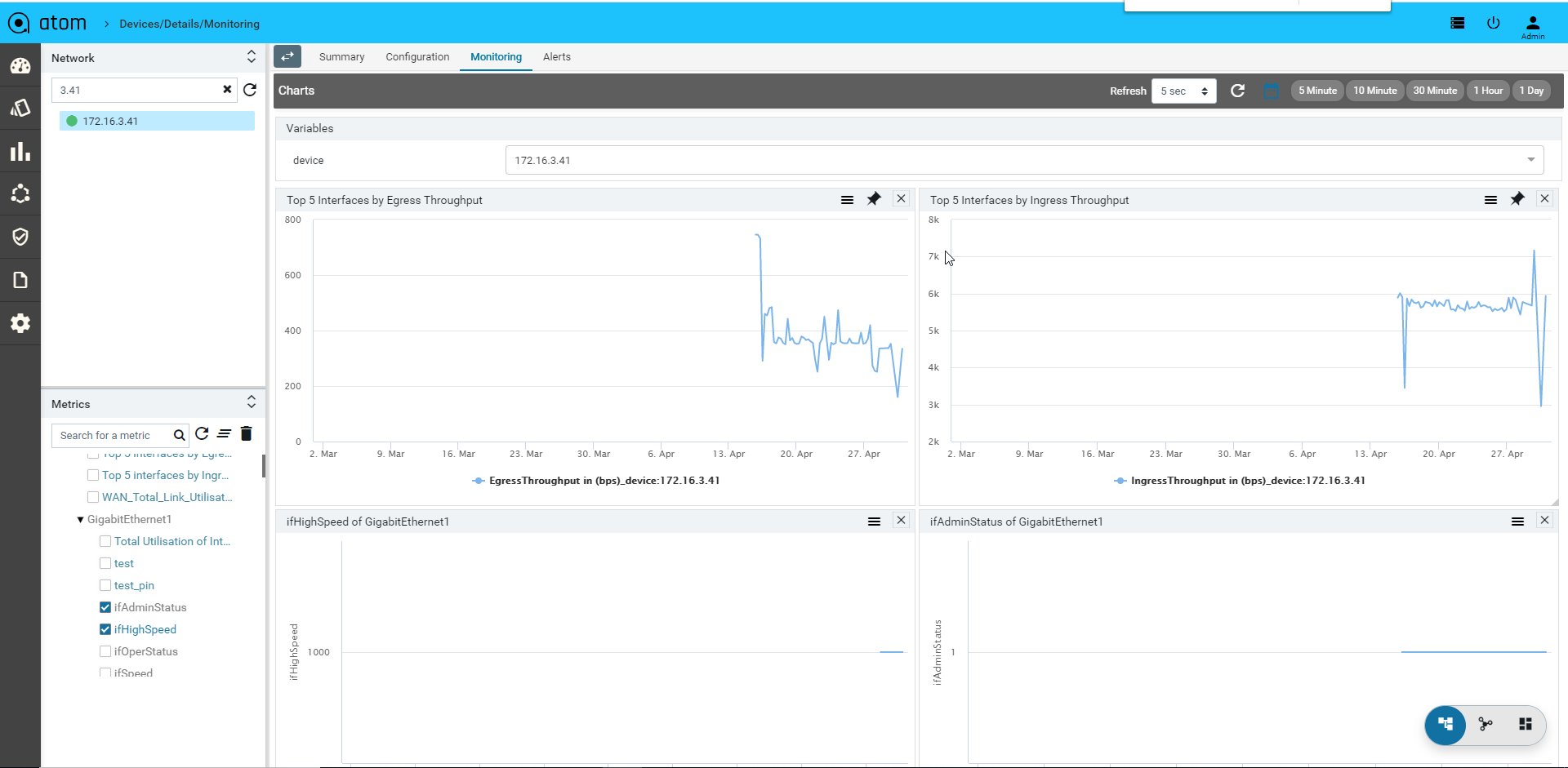

- Monitoring: Contains all templates and charts, inherited from the group or node level. The default template is shown as the monitoring summary. Refer to the Monitoring Guide for more details.

- Alerts: Shows all active alerts and their history by default. An alert filter view is also available to search and prioritize alerts. Refer to the Alerting Guide for more details.

Each device-group view has a customizable Summary dashboard.

Device Actions

ATOM supports common device actions. These actions can be performed from the Device Grid view on one or more devices, or from within the device-specific view, which is discussed in the Device Summary section.

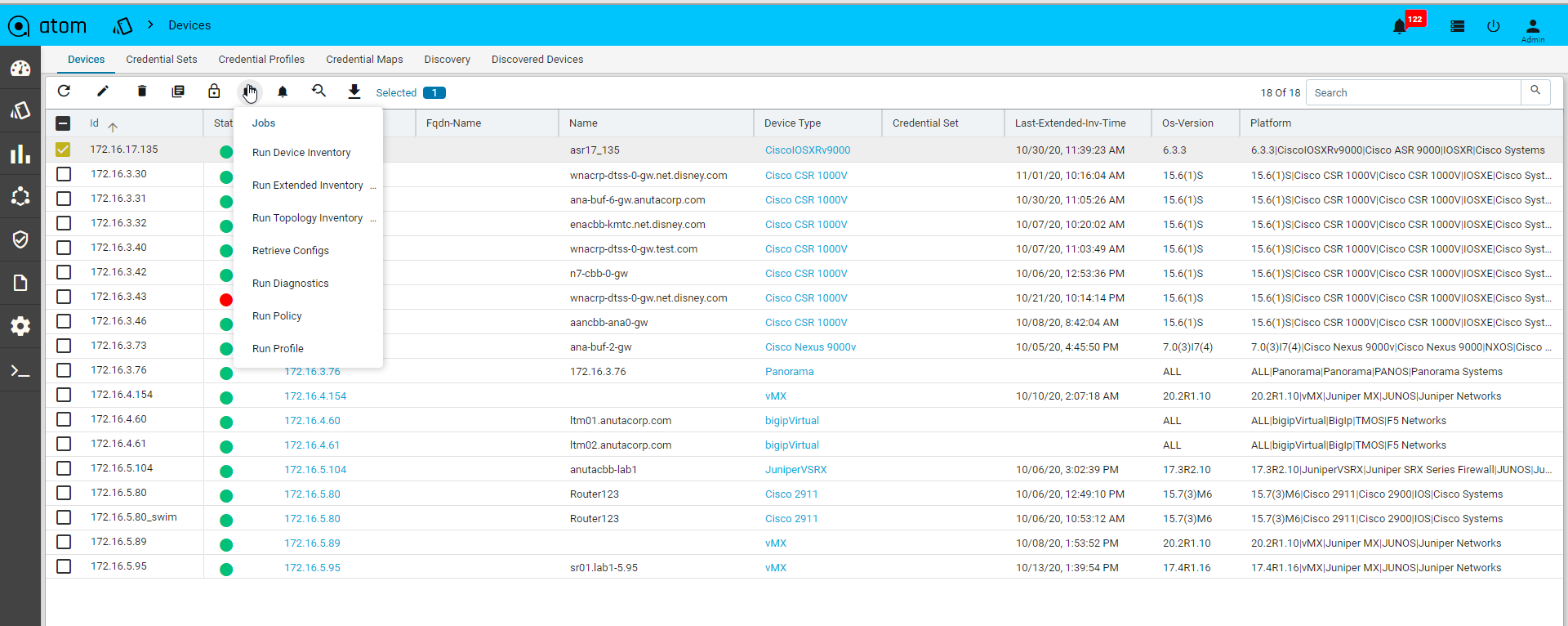

Jobs & Subscriptions

Various Collection & Diagnostics jobs can be invoked.

- Navigate to Devices > select one or more devices

- Click Jobs and select the job to run:

- Jobs action -> Run Device Inventory

- Jobs action -> Run Extended Inventory

- Jobs action -> Run Topology Inventory

- Jobs action -> Retrieve Configs

- Jobs action -> Run Diagnostics

- Jobs action -> Run Policy

- Jobs action -> Run Profile

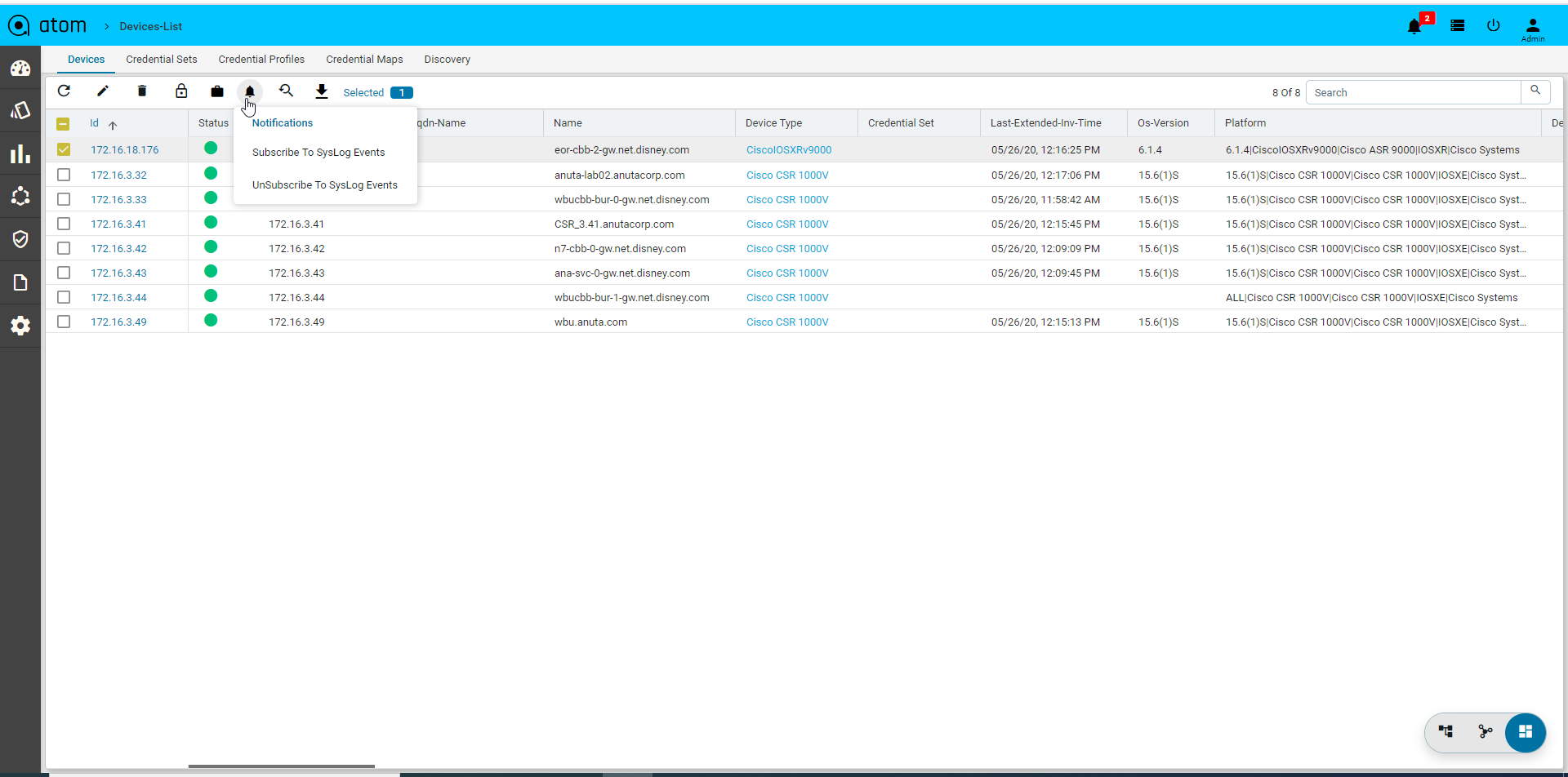

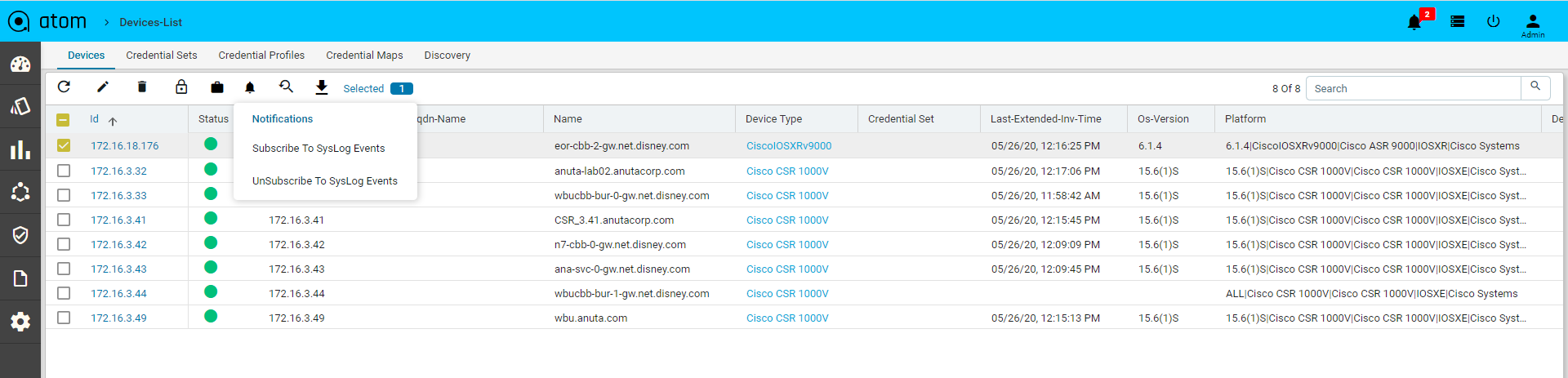

- Click Subscriptions to configure a Syslog subscription on the devices

- This configures ATOM as a Syslog receiver on the device, which is a configuration change on the device.

Exporting Device Information

You can export device information in either XML or JSON format.

- Navigate to Resource Manager > Devices > Grid View(Icon) > Devices

- Select one or more devices

- Click the View/Download button and select either XML or JSON
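For readers who process exported files, the Python sketch below shows the general shape of serializing device records to JSON or XML with the standard library. The field names and element layout are assumptions for illustration; ATOM's actual export schema is not shown in this guide.

```python
import json
import xml.etree.ElementTree as ET
from typing import Dict, List


def export_devices(devices: List[Dict[str, str]], fmt: str) -> str:
    """Serialize device records as JSON or XML (illustrative field names only)."""
    if fmt == "json":
        return json.dumps({"devices": devices}, indent=2)
    if fmt == "xml":
        root = ET.Element("devices")
        for device in devices:
            node = ET.SubElement(root, "device")
            for key, value in device.items():
                ET.SubElement(node, key).text = str(value)  # one child element per field
        return ET.tostring(root, encoding="unicode")
    raise ValueError("fmt must be 'json' or 'xml'")


devices = [{"ip-address": "10.1.0.1", "platform": "IOS-XE"}]
print(export_devices(devices, "xml"))
# <devices><device><ip-address>10.1.0.1</ip-address><platform>IOS-XE</platform></device></devices>
```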

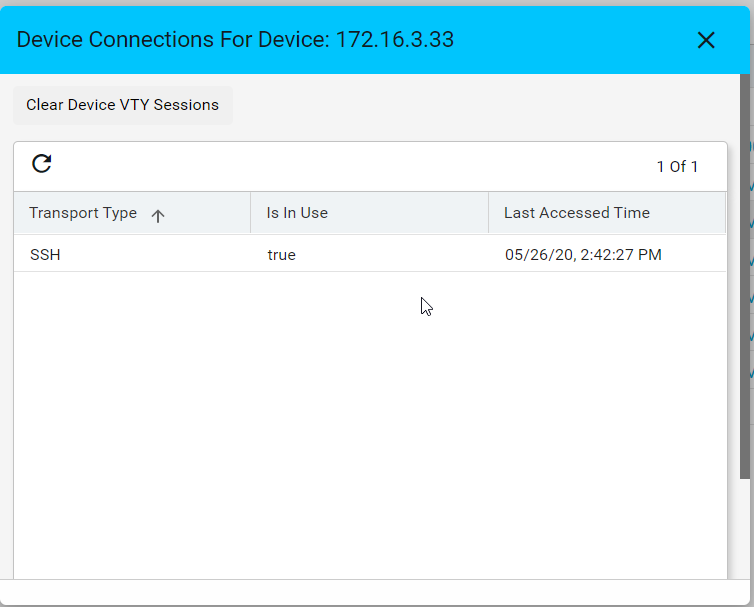

VTY Sessions

Use VTY Sessions to view the active VTY sessions on a device.

- Navigate to Resource Manager > Devices > Grid View(Icon) > Devices

- Select any device

- Click the VTY Sessions button

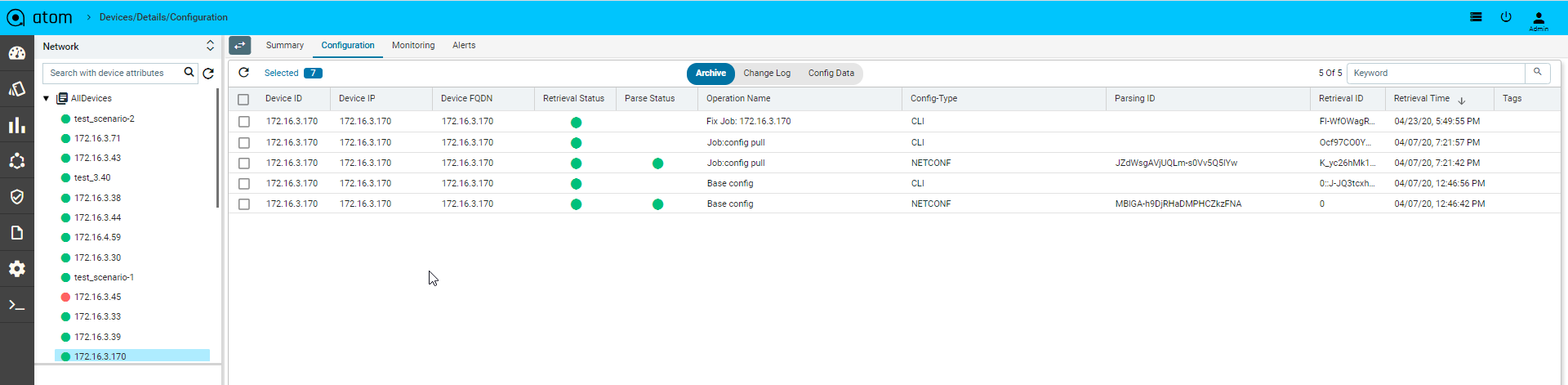

Default Jobs

The following jobs run during device onboarding, in the order listed.

- Device Inventory: Gathers the platform and OS version through SNMP and brings the device to the ONLINE state. If the platform is not found in ATOM, refer to the ATOM Platform Guide.

- Device Extended Inventory: Collects the serial number, interface performance, health, availability, etc.

- Device Diagnostics: ATOM performs reachability checks through ping, SNMP and Telnet/SSH, where applicable.

- Base Config Pull or Config Retrieval: Retrieves the configuration and persists it in the database. Configuration is collected if the credential function is set to Config SNAPSHOT or any of the PROVISIONING functions. The Build data model flag is used to parse the configuration from the specified config source snapshot into YANG entities.
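The job order and the config-retrieval condition above can be expressed as a small Python sketch. The function identifiers (`CONFIG_SNAPSHOT`, names ending in `PROVISIONING`) are hypothetical stand-ins for the credential functions mentioned above, and skipping Config Retrieval when neither applies is this sketch's reading of the rule, not a documented ATOM implementation.

```python
from typing import Iterable, List

# Onboarding jobs in the documented order.
ONBOARDING_JOBS = [
    "Device Inventory",
    "Device Extended Inventory",
    "Device Diagnostics",
    "Config Retrieval",
]


def should_retrieve_config(credential_functions: Iterable[str]) -> bool:
    """True if Config SNAPSHOT or any PROVISIONING function is configured
    (hypothetical identifiers)."""
    return any(f == "CONFIG_SNAPSHOT" or f.endswith("PROVISIONING")
               for f in credential_functions)


def onboarding_plan(credential_functions: Iterable[str]) -> List[str]:
    """Jobs to run for a newly added device, in order."""
    retrieve = should_retrieve_config(credential_functions)
    return [job for job in ONBOARDING_JOBS
            if job != "Config Retrieval" or retrieve]


print(onboarding_plan(["SNMP"]))                          # first three jobs only
print(onboarding_plan(["SNMP", "NETCONF_PROVISIONING"]))  # all four jobs
```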

The example below backs up both the CLI and the NETCONF XML configuration and parses the XML version.

The Config Type column shows the source of each configuration retrieval.

All of the above operations can be customized for any platform as required, and scheduled like other collection jobs.

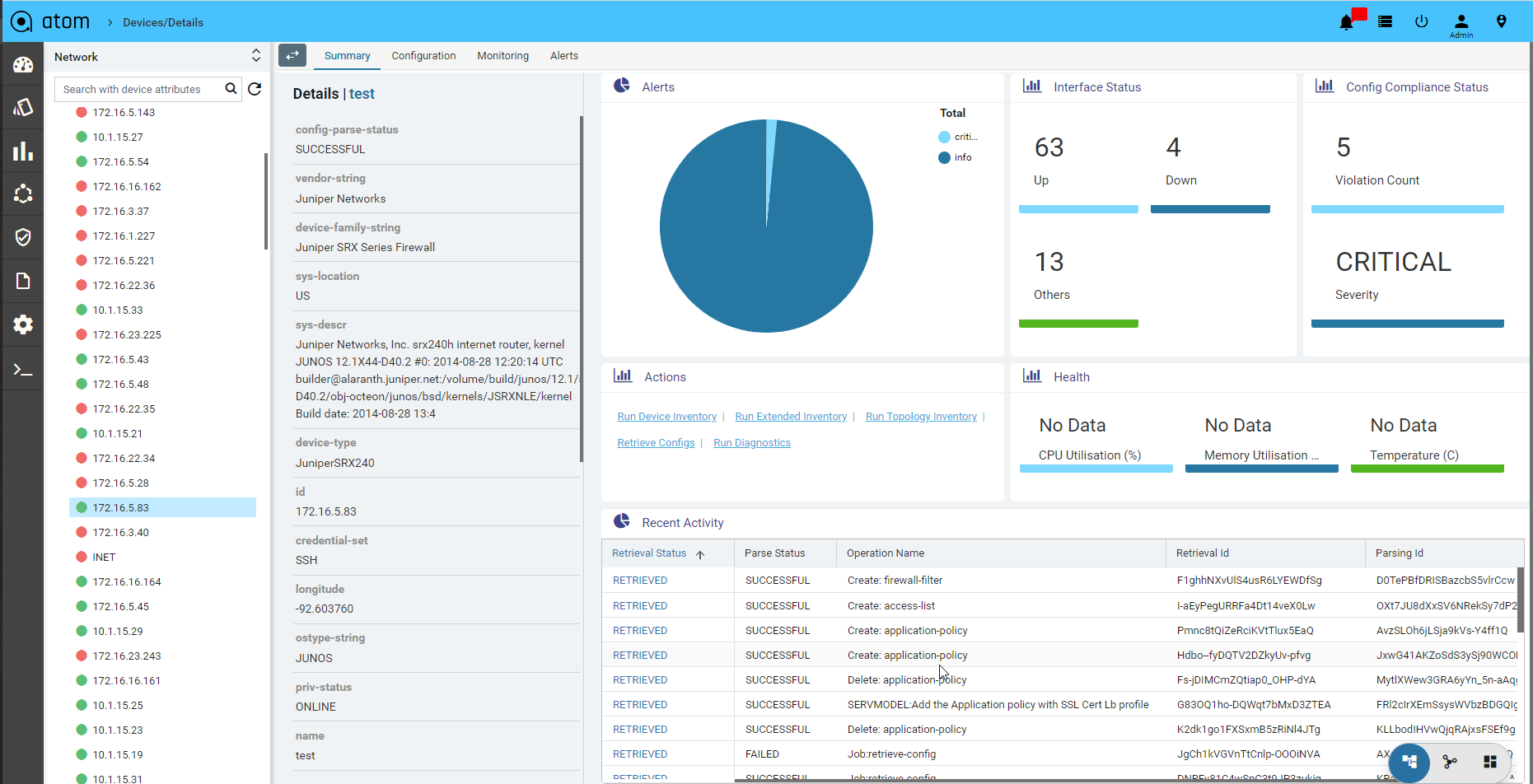

Device Summary

Device summary view provides a quick snapshot of important device attributes including Alarm summary, interface summary, recent configuration change history and health.

Device Summary also provides access to most popular device actions and quick links to frequently used activities.

- Navigate to Devices > select a device

- Click Device > Summary to view the details associated with each attribute.

Configuration Management

Configuration Archive

ATOM collects device configuration periodically, as configured in Jobs > Configuration, or upon a configuration change event from the device. To trigger configuration collection through config change notifications, ATOM should be configured to receive config change notifications through SNMP traps or Syslog.

- To view device configuration, navigate to Devices > select one or more devices

- Click the “Configuration > Archive” tab

- Select an entry in the grid

- The CLI/XML configuration is displayed in the Details view

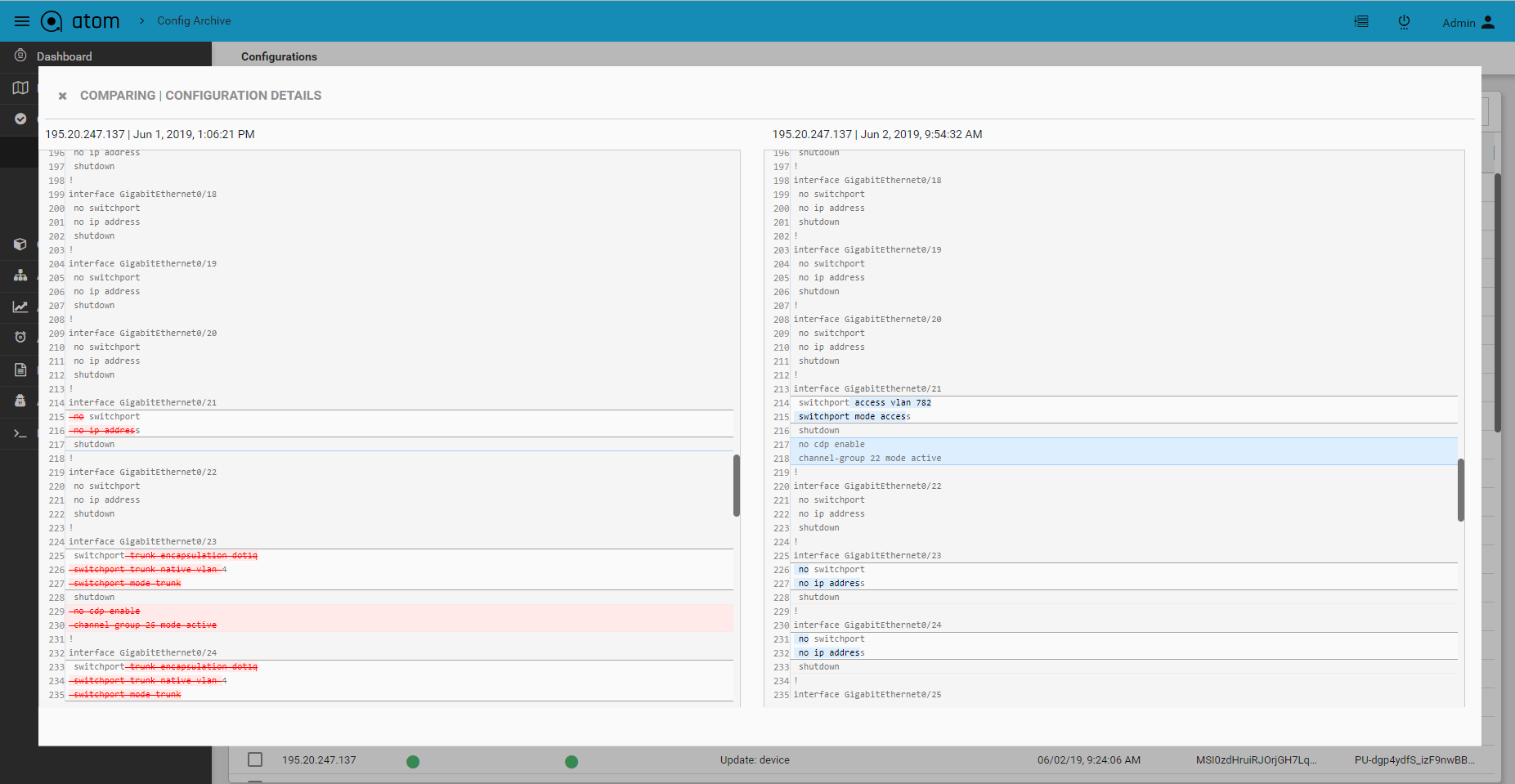

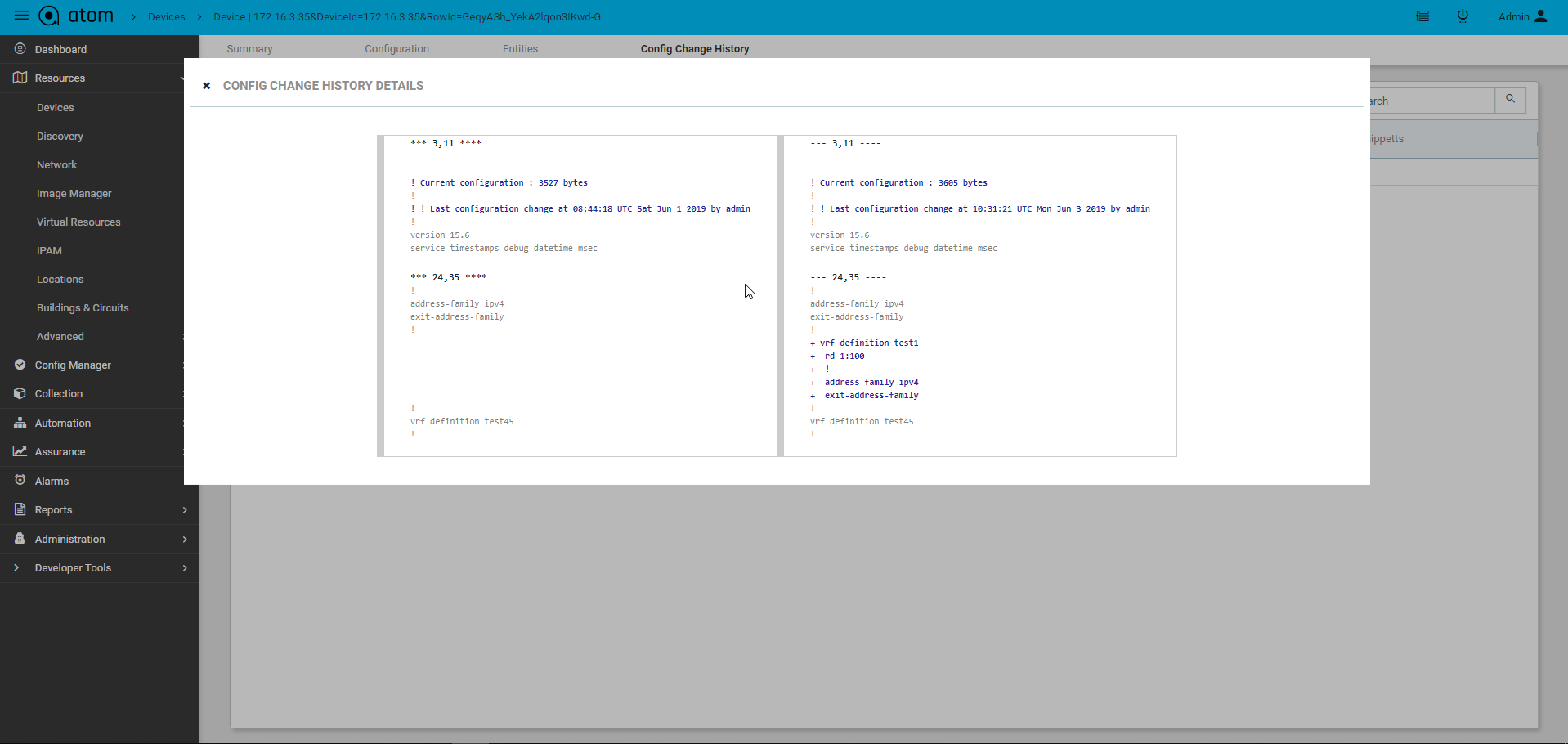

Configuration Diff

Configuration differences across various revisions can be viewed by selecting two versions from the Configuration archive grid.

- To view device configuration, navigate to Devices > select one or more devices

- Click on the “Configuration > Archive” Tab

- Search configuration grid using tags or other attributes

- Select two configuration revisions

- Click on “Compare” to launch configuration diff view
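How ATOM computes its diff view is not specified here, but the idea can be illustrated with Python's standard `difflib`. The sketch below produces a unified diff of two revisions and counts added, deleted and modified lines, the same kind of summary the Changelog section reports. Pairing replaced lines as "modified" is an assumption of this sketch; `config_diff` and `change_summary` are hypothetical helpers.

```python
import difflib
from typing import Dict, List


def config_diff(old: str, new: str) -> List[str]:
    """Unified diff between two configuration revisions."""
    return list(difflib.unified_diff(old.splitlines(), new.splitlines(),
                                     fromfile="revision-1", tofile="revision-2",
                                     lineterm=""))


def change_summary(old: str, new: str) -> Dict[str, int]:
    """Count added, deleted and modified lines between two revisions.
    Replaced lines are paired up as 'modified'; any surplus counts as added/deleted."""
    a, b = old.splitlines(), new.splitlines()
    added = deleted = modified = 0
    for tag, i1, i2, j1, j2 in difflib.SequenceMatcher(None, a, b).get_opcodes():
        if tag == "insert":
            added += j2 - j1
        elif tag == "delete":
            deleted += i2 - i1
        elif tag == "replace":
            paired = min(i2 - i1, j2 - j1)
            modified += paired
            added += (j2 - j1) - paired
            deleted += (i2 - i1) - paired
    return {"added": added, "deleted": deleted, "modified": modified}


old = "hostname r1\ninterface Gi0/1\n description uplink\nntp server 10.0.0.1"
new = "hostname r1\ninterface Gi0/1\n description core-uplink\nntp server 10.0.0.1\nlogging host 10.0.0.9"
print("\n".join(config_diff(old, new)))
print(change_summary(old, new))  # {'added': 1, 'deleted': 0, 'modified': 1}
```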

Configuration Tagging

Configuration versions can be tagged with user-provided flags or tags. Tags can be used to filter and compare configuration revisions.

- To view device configuration, navigate to Devices > select one or more devices

- Click on the “Configuration > Archive” Tab

- Select an entry from the configuration revision grid

- Click on “Update Tags”

- Enter one or more tags in the lower right of the configuration details view
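Tag-based filtering can be pictured as selecting the revisions whose tag set contains the requested tags, then passing two of them to a compare step. The Python sketch below assumes a simple revision record; `ConfigRevision`, `revisions_with_tags` and the tag names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Iterable, List, Set


@dataclass
class ConfigRevision:
    revision_id: int
    retrieved_at: datetime
    tags: Set[str] = field(default_factory=set)


def revisions_with_tags(revisions: Iterable[ConfigRevision],
                        required: Iterable[str]) -> List[ConfigRevision]:
    """Revisions carrying every tag in `required`, oldest first."""
    wanted = set(required)
    return sorted((r for r in revisions if wanted <= r.tags),
                  key=lambda r: r.retrieved_at)


archive = [
    ConfigRevision(1, datetime(2024, 1, 5), {"golden"}),
    ConfigRevision(2, datetime(2024, 2, 1), {"pre-upgrade"}),
    ConfigRevision(3, datetime(2024, 2, 2), {"post-upgrade"}),
]
# Pick the latest revision for each tag and hand the pair to a compare step.
before = revisions_with_tags(archive, {"pre-upgrade"})[-1]
after = revisions_with_tags(archive, {"post-upgrade"})[-1]
print(before.revision_id, after.revision_id)  # 2 3
```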

Configuration Change Log

The configuration archive provides a full comparison of device configuration changes across revisions. ATOM also provides a separate view that shows only the configuration modifications.

This view can be enabled from Admin Settings:

- Navigate to Administration > System > General Settings > Admin Settings

- Edit “Admin Settings”

- Set “generate-config-inventory-event” to true

Config change history for devices can be tracked as follows:

- Navigate to Devices (Tree View) > select device(s)

- Click on the “Configuration” Tab

- Click on the “Change Log” Tab

Configuration Change Management – Create/Update/Delete

The configuration archive discussed in the “Configuration Management” section provides a read-only view of the device CLI configuration. Additionally, ATOM provides model-driven configuration for create, update and delete operations. This includes the following:

- Discovery of Device configuration

- Show a tree view of the configuration

- Create/Edit/Delete of Device configuration

Configuration can be edited from the “Config Data” view:

- To view device configuration, navigate to Devices > select a device

- Click the Configuration > Config Data tab

- From the tree view, select a node; the available operations are shown on the right-hand side

Note: Create/Edit/Delete operations performed here send configuration instructions to the device. ATOM should be set to interactive mode from the Administration page.

Device Inventory (SNMP)

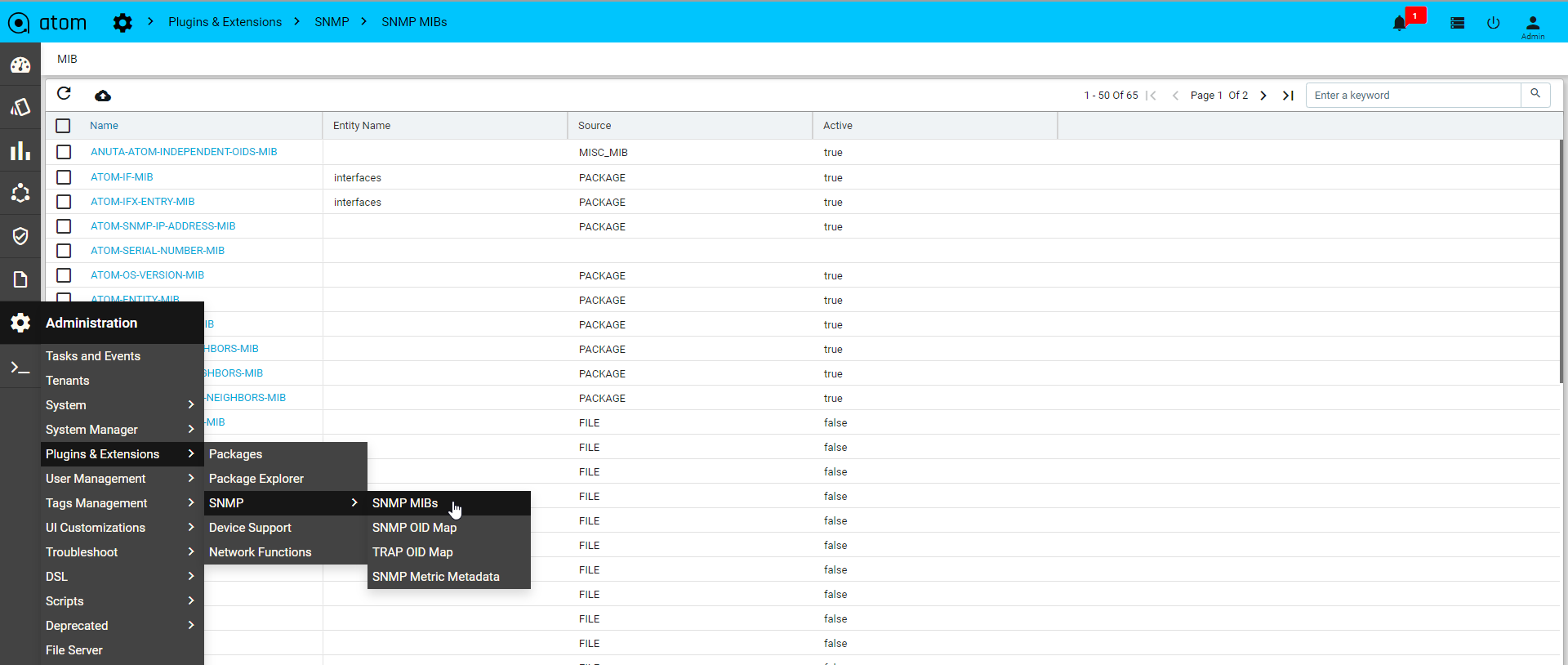

All device inventory collected through the SNMP collection job is shown in the Entities view. To view it:

- Navigate to Devices > select a device

- Click the “Monitoring” tab

- Collected data is shown under the MIB name

Adding Unmanaged devices

Some devices, with feature capabilities such as L2 only, L2 and L3, or L3 only, can be manually added to the Devices table. Such devices are not managed by ATOM: ATOM neither generates configurations for them nor pushes configurations to them. Multiple unmanaged devices can be onboarded into the resource pool, and each such device can be used during service instantiation.

To add an Unmanaged device, do the following:

- Navigate to Resources > Devices > Add Device

- In the Create device screen, select the Unmanaged option

Enter values in the following fields:

- Host Name: Enter a name for the device

- Device Capability: Select one or more capabilities from the available list.

For example, if you want the device to behave as an L3 device, choose L3Router from the list.

- Device Type: Select the category to which the device belongs.

Add network connections between the null device and its peer device as follows:

- Source Interface: Select the interface, on the null device, from which the network connection should originate.

- Peer Device: Select the device, managed by ATOM, as the peer device.

- Peer Interface: Select the interface on the peer device where the network connection should terminate.

Adding Dummy devices

In some scenarios, you may need devices for which configurations are created as part of a service but are not pushed to any actual device. These logical entities are termed Dummy Devices; they have no real-world counterpart with a pingable IP address.

Monitoring

ATOM enables you to create Assurance profiles to facilitate 24×7 uptime of your network. The Closed Loop Automation (CLA) framework allows you to define policies and the remediation actions to take when those policies are violated.

ATOM collects operational & performance metrics from multiple data sources such as SNMP, SNMP traps, Syslog and Streaming Telemetry and stores them in a time-series database.

The following activities can be performed on the metrics:

- Visualize Data Using Charts & Reports

- Alerts against thresholds defined on the Metrics

- Alert Dashboards – Collection of Predefined & User Defined Dashlets

- Alert routing to email, Slack, etc.

- Actions on Alerts

- Closed Loop Automation Actions on the Alerts

Please refer to “ATOM User Guide – Performance Management & Alerting” for further details.

Network Topology

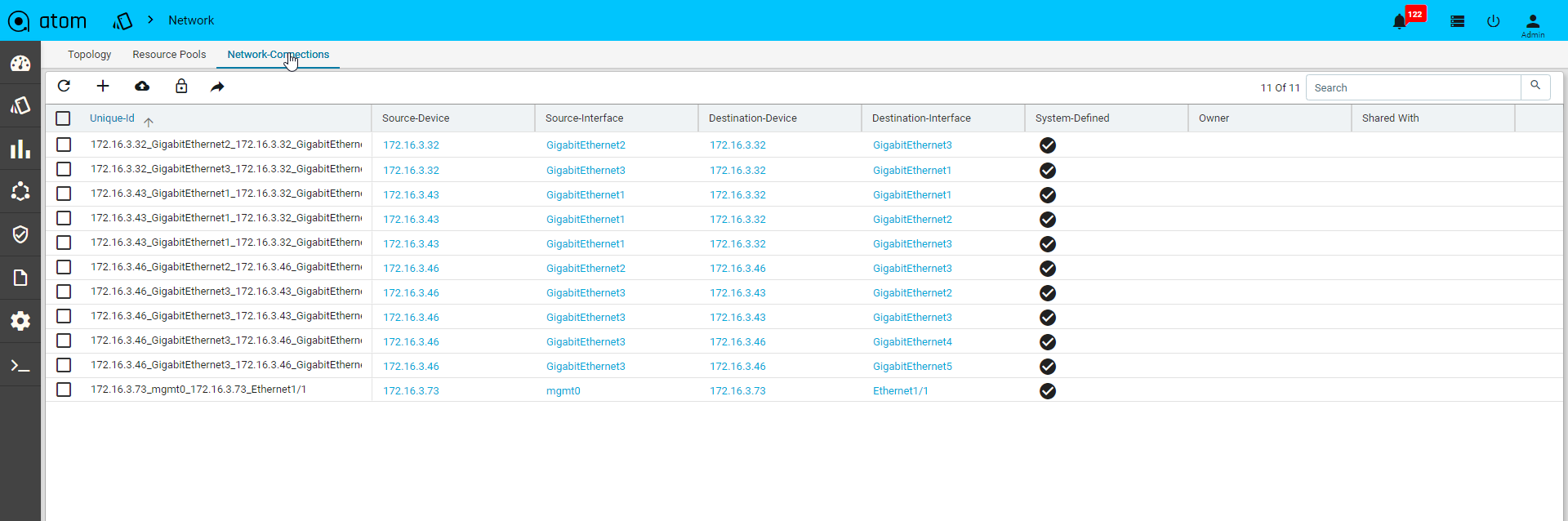

Network Connections

Network connectivity between devices is discovered using the Layer 2 discovery protocols CDP and LLDP. Where CDP/LLDP is not supported or not enabled on a device, network connections can be added manually from Network Connections.

NOTE: Network connections should be added manually between the devices that have LACP port channels configured on them.

To add a Network Connection, do the following:

- Go to Resource Manager > Network

- Click Network Connections and click Add.

- In the Create Network Connection screen, enter the values in the following fields:

- Unique ID: This is a system-generated ID for a network connection.

- Source Device: Select a Device (origin of the network connection)

- Source Interface: Enter a name for the interface on the source device

- Destination Device: Select a Device (the end of the network connection)

- Destination Interface: Enter a name for the interface on the destination device. A Network Connection is established between the interfaces of the source and the destination devices.

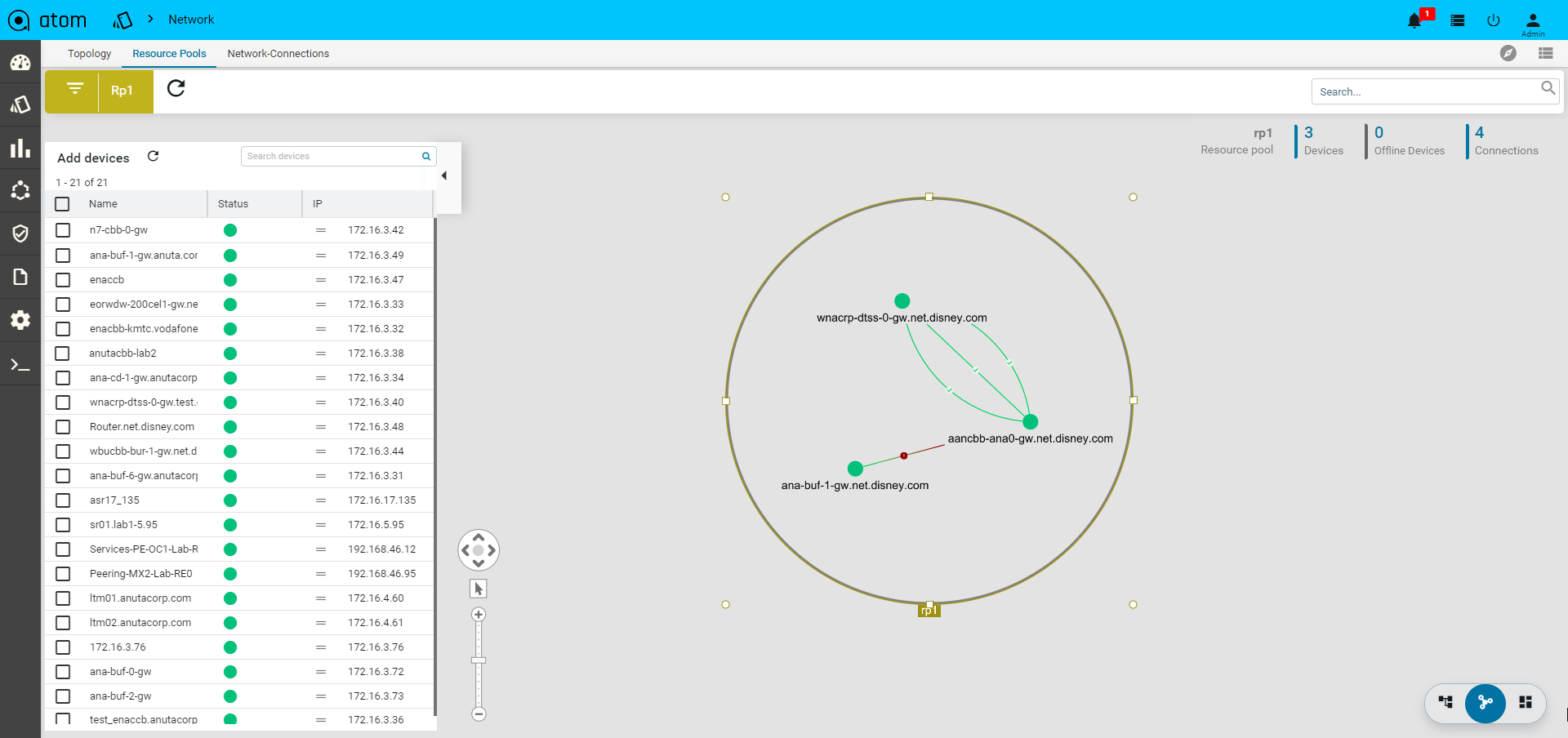

Network Topology

All devices that have network connections are displayed in the topology view.
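In other words, the topology view is built from network connections, whether discovered through CDP/LLDP or added manually. The Python sketch below builds an adjacency map from connection records with the fields listed in the previous section; it assumes links are bidirectional, and `NetworkConnection` and `build_topology` are hypothetical names.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Set


class NetworkConnection(NamedTuple):
    source_device: str
    source_interface: str
    destination_device: str
    destination_interface: str


def build_topology(connections: List[NetworkConnection]) -> Dict[str, Set[str]]:
    """Adjacency map device -> neighbouring devices. Devices without any
    connection never appear, so they are not part of the topology."""
    adjacency: Dict[str, Set[str]] = defaultdict(set)
    for c in connections:
        adjacency[c.source_device].add(c.destination_device)
        adjacency[c.destination_device].add(c.source_device)  # links are bidirectional
    return dict(adjacency)


conns = [
    NetworkConnection("r1", "Gi0/1", "r2", "Gi0/1"),
    NetworkConnection("r2", "Gi0/2", "sw1", "Eth1"),
]
topo = build_topology(conns)
print(sorted(topo["r2"]))  # ['r1', 'sw1']
```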

Resource Pools

A resource pool is a logical abstraction for flexible management of resources managed by ATOM. A resource pool can contain child resource pools, so you can create a hierarchy of shared resources. The resource pools at a higher level are called parent resource pools. Users can create child resource pools of a parent resource pool or of any user-created child resource pool. Each child resource pool owns some of the parent’s resources and can, in turn, have a hierarchy of child resource pools to represent successively smaller units of resources.

Resource pools allow you to delegate control over the resources of a host. By creating multiple resource pools as direct children of the host, you can delegate control of those resource pools to tenants or to users within an organization.

Using resource pools can yield the following benefits to the administrator:

- Flexible hierarchical organization

- Isolation between pools, sharing within pools

- Access control and delegation
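The parent/child structure described above can be sketched as a simple tree in Python. It assumes that a parent pool's view includes the devices of all its descendant pools; `ResourcePool` and its methods are hypothetical and not ATOM's data model.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class ResourcePool:
    name: str
    # Back-reference to the parent; excluded from repr/eq to avoid cycles.
    parent: Optional["ResourcePool"] = field(default=None, repr=False, compare=False)
    devices: Set[str] = field(default_factory=set)
    children: List["ResourcePool"] = field(default_factory=list, repr=False)

    def add_child(self, name: str) -> "ResourcePool":
        child = ResourcePool(name, parent=self)
        self.children.append(child)
        return child

    def path(self) -> str:
        """Slash-separated path from the top-level pool, e.g. 'Global/US-East/DC1'."""
        return self.name if self.parent is None else f"{self.parent.path()}/{self.name}"

    def all_devices(self) -> Set[str]:
        """Devices in this pool plus those in every descendant pool."""
        result = set(self.devices)
        for child in self.children:
            result |= child.all_devices()
        return result


root = ResourcePool("Global")
east = root.add_child("US-East")
dc1 = east.add_child("DC1")
east.devices.add("fw1")
dc1.devices.update({"r1", "r2"})
print(dc1.path())                  # Global/US-East/DC1
print(sorted(root.all_devices()))  # ['fw1', 'r1', 'r2']
```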

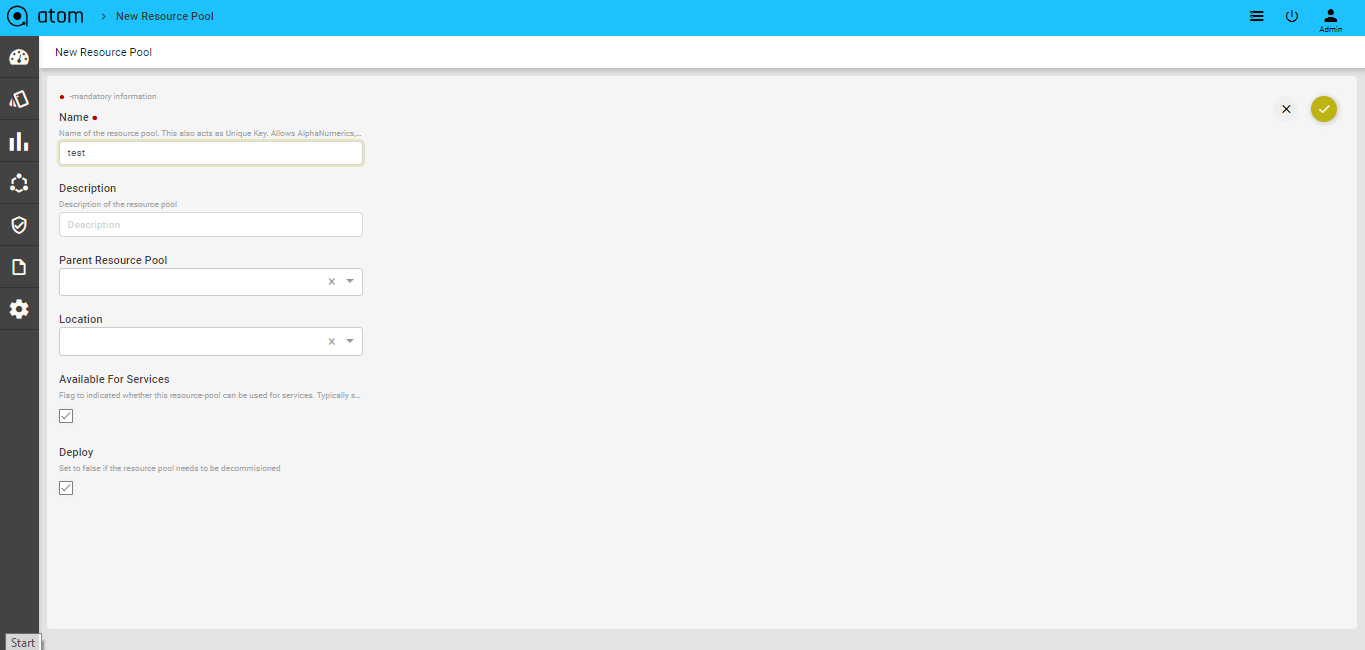

Creating a Resource Pool

- Navigate to Resource Manager > Network > Resource Pools

- In the right pane, click the Add Resource Pool button to create a Resource Pool

- In the Create Resource Pool screen, enter values in the following fields:

- Name: Enter a name for the resource pool

- Description: Enter some descriptive text for the created resource pool

- Available for Services: Select this option if the resource pool can be used for creating services.

- Parent Resource Pool: Select a resource pool that should act as the parent for this resource pool that is being created.

- Location: Select the name of the site or the geographical location where this resource pool should be created. See the section, “Locations” for more information about creating Locations and Location types.

- Deploy: Select this option if the resource pool should be deployed or used in services.

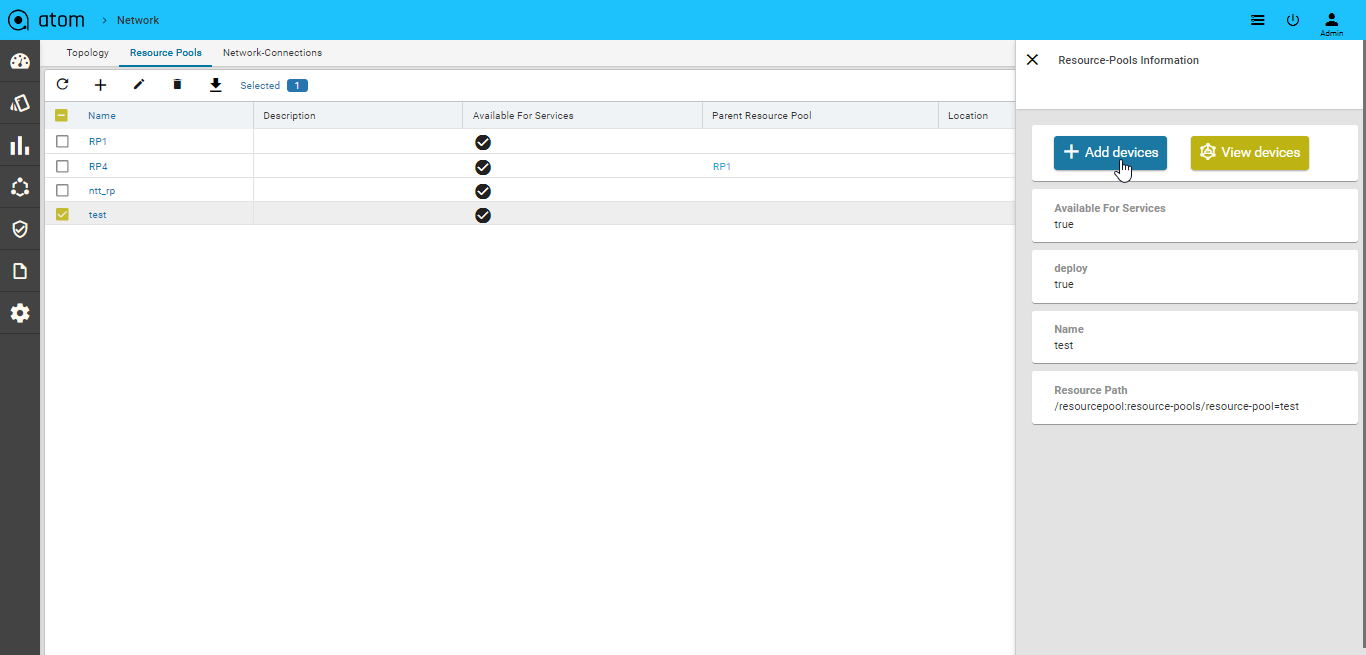

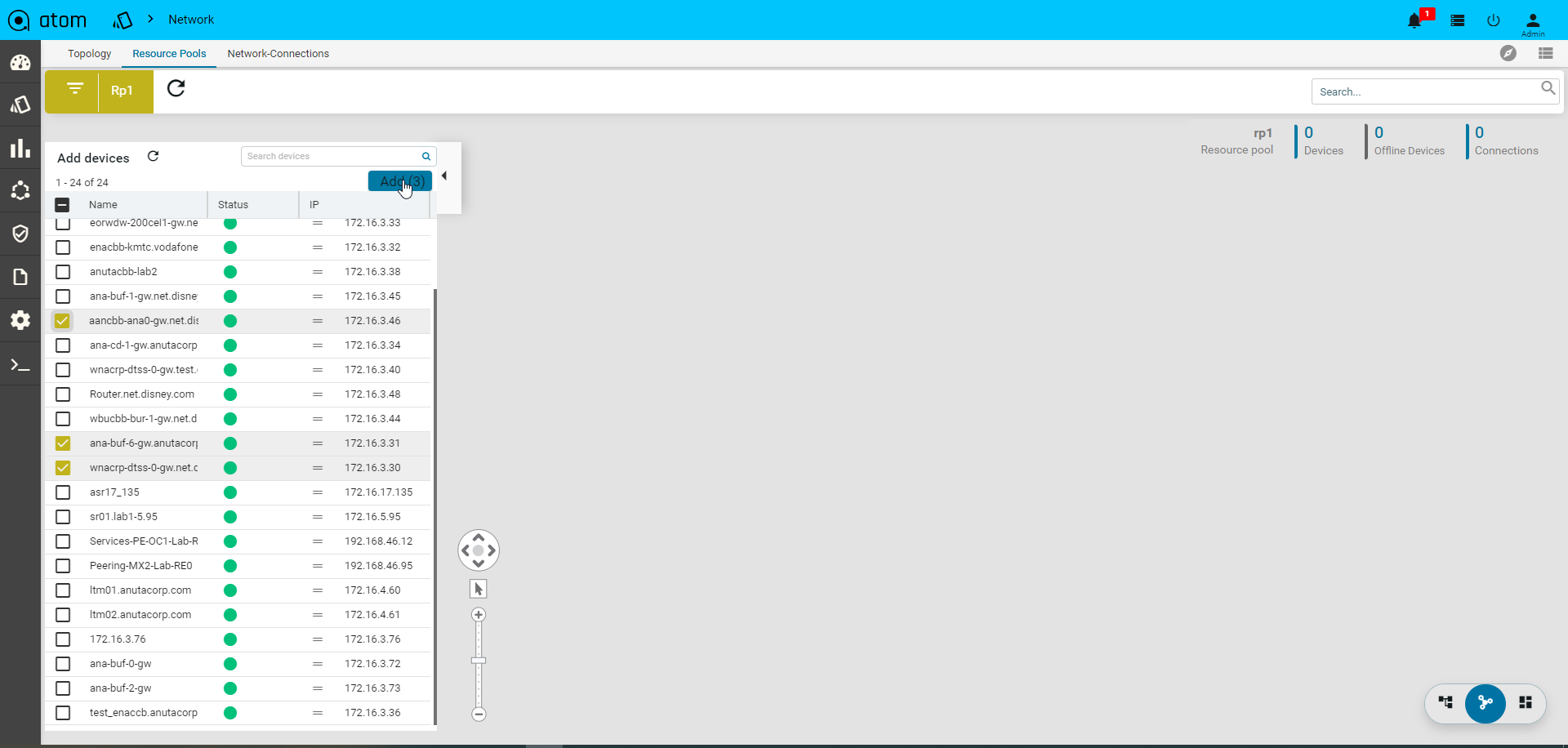

Adding Devices to a Resource Pool

- Click the created resource pool to add the required devices to it: select the resource pool > Add Devices

- All the devices available in ATOM are displayed in the left pane.

- Click Add to include the required devices in the resource pool.

- Drag and drop the required devices from the left pane to the right pane. All the selected devices are now part of the resource pool created earlier.

Locations

Devices and resource pools can be attached to a physical location. Location tagging allows devices and resource pools to be visualized on a geographical map in the topology view.

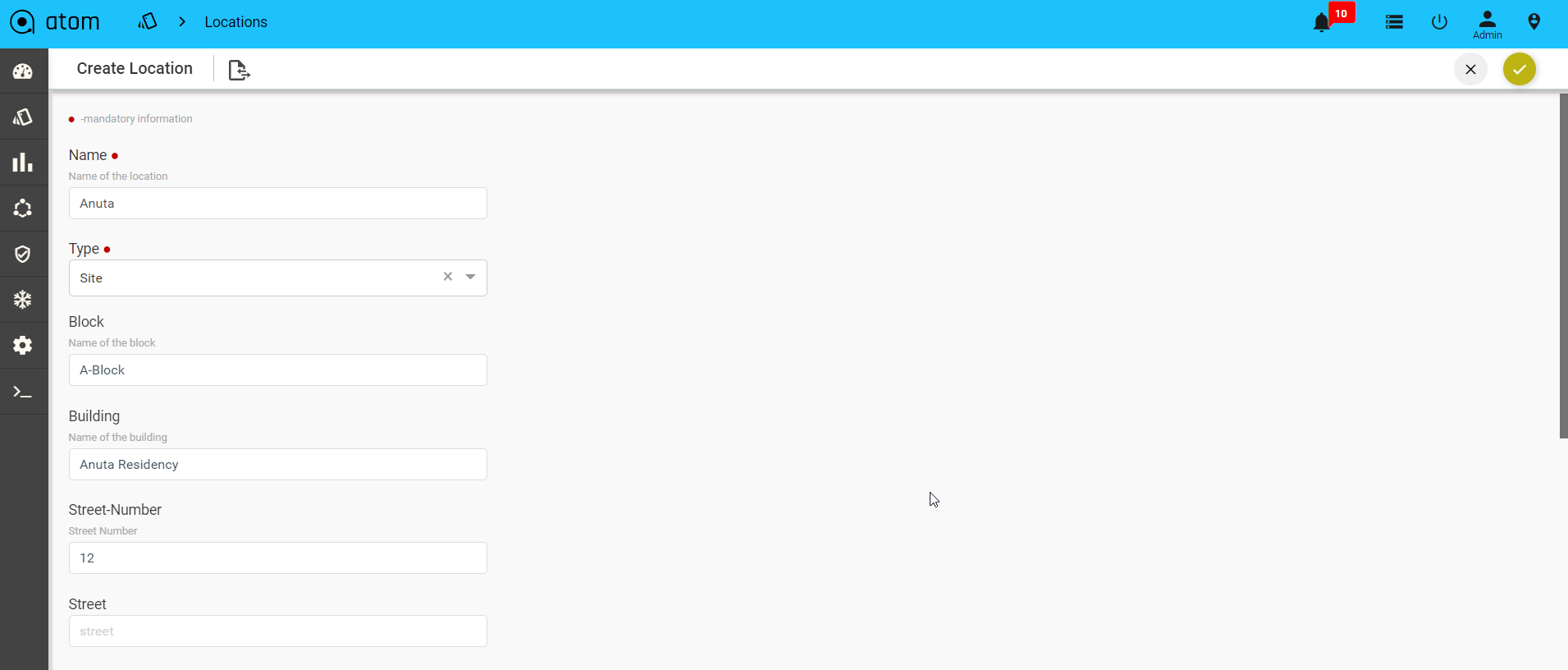

- To create or edit a location, navigate to Resource Manager > Locations > Resources-Location and click Add Location

- In the Create Location screen, enter values in the following fields:

- Name: Enter the name of the data center or site that you want to create.

- Type: Select one of the predefined location types from the drop-down menu (preferably Site).

- Block: Enter the name of the block where the location is situated

- Building: Enter the name of the Building

- Street Number: Enter the number of the street where the Building is located

- Latitude: Enter the latitude of the site.

- Longitude: Enter the longitude of the site.

- Street: Enter the street name where the building is located.

- Country: Select a country from the prepopulated list available in ATOM.

- City: Select a specific city contained in the chosen country.

- State: Enter the name of the State or province to which the city belongs.

- Zip Code: Enter the zip code of the City where the Site is located.

- Parent Location: Select one of the predefined locations (of the type, Region or Country) defined earlier.

For assigning the created Location to a Resource pool, refer to section, “Creating a Resource Pool”.

After the Resource Pool is successfully allocated to the Location, you can view it on the map: select the Resource Pool and click View on Map.

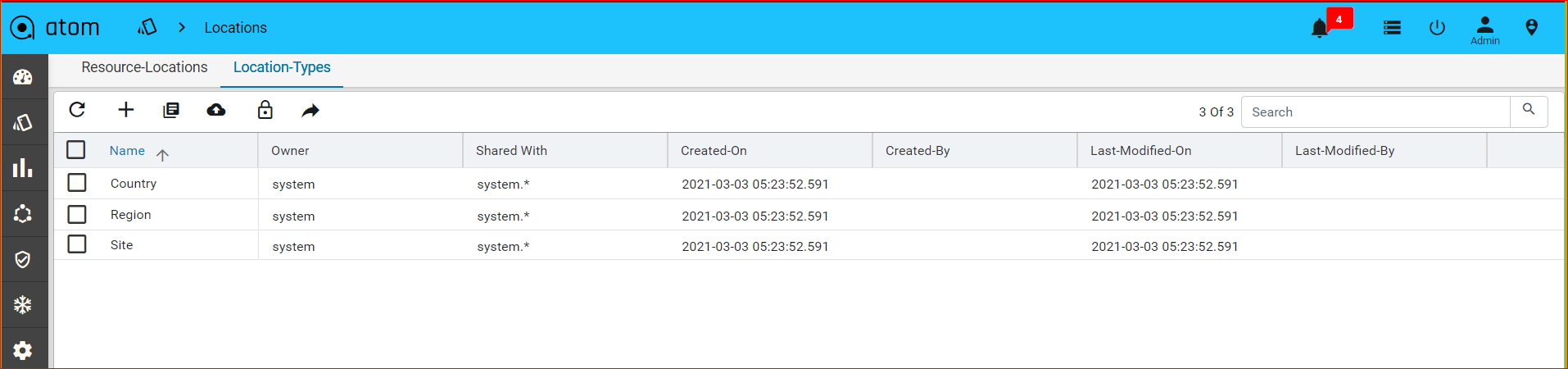

Location Types

You can add the location types that can be associated with a Location.

Navigate to Resource Manager > Locations > Location Types.

The default location types available in ATOM are Region, Country, and Data Center.

IPAM

IP Address Pool Group

For effective management of IP addresses, you can arrange IP addresses as an ordered collection and use them while instantiating a service.

- Navigate to Resource Manager > IPAM > IP Address Pool Groups

- Click Add IP Address Pool Group in the right pane

- In the Create IP Address Pool Group screen, enter values in the fields:

- Name: Enter the name of the IP address pool group

- Label: Enter the name of the label that describes the IP address pool group

- Click Add to add IP Address Pools to be included in the IP Address Pool Group

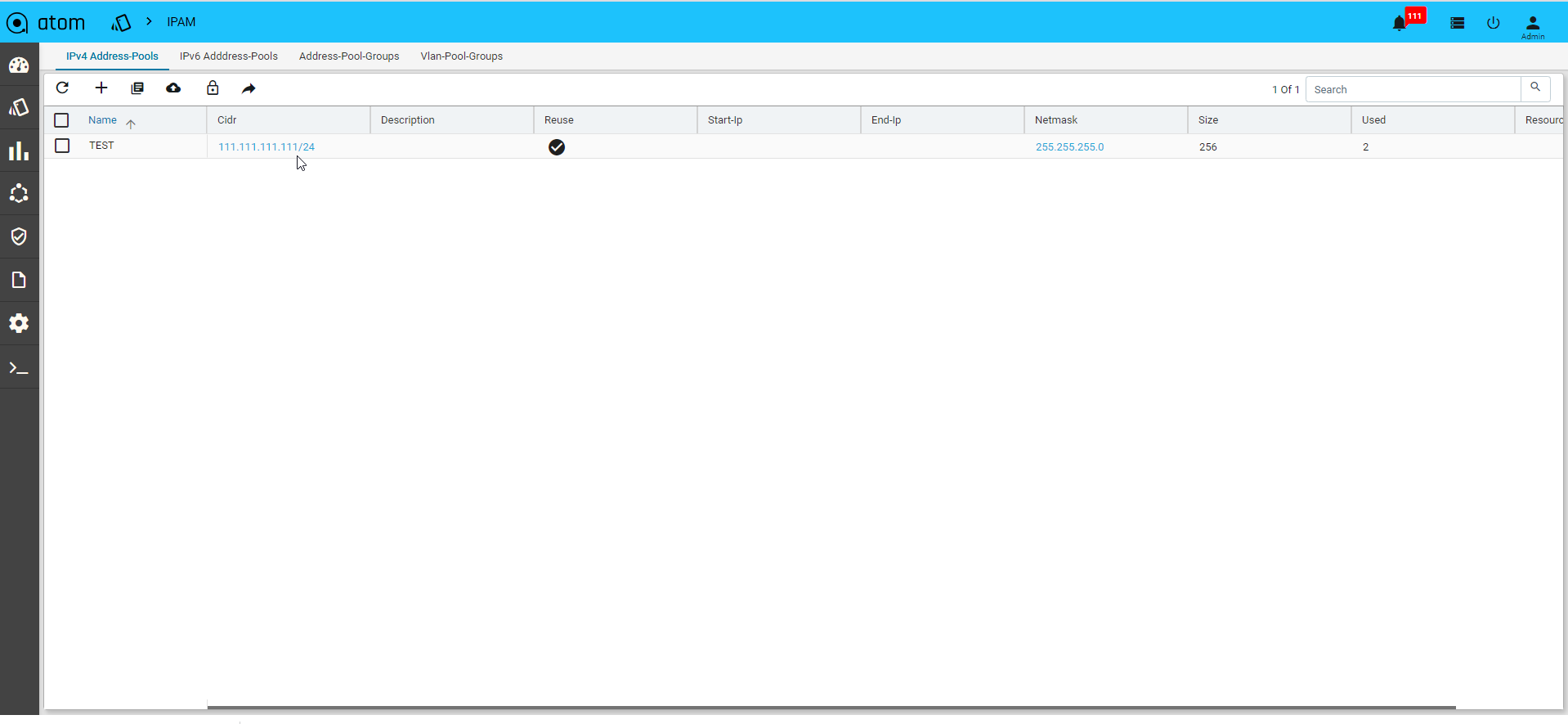

IP Address Pools

A range of IP addresses can be assigned to a pool and associated with a resource pool. These IP addresses are used during the instantiation of the service.

1. Navigate to Resource Manager > IPAM > IP4- Address Pools

2. Click Add IP Address Pool on the right pane and enter values in the following fields:

- Name: Enter a unique name for the IP address pool

- CIDR: Enter the network in CIDR notation (network address followed by a slash and the prefix length, for example, 10.10.0.0/24).

- Description: Enter the description for the created IP Address Pool

- Reuse: Select this option if the IP addresses contained in this pool should be reused across different services.

- Start IP: Enter the first IP address of the range of IP addresses.

- End IP: Enter the last IP address in the IP address range.

- Resource Pool: Select the Resource pool to which these IP addresses should be assigned. All the services that are created in these Resource Pools will use these IP addresses.
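The CIDR, Start IP, and End IP fields are related: the range should sit inside the pool's network. The sketch below illustrates that relationship with Python's standard `ipaddress` module. It assumes the Start IP to End IP range must fall inside the CIDR; the function name `validate_pool` is hypothetical and does not describe how ATOM validates pools internally.

```python
import ipaddress


def validate_pool(cidr: str, start_ip: str, end_ip: str) -> int:
    """Hypothetical check: the Start IP..End IP range must sit inside the CIDR.

    Returns the number of addresses in the range (inclusive).
    """
    # strict=True rejects a CIDR whose host bits are set, e.g. 10.10.0.5/24.
    network = ipaddress.ip_network(cidr, strict=True)
    start = ipaddress.ip_address(start_ip)
    end = ipaddress.ip_address(end_ip)

    # Membership is False when the address family differs from the network.
    if start not in network or end not in network:
        raise ValueError(f"range {start_ip}-{end_ip} is outside {cidr}")
    if start > end:
        raise ValueError("Start IP must not be greater than End IP")
    return int(end) - int(start) + 1


# Example: a range of 41 addresses taken from 10.10.0.0/24.
print(validate_pool("10.10.0.0/24", "10.10.0.10", "10.10.0.50"))  # 41
```

The same function accepts IPv6 pools, since `ipaddress` handles both address families.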

Creating IP address entries

IP address entries are the addresses from an IP address pool that have been reserved for a service.

- Click IP address pool > Action > IP address entries

IPv4

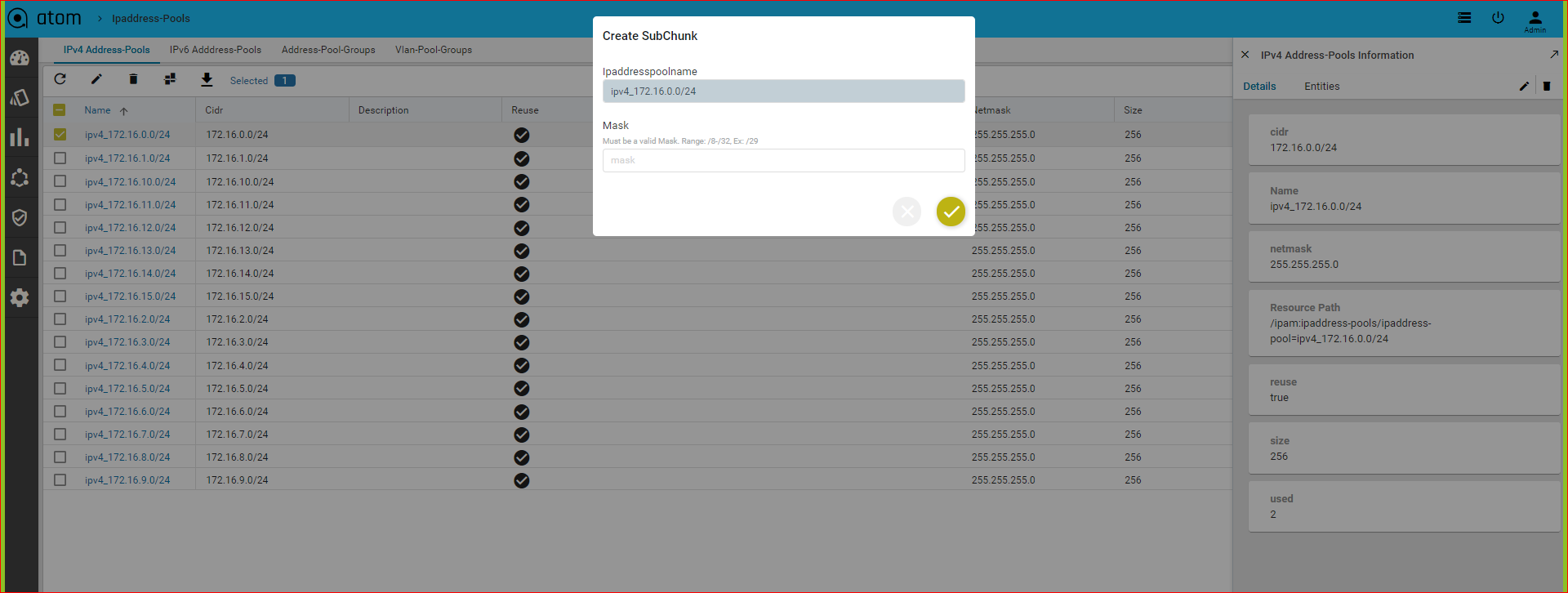

Creating Sub Chunks of the IP Address Pools

The network contained in an IP address pool can be divided into two or more smaller networks (sub chunks). The resulting sub chunks can be used for different services configured on a resource pool that is tied to the parent IP address pool.
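Dividing a pool into sub chunks is ordinary subnetting. The sketch below shows the arithmetic with Python's standard `ipaddress` module, assuming equal-sized sub chunks of a chosen prefix length. The helper `split_pool` is hypothetical; ATOM's own sub-chunk dialog may offer different options.

```python
import ipaddress


def split_pool(cidr: str, new_prefix: int) -> list[str]:
    """Divide a parent pool's network into equal sub chunks of /new_prefix."""
    network = ipaddress.ip_network(cidr)
    # subnets() raises ValueError if new_prefix is shorter than the parent prefix.
    return [str(sub) for sub in network.subnets(new_prefix=new_prefix)]


# A /24 parent pool split into four /26 sub chunks, one per service.
for chunk in split_pool("10.10.0.0/24", 26):
    print(chunk)
# 10.10.0.0/26
# 10.10.0.64/26
# 10.10.0.128/26
# 10.10.0.192/26
```

Each extra prefix bit doubles the number of sub chunks and halves their size, so a /24 split to /26 yields 2^(26-24) = 4 networks of 64 addresses each.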

IPv6

Navigate to Resource Manager > IPAM > IP6- Address Pools

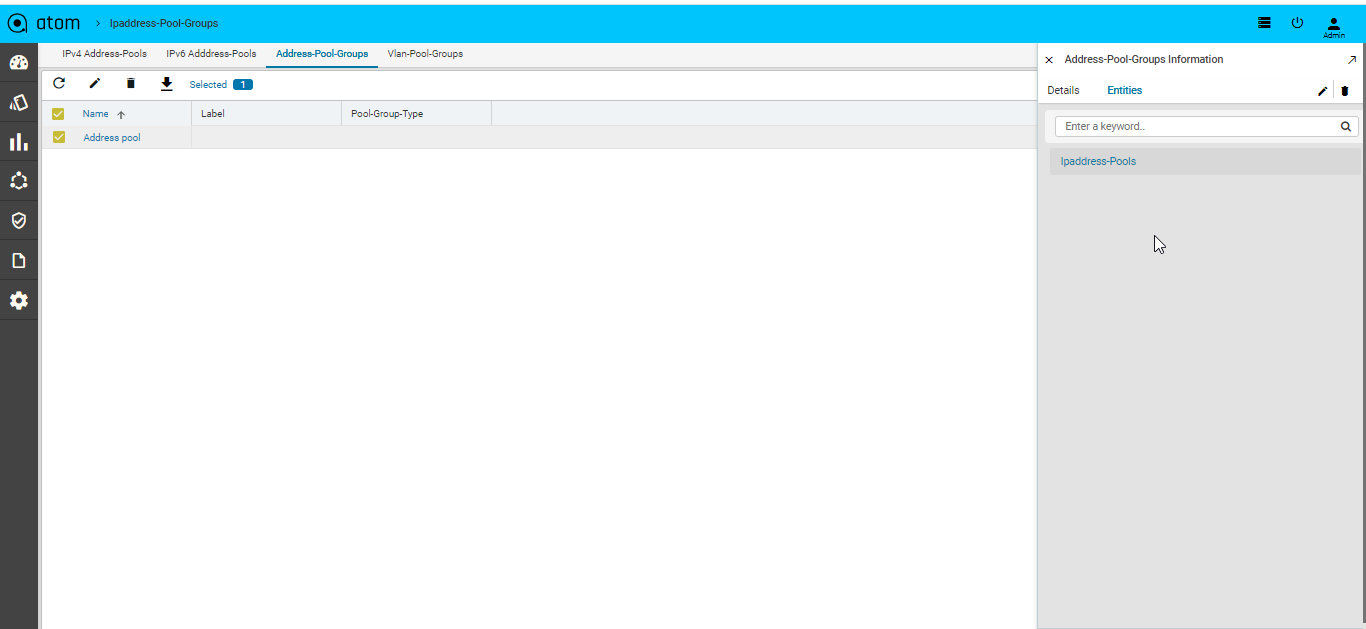

Address-pool-Groups

VLAN Groups

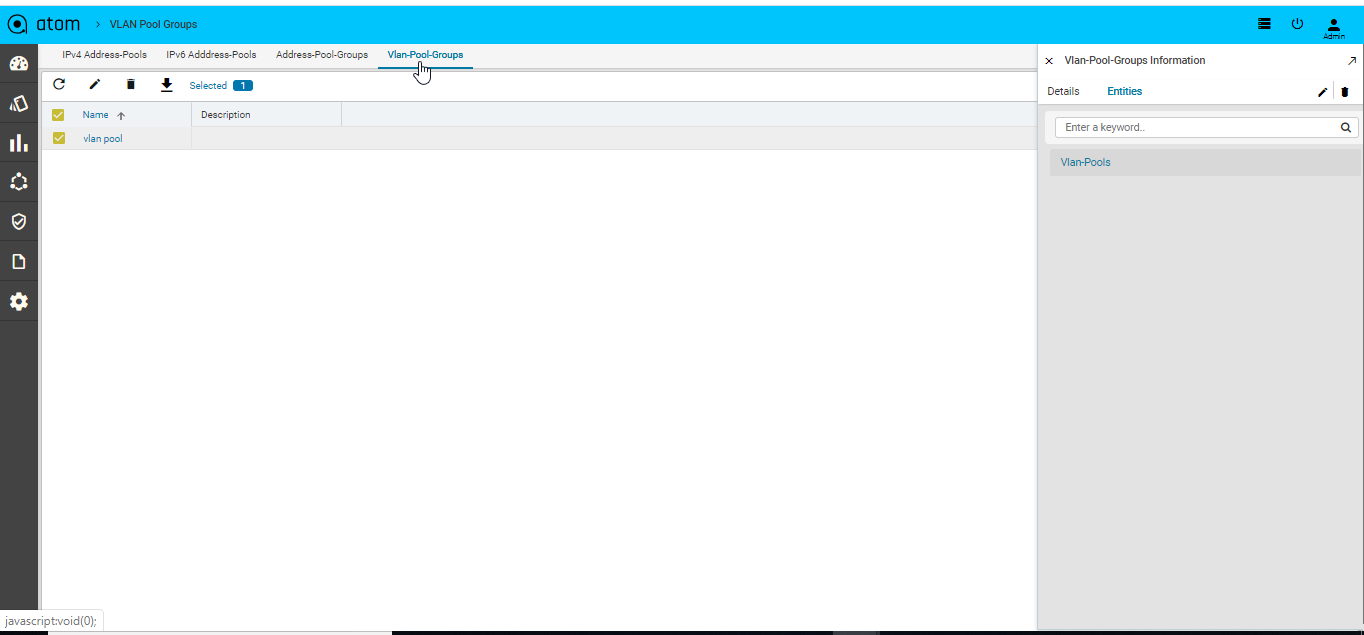

You can define VLAN groups and VLAN pools as resource boundaries for a tenant, so that these VLAN pools can be used during service instantiation on a resource pool.

Adding VLAN Groups

- Navigate to Resources > IPAM > VLAN Groups

- In the right pane, click Add VLAN Pool Group

- In the Create VLAN Pool Group screen, enter values in the following fields:

- Name: Enter a name for VLAN Group

- Description: Enter a description for the VLAN group

- Click Actions > vlan pools > vlan pool to create VLAN pools in the VLAN group:

- Enter values in the following fields:

- Start VLAN: Enter a number from the valid VLAN range (1-4094).

- End VLAN: Enter a number from the valid VLAN range (1-4094).

- Click Add to add the required resource pools to the VLAN Pools

- Click the VLAN pool > Actions to add allocated VLANs.
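A VLAN pool is a bounded range of VLAN IDs from which IDs are handed out as services are instantiated. The sketch below models that idea. It assumes the pool hands out the lowest free ID first; the class `VlanPool` and that allocation order are hypothetical and not a description of ATOM's allocator. The 1-4094 bound is the usable IEEE 802.1Q VLAN ID range.

```python
VLAN_MIN, VLAN_MAX = 1, 4094  # usable IEEE 802.1Q VLAN IDs (0 and 4095 are reserved)


class VlanPool:
    """Hypothetical model of a VLAN pool: a Start/End VLAN range plus allocated IDs."""

    def __init__(self, start_vlan: int, end_vlan: int) -> None:
        if not VLAN_MIN <= start_vlan <= end_vlan <= VLAN_MAX:
            raise ValueError(f"invalid VLAN range {start_vlan}-{end_vlan}")
        self.start_vlan = start_vlan
        self.end_vlan = end_vlan
        self.allocated: set[int] = set()

    def allocate(self) -> int:
        """Return the lowest free VLAN ID in the pool and mark it allocated (assumed order)."""
        for vlan in range(self.start_vlan, self.end_vlan + 1):
            if vlan not in self.allocated:
                self.allocated.add(vlan)
                return vlan
        raise RuntimeError("VLAN pool exhausted")


pool = VlanPool(100, 102)
print([pool.allocate() for _ in range(3)])  # [100, 101, 102]
```

A fourth `allocate()` call on this pool raises `RuntimeError`, which is the point at which a larger pool or an additional pool in the VLAN group would be needed.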

Configuration Compliance

The Configuration Compliance feature allows users to define and enforce configuration compliance standards.

For more details and use cases, refer to the “ATOM CLI Compliance Guide” and the “ATOM Yang Compliance Guide”.

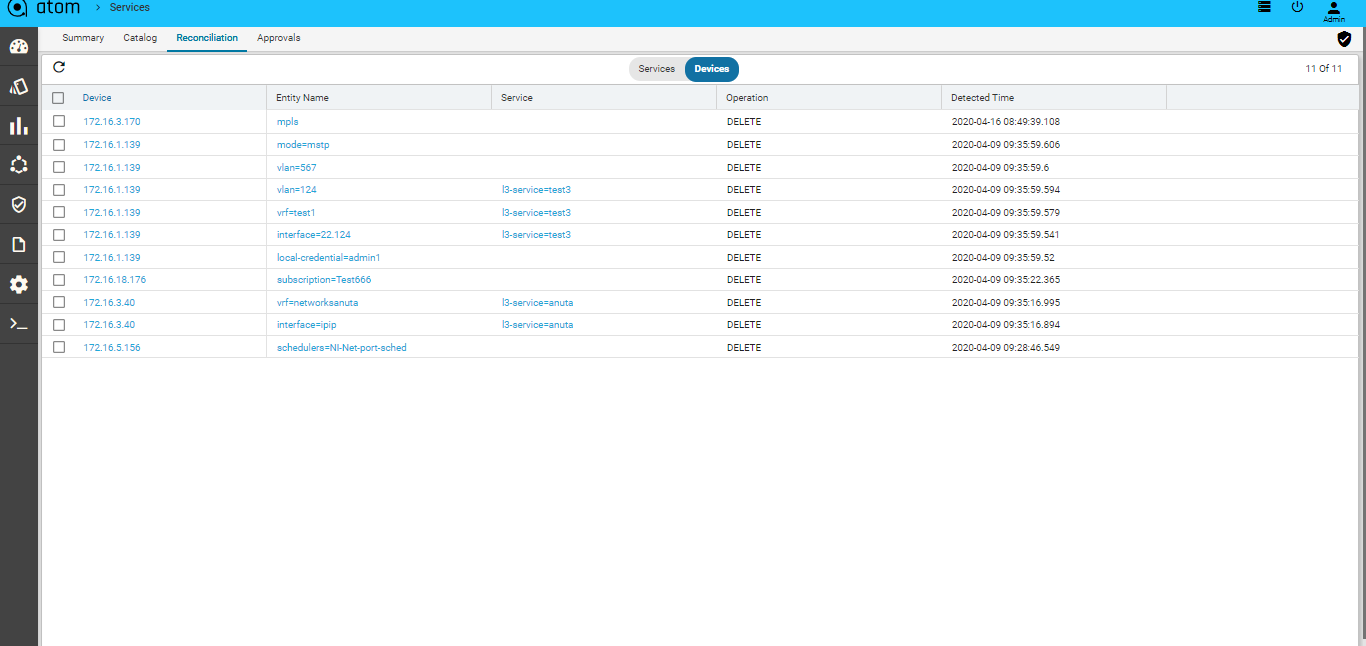

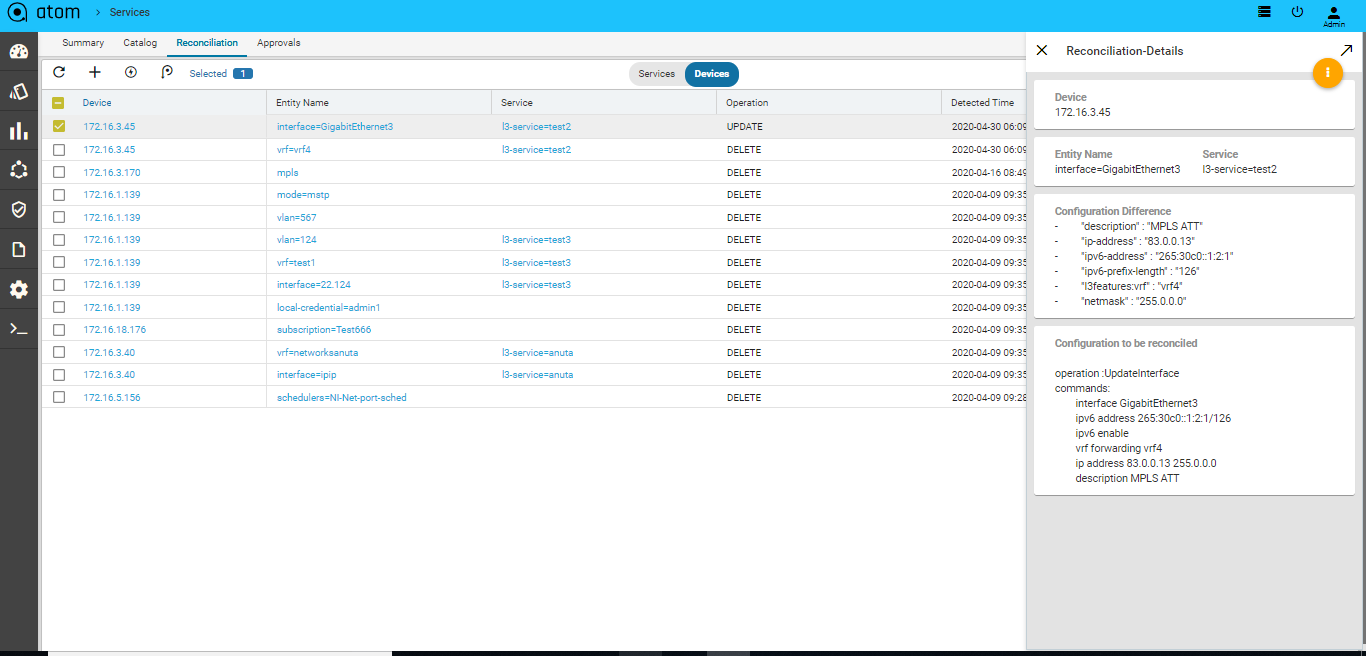

Configuration Drift (Network Services)

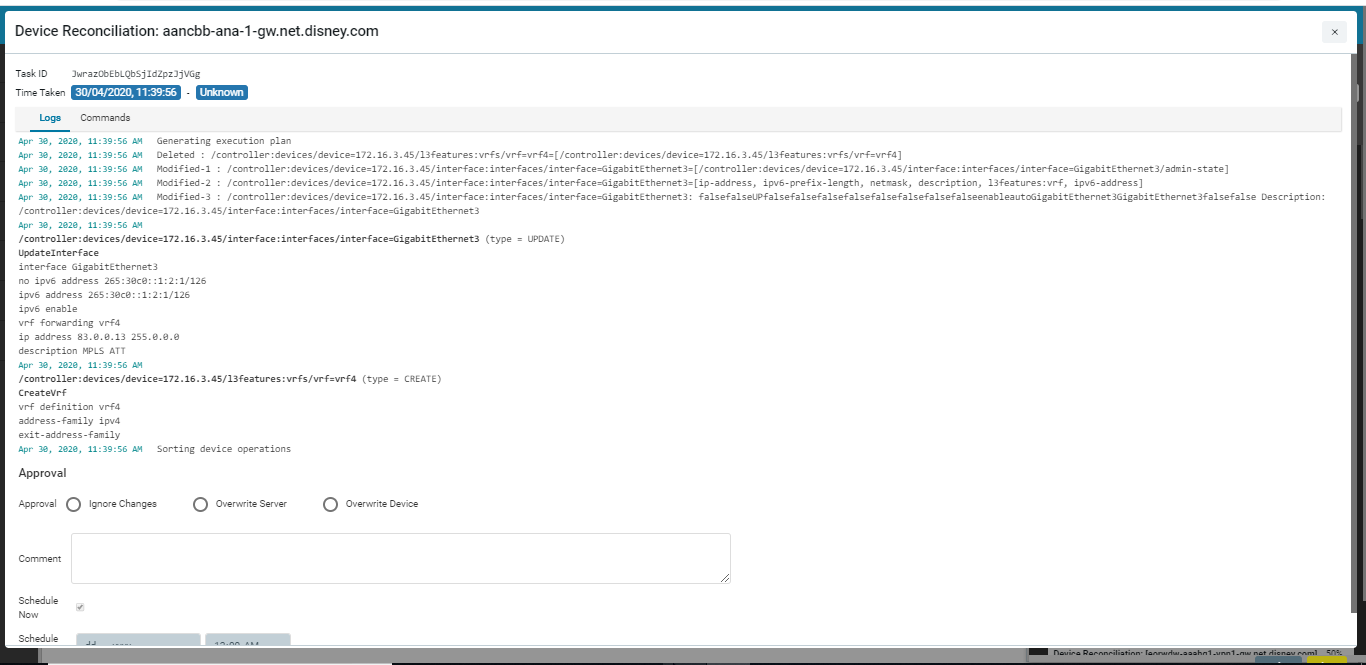

Whenever a configuration change on the device does not match the configuration generated by ATOM, a reconciliation task is generated in ATOM. After viewing the generated config diff, the administrator can decide how to reconcile these differences so that the device and ATOM are always in sync with respect to the configuration state.

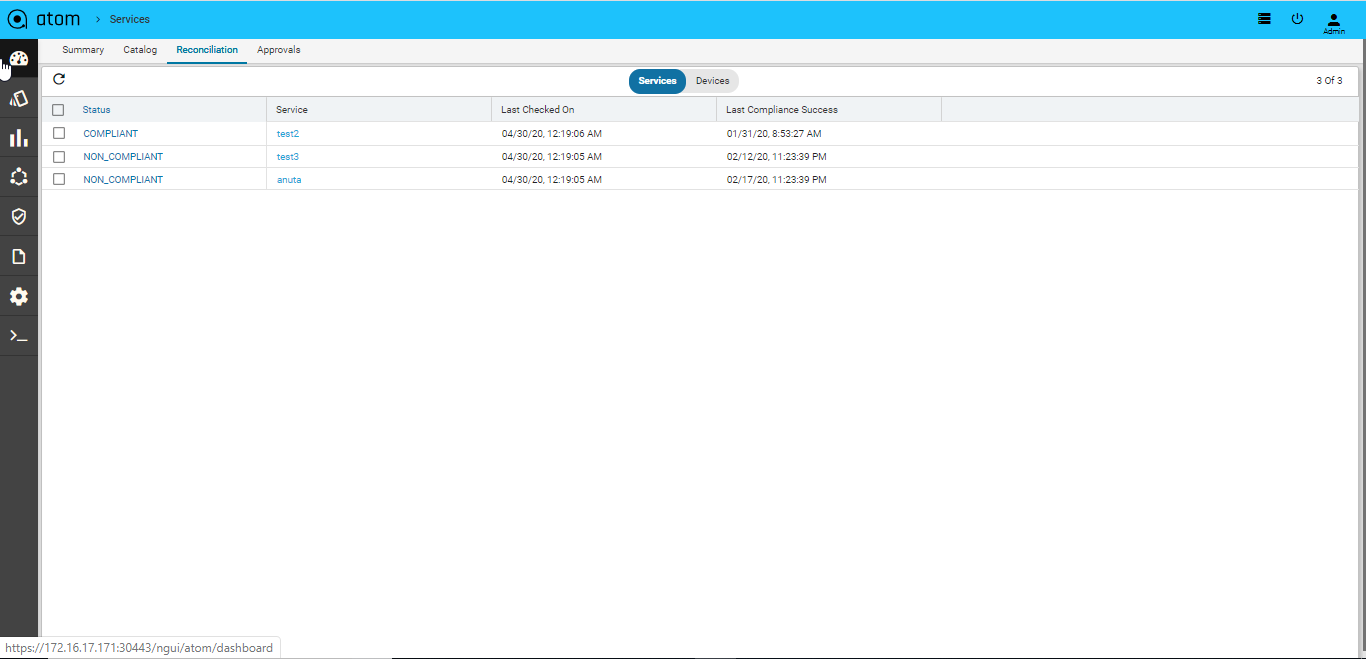

- Navigate to Automation > Services > Reconciliation in the left pane

- In the right pane, click Reconciliation > Entities to view all the reconciliation entities that are generated at the device level and service level.

- Double-click the reconciliation entity of your choice to view the reconciliation details:

The configuration difference between ATOM and the device is shown in the left pane, whereas the right pane displays the configurations that should be pushed to the device to reconcile it with the state of ATOM.

- Click the Reconciliation Policy to create the policies for reconciling the config differences either with the state of ATOM or with that of the device.

- OVERWRITE SERVER – The generated config diff is pushed to the database of ATOM to reconcile with the state of the device

- OVERWRITE DEVICE – The generated config diff is pushed to the device to reconcile with the state of ATOM.

- WAIT FOR APPROVAL – Select this option if the generated reconciliation entities require a review by an administrator. The generated config diff is sent to an approver who can take the decision of either pushing the configurations to the device or overwriting the ATOM database.

Setting the Global Policy

The policy configured in this setting will have an impact on all the reconciliation entities generated for all devices.

Setting the Device Policy

You can set granular control over what is done with the config diff generated by ATOM for a specific device or a set of devices. The policy configured at the device level overrides the policy configured at the global level.

For example, if the global policy is set to WAIT FOR APPROVAL but the device-level policy is set to OVERWRITE DEVICE, all the reconciliation entities generated in ATOM for that device are reconciled with the state of ATOM.
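The override rule can be expressed as a simple lookup: use the device's policy when one is set, otherwise fall back to the global policy. The sketch below encodes the example above. The enum `Policy`, the function `effective_policy`, and the device names are hypothetical illustrations, not ATOM APIs.

```python
from enum import Enum


class Policy(Enum):
    OVERWRITE_SERVER = "OVERWRITE SERVER"    # update ATOM's database from the device
    OVERWRITE_DEVICE = "OVERWRITE DEVICE"    # push ATOM's configuration to the device
    WAIT_FOR_APPROVAL = "WAIT FOR APPROVAL"  # hold the diff for an approver


def effective_policy(global_policy: Policy,
                     device_policies: dict[str, Policy],
                     device: str) -> Policy:
    """A device-level policy, when set, overrides the global policy."""
    return device_policies.get(device, global_policy)


global_policy = Policy.WAIT_FOR_APPROVAL
device_policies = {"leaf-1": Policy.OVERWRITE_DEVICE}  # hypothetical device names

print(effective_policy(global_policy, device_policies, "leaf-1").value)  # OVERWRITE DEVICE
print(effective_policy(global_policy, device_policies, "leaf-2").value)  # WAIT FOR APPROVAL
```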

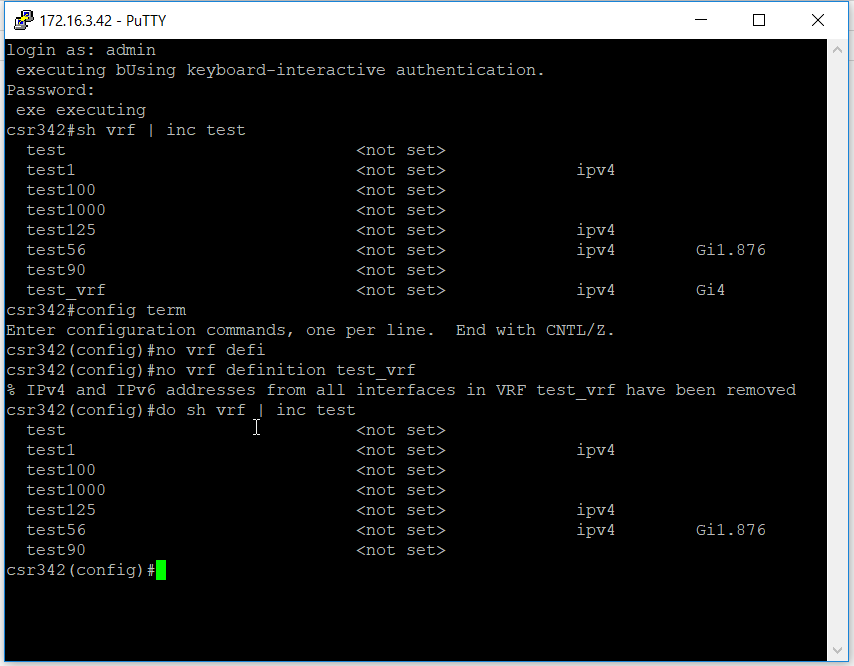

Example

Let us understand how service compliance and reconciliation work by taking the “L3 service” as an example:

- Create a service instance in ATOM

- Log in to the device console and delete the “test VRF” from the device

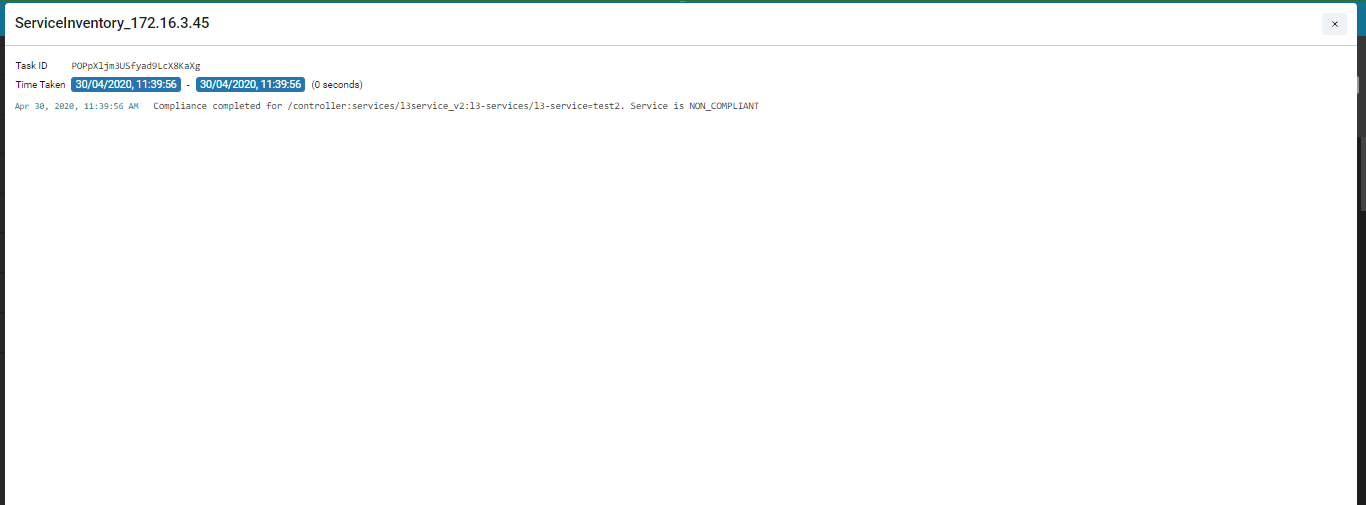

- As there is a config difference between the device and ATOM, a Reconciliation task is triggered in ATOM.

As the config change in the device is related to the created service in ATOM, a Service Inventory task is created.

The service is marked as “Non Compliant” in the “Compliance” dashboard.

You can either resolve the service violation or look at the Reconciliation entities created.

Service Compliance

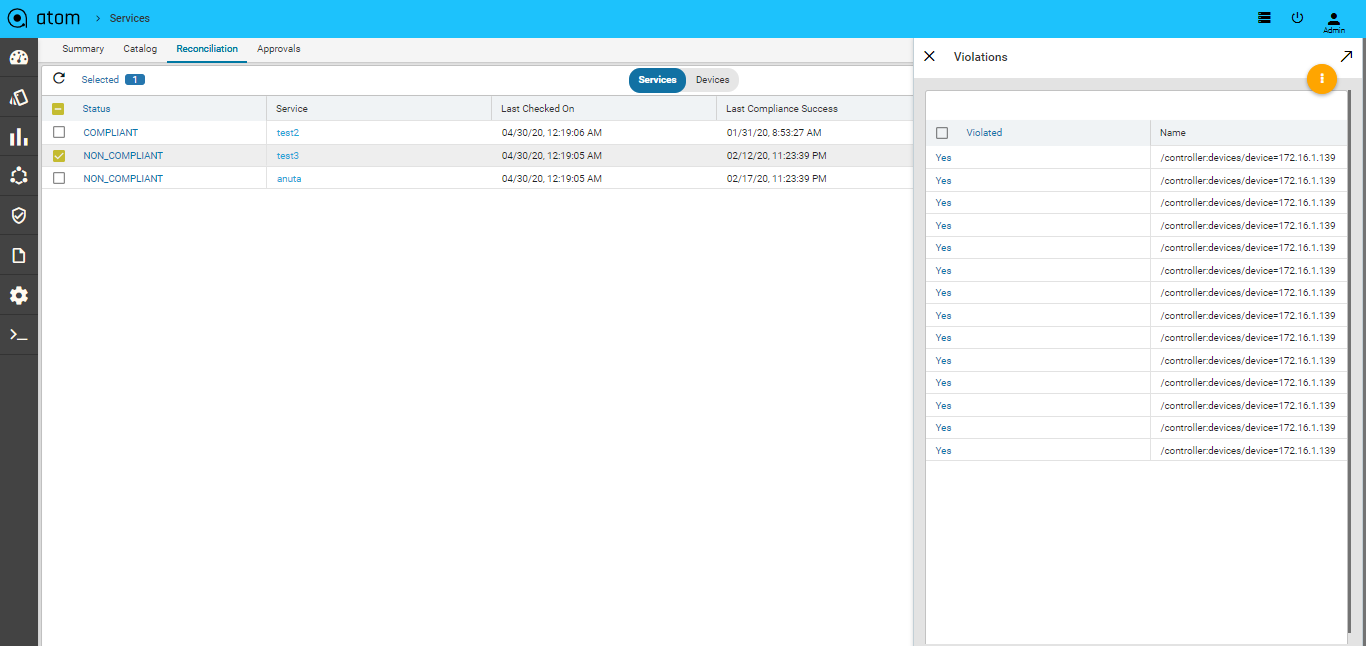

ATOM helps detect configuration deviations in the network at the service level. ATOM detects missing, deleted, or violated configurations of the services that have been instantiated in ATOM and sends a reconciliation report.

When a service is instantiated on a device, all the necessary configurations are generated by ATOM and pushed to the device. After the service is successfully created on the device, ATOM retrieves the running configuration from the device, compares it with the configuration generated for the service, flags any violations, and marks the service as non-compliant.
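At its simplest, the comparison asks which service-generated lines are absent from the device's running configuration. The sketch below shows that idea on flat text lines. It is a deliberate simplification, not ATOM's comparison logic (which the text does not detail): it ignores configuration hierarchy, and the IOS-style lines and the function `find_violations` are hypothetical, chosen to mirror the “test VRF” example above.

```python
def find_violations(expected_lines: list[str], running_config: str) -> list[str]:
    """Return the service-generated lines that are missing from the running config.

    Simplification: lines are compared after stripping whitespace, so
    configuration hierarchy (which parent block a line sits under) is ignored.
    """
    running = {line.strip() for line in running_config.splitlines() if line.strip()}
    return [line for line in expected_lines if line.strip() not in running]


# Hypothetical IOS-style lines generated for the "test" VRF of an L3 service.
service_config = ["vrf definition test", " rd 65000:100"]

# Running configuration after the VRF was deleted from the device console.
running_config = """hostname pe1
interface Loopback0
 ip address 10.0.0.1 255.255.255.255
"""

violations = find_violations(service_config, running_config)
print("compliant" if not violations else f"non-compliant, missing: {violations}")
```

A non-empty result corresponds to a service being flagged as non-compliant; the missing lines are what “Resolve Violations” would need to push back.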

If any service is non-compliant, navigate to Automation > Services > Reconciliation > Services

Click Non Compliant Services to view those services

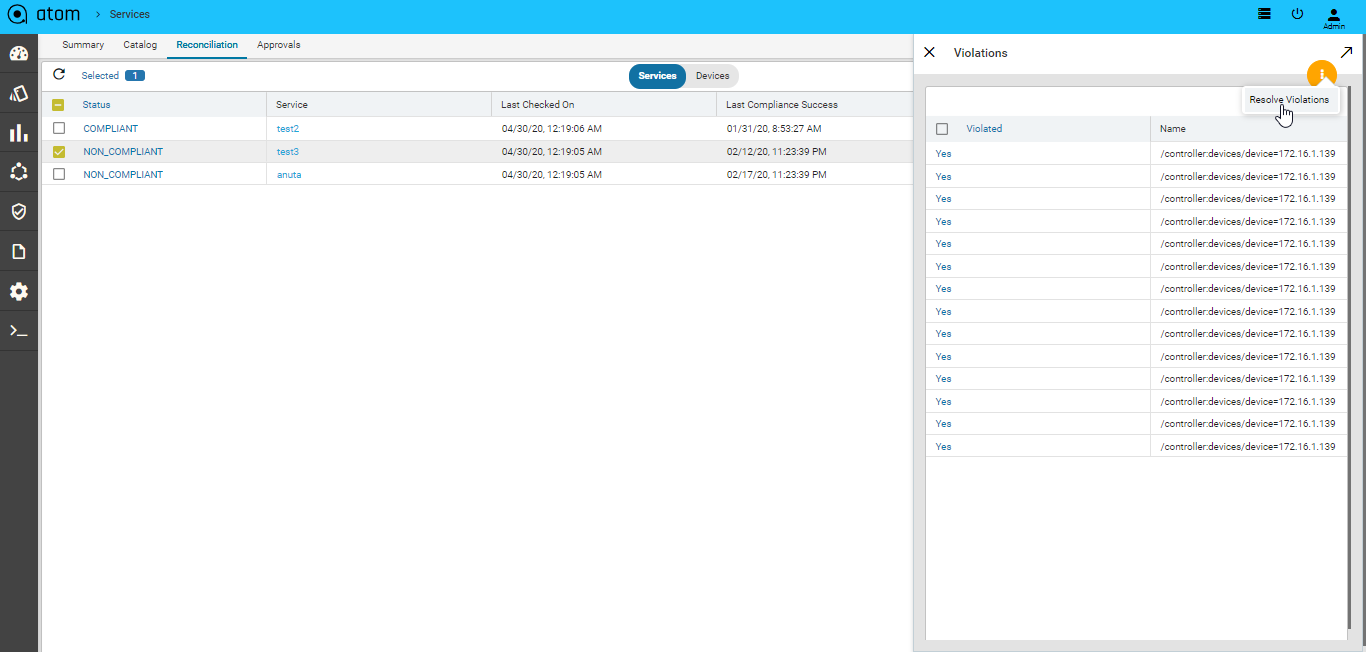

Resolving Service Violations

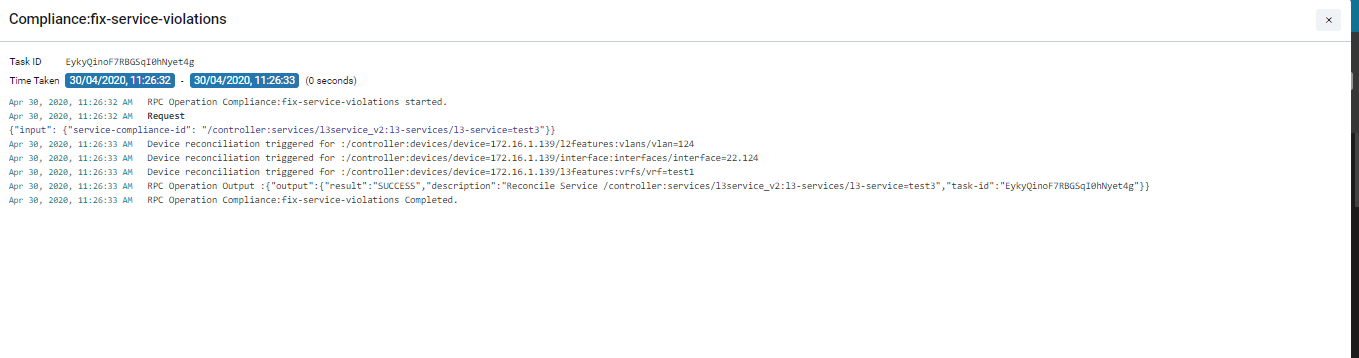

ATOM generates the config diff for the service created through ATOM and pushes the deleted configurations (whether removed intentionally or accidentally) back to the device, enabling the administrator to maintain the same configuration state in both ATOM and the device.

- Select Non Compliant Services > Click Resolve Violations

- Click the Task Viewer and look for the task named “RPC Operation: Compliance:fix-service-violations”

Collections in ATOM

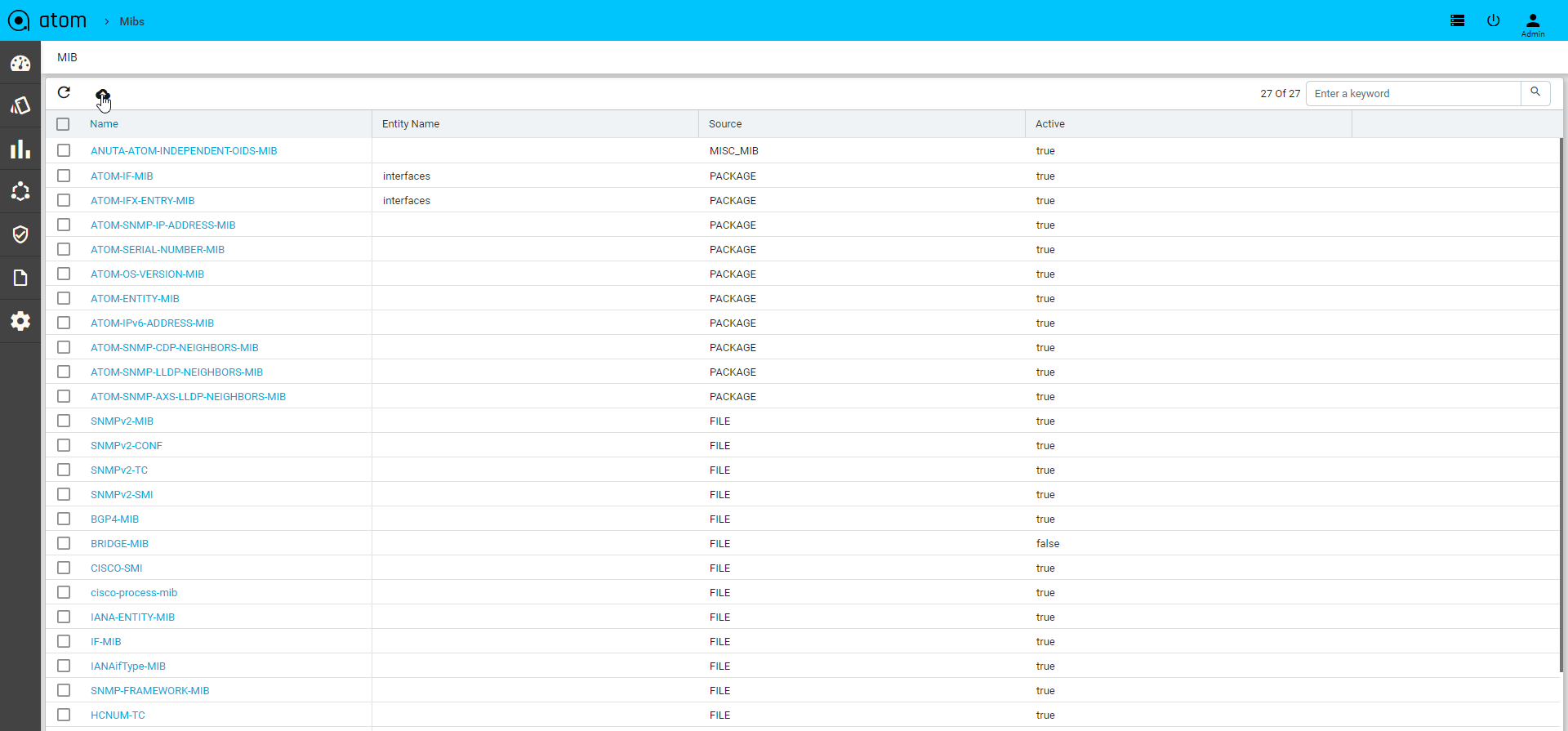

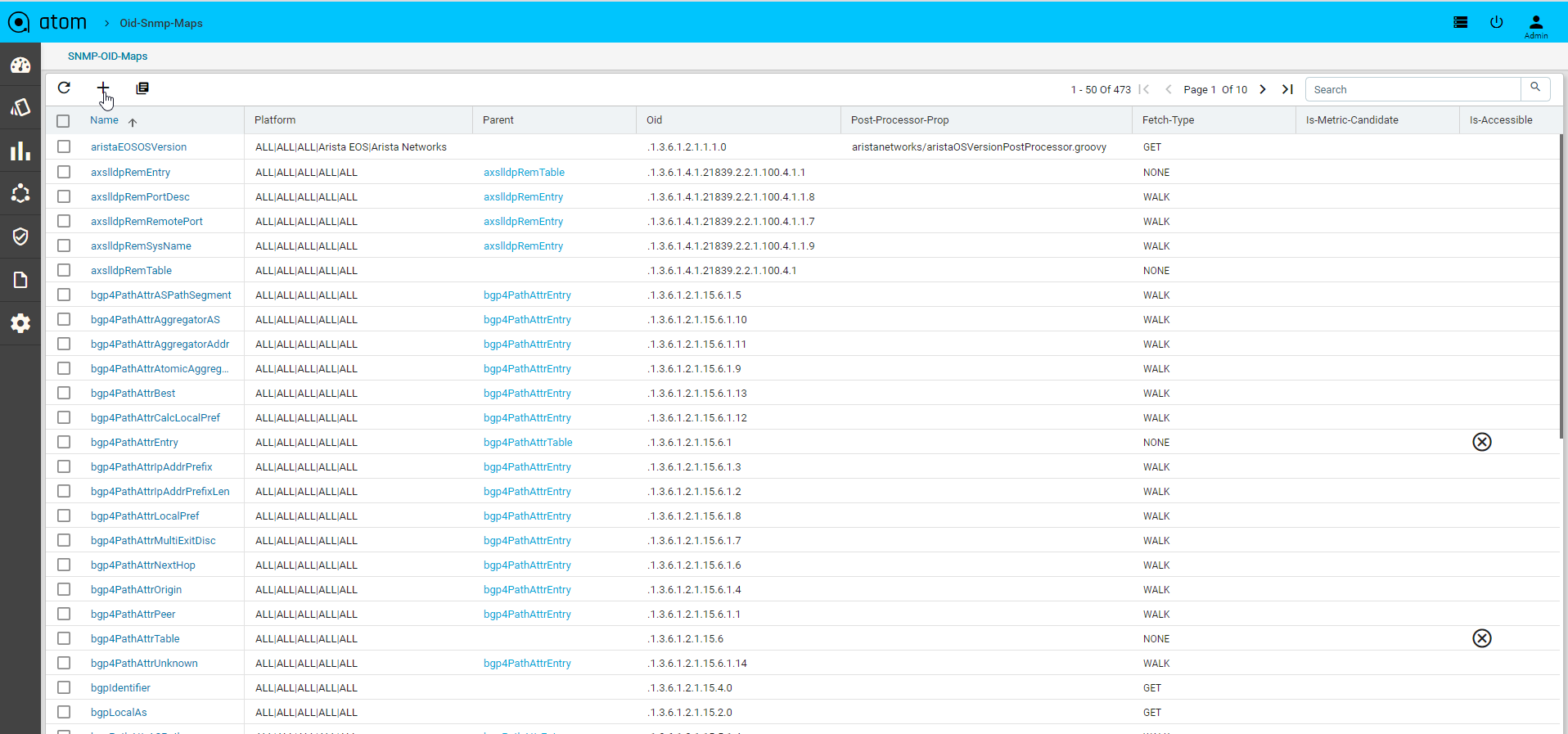

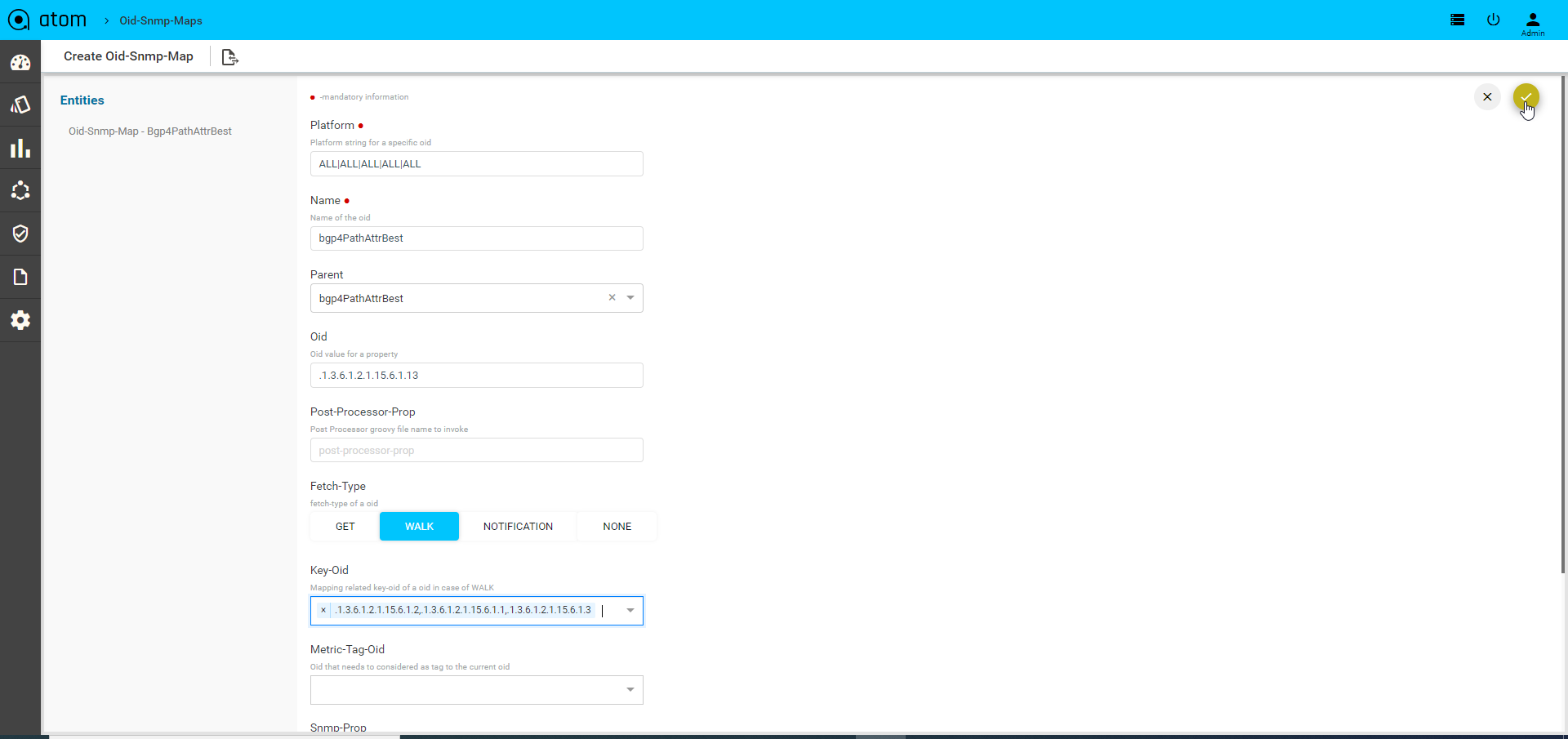

ATOM collects network operational & performance data from multiple data sources such as SNMP, Streaming Telemetry, SNMP Traps and Syslog.

Appropriate data source and data stream can be chosen based on device capabilities, and throughput and latency requirements. Model-Driven Telemetry uses a push model and provides near real-time access to operational & performance statistics.

The collected operational and performance data can be visualized using Grafana (available as part of the ATOM package) or in the ATOM UI (using the built-in reports available in the Report section or under the device view). Users can build additional dashboards customized to their interests. (See the ATOM Platform Guide to learn how to create custom dashboards.)

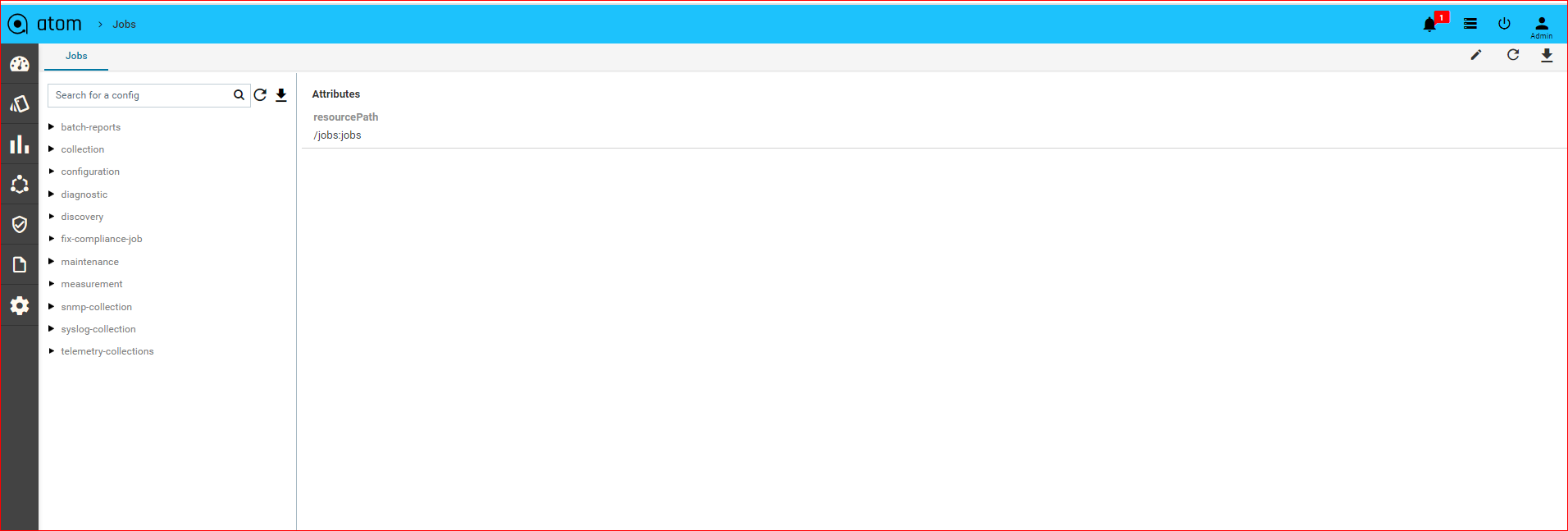

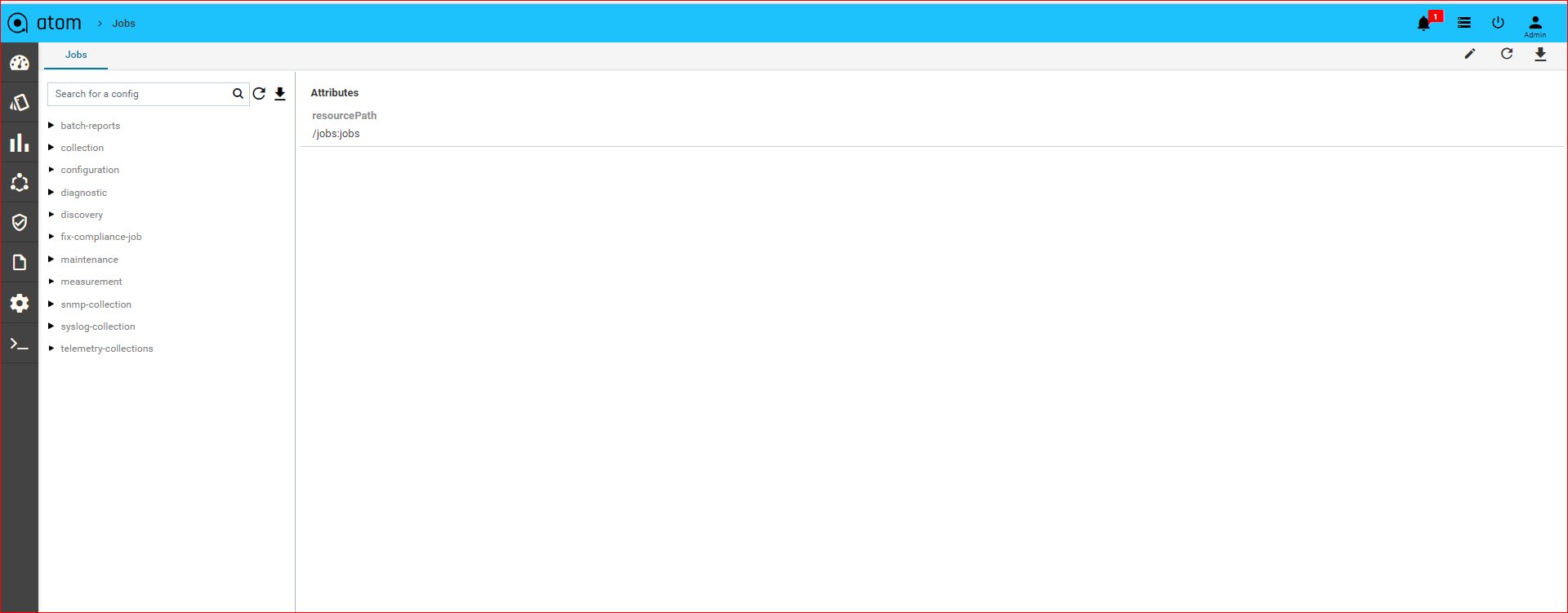

Jobs

A job is a configurable task on a device that is managed by ATOM: a unit of work that can be created, executed, and tracked in ATOM. Depending on the need, the administrator can schedule or manually run various jobs to collect data about the device state.

Jobs are classified into the following types:

- Collection Job

- Configuration Job

- Diagnostics Job

- Discovery Job

- Inventory Job

- Maintenance Job

- Batch-reports Job

- SNMP-collections Job

- Syslog-collection Job

- Telemetry-Collections Job

- Telemetry-measurement Job

Collection Job

ATOM collects or retrieves the status of the device (OFFLINE or ONLINE). By default, this job is scheduled to run every 6 hours. Starting with the 6.0 release, you can model the collection job to collect information about the device using SNMP OIDs. For more information, refer to the section “Modelling of Collection Job” in the “ATOM Platform Guide”.

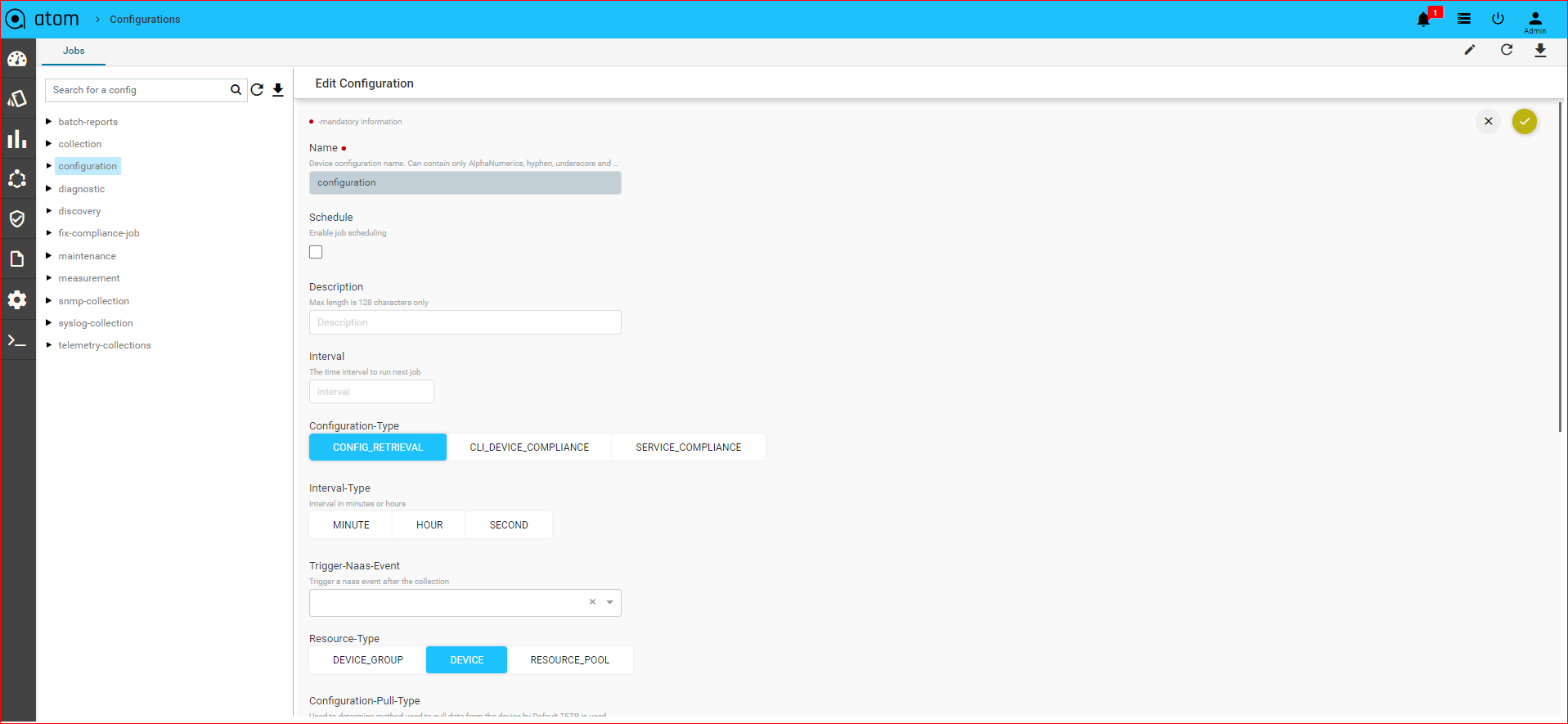

Configuration Job

Configuration job retrieves the running configurations from the device, or is triggered in the event of configuration discrepancies (either at the device or the service level) between the device and ATOM.

Creating a Configuration Job

- Navigate to Monitoring > Jobs > Configuration

- Select the Configuration folder > Add Configuration

- In the Create configuration screen, enter the values in the following fields:

- Name: Enter the name of the Job

- Description: Enter an appropriate description for the Job

- Configuration Type: Select the type of Configuration Job from the menu:

- CONFIG_RETRIEVAL – Retrieves the basic device configuration. This option is the default value.

- DEVICE_COMPLIANCE ‐ The device compliance job is triggered when there is a violation of a policy configured on the device or a set of devices. For information about Device Compliance Policies, refer to “Creating Compliance Templates”.

- SERVICE_COMPLIANCE ‐ The service compliance job is triggered when there is a discrepancy in the service configurations available on ATOM and the device.

By default, the ‘ServiceInventory’ job of type SERVICE_COMPLIANCE is triggered every 5 minutes.

- Resource Type: Select one of the following resource entities where the job should be triggered:

- DEVICE: If the selected resource type is a Device, click Add to enter the IP address of the device for ATOM to communicate with it.

- DEVICE GROUP: If the selected resource type is Device Group, click Add to enter the device group for which the configuration jobs should be triggered.

- RESOURCE POOL: Click Add to select from the available resource pools where the job should be run.

- Config Pull type: If the selected configuration type is Config Retrieval, you can opt for one of the following methods to be used while retrieving the configurations from the device:

- TFTP_EXPORT – Select this option when ATOM should retrieve configs from the TFTP server

- SHOW_COMMAND – Select this option if ATOM should retrieve configs from the running configuration of the device. This is useful in customer environments where the TFTP port is disabled.

- Parse Config: Select this option if the parsing of the configurations should be enabled on the device/devices after the successful run of the config retrieval job.

| Note: Use this option if you want to override the value set in the global parameters of ATOM. By default, config parsing is enabled at the global level for all devices. However, using this option you can disable config parsing at the device level. |

- Schedule: Select the checkbox and schedule the job to be run at intervals

- Interval: Enter the number of time units between consecutive runs of the job

- Interval Type: Select the units of time when the job should be scheduled (HOUR or MINUTE)
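Interval and Interval Type together define the spacing between runs. The sketch below computes the resulting run times, assuming runs are evenly spaced from a given first run time; how ATOM chooses the first run time is not described here, and the helper `next_runs` is hypothetical.

```python
from datetime import datetime, timedelta

# Units offered by the Interval Type field.
UNIT = {"MINUTE": timedelta(minutes=1), "HOUR": timedelta(hours=1)}


def next_runs(first_run: datetime, interval: int, interval_type: str,
              count: int) -> list[datetime]:
    """Return the first `count` run times for a job repeating every `interval` units."""
    if interval <= 0:
        raise ValueError("interval must be a positive number")
    step = interval * UNIT[interval_type]
    return [first_run + i * step for i in range(count)]


# Interval 6, Interval Type HOUR: the same cadence as the default Collection Job.
for run in next_runs(datetime(2024, 1, 1, 0, 0), 6, "HOUR", 3):
    print(run.isoformat())
# 2024-01-01T00:00:00
# 2024-01-01T06:00:00
# 2024-01-01T12:00:00
```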

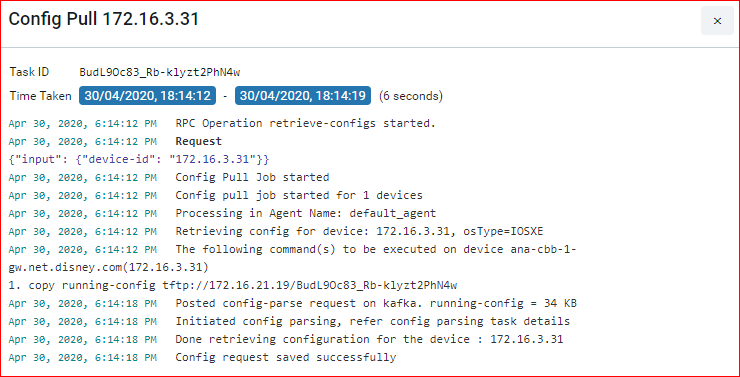

Click the Task viewer to check for the status of the executed job.

Case 1: A successful run of the Config Retrieval job with ‘TFTP Export’ enabled fetches the following details from the device:

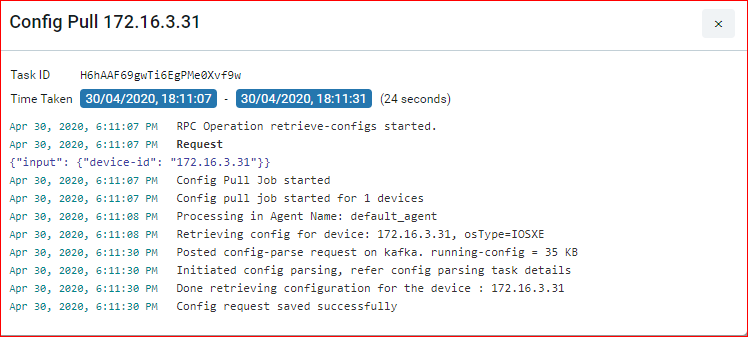

Case 2: A successful run of the Config Retrieval job using ‘show run config’ (SHOW_COMMAND) is shown below:

Diagnostics Job

The diagnostics job collects CPU utilization, memory utilization, and interface performance data that is used to provision the service. By creating a Diagnostics Job, you can test basic Telnet or SNMP connectivity to the device and also perform module-level diagnostics.

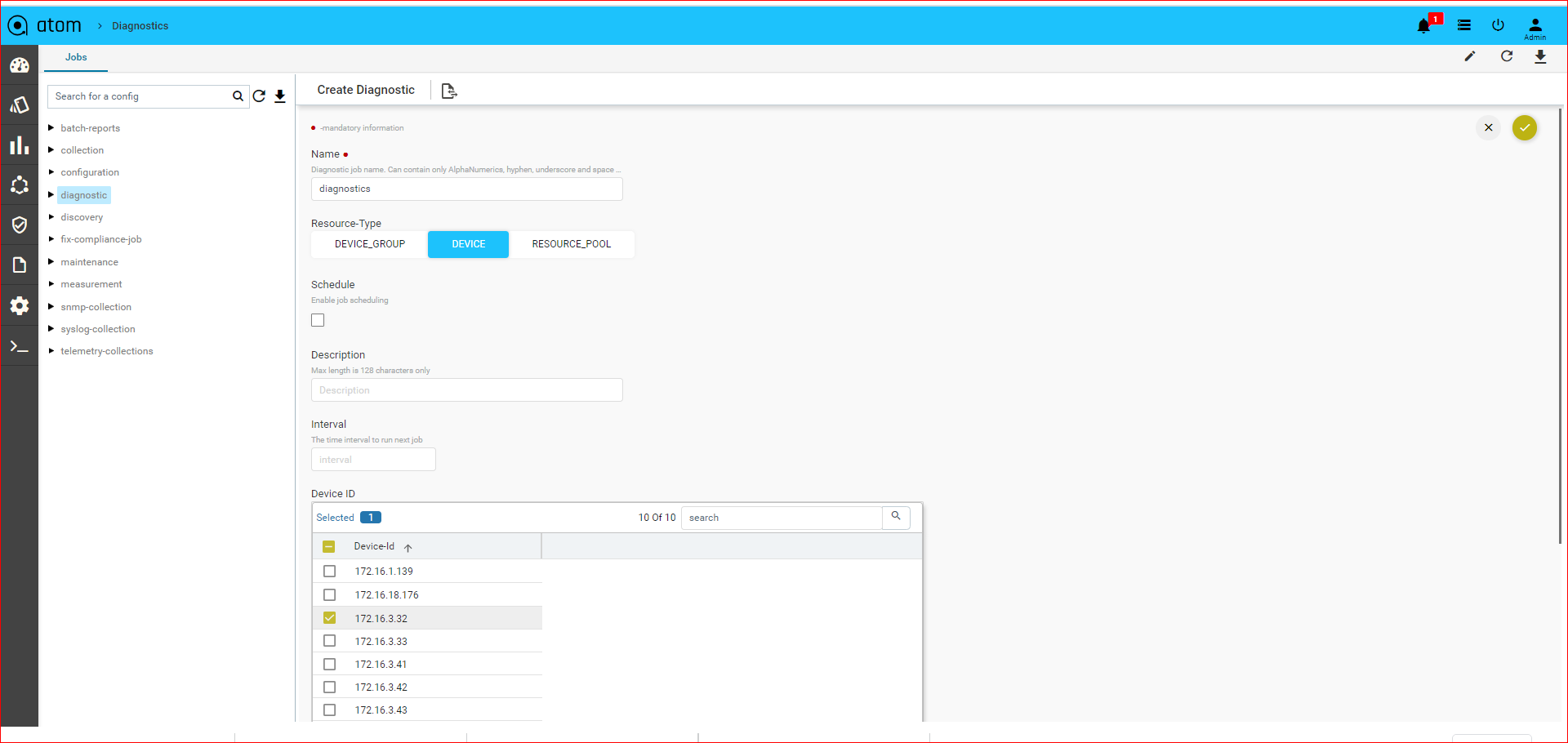

Creating a Diagnostics Job

- Navigate to Monitoring > Jobs > Diagnostic

- Select Diagnostic and click Actions > Add Job

- In the Add Diagnostic screen, enter values in the following fields:

- Name: Enter a name not exceeding 64 characters

- Description: Type an appropriate description for the job.

- Resource Type: Select one of the entities where the job should be run

- Device: Enter the IP address of the device

- Device Group: Select a device group from the drop‐down list.

- Resource Pool: Select the resource pool from the available resource pools in ATOM.

- Schedule: Select this option to run the job in specific intervals of time

- Interval: Enter a number representing a span of time.

- Interval Type: Select the units of time (minute or hour)

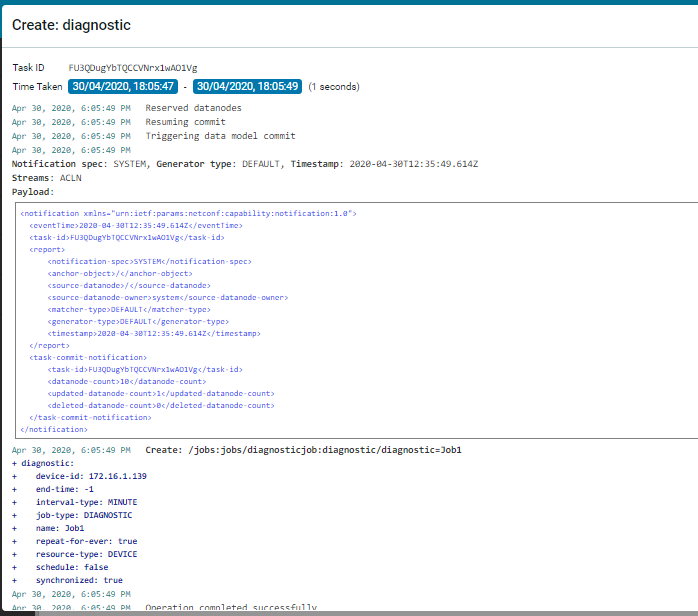

Click the Task Viewer pane and search for the Diagnostics job. A successful run of the Diagnostic Job displays the following information in the task details:

Discovery Job

A discovery job is used to discover the devices that fall within a range of IP addresses.

The first step in provisioning a network is discovering the devices in the network. ATOM discovers the devices in the pod using either CDP or LLDP. Based on this discovery, ATOM automatically draws a network topology diagram.

If only SNMP is enabled, the topology diagram cannot be drawn, because SNMP only performs a sweep, which does not methodically discover the device hierarchy. Therefore, ensure that either CDP or LLDP is enabled on all the devices managed by ATOM.

A SEED device is the starting point from which ATOM discovers the network and its peer or neighbor devices. The SEED discovery type should be selected when devices in a smaller range are required in the topology. This method of discovery is quicker, but fewer devices are discovered.

If the selected discovery type is SWEEP, the devices within a range of IP addresses are discovered.

Creating a Discovery Job

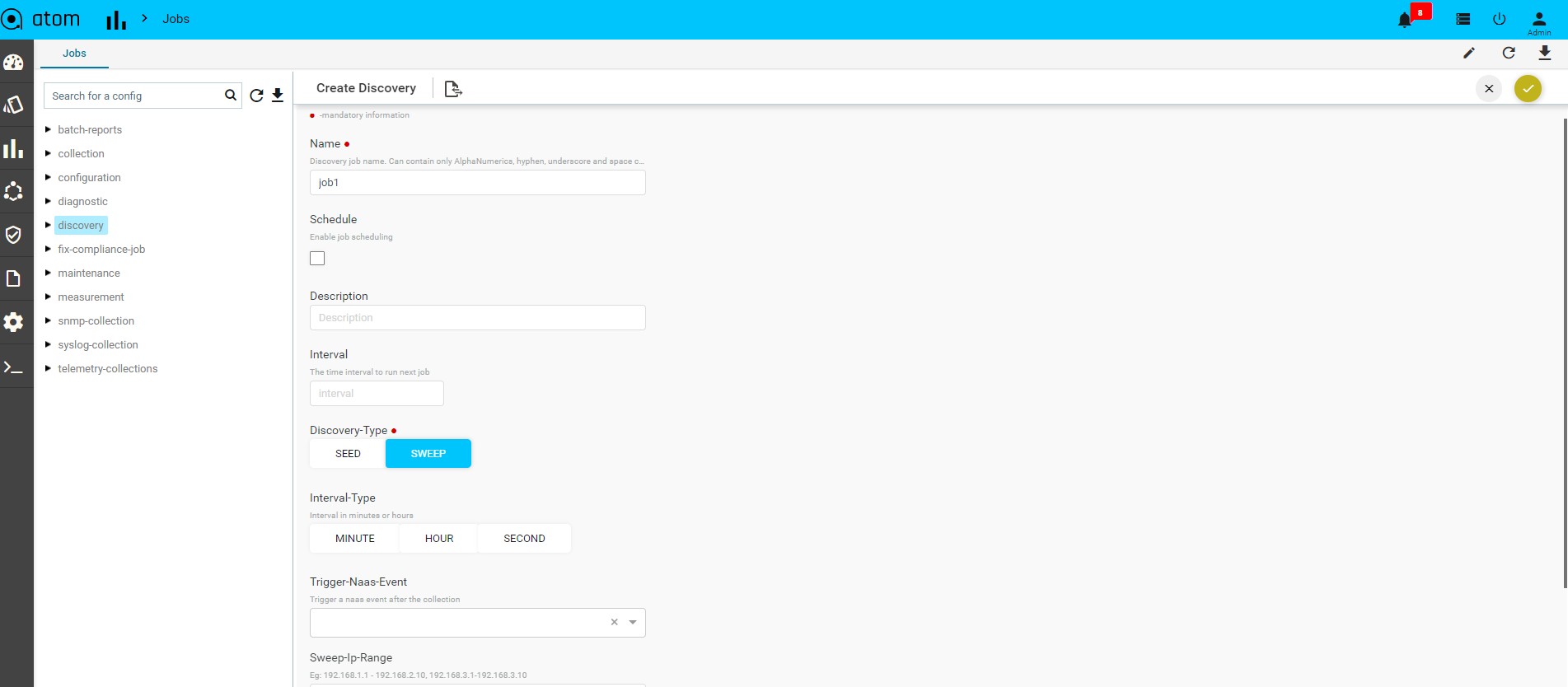

- Navigate to Monitoring > Jobs > Discovery > Add Discovery

- In the Create Discovery screen, enter values for the mandatory fields:

- Name: Enter an alphanumeric string to identify the created discovery job

- Description: Enter some text that describes the Job

- Discovery Type: Select the discovery type used for discovering devices:

- SEED – By default, the discovery type is SEED.

- SWEEP – Change the value to SWEEP, if you want more devices to be discovered.

- Seed Type: Select the type of the seed protocol, either CDP or LLDP. In case the Discovery type is selected as SWEEP, enter the SWEEP IP range in the field.

| NOTE: This IP range should be the same or a subset of the range of IP addresses defined in the Credential Map. IP addresses can be expressed in CIDR notation as well. |
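The note's subset rule can be checked before a SWEEP job is created. The sketch below uses Python's standard `ipaddress` module and assumes both the sweep range and the Credential Map ranges are written in CIDR notation. It treats each Credential Map range on its own, so a sweep that spans two adjacent Credential Map ranges is reported as not covered. The function name and the sample range are hypothetical.

```python
import ipaddress


def sweep_range_is_covered(sweep_cidr: str, credential_map_cidrs: list[str]) -> bool:
    """True if the sweep range equals, or is a subset of, one Credential Map range."""
    sweep = ipaddress.ip_network(sweep_cidr)
    for cidr in credential_map_cidrs:
        allowed = ipaddress.ip_network(cidr)
        # subnet_of() raises TypeError for mixed IPv4/IPv6, so compare versions first.
        if sweep.version == allowed.version and sweep.subnet_of(allowed):
            return True
    return False


credential_map = ["10.1.0.0/16"]  # hypothetical Credential Map range
print(sweep_range_is_covered("10.1.1.0/25", credential_map))  # True
print(sweep_range_is_covered("10.2.0.0/24", credential_map))  # False
```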

- Hop Count: Enter the number of hops (devices) that ATOM should discover from the seed device while using CDP.

- Seed IP Address: Enter the IP Address of the seed device from which the discovery of the neighbouring devices should be initiated.

- Auto manage: Select this option to add the discovered devices to ATOM automatically. If this option is selected, a Managed Task is generated after the successful run of the discovery job.

- Schedule: Select this option if this job should be scheduled at prescribed time intervals.

- Interval: Enter the number of time units between consecutive runs of the job

- Interval Type: Select the units of time (HOUR or MINUTE)

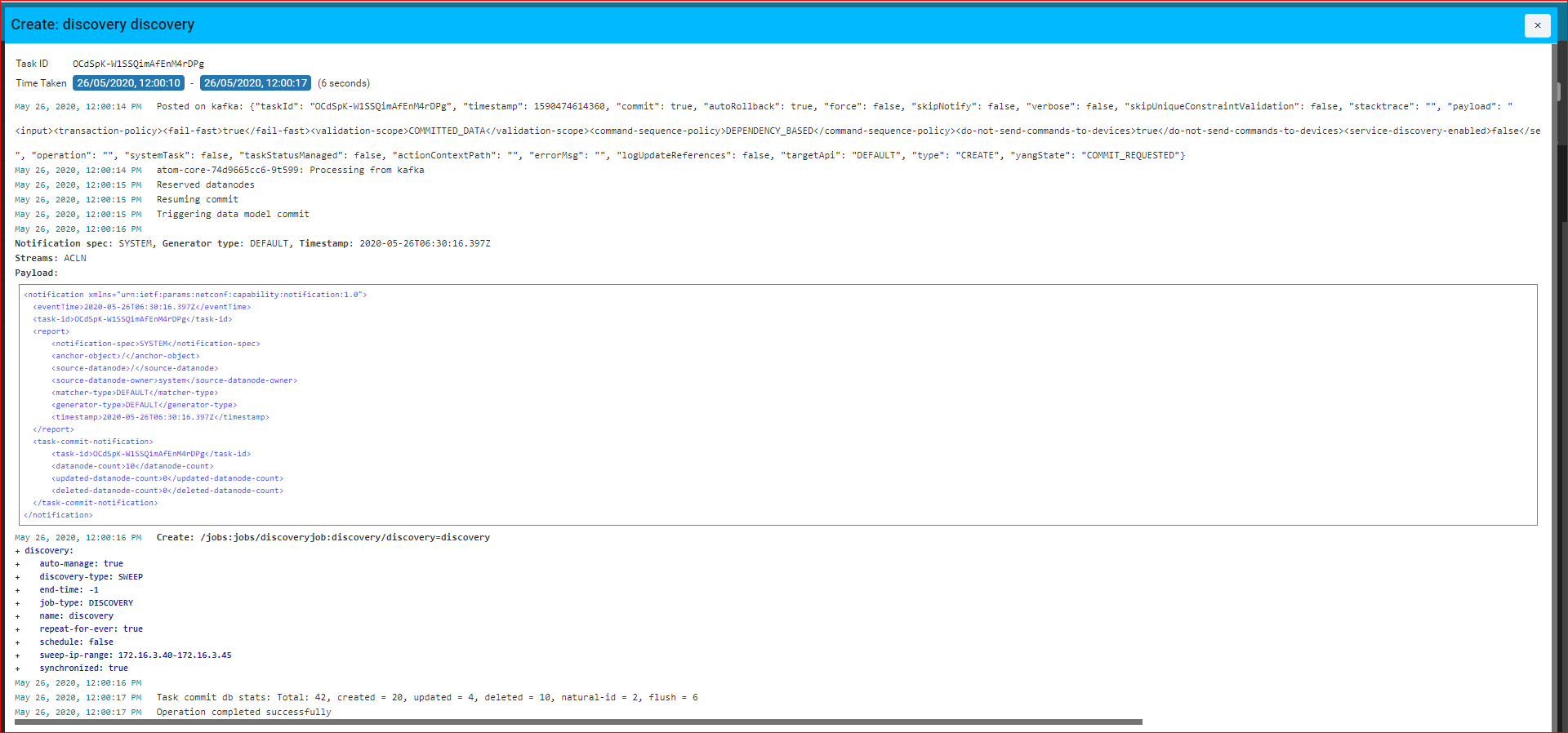

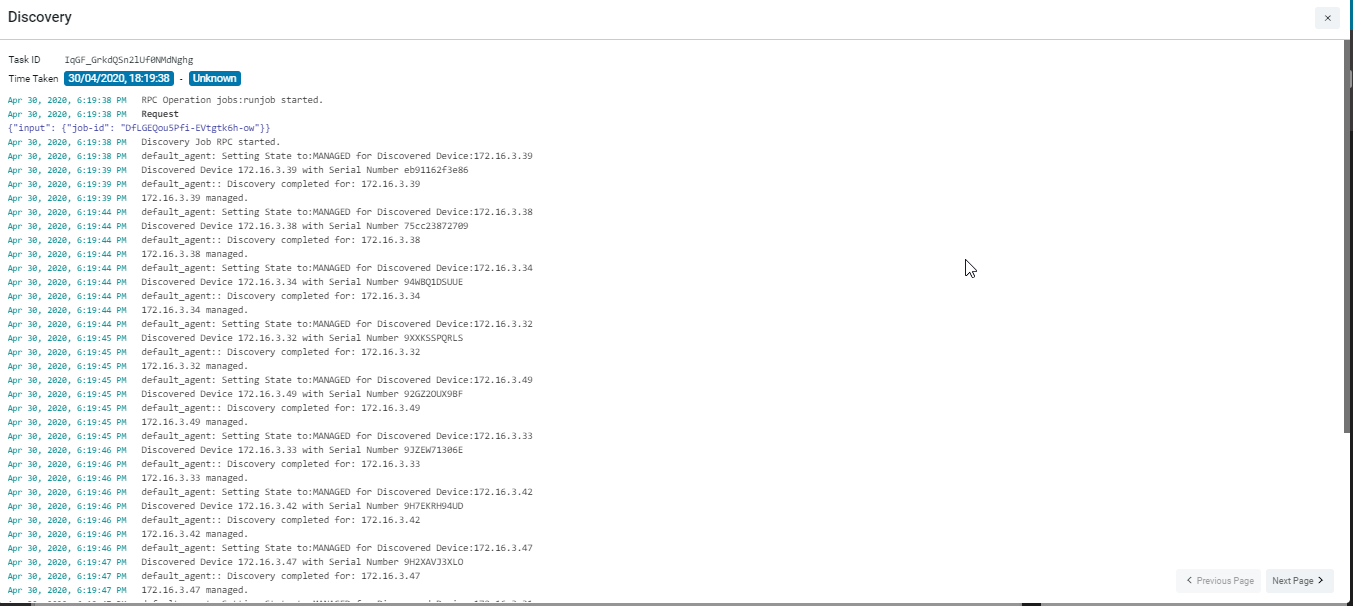

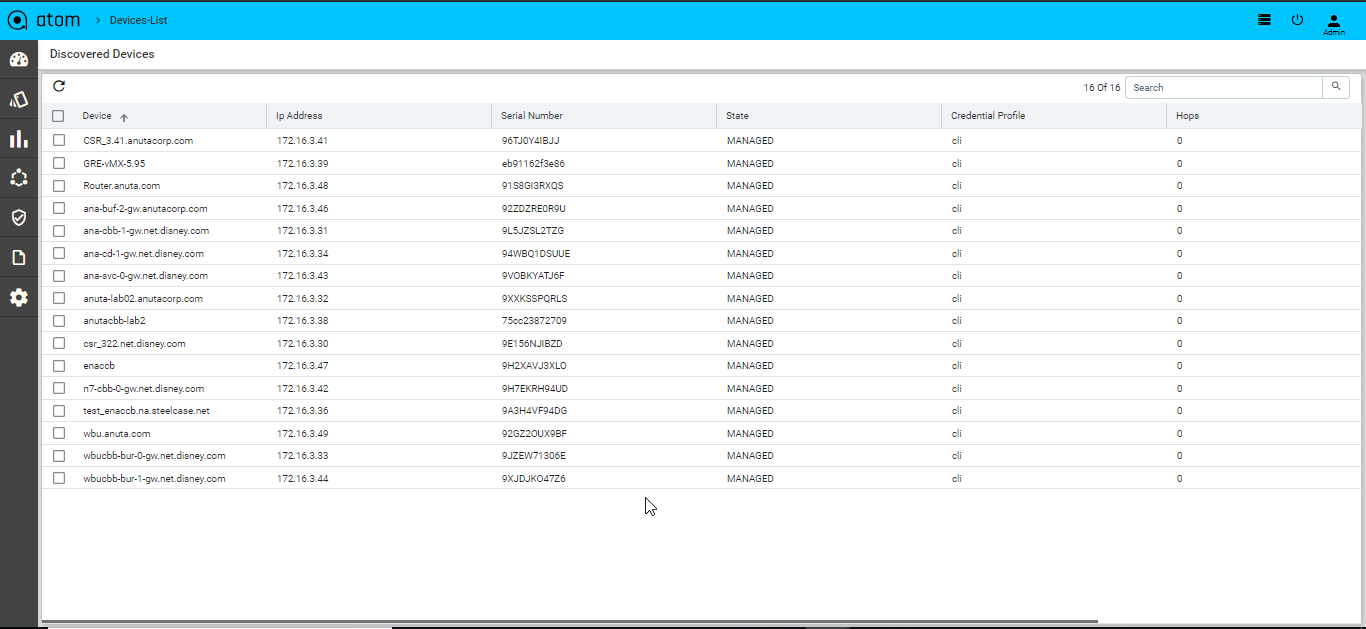

A successful run of the Discovery Job with the SWEEP protocol is shown below:

Details of the Executed Job along with the devices that are discovered by ATOM in this job are displayed as follows:

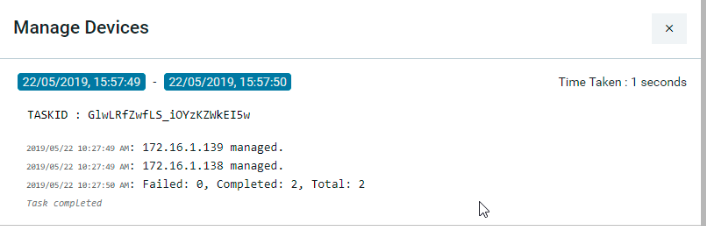

Managed Task

This task is triggered in ATOM after the successful run of the discovery job. All the discovered devices are added to the device table maintained in the ATOM inventory and are marked as “Managed” devices.

Inventory Job

Inventory job is used for detecting and adding device Interfaces, interface capabilities, and interface addresses.

Extended Inventory: Retrieves lost network connections, establishes new network connections between the devices, and retrieves the configurations from the device. By default, this job is scheduled to run every 12 hours.

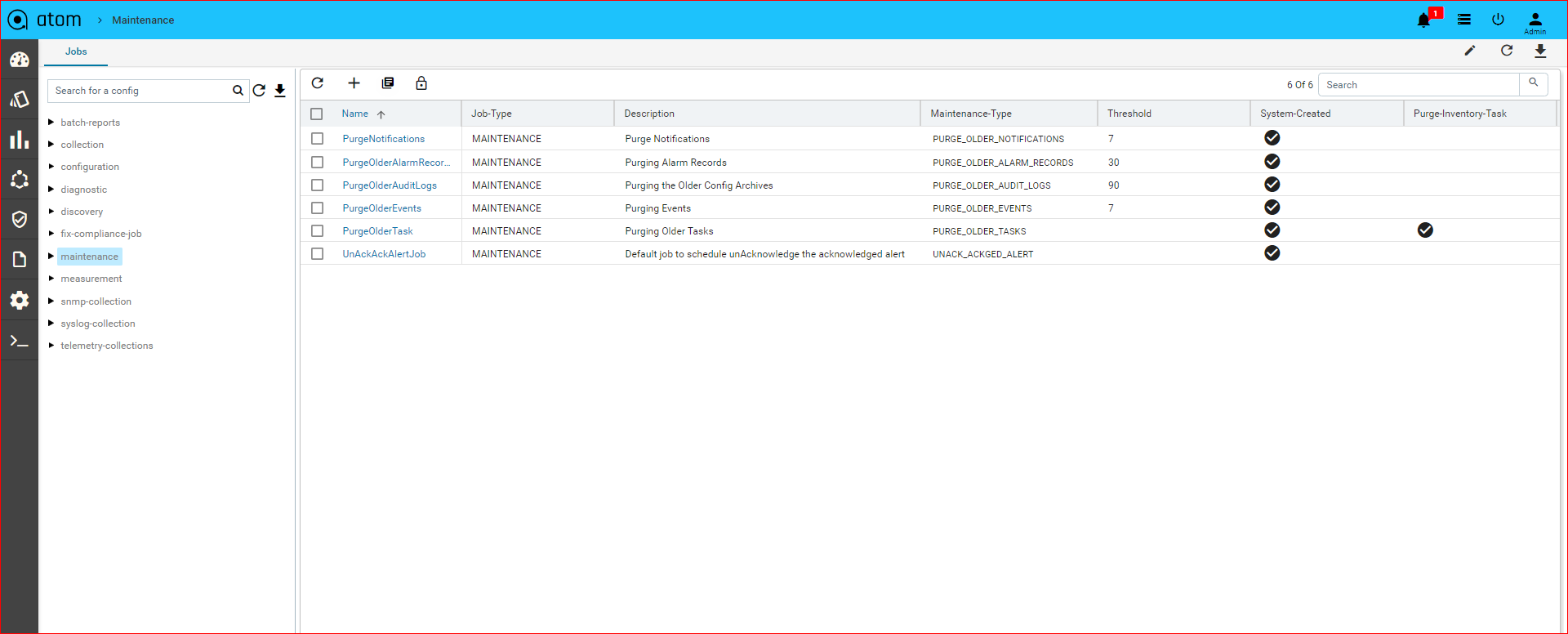

Maintenance Job

You can configure maintenance jobs to remove unwanted records of tasks or alarms in ATOM. Maintenance jobs can be run on a one-time basis or scheduled to run periodically.

Purge Older Alarm Records

You can remove unwanted, older records of the Alarms generated in ATOM.

Creating a Purge Older Alarm Records Job

1. Navigate to Resource Manager > Jobs

2. In the left pane, navigate to the MAINTENANCE folder

3. Click the MAINTENANCE folder > Actions > Add Job

4. In the Create MAINTENANCE screen, enter the values for each field described below:

- Maintenance Job Name: Enter a name for the maintenance job to be created.

- Description: Enter a suitable description for the job

- Maintenance Type: Select PURGE_OLDER_ALARM_RECORDS as the type to create a job that removes old alarms from ATOM

- Threshold (in days): Enter the number of days of history to retain. All records older than this number of days will be deleted.

- Schedule: In order to schedule the job to run periodically at specified intervals of time, select the Schedule option.

- Interval: Enter the number for the interval

- Interval Type: Select either Hour or Minute as units of time.

Example:

If 30, 24, and HOUR are entered as values in the fields Threshold, Interval, and Interval Type respectively, a maintenance job is executed every 24 hours that removes all alarm records older than 30 days. That is, each run deletes every alarm record created more than 30 days before the run.
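The Threshold field translates into a cutoff time: each run deletes records created before the run time minus the threshold. The sketch below shows that arithmetic, assuming records are aged by creation timestamp with a strict “older than” comparison. The record IDs and the helper `records_to_purge` are hypothetical, and the same logic applies to task records.

```python
from datetime import datetime, timedelta


def records_to_purge(created_at: dict[str, datetime], now: datetime,
                     threshold_days: int) -> list[str]:
    """Return the IDs of records created before now - threshold_days."""
    cutoff = now - timedelta(days=threshold_days)
    return [record_id for record_id, created in created_at.items() if created < cutoff]


# Hypothetical alarm records and their creation times.
alarms = {
    "alarm-1": datetime(2024, 2, 15),
    "alarm-2": datetime(2024, 3, 20),
}
# A run on 31 March with Threshold = 30 uses a cutoff of 1 March 00:00.
print(records_to_purge(alarms, datetime(2024, 3, 31), 30))  # ['alarm-1']
```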

Purge Older Task Details Records

You can schedule a maintenance job that can be run to remove all the details of the tasks run before a specified period in time.

Creating a Purge Older Task Details Records

1. Navigate to Monitoring > Jobs

2. In the left pane, navigate to the maintenance folder

3. Click the maintenance folder > Actions > Add Job

4. In the Create maintenance screen, enter the values for each field described below:

- Maintenance Job Name: Enter a name for the maintenance job to be created.

- Description: Enter a suitable description for the job

- Maintenance Type: Select PURGE_OLDER_TASK_RECORDS as the type to create a job that removes old task details

- Threshold (in days): Enter the number of days of history to retain. All records older than this number of days will be deleted.

- Schedule: In order to schedule the job to run periodically at specified intervals of time, select the Schedule option.

- Interval: Enter the number for the interval

- Interval Type: Select either Hour or Minute as units of time.

Example

If 30, 24, and HOUR are entered as values in the fields Threshold, Interval, and Interval Type respectively, a maintenance job is executed every 24 hours that removes the details of all tasks older than 30 days. That is, each run deletes the details of every task run more than 30 days before the run.

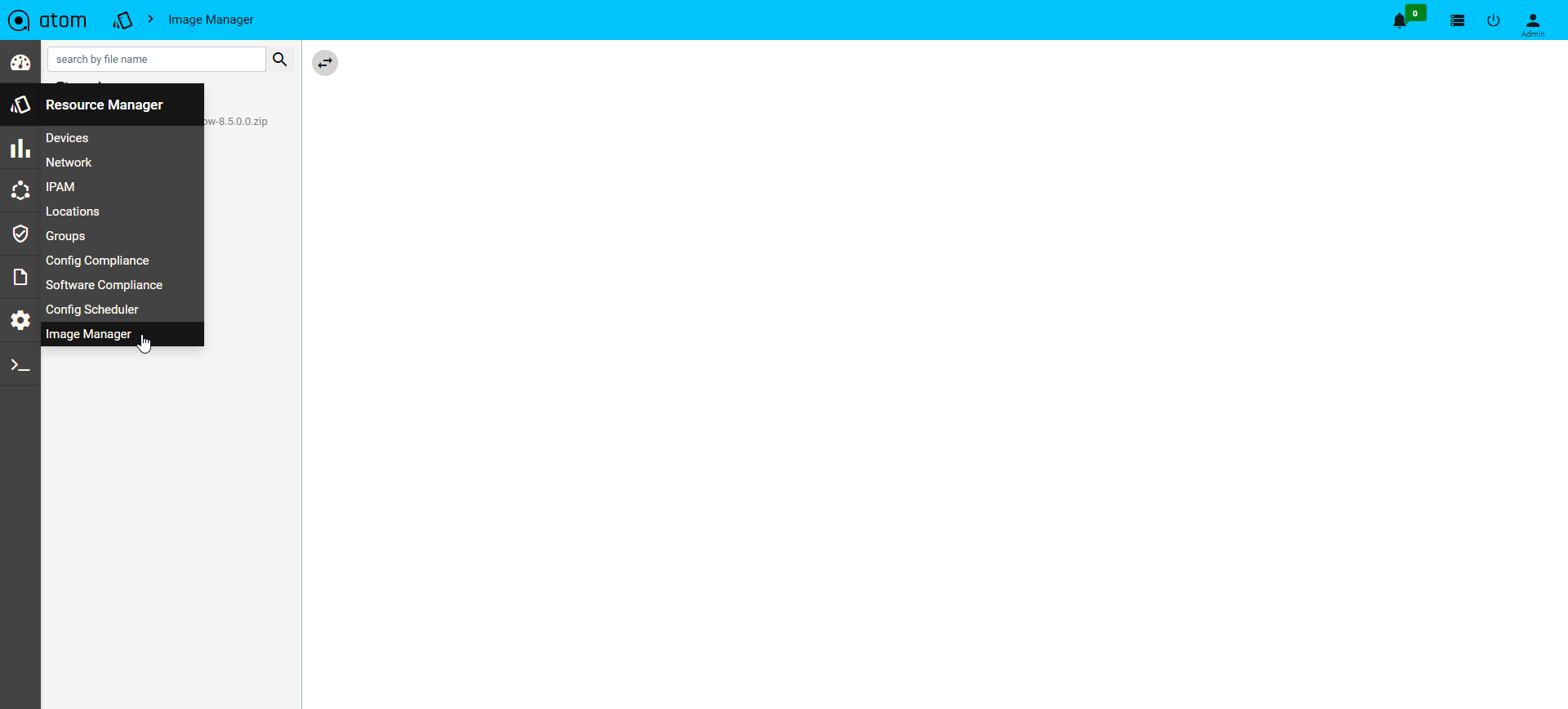

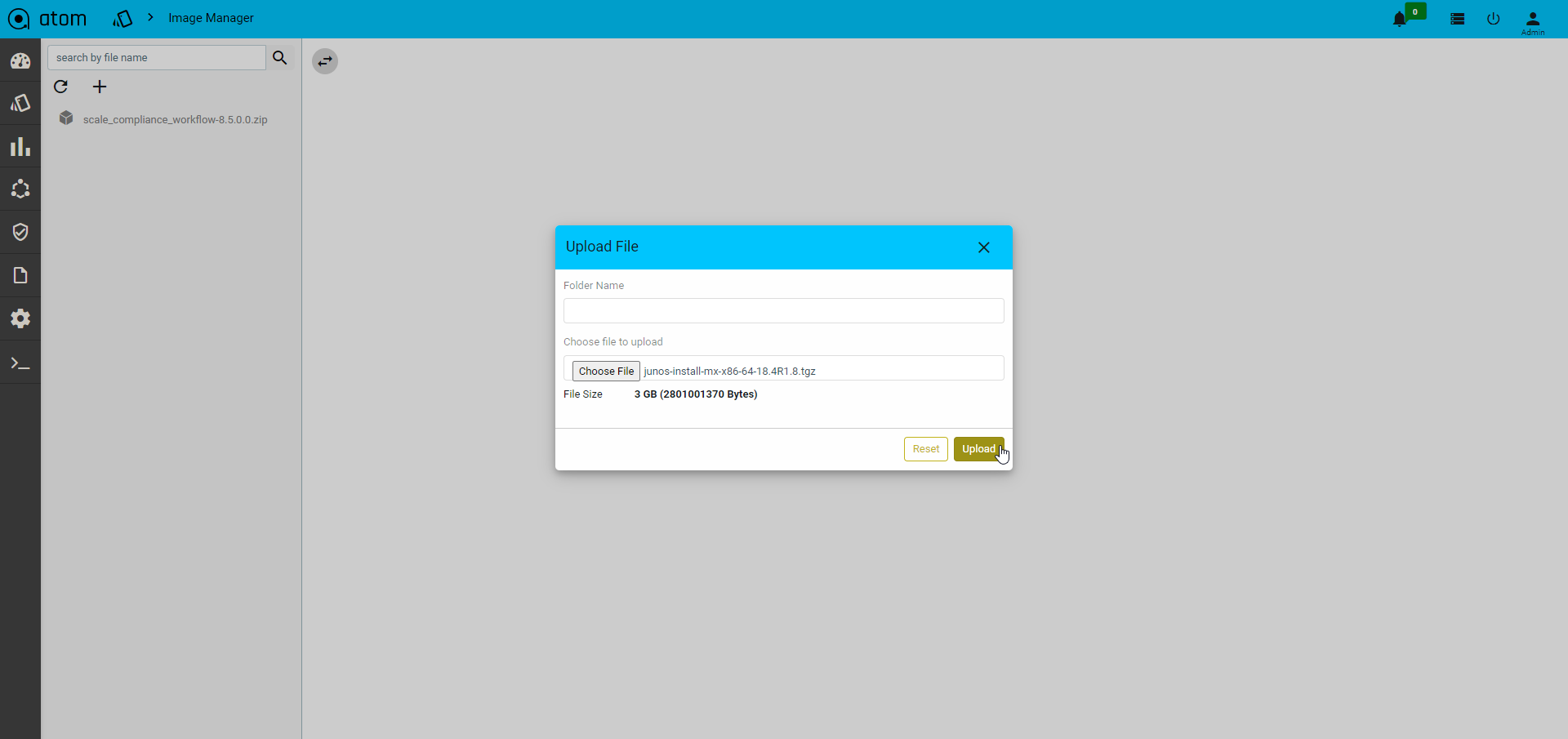

Image Manager

ATOM acts as a network element image repository (Image Server). Devices can boot an image from the ATOM Image Server manually or through the ATOM Network Element Software Image Upgrade Workflow. Image transfer is supported through SFTP.

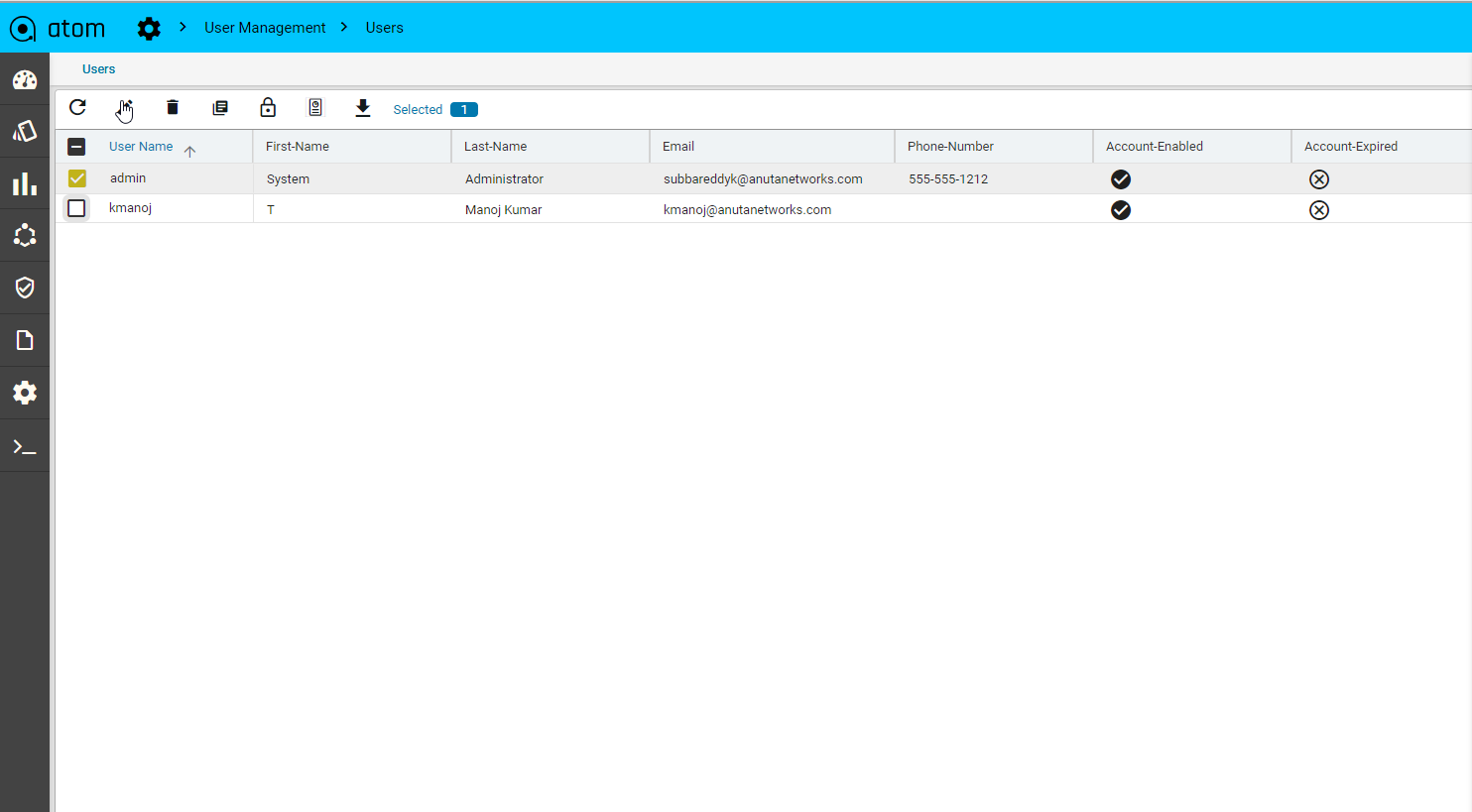

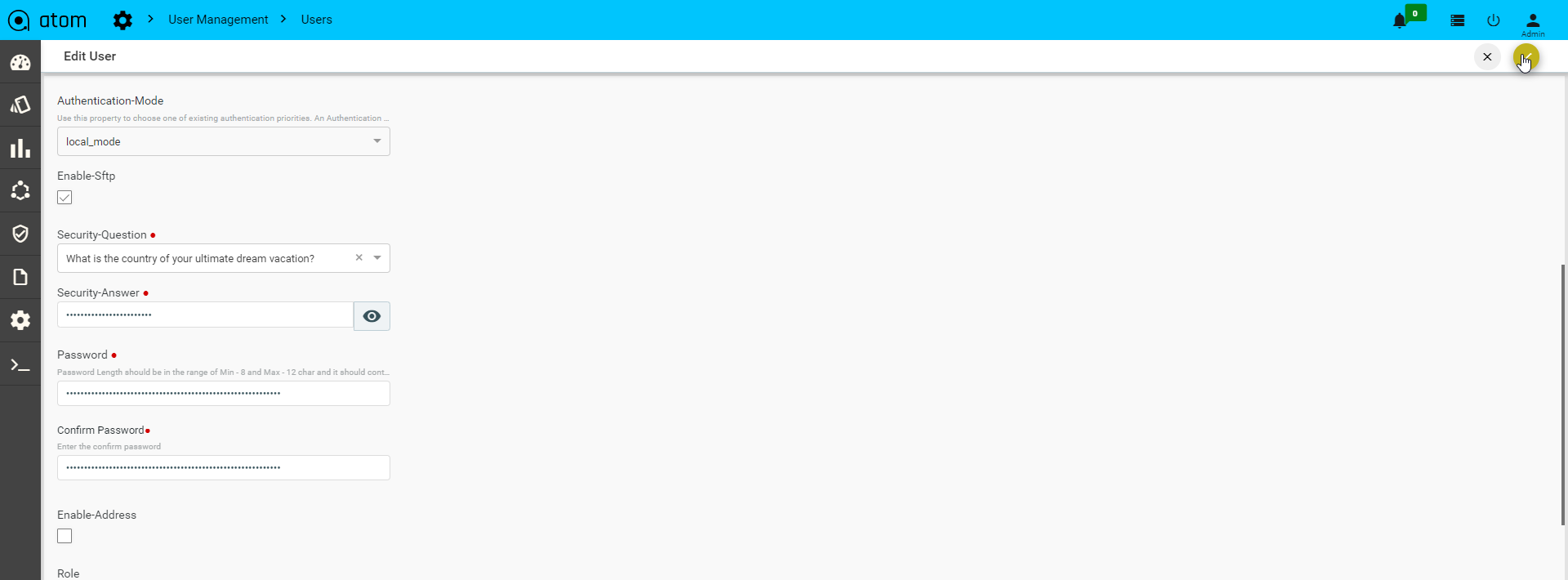

The following steps enable SFTP on ATOM and upload images:

- Navigate to Administration > User Management > Users, select the target user, and click Edit.

- Enable SFTP for the user and click Save.

- To upload a file to the Image Manager navigate to Resource Manager > Image Manager

- Click on the upload option

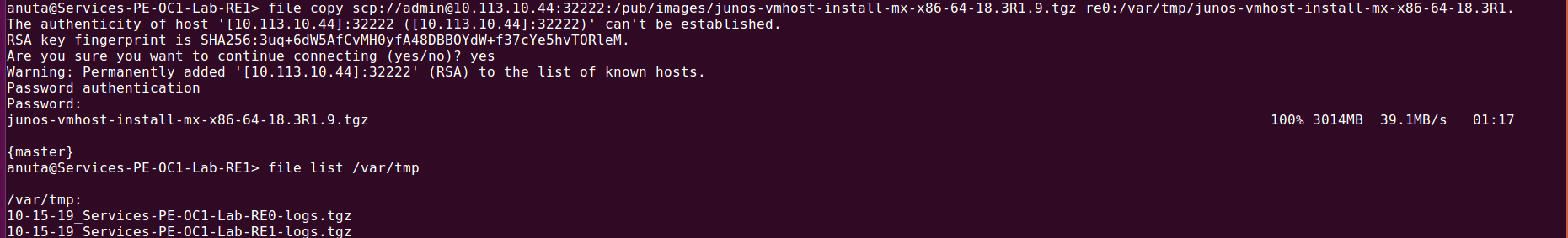

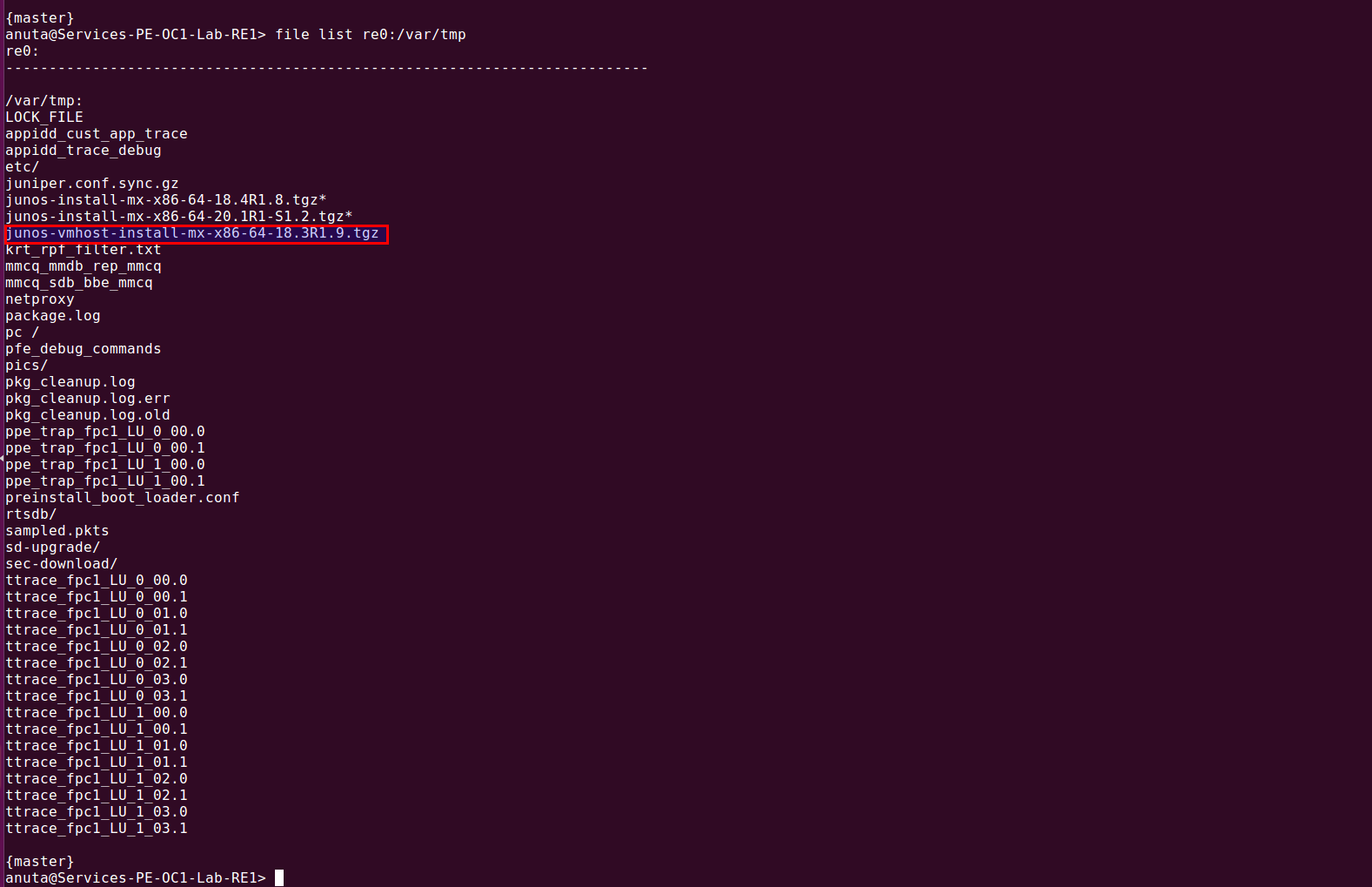

Once the files are uploaded to the Image Manager, log in to the client device and transfer the files to the required location on the device using SCP.

Syntax: file copy scp://{user-name}@{file-server IP}:{file-server node port}:/{path}/{file-name} {Destination path}/{file-name}

Example: file copy scp://admin@10.113.10.44:32222:/pub/images/junos-vmhost-install-mx-x86-64-18.3R1.9.tgz re0:/var/tmp/junos-vmhost-install-mx-x86-64-18.3R1.9.tgz

| Note: When copying files from the file server to the client, the atom-file-server-node service and its ports must be running. |
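When several images or devices are involved, it can help to generate the copy command from its parts instead of editing it by hand. The sketch below fills in the placeholders of the documented `file copy scp://...` syntax and reproduces the example command. It only builds the string; it does not open an SCP session or contact a device, and the helper name `build_file_copy_command` is hypothetical.

```python
def build_file_copy_command(user: str, server_ip: str, port: int, path: str,
                            file_name: str, destination: str) -> str:
    """Fill in the placeholders of the documented 'file copy scp://...' syntax."""
    # The port is the file-server node port exposed by ATOM.
    source = f"scp://{user}@{server_ip}:{port}:/{path.strip('/')}/{file_name}"
    return f"file copy {source} {destination.rstrip('/')}/{file_name}"


cmd = build_file_copy_command(
    user="admin",
    server_ip="10.113.10.44",
    port=32222,
    path="/pub/images",
    file_name="junos-vmhost-install-mx-x86-64-18.3R1.9.tgz",
    destination="re0:/var/tmp",
)
print(cmd)
```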

Network Automation

ATOM provides automation frameworks for both stateful (service) and stateless (MOP) automation.

Stateless, Low Code or MOP automation – Low Code Workflow automation enables network administrators to perform method-of-procedure activities involving different actions, such as configuration and operational actions, including show and exec commands on the device. Multiple actions can be stitched together to form a flow. Such a flow is executed on one or more devices with appropriate user inputs.

Example:

- Device Software Image Upgrade

- Protocol Migration [IPv4 to IPv6, OSPF to IS-IS]

- Hardware RMA/Refresh [Moving from one vendor to another]

MOP automation can also be a combination of stateless and stateful actions. In such scenarios, the MOP contains stateless actions such as pre-checks, while performing API invocations against device or service models to carry out stateful, transactional actions.

Tasks that have no requirement for statefulness are best developed using Workflow Automation.

Example:

- Application Deployment in Data Center with Pre-checks and Post-Checks

- Branch Config Deployment with Pre-Checks & Post-Checks

Stateful, Service Automation – ATOM Service automation helps administrators develop stateful and atomic transactions. Admins can create service models that enable Create, Update and Delete operations (CRUD). Such operations can be carried out throughout the life of the service. Brownfield service discovery is also supported.

Example:

- Application Deployment in Data Center

- Layer-3 VPN

- Layer-2 VPN

- Private Cloud to Public Cloud Interconnect

Network Workflow & Low Code Automation

Workflow breaks down an activity into subtasks and ties them together with network events, provisioning actions, show-commands, pre-checks, post-checks, user forms and approvals, timed background tasks, inventory checks etc.

Workflow Automation offers an intuitive graphical designer to automate network provisioning and maintenance activities.

Administrators can create simple or complex flows using ATOM Workflow’s drag-and-drop interface. ATOM Workflow has prebuilt adaptors to enable integration with ticketing, billing, OSS, BSS and many other network elements. Workflow can also automate multi-level approval sequences. Use workflows for a one-time project or for repetitive tasks. Workflow development is covered in the “Workflow Modelling” section of the ATOM Platform Guide. For automation of tasks that require stateful and atomic transactions, it is advised to use ATOM Service Models, discussed in the ATOM Platform Guide.

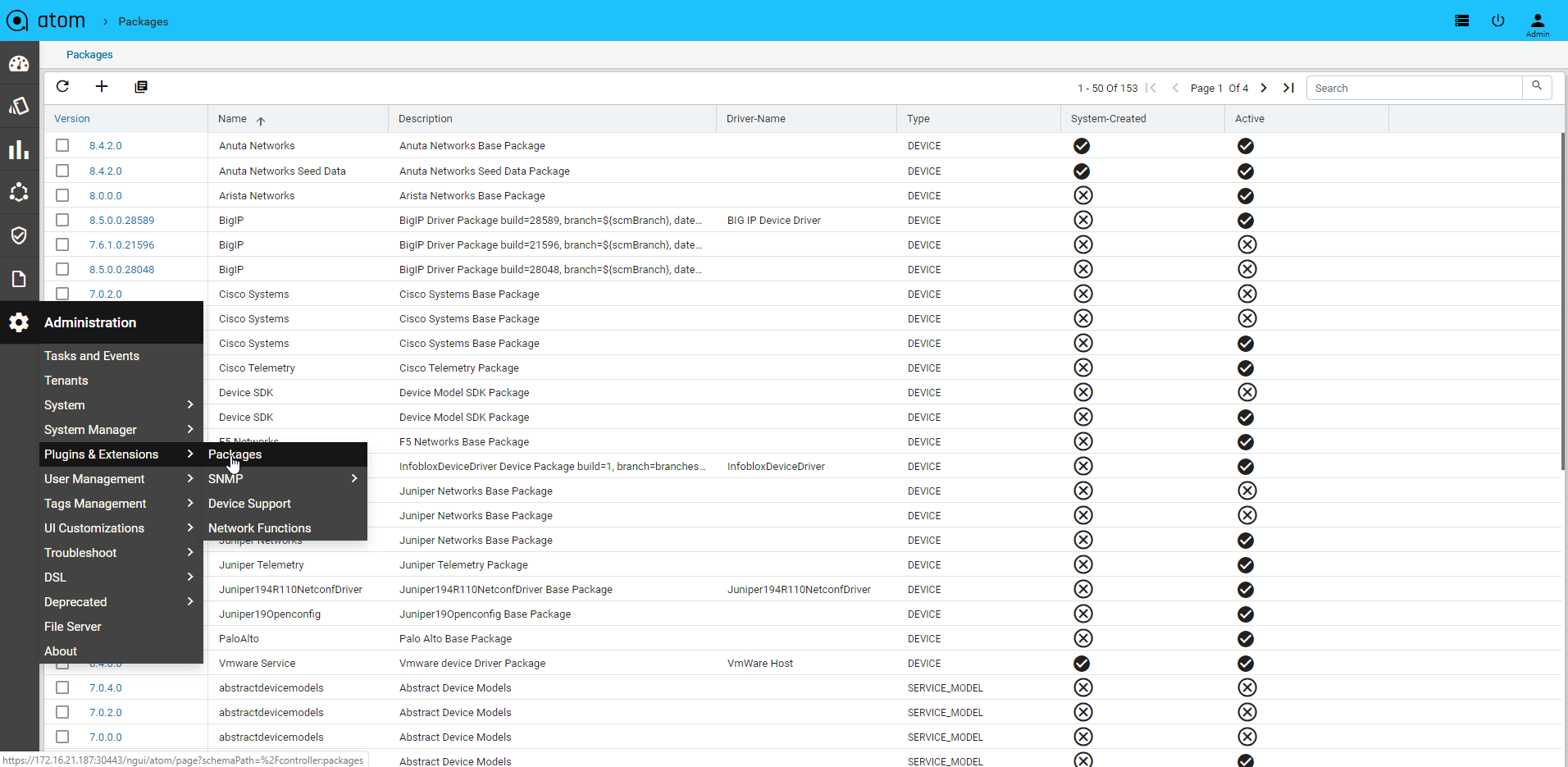

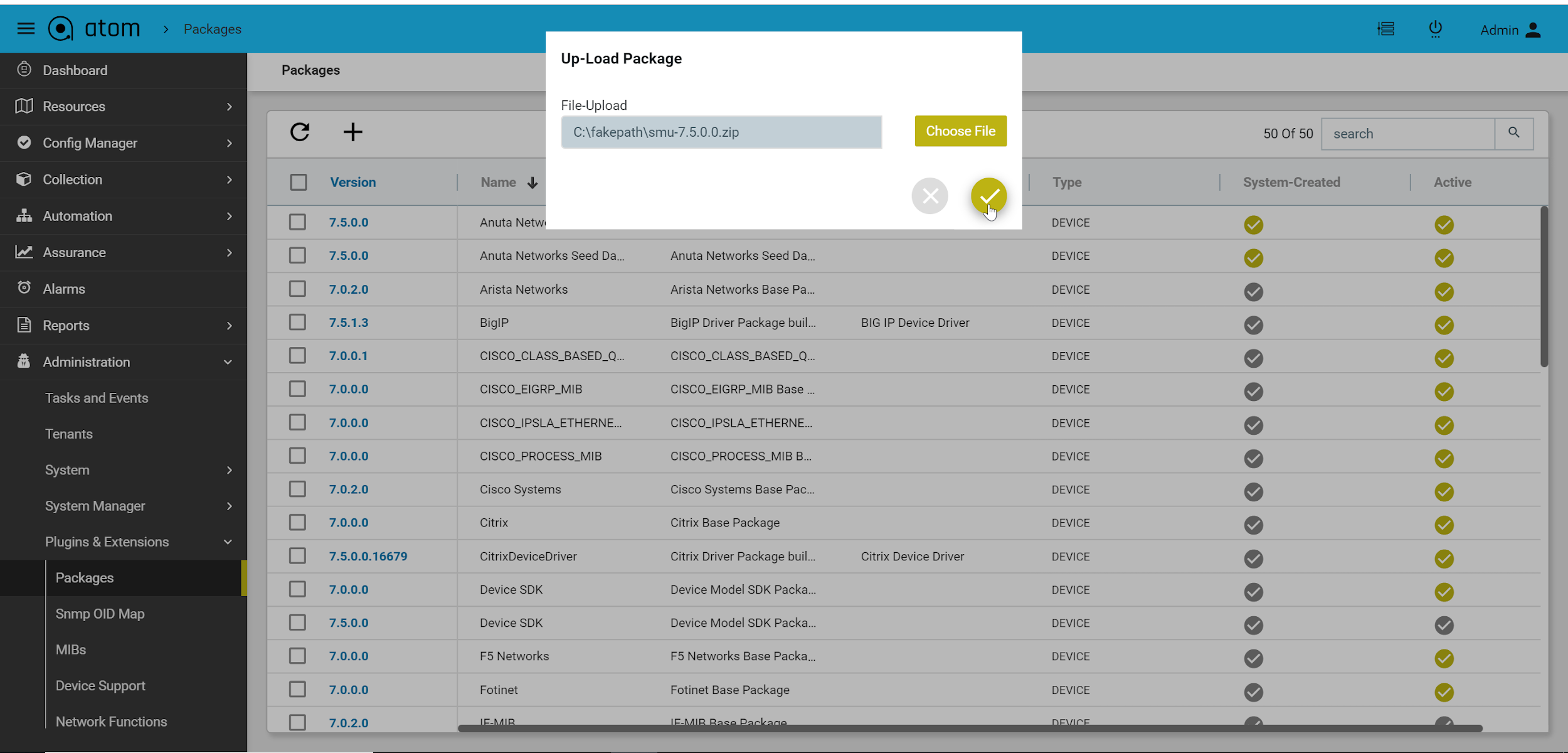

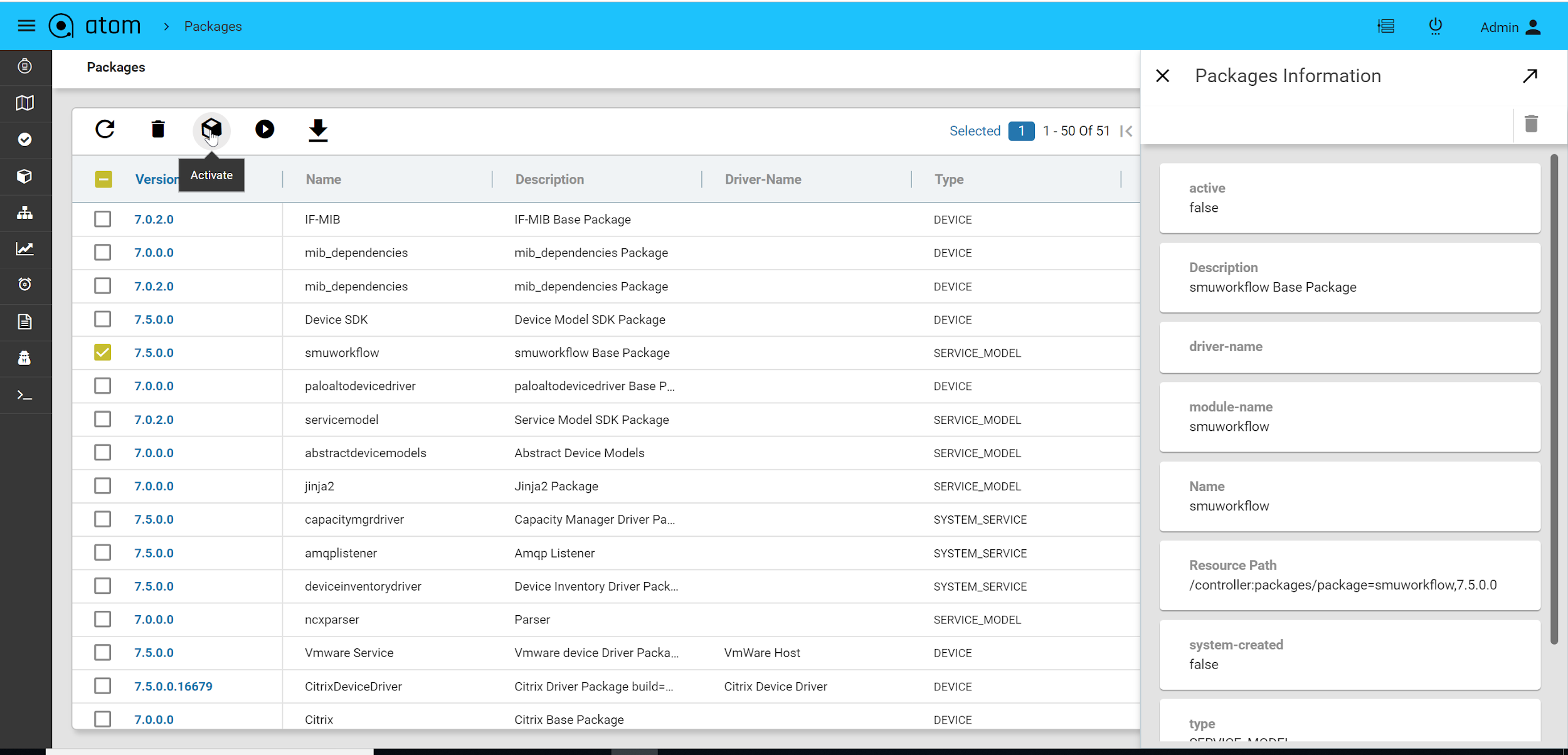

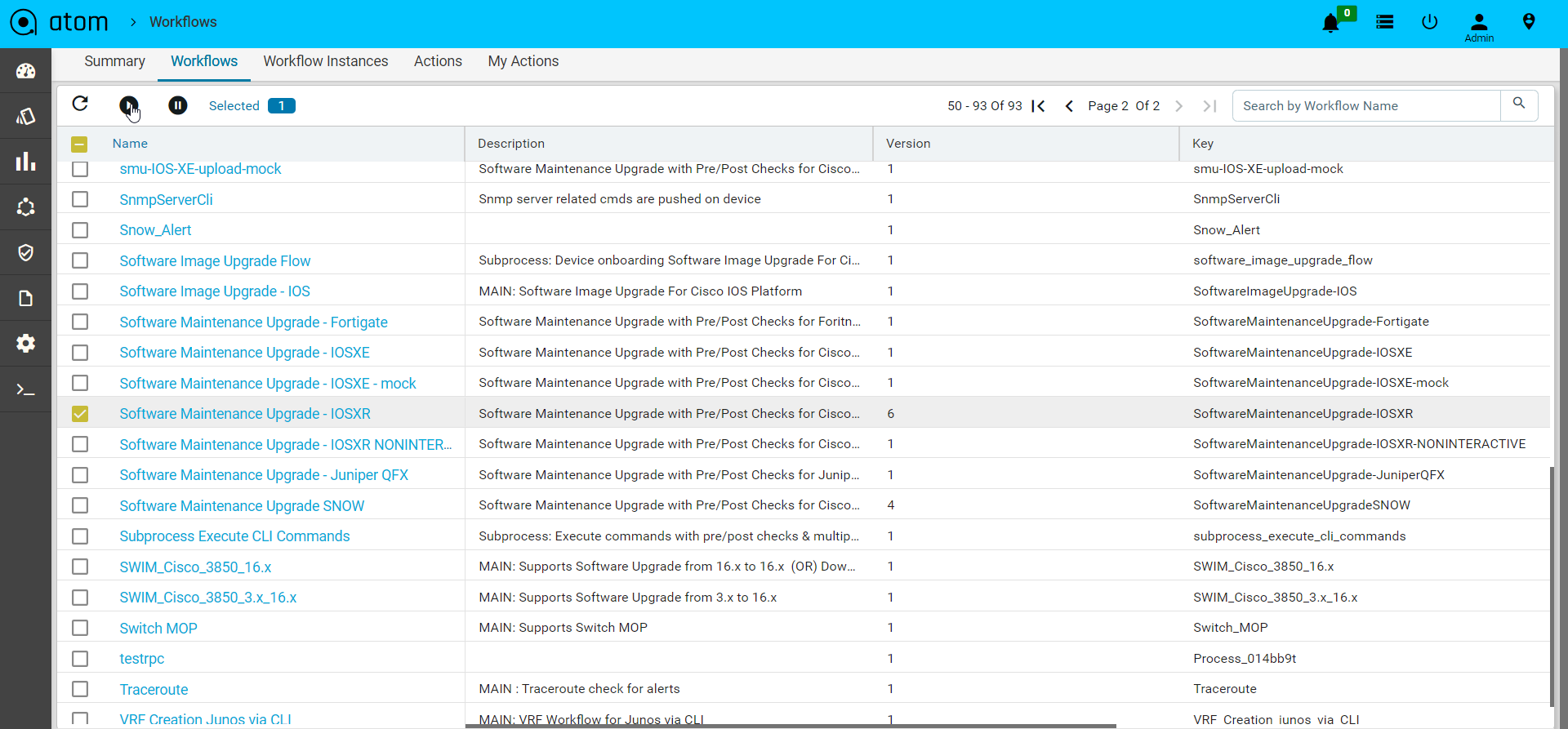

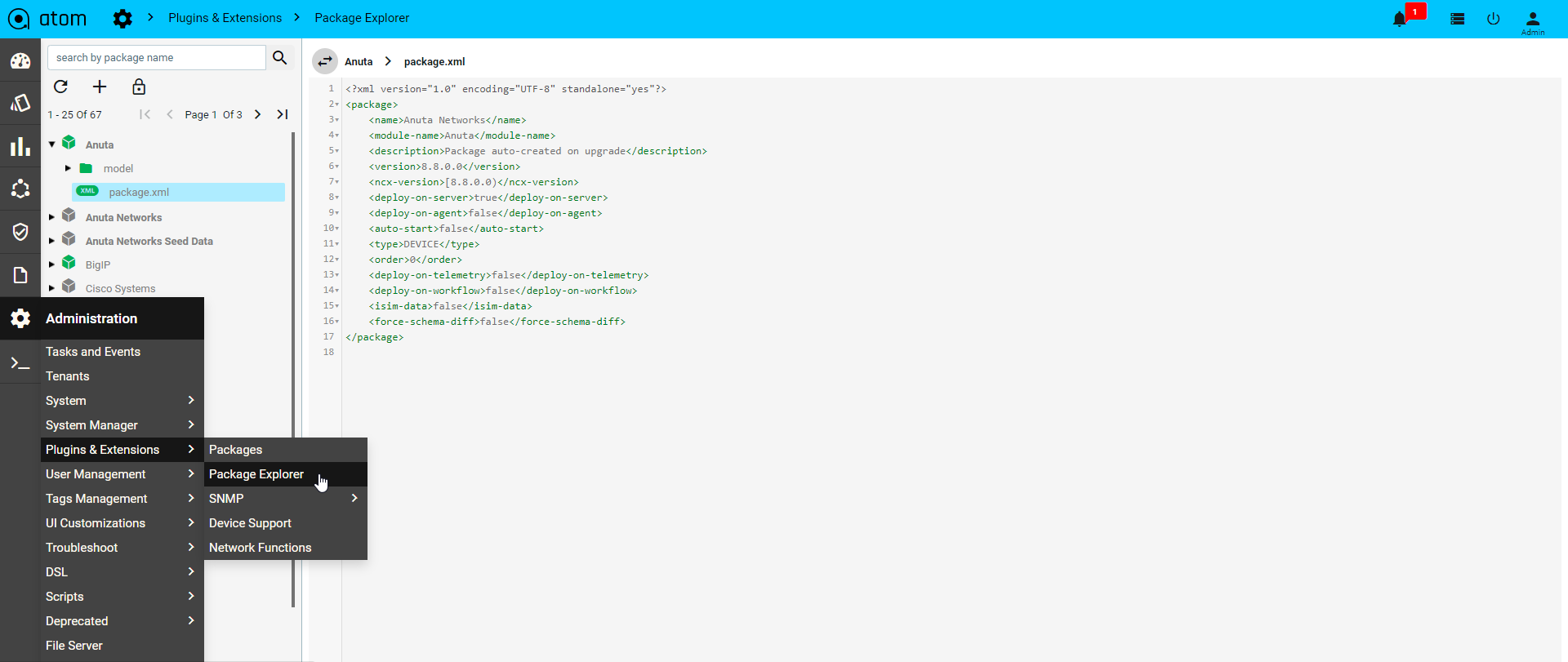

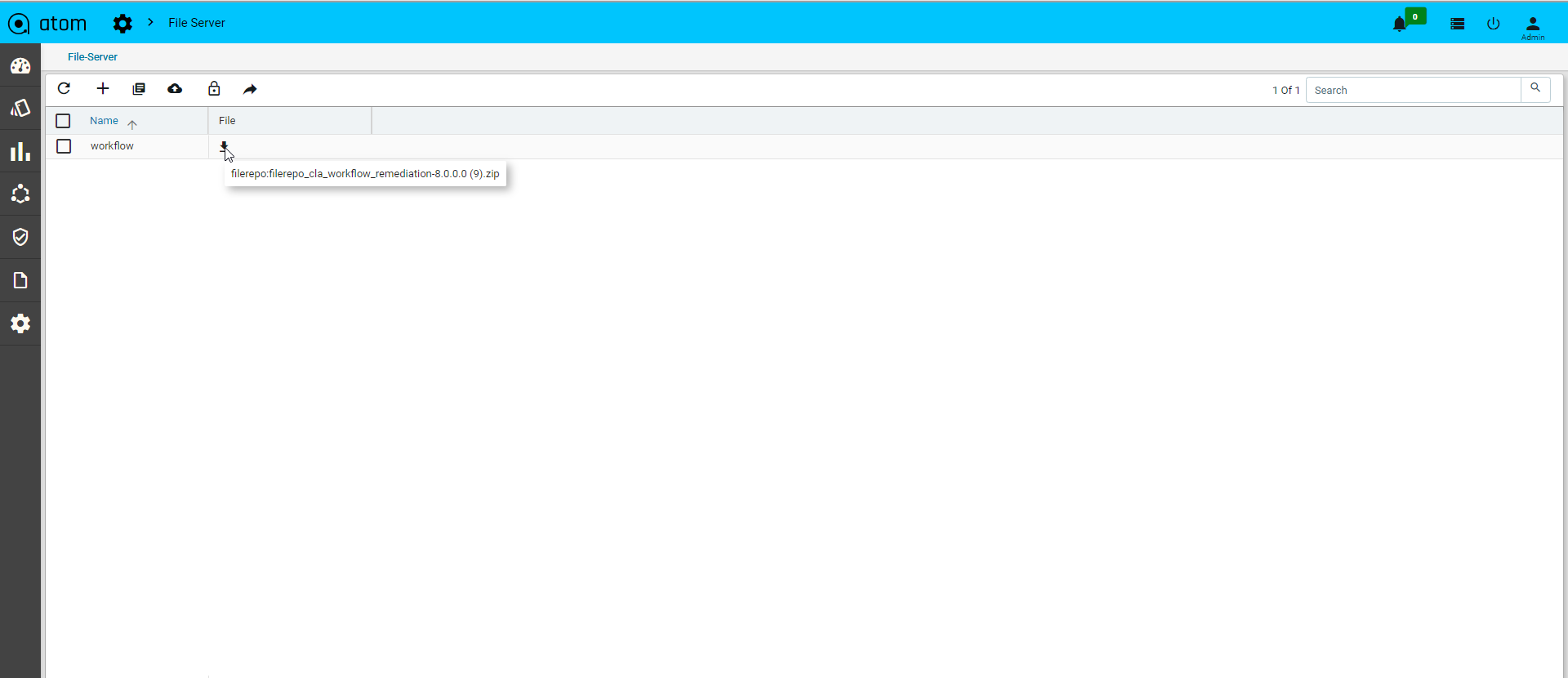

Uploading Workflow Package

Navigate to Administration > Plugins & Extensions > Packages

- Click on Add at the top bar to upload the packages.

- Upload workflow package and click on the tick mark

- Select the package and click on Activate

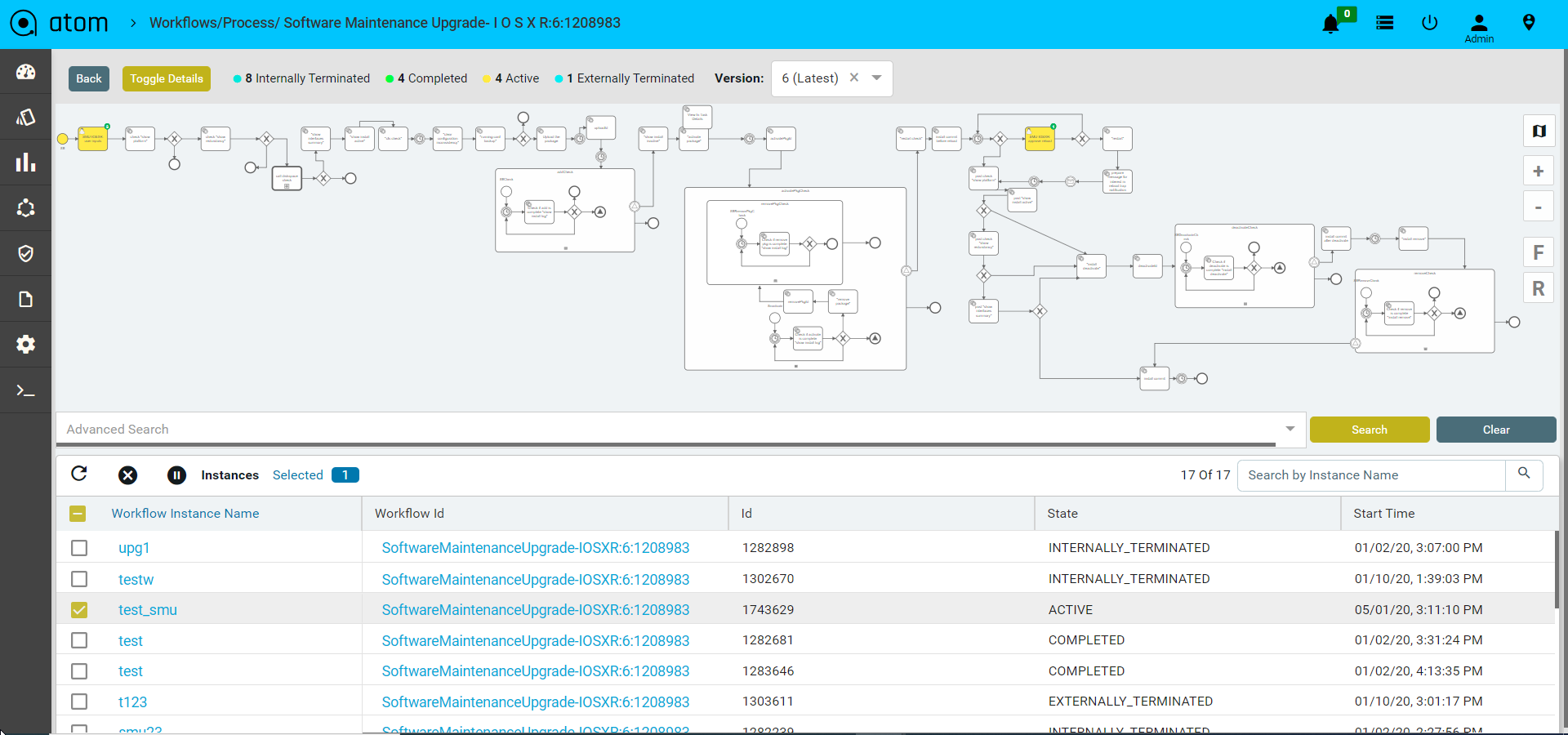

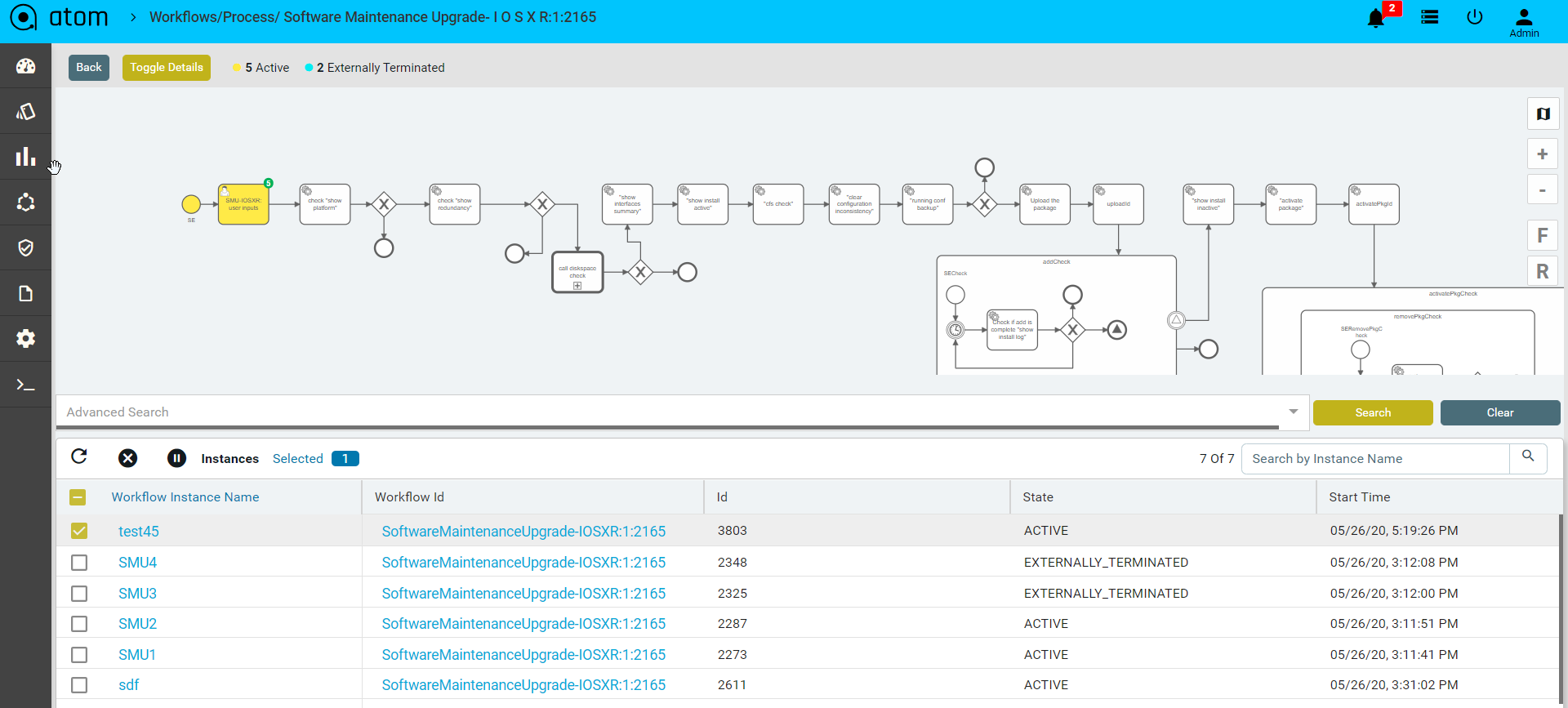

Workflow Lifecycle Management

A workflow definition defines the structure of a workflow. A workflow instance is an individual execution of a workflow definition. The relation of the workflow instance to the workflow definition is the same as the relation between an object and a class in object-oriented programming. The workflow engine is responsible for creating workflow instances and managing their state.

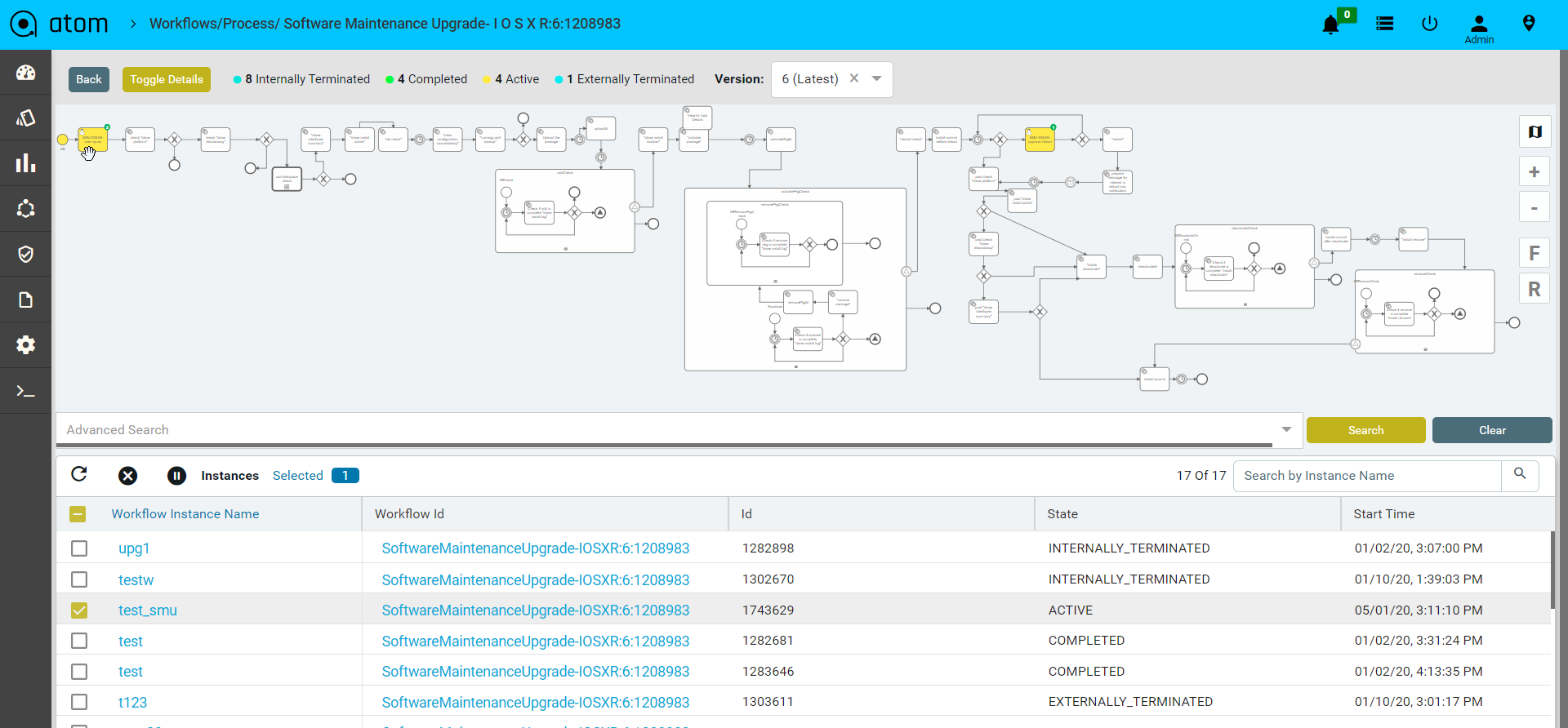

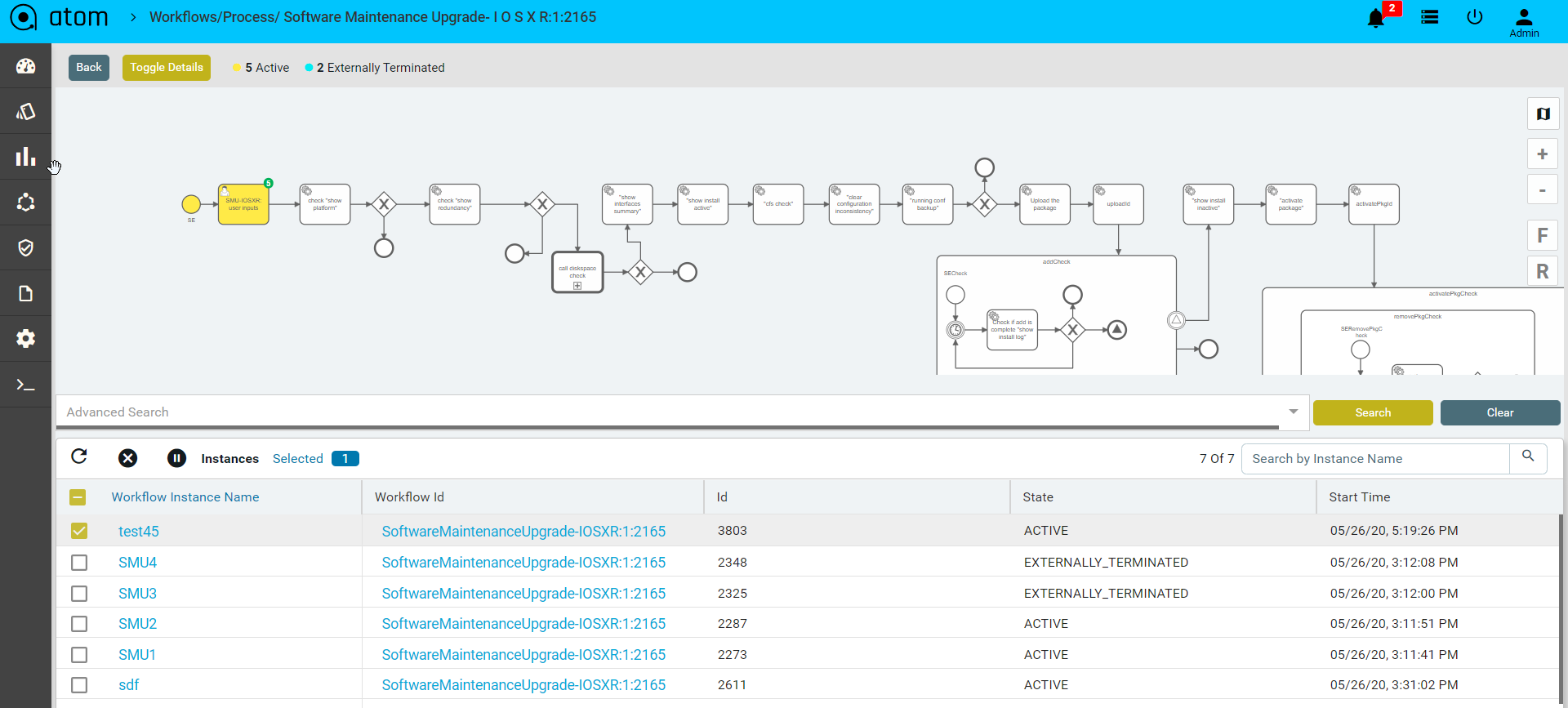

Workflow instances traverse different states as they progress from start to end. The various states are listed below:

- Active: Once the workflow is started, it enters the active state. The workflow remains active throughout its tasks, which indicates error-free execution.

- Error State: If there are unhandled exceptions in the scripts, or programmatic/syntactic errors in inline scripts, the workflow execution goes to an error state.

- Internally Terminated: If there are errors in communication with the device, or custom RPCs throw exceptions that have no explicit error handling defined in the workflow, the instance is internally terminated by ATOM and its state is updated accordingly.

- Externally Terminated: If the network administrator finds unexpected behavior at any point during the workflow execution, they can manually terminate the workflow instance. This is the only state that the end user can set manually to terminate the flow.

- Completed: Once the workflow reaches the stop event, it moves to the completed state, which indicates a successful execution.
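The definition/instance relationship and the states listed above can be modelled as a small state machine. The sketch below is illustrative only: the state names come from the list above, but the transition table is an assumption (every state is entered from Active; an instance in Error can be retried back to Active or terminated manually), and the classes `WorkflowDefinition` and `WorkflowInstance` and the workflow names are hypothetical, not the ATOM engine's API.

```python
from enum import Enum


class State(Enum):
    ACTIVE = "Active"
    ERROR = "Error State"
    INTERNALLY_TERMINATED = "Internally Terminated"
    EXTERNALLY_TERMINATED = "Externally Terminated"
    COMPLETED = "Completed"


# Assumed transition table: all states are entered from Active; an instance in
# Error can be retried (back to Active) or terminated manually.
TRANSITIONS = {
    State.ACTIVE: {State.ERROR, State.INTERNALLY_TERMINATED,
                   State.EXTERNALLY_TERMINATED, State.COMPLETED},
    State.ERROR: {State.ACTIVE, State.EXTERNALLY_TERMINATED},
    State.INTERNALLY_TERMINATED: set(),
    State.EXTERNALLY_TERMINATED: set(),
    State.COMPLETED: set(),
}


class WorkflowDefinition:
    """Plays the role of a class: the structure shared by all its instances."""

    def __init__(self, name: str) -> None:
        self.name = name

    def start(self, instance_name: str) -> "WorkflowInstance":
        return WorkflowInstance(self, instance_name)


class WorkflowInstance:
    """Plays the role of an object: one execution with its own state."""

    def __init__(self, definition: WorkflowDefinition, name: str) -> None:
        self.definition = definition
        self.name = name
        self.state = State.ACTIVE  # a started instance is Active

    def move_to(self, new_state: State) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.state.value} -> {new_state.value} is not allowed")
        self.state = new_state


upgrade = WorkflowDefinition("image-upgrade")  # hypothetical workflow name
run_a = upgrade.start("upgrade-pe1")
run_b = upgrade.start("upgrade-pe2")
run_a.move_to(State.COMPLETED)
run_b.move_to(State.EXTERNALLY_TERMINATED)  # administrator stopped this run
print(run_a.state.value, "|", run_b.state.value)  # Completed | Externally Terminated
```

Both instances share one definition but carry independent state, which is the object/class relationship described above.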

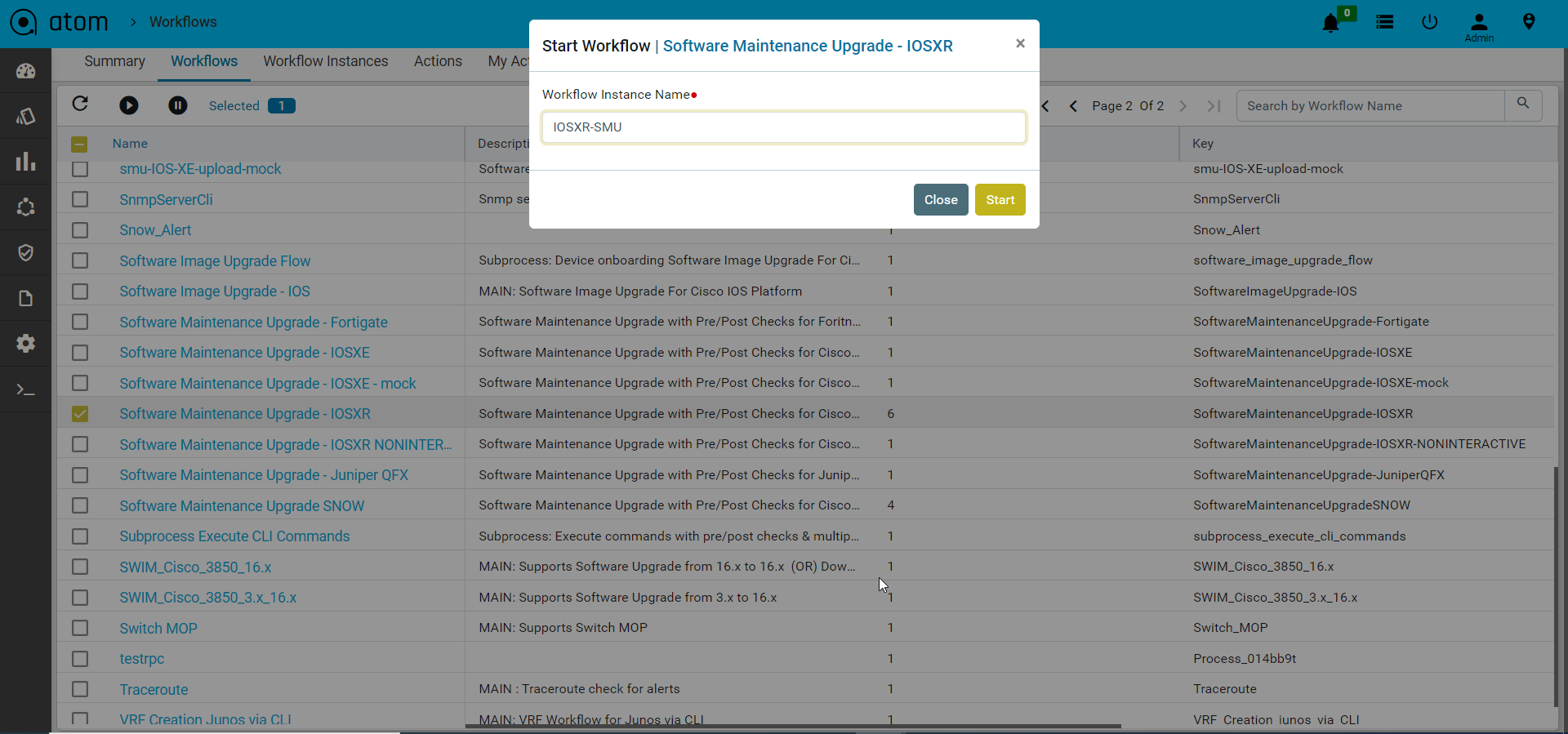

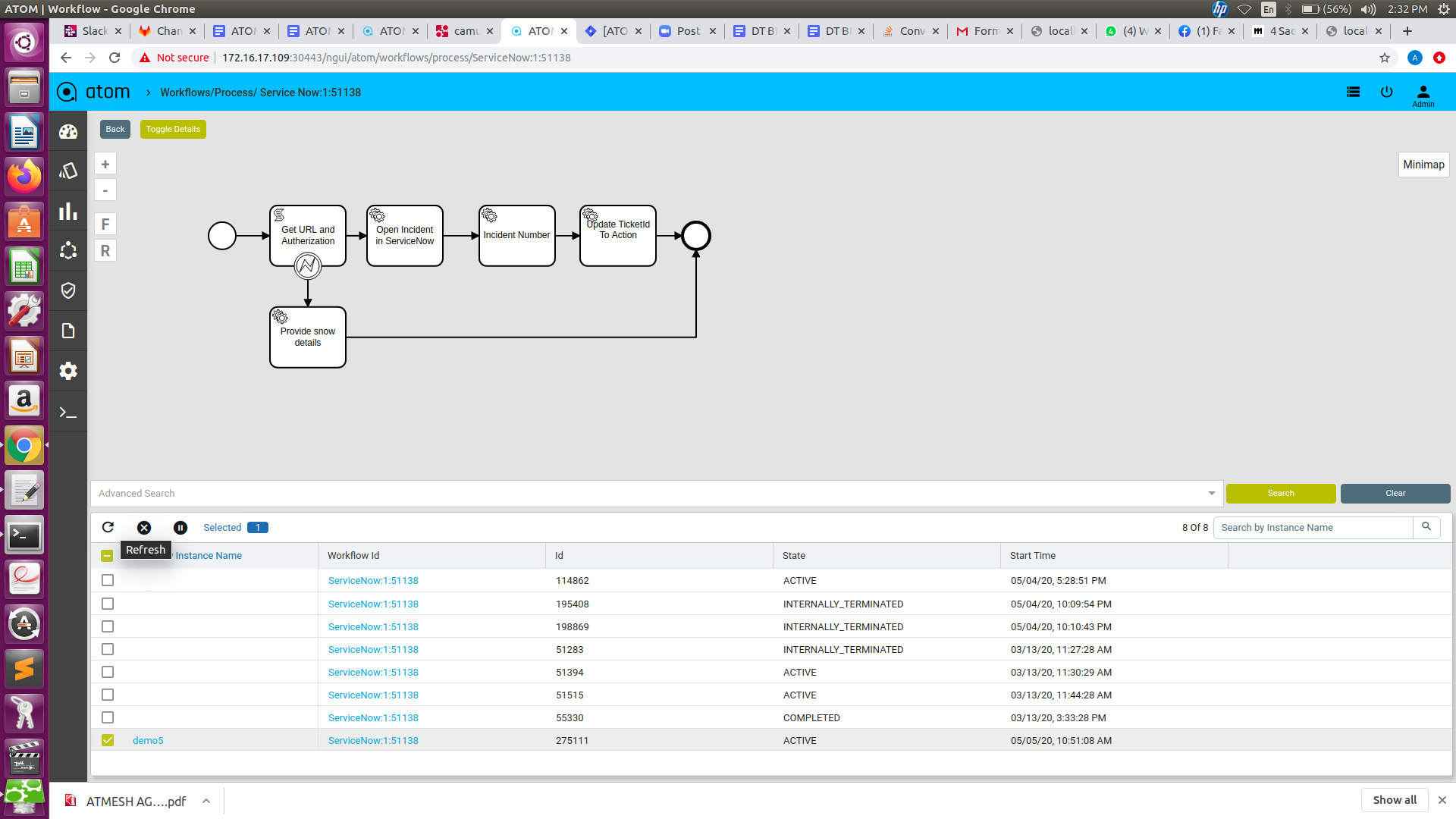

Start Workflows

To start a workflow instance follow the steps below.

- Navigate to Automation > Workflows > Workflows

- Select the workflow package from the list

- Click on Start to start an instance of the workflow and provide valid Instance Name

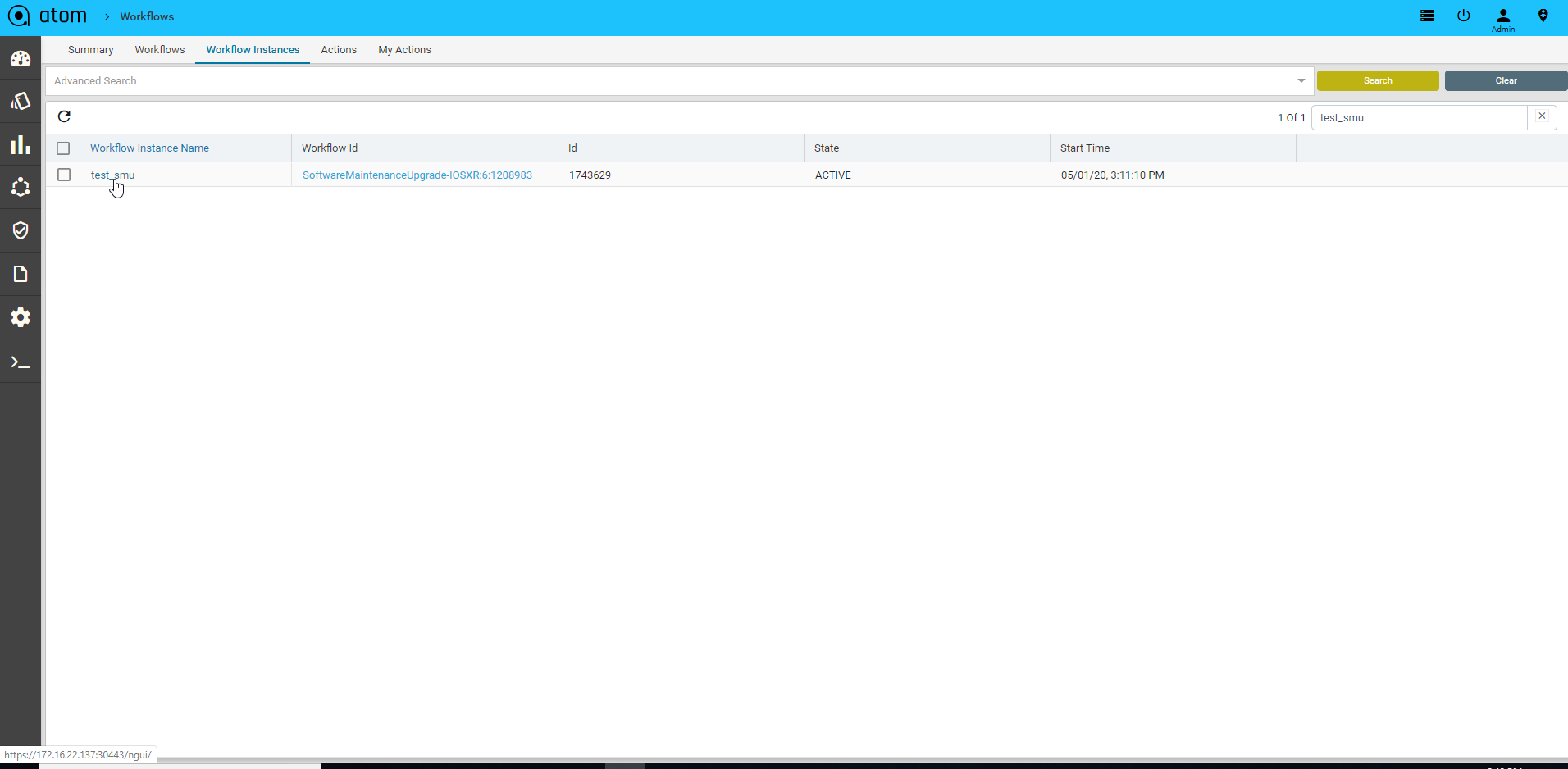

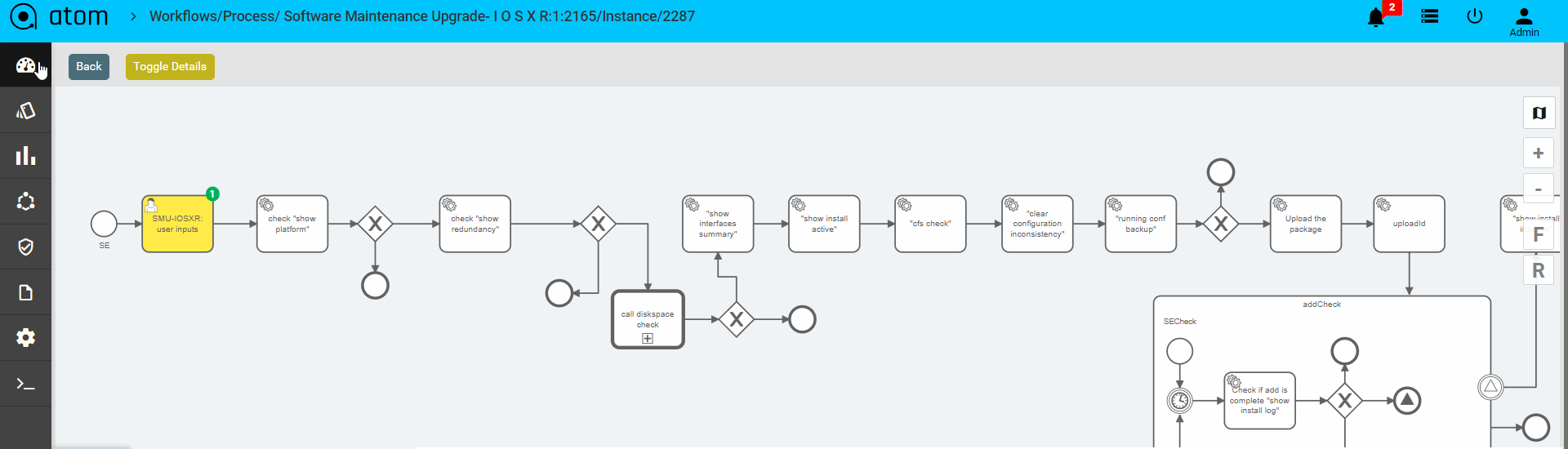

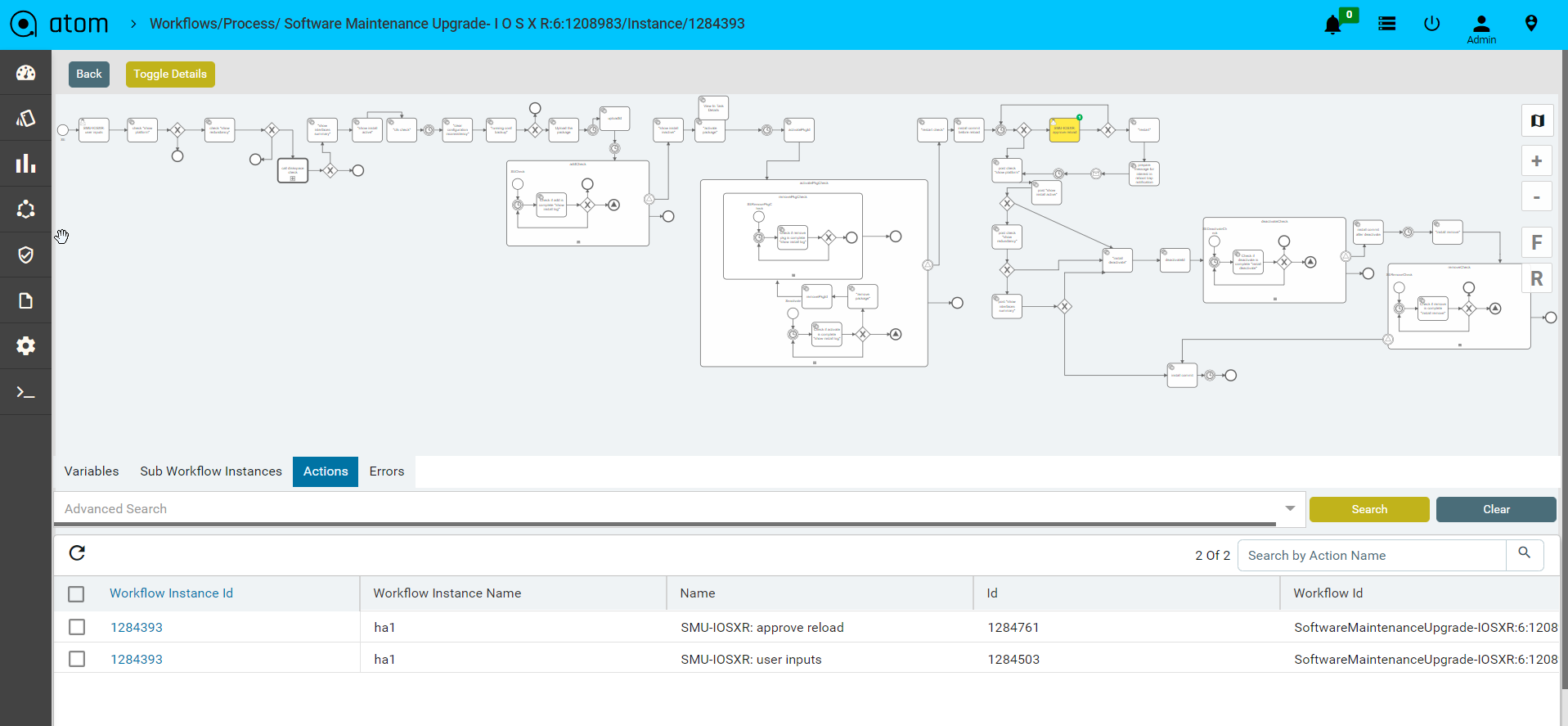

Inspecting Workflows

To view the current running stage of the workflow:

- Navigate to Workflows > Instances

- Click on Inspect

This opens a window with the workflow elements. Green indicates successfully completed tasks, and yellow indicates the task currently being executed.

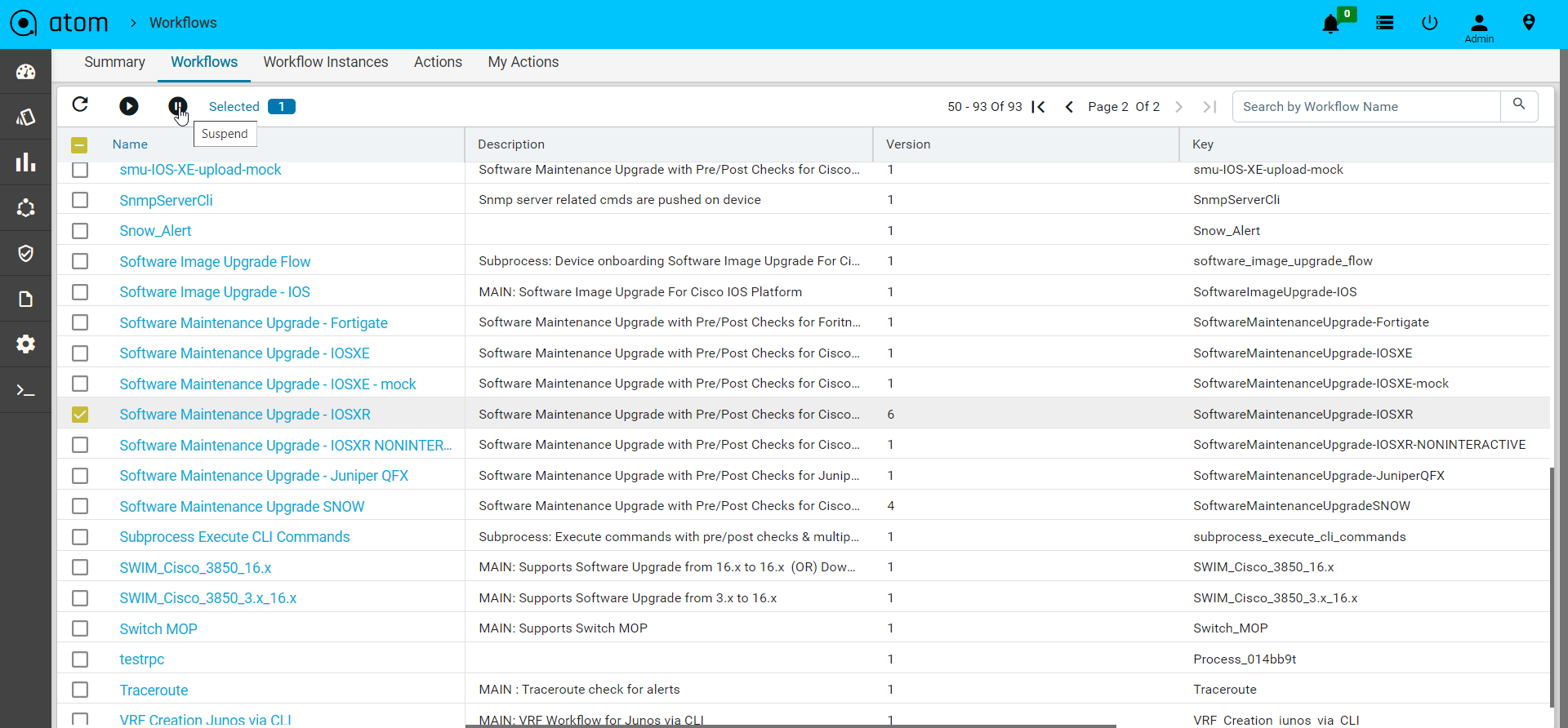

Suspend/Pause Workflows

In the workflow definition view and in the workflow instance view, you can suspend the selected workflow definition or workflow instance by using the Suspend button on the panel.

Workflow Definition Suspension

If you suspend the workflow definition, you prevent it from being instantiated. No further operations can be performed while the workflow definition is in the suspended state. You can re-activate the workflow definition at any time.

Workflow Instance Suspension

If you suspend the workflow instance, you can prevent the workflow instance from being executed any further. This includes suspending all tasks included in the process instance. You can re-activate the process instance at any later point of time.

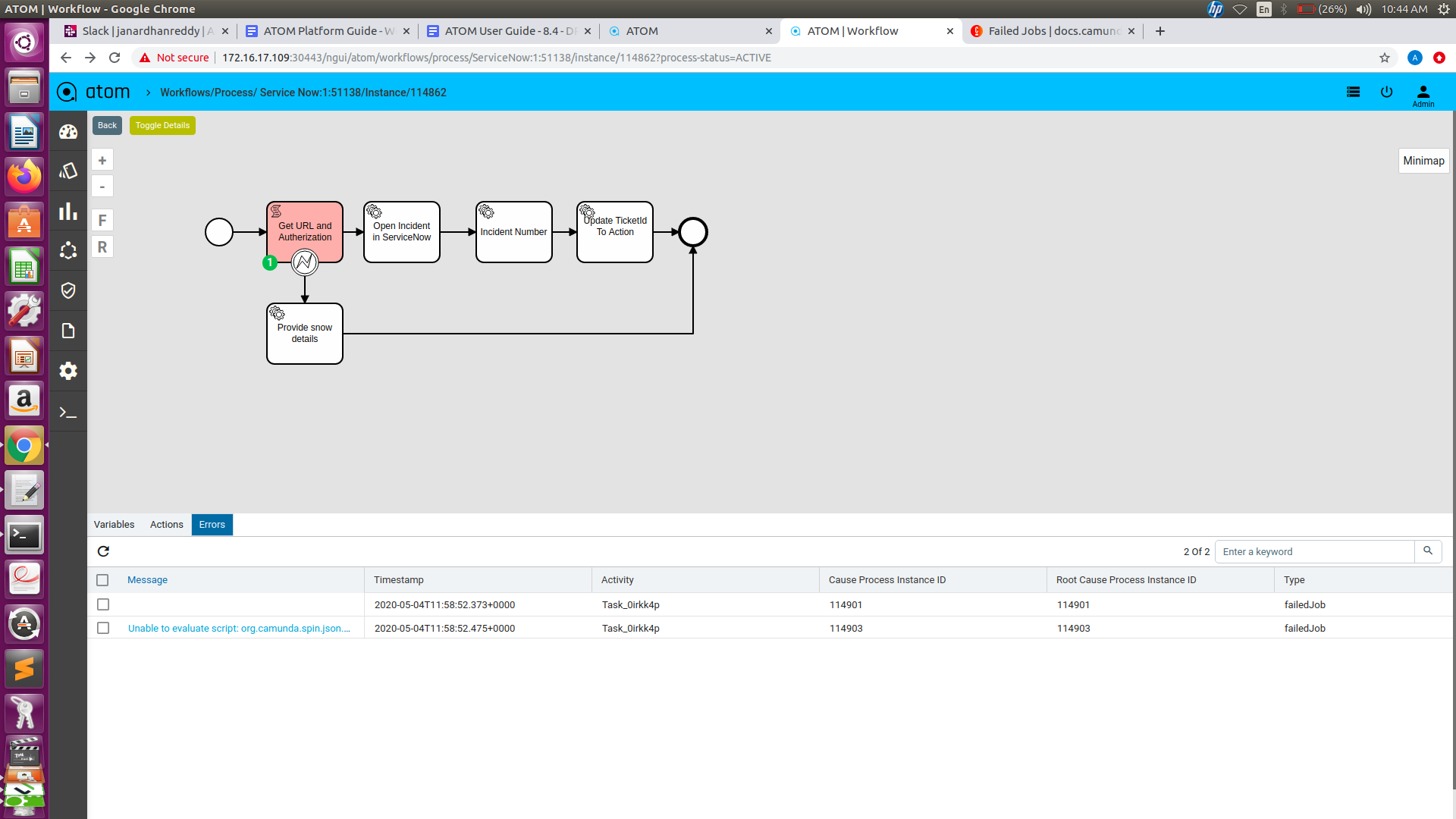

Workflow Instance Error:

Unresolved programmatic/syntactic errors in a process instance or a sub-process instance are indicated as errors by the ATOM workflow engine. The Errors tab in the workflow instance view lists the failed activities with additional information.

Retry a Failed Job

To resolve an error, select the corresponding instance and use the Retry button on the top panel. The ATOM engine re-triggers the failed job and increments its retry count in the database.

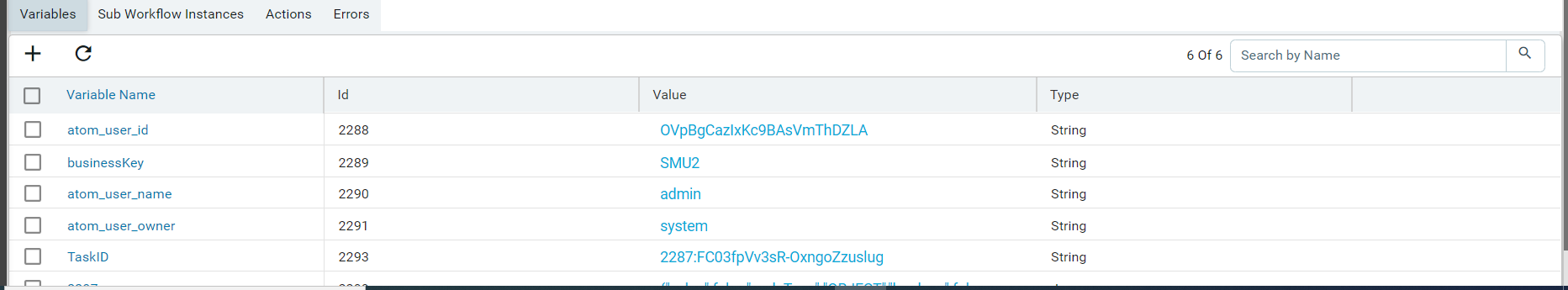

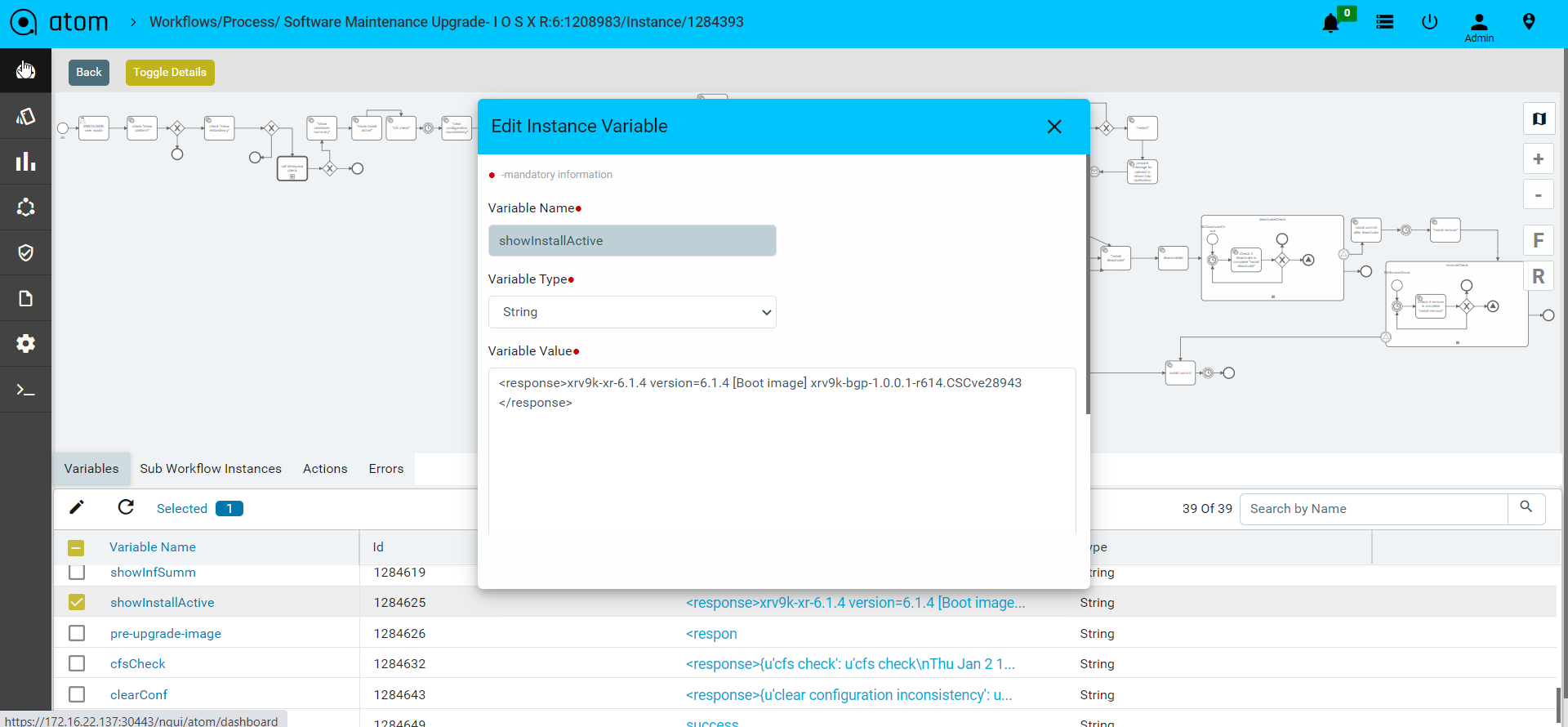

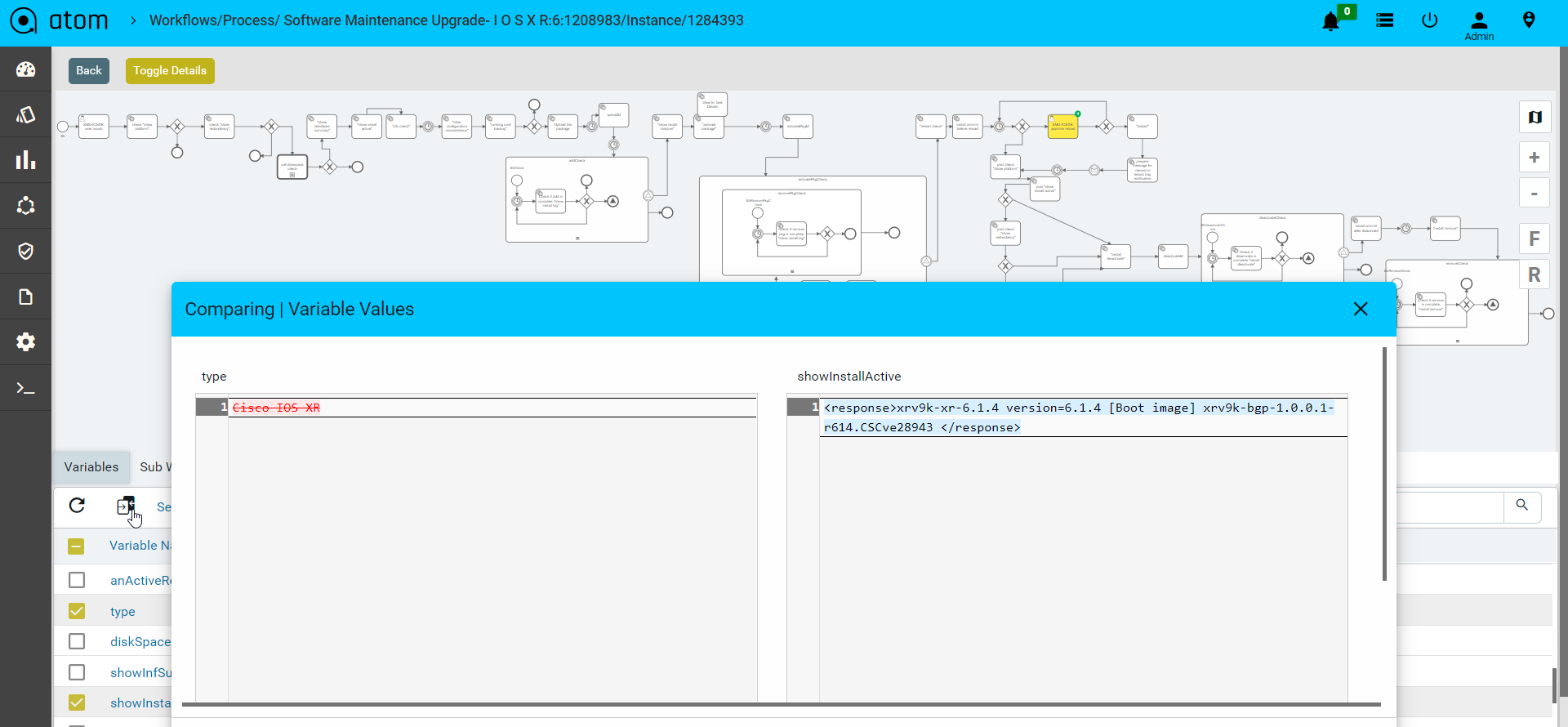

Workflow Variables

Workflow instance variables can be used to add data to the workflow runtime state. Various API methods/Service Tasks that change the state of these entities allow updating of the attached variables. In general, a variable consists of a name and a value. The name is used for identification across workflow constructs. For example, if one activity sets a variable named var, a follow-up activity can access it by using this name. The value of a variable is the value held by that named variable in the ATOM engine for that particular workflow instance context.
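The name/value behaviour described above can be pictured as a dictionary that is private to each workflow instance and shared by the activities running in it. The sketch below illustrates only that scoping idea; it is not how the ATOM engine stores variables, and the functions `run_instance`, `collect_vlan`, and `build_config` are hypothetical.

```python
from typing import Callable

# An activity reads and writes the variables of the instance it runs in.
Activity = Callable[[dict], None]


def run_instance(activities: list[Activity]) -> dict:
    """Run activities in order against a fresh, per-instance variable scope."""
    variables: dict = {}
    for activity in activities:
        activity(variables)
    return variables


def collect_vlan(variables: dict) -> None:
    variables["var"] = 100  # the first activity sets a variable named "var"


def build_config(variables: dict) -> None:
    # A follow-up activity reads "var" by name.
    variables["config"] = f"vlan {variables['var']}"


instance_1 = run_instance([collect_vlan, build_config])
instance_2 = run_instance([])  # a second instance has its own, empty scope
print(instance_1)  # {'var': 100, 'config': 'vlan 100'}
print(instance_2)  # {}
```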

To view the workflow variables

- Select the particular workflow instance that is active.

- View the workflow variables in the bottom panel.

- Users can also edit the variable values during runtime.

- Users can also compare two variable values and see the difference on the screen.

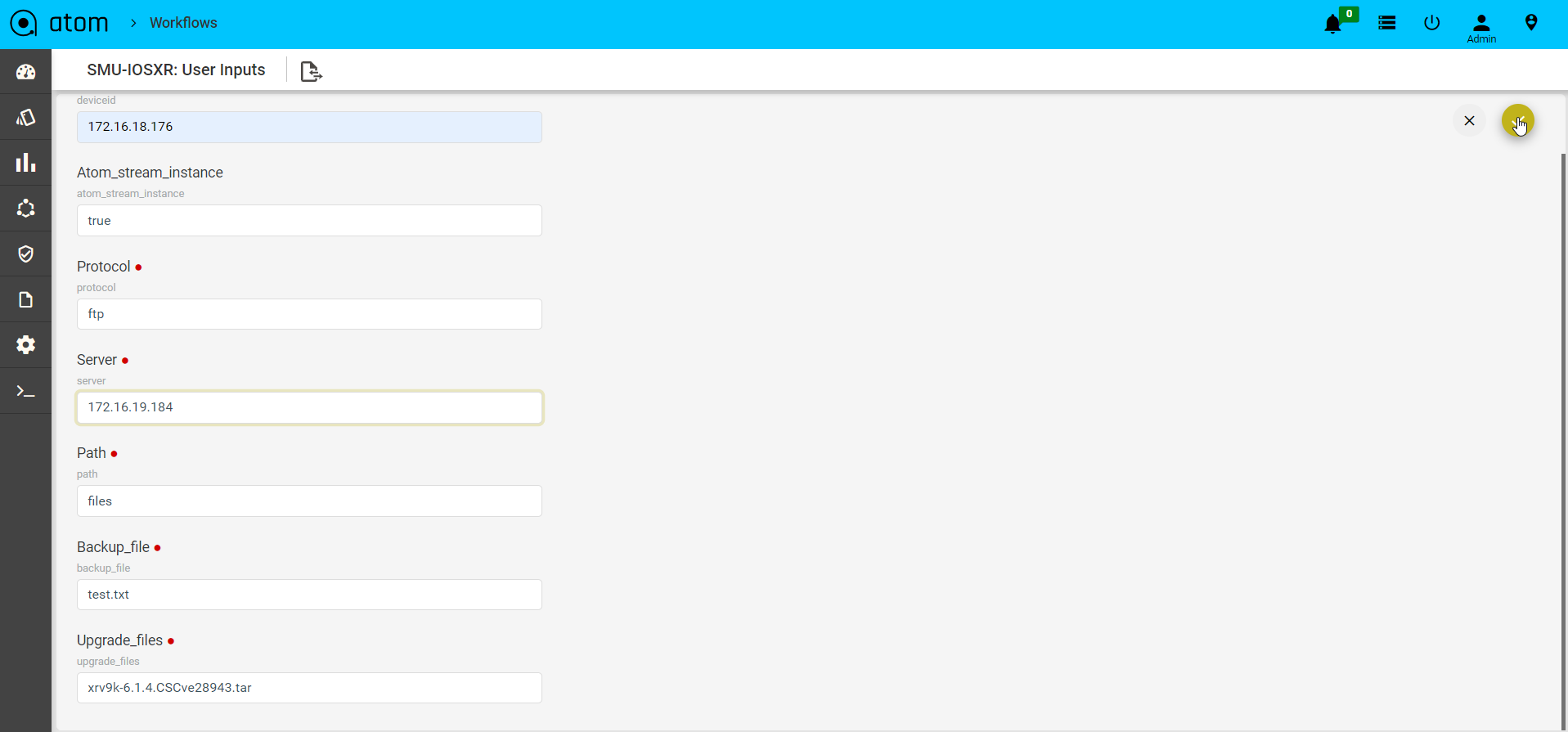

User Inputs

Some workflows may require the administrator to enter some values at particular stages. Workflow execution will be stalled until the values are entered.

Pending action items for a particular workflow instance can be viewed in that workflow instance's view.

To view such tasks:

- Select the particular workflow instance that is active.

- Once selected, navigate to the Actions tab to view all pending action items against this workflow instance.

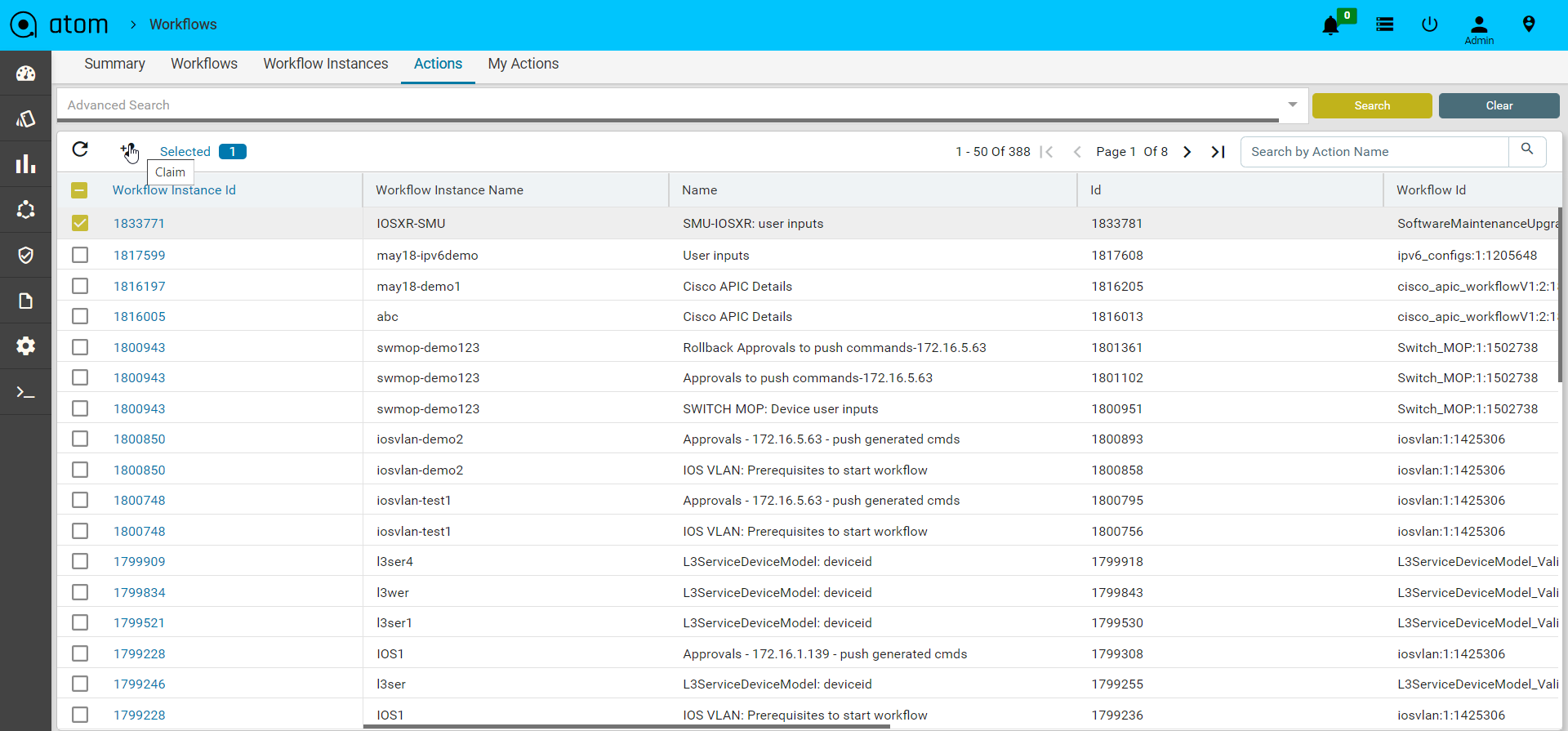

For completing such tasks

- Navigate to Workflows > Actions

- Select the workflow task and click on Claim to claim the task

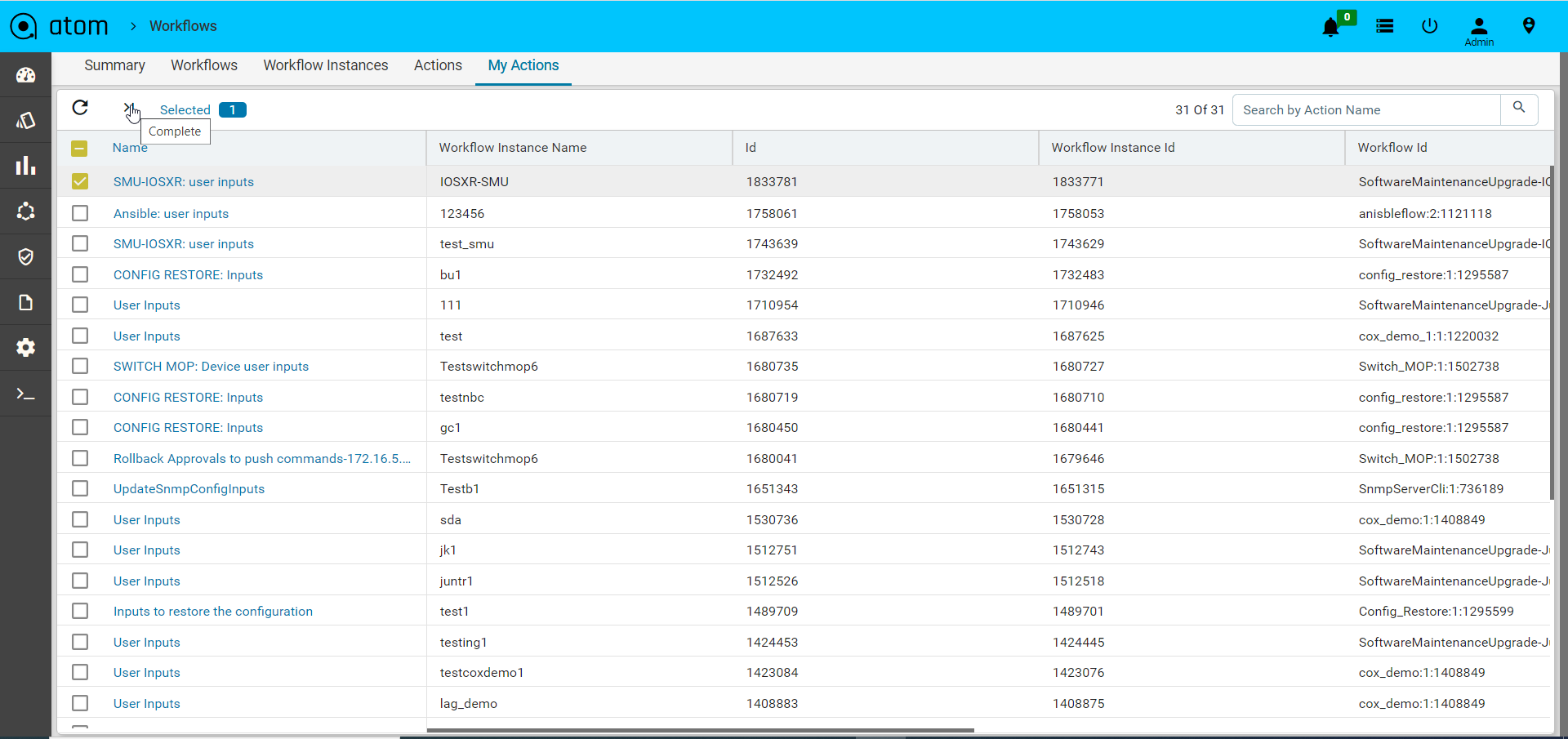

- Navigate to Workflow > My Actions

- Select the task claimed earlier and click on Complete

- Enter values and click on the tick mark

Network Service Automation

Stateful services are developed using ATOM SDK and involve Service model developed in YANG and optional business logic in Python. Such services have a continuous life cycle and undergo multiple changes over a period.

Services can be deployed in two modes –

Greenfield Mode – A user can instantiate the service, a set of network configurations, using the service template. These service templates are rendered from schema files that have been developed as a part of the Service package. ATOM automatically generates and applies relevant configurations on to the devices.

Brownfield Mode – ATOM automatically discovers services running on the device and maps them to the service template. For detailed information about service packages, how to write your own service models, and usage of the “maps-to” extension, refer to the “ATOM Platform Guide”.

Ordering Greenfield Services in ATOM

To order a service that was modelled earlier, do the following:

- Navigate to Automation > Services

- In the right pane, click Add

- In the ensuing form, enter values for the fields that are displayed.

- Click OK

ATOM automatically generates relevant network configurations.

Note: If “Dry Run” is enabled in the Administration tab, the generated configurations will not be applied to the devices.

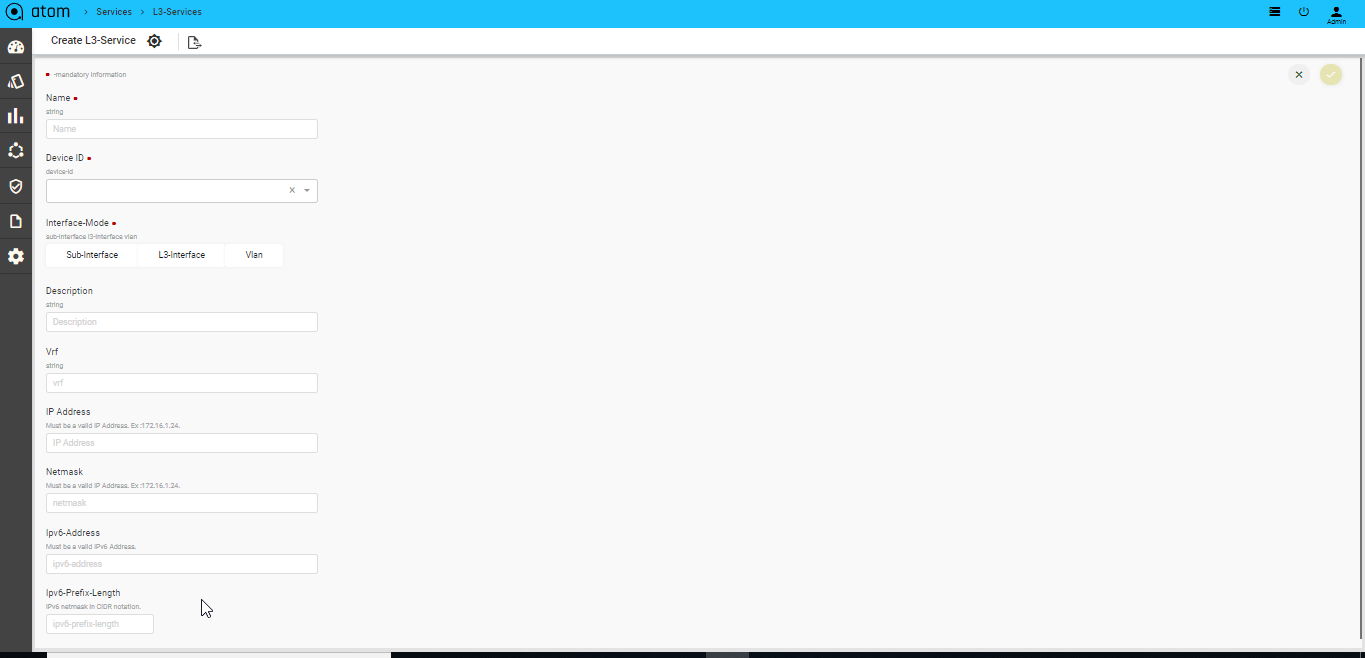

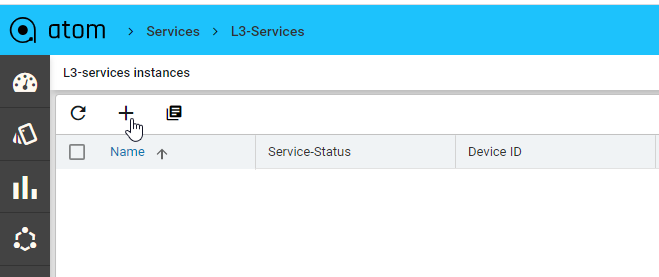

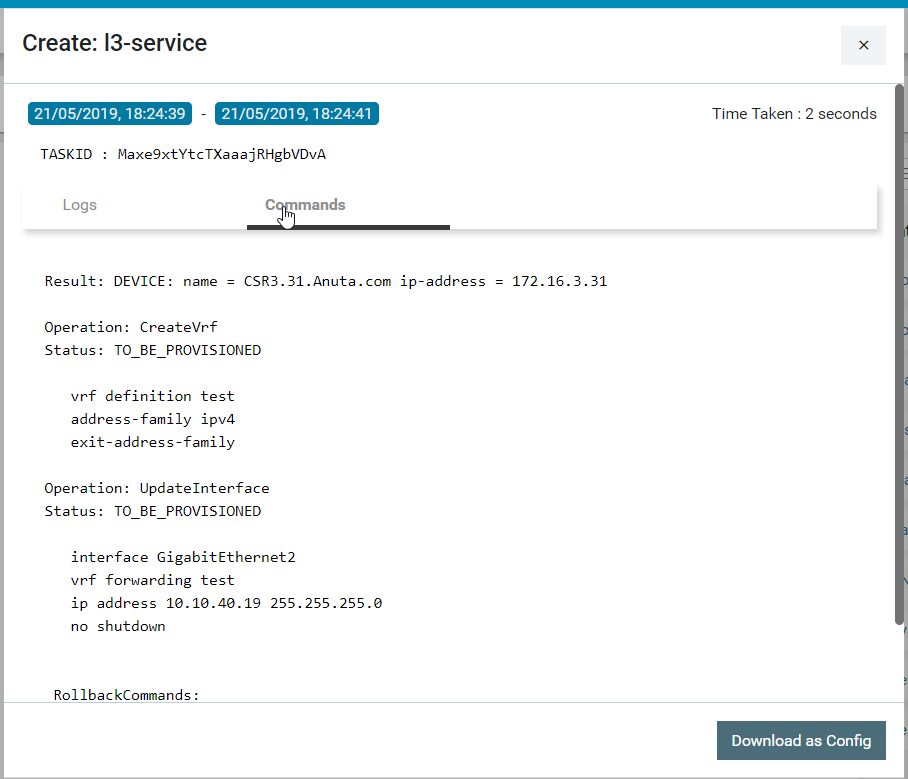

Consider creating an instance of the “L3 Services” service in ATOM. The schema of the service is defined in a .yang file (in this case, l3service.yang), which is contained in the model folder of the corresponding service package (the l3 service package) uploaded to ATOM as a plugin.

- Navigate to Administration > Plugins & Extensions to verify that the l3 service package has been uploaded

- Navigate to Automation > Services > l3-services and click Add

Deploying BSD services in ATOM

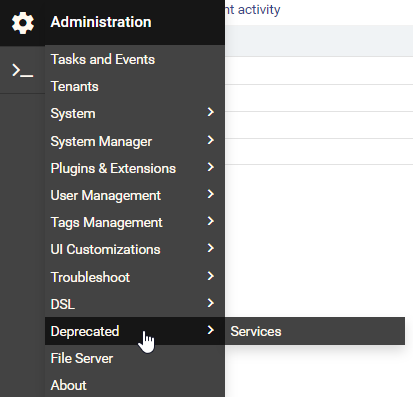

Consider deploying the “Application Profiles” service in Brownfield deployment mode:

- Obtain the appropriate service package from Anuta Networks

- Upload the service package into ATOM.

- Navigate to Administration > Deprecated > Services to view the uploaded service package.

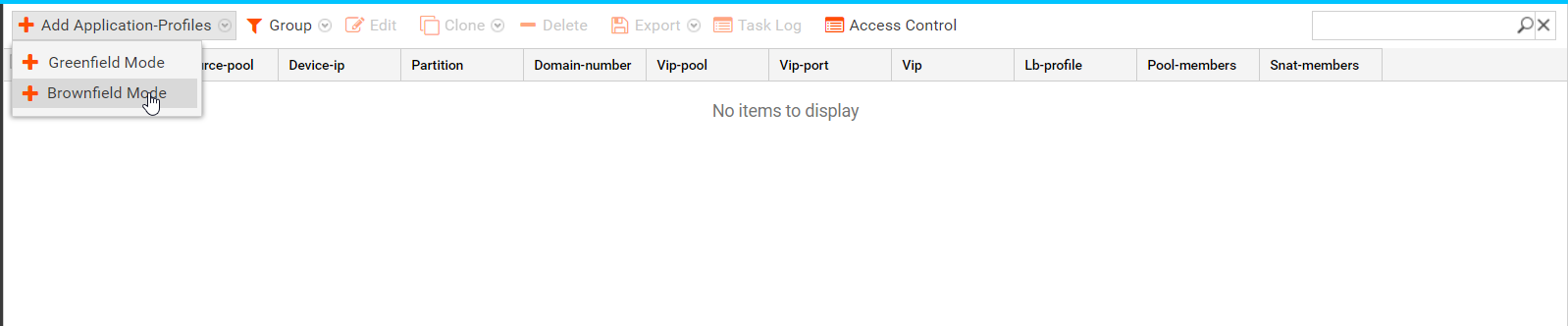

- In the Add Application Profiles pane, select the Brownfield Mode as shown below:

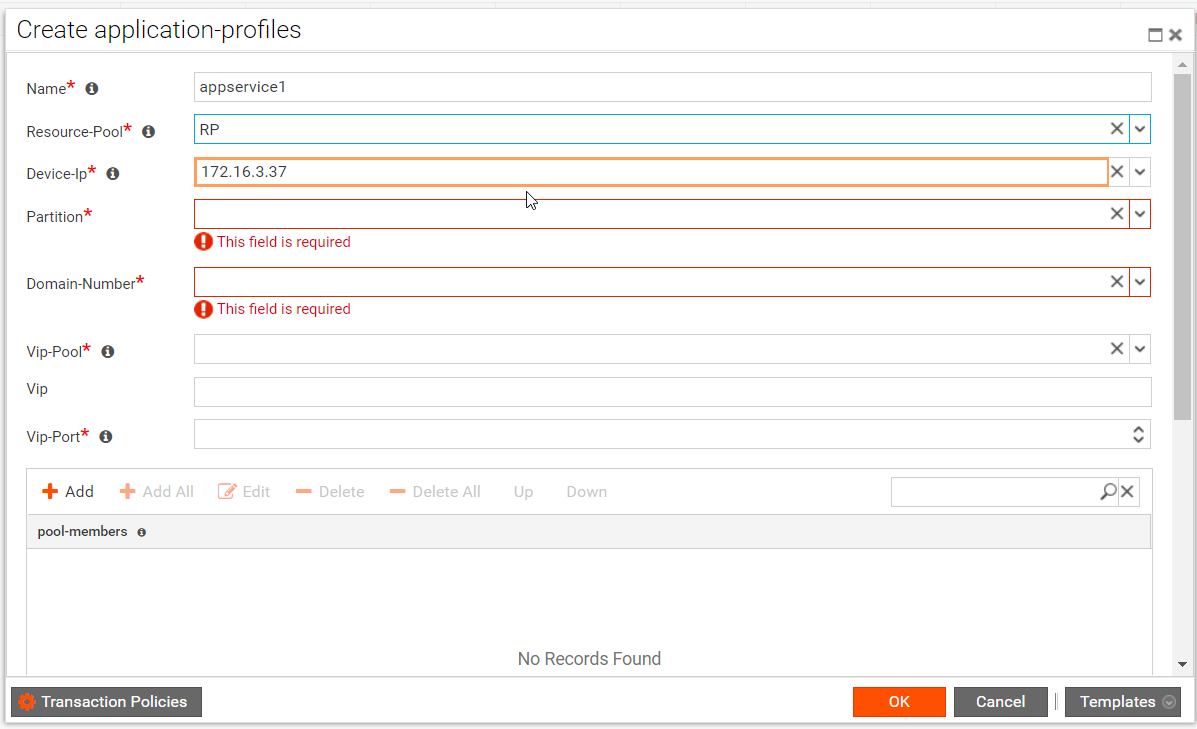

- In the Create Application Services form, all the values discovered from the device are populated in the parameters shown below:

Enter values in the fields that have been marked mandatory.

| Note: The borders of the fields that contain auto-discovered values are coloured brown. |

- Click OK after selecting the requisite values in each of the fields.

Because the service is deployed in Brownfield mode, the commands generated by ATOM are not pushed to the device.

Transactional control at the Service level

For every service, the admin can control whether the corresponding configurations generated by ATOM are pushed to the device. This gives the admin granular control: the configurations of some services can be sent to the devices while others are retained only in ATOM.

- Navigate to Automation > Services

- Click the service for which you want to configure transaction policies.

- You can either enter the values of the fields or import the values from a template to fill the form.

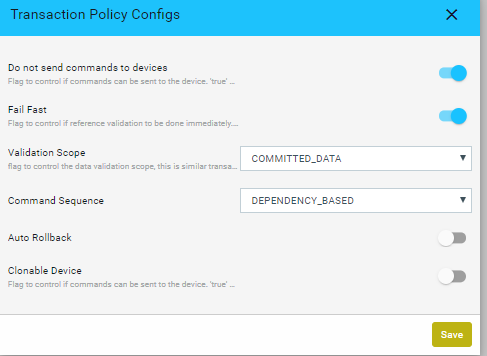

- In the Create service template, click Transaction Policies > Transaction Policy Configs to set the controls at the transaction level:

| Option | Type | Description |
|---|---|---|
| do-not-send-commands-to-devices | boolean | Controls whether commands are sent to the devices. Select this option to commit the data to the ATOM datastore without applying any configuration changes on the devices. Useful for testing, or for creating services in a brownfield environment. Note: The value set for this option in the transaction policy overrides the value at the global level (in General Settings). |
| fail-fast | boolean | Controls whether reference validation is done immediately. False: defers the validation until after the ‘commit-task’ state of the transaction. |
| validation-scope-type | enum | Controls whether the data validation scope spans transactions. This flag is similar to isolation control in a traditional RDBMS, but is limited to data validation. Allowed values: COMMITTED_DATA: validation uses only committed data, so the current transaction does not see changes made by other parallel transactions. UNCOMMITTED_DATA: validation uses uncommitted data, so the current transaction sees changes made by other parallel transactions. |
| Command Sequence Policy | enum | Controls whether the generated commands are ordered according to the dependencies specified in the model. Depending on the selected value, the generated commands are either re-ordered based on the dependencies specified in the data model, or left in the order of the requests sent from the client (no re-ordering). |
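To make the difference between the two validation-scope-type values concrete, here is a minimal Python sketch of which objects a transaction can reference during validation. It assumes a simple set-of-keys model of the datastore; `visible_objects`, `reference_is_valid` and the example keys are hypothetical and do not reflect how ATOM stores data. It also assumes that a transaction always sees its own pending changes.

```python
from enum import Enum
from typing import Iterable, Set


class ValidationScope(Enum):
    COMMITTED_DATA = "COMMITTED_DATA"
    UNCOMMITTED_DATA = "UNCOMMITTED_DATA"


def visible_objects(committed: Set[str], own_changes: Set[str],
                    parallel_uncommitted: Iterable[Set[str]],
                    scope: ValidationScope) -> Set[str]:
    """Objects a transaction may reference during validation under the given scope."""
    # Assumption: a transaction always sees committed data plus its own pending changes.
    visible = committed | own_changes
    if scope is ValidationScope.UNCOMMITTED_DATA:
        # Pending changes from other parallel transactions also become visible.
        for changes in parallel_uncommitted:
            visible |= changes
    return visible


def reference_is_valid(target: str, committed: Set[str], own_changes: Set[str],
                       parallel_uncommitted: Iterable[Set[str]],
                       scope: ValidationScope) -> bool:
    """True if the referenced object exists in the data visible to this transaction."""
    return target in visible_objects(committed, own_changes, parallel_uncommitted, scope)
```

With COMMITTED_DATA, a reference to an object that another transaction has created but not yet committed fails validation; with UNCOMMITTED_DATA, the same reference passes.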

Cross-verify the values of the following options before creating each service:

- Fail Fast

- Validation Scope

- Command Sequence Policy

- Click the task created for the service to view the commands generated by ATOM in the Task Details.

In the Task Details, click Commands to view the commands generated by ATOM. If the do-not-send-commands-to-devices option is selected, the commands are not sent to the device and their status is set to “TO_BE_PROVISIONED”.

The generated commands can be downloaded and verified with the expected configurations for that service.
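The precedence rule from the table (a transaction-policy value overrides the global General Settings value) and the resulting TO_BE_PROVISIONED status can be sketched as follows. This is a hypothetical Python model, not an ATOM API. Representing an unset transaction value as `None`, so that the global value applies, is an assumption, and the example commands are made up.

```python
from typing import List, Optional, Sequence, Tuple


def effective_do_not_send(global_setting: bool, transaction_setting: Optional[bool]) -> bool:
    """A value set in the transaction policy overrides the global (General Settings) value."""
    return global_setting if transaction_setting is None else transaction_setting


def plan_commands(commands: Sequence[str], global_setting: bool,
                  transaction_setting: Optional[bool]) -> Tuple[List[str], List[Tuple[str, str]]]:
    """Split generated commands into (to_push, held); held commands carry TO_BE_PROVISIONED."""
    if effective_do_not_send(global_setting, transaction_setting):
        # Data is committed to the ATOM datastore only; nothing is pushed to the device.
        return [], [(cmd, "TO_BE_PROVISIONED") for cmd in commands]
    return list(commands), []
```

Comparing the held commands against the expected configuration mirrors the download-and-verify step described above.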

Cancelling an ordered Service

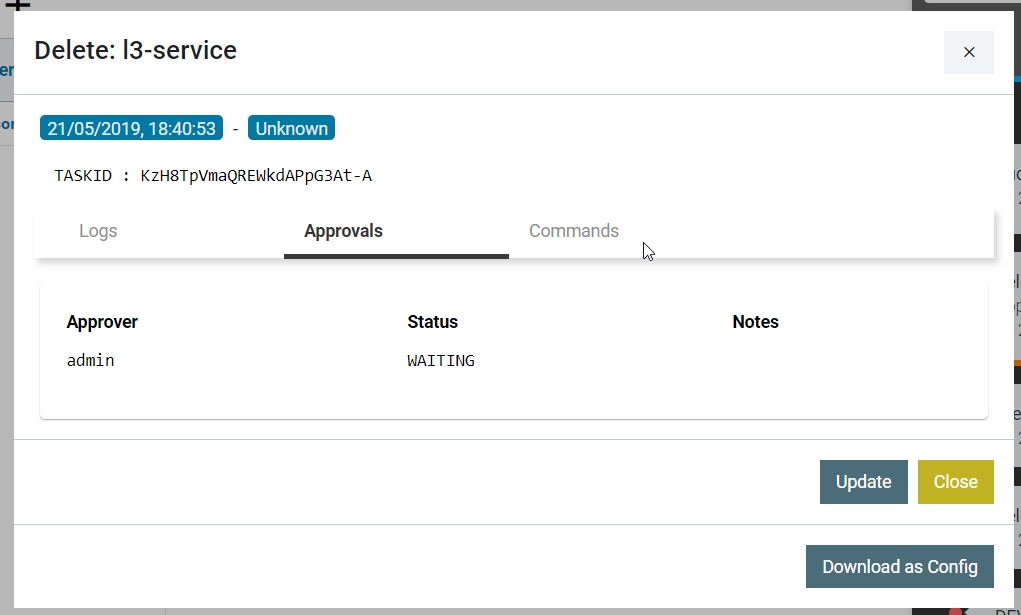

- Select the service and click Delete.

- In the Confirmation window, before clicking Yes, click Transaction Policies.

- Select the “do-not-send-commands-to-devices” option in the policy

In the corresponding task, click Commands in the Task Details pane to view the commands generated by ATOM. If the do-not-send-commands-to-devices option is selected in the transaction policy config, the commands are not sent to the device and their status is set to “TO_BE_PROVISIONED”.

| IMPORTANT: Set this option to match the value used when the service was created; otherwise, the service deletion might fail. |

Service Approvals

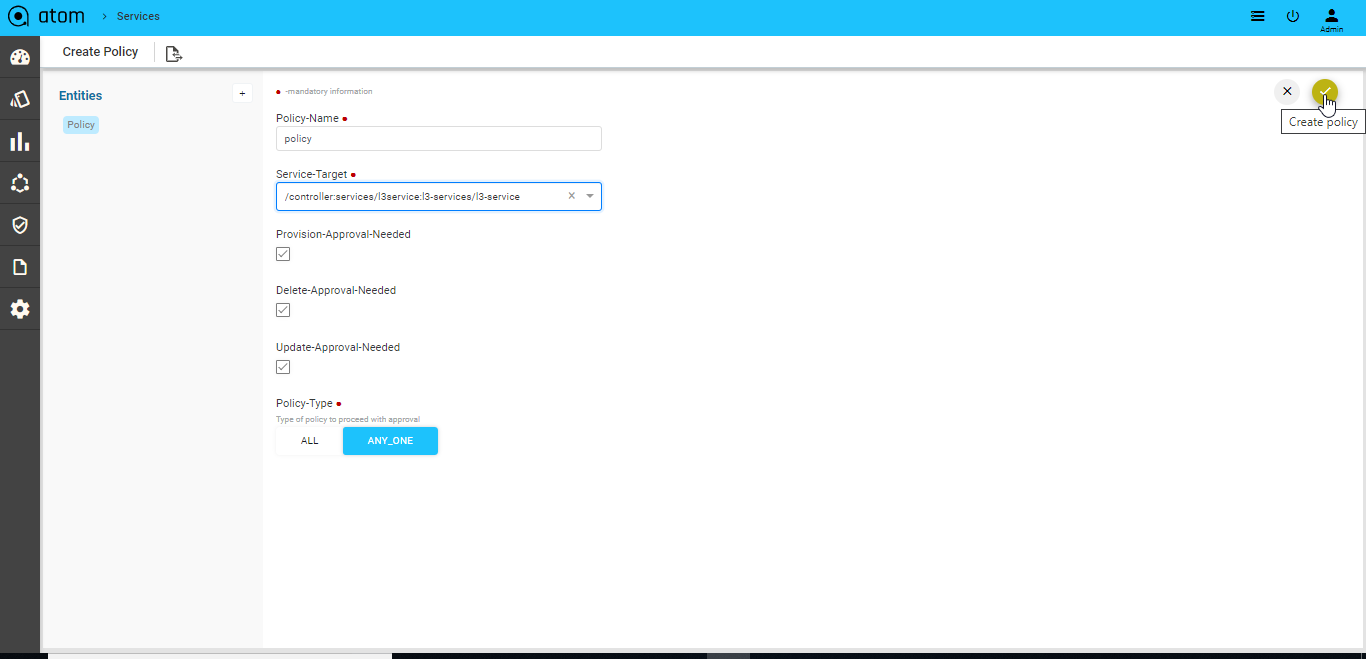

You can create policies for approving the creation, deletion or update of service configurations on devices. In addition, you can add approvers who must approve the operations defined in the service approval policy. Apart from services, you can set approvers for any operation on any entity in ATOM.

- Navigate to Automation > Services > Approvals

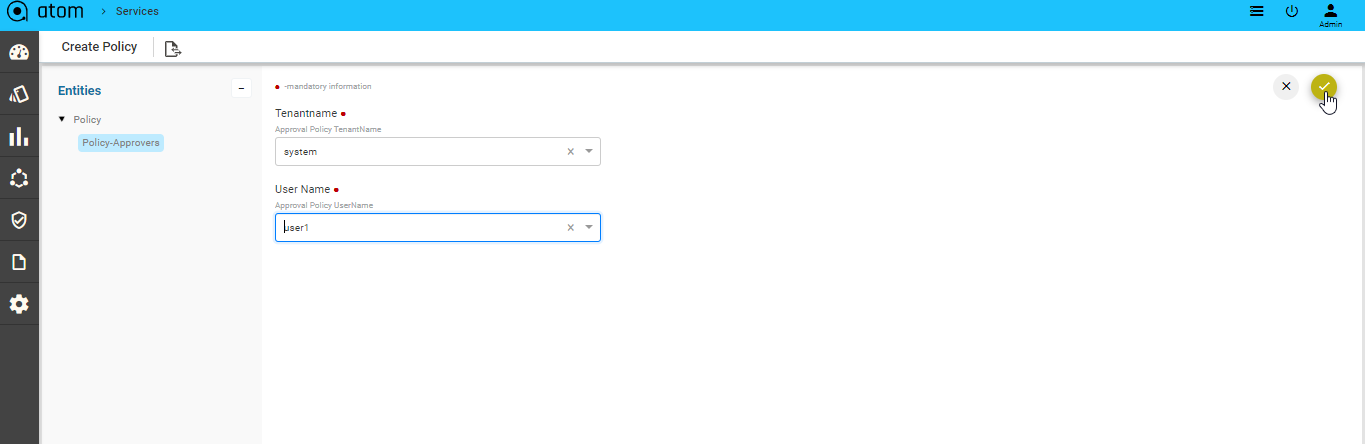

- In the right pane, click Add Policy and enter the following details:

- Policy Name: Enter a name for the approval policy

- Service Target: Enter the path for the object in the data model tree for which approval is required.

For example: If the object of interest is a service, enter the path of the service.

/controller:services/l3service:l3-services, which means that the operations of interest on this l3-services service are sent to the approver or approvers for review before being pushed to the device.

- Provision Approval Needed: Check this option if the user selected as the approver should approve the configurations before they are pushed to the respective device or devices.

- Delete Approval Needed: Check this option if the approver should approve the configurations that are required for deletion of the service configurations from the device or devices.

- Update Approval Needed: Check this option if the approver should approve the configurations that are required to update the service configurations on the device or devices.

- Policy Type: This option enables you to set if approvals are required from a single approver or multiple approvers.

- ALL: The task generated as a result of a service operation awaits the approval of all the approvers added for that service.

For example, if creating a ‘customer’ requires the approval of two approvers, ‘admin’ and ‘User1’, the task completes successfully only after both approvers have approved it.

- ANY_ONE: The task generated as a result of a service operation awaits the approval of any of the multiple approvers added for that service.

| NOTE: All changes made in the service approval policy will come into effect only for the subsequent service instantiations and will not affect the ongoing service operations. |

- Navigate to the left pane to add the tenant users who should approve the configurations generated by ATOM for any of the service operations (create, delete or update).

| NOTE: Do not edit the name of the user (UserName) who has been added as an approver in the service policy. |
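The ALL and ANY_ONE policy types, together with the Service Target path, can be modelled with a short Python sketch. `ApprovalPolicy` and its methods are hypothetical, not ATOM code. The sketch assumes that a policy covers its target path and every path beneath it, and that Provision Approval Needed corresponds to the create operation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Set


class PolicyType(Enum):
    ALL = "ALL"
    ANY_ONE = "ANY_ONE"


@dataclass
class ApprovalPolicy:
    """Hypothetical in-memory model of a service approval policy."""
    name: str
    service_target: str          # e.g. "/controller:services/l3service:l3-services"
    provision_approval: bool     # assumed to map to the "create" operation
    delete_approval: bool
    update_approval: bool
    policy_type: PolicyType
    approvers: Set[str]

    def applies_to(self, path: str, operation: str) -> bool:
        # Assumption: the policy covers the target path and anything beneath it.
        under_target = path == self.service_target or path.startswith(self.service_target + "/")
        needed = {"create": self.provision_approval,
                  "delete": self.delete_approval,
                  "update": self.update_approval}.get(operation, False)
        return under_target and needed

    def is_approved(self, approvals: Set[str]) -> bool:
        granted = approvals & self.approvers  # ignore approvals from non-approvers
        if self.policy_type is PolicyType.ALL:
            return granted == self.approvers
        return bool(granted)                  # ANY_ONE: a single approver suffices
```

With approvers `{"admin", "User1"}`, an ALL policy is satisfied only once both users approve, while an ANY_ONE policy is satisfied by either of them.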

Agents

The ATOM Agent handles all device communication and communicates with other ATOM components, either remotely or locally, depending on the deployment mode.

Each ATOM Agent manages multiple network devices, and can be assigned multiple CIDR blocks that define the devices it manages. The agent communicates with, collects data from, and monitors the network devices in your infrastructure using standard protocols. The collected data is encrypted and sent to the Anuta ATOM Server over an outgoing SSL connection.

One agent can typically manage hundreds of devices. However, this depends on factors such as the device type, the data collected, the size of the data and the collection frequency. Check the ATOM Agent hardware requirements for further information.

ATOM Agent deployment is discussed in detail in the “ATOM Agent Deployment Guide”.
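As an illustration of CIDR-based assignment, the Python sketch below picks the agent responsible for a device IP using the standard `ipaddress` module. The agent names and CIDR blocks are made up, and resolving overlapping blocks by longest-prefix match is an assumption, not documented ATOM behavior.

```python
import ipaddress
from typing import Dict, List, Optional, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


def build_agent_map(assignments: Dict[str, List[str]]) -> Dict[str, List[Network]]:
    """Parse each agent's CIDR blocks into network objects (raises ValueError on bad CIDRs)."""
    return {agent: [ipaddress.ip_network(c) for c in cidrs]
            for agent, cidrs in assignments.items()}


def agent_for_device(device_ip: str, agent_map: Dict[str, List[Network]]) -> Optional[str]:
    """Return the agent whose CIDR block most specifically contains the device IP."""
    ip = ipaddress.ip_address(device_ip)
    best_agent, best_prefix = None, -1
    for agent, networks in agent_map.items():
        for net in networks:
            # Membership is simply False across IPv4/IPv6, so mixed maps are safe.
            if ip in net and net.prefixlen > best_prefix:
                best_agent, best_prefix = agent, net.prefixlen
    return best_agent
```

A device that falls outside every assigned block returns `None`, meaning no agent would manage it under this model.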

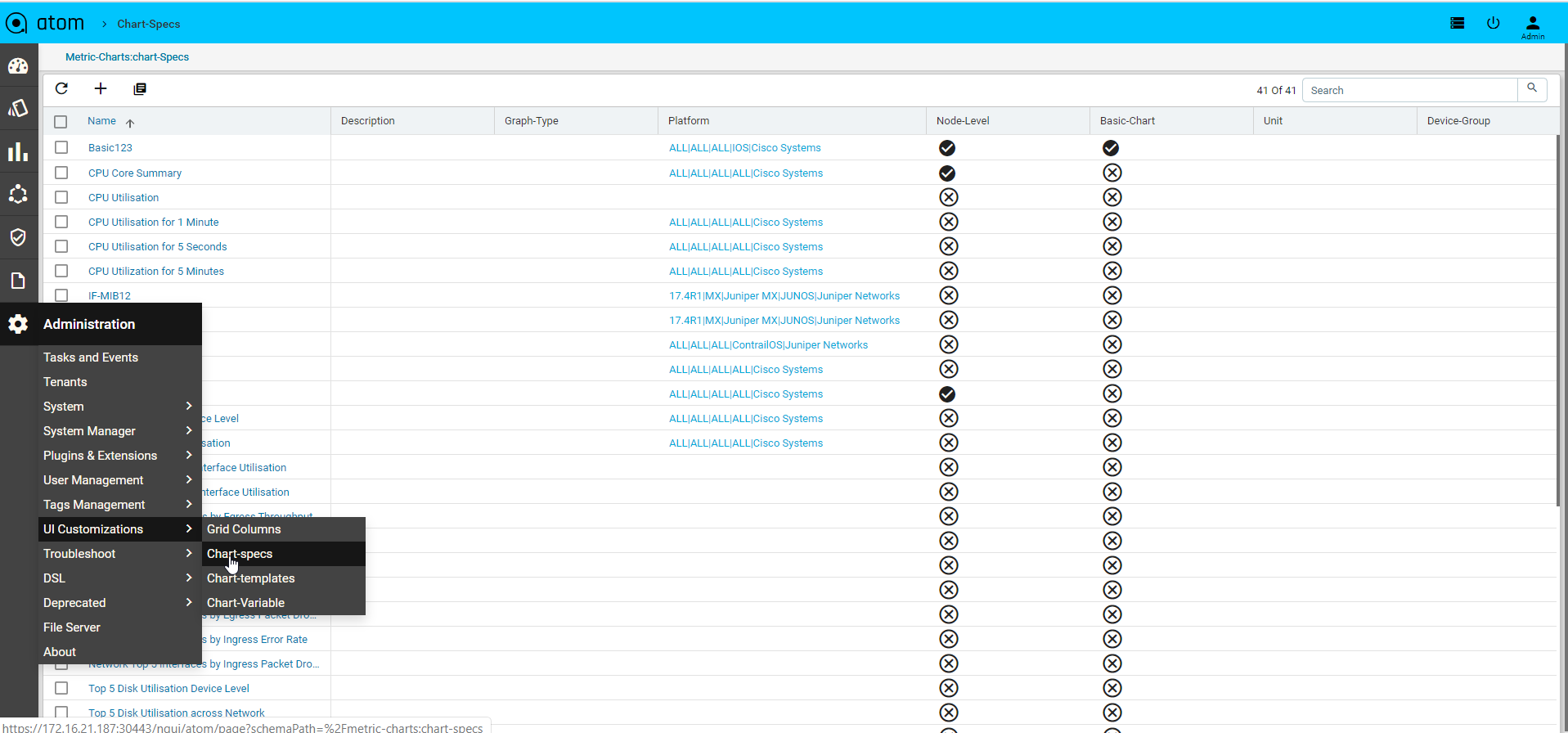

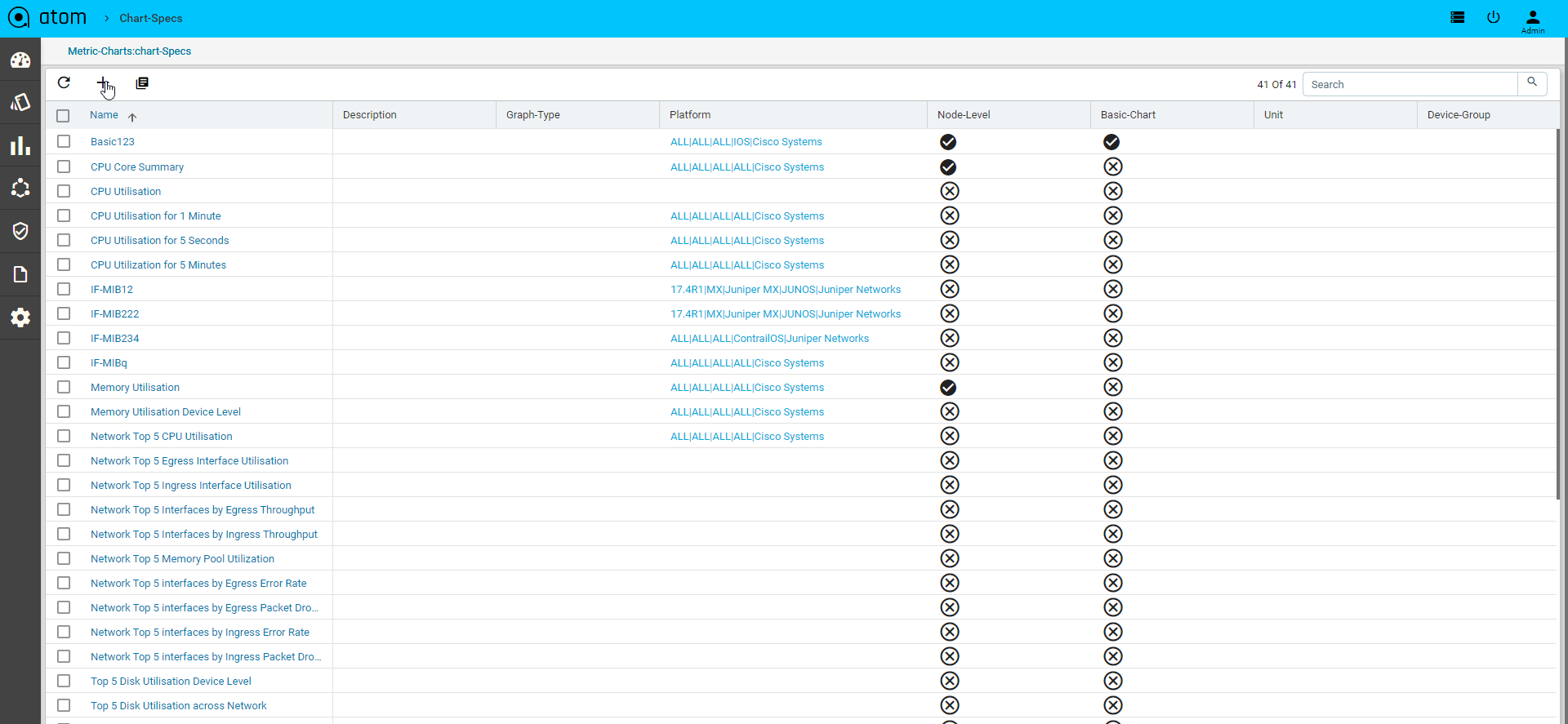

Administration

As an administrator, you can manage changes in ATOM that affect the behavior of the system and have a global effect on all ATOM components. The Administration menu includes the following:

- “Tasks” and “Events”

- “Tenants”

- “System“

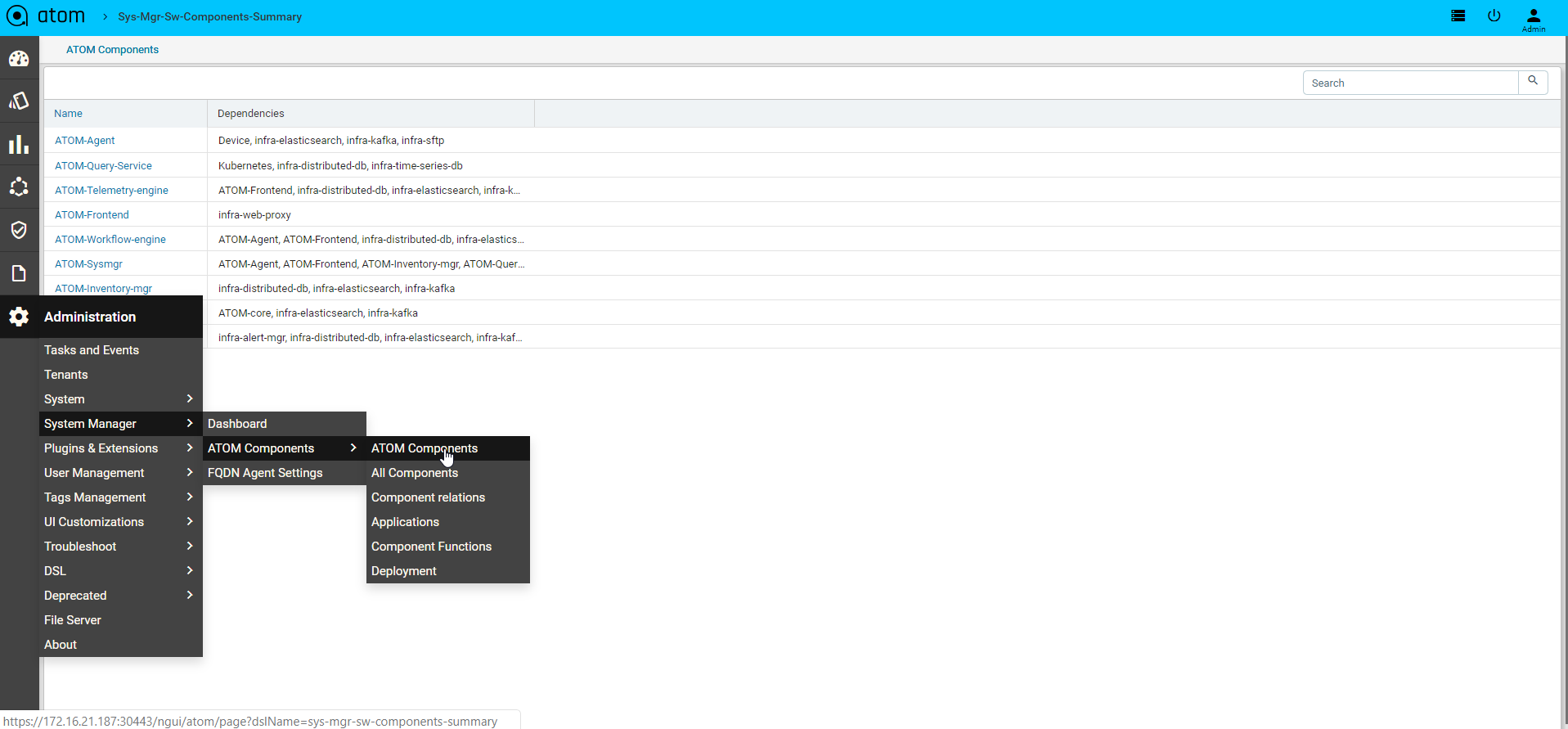

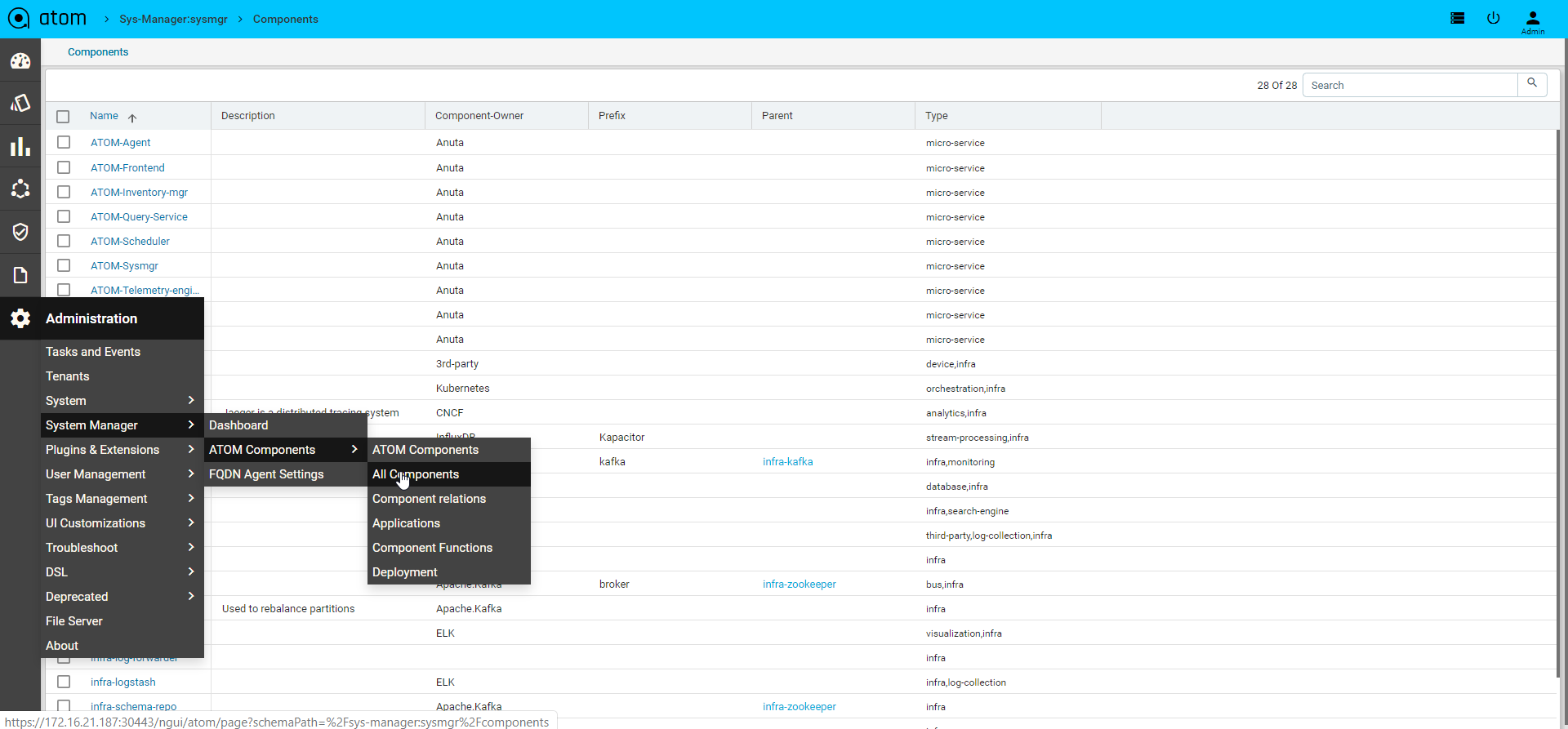

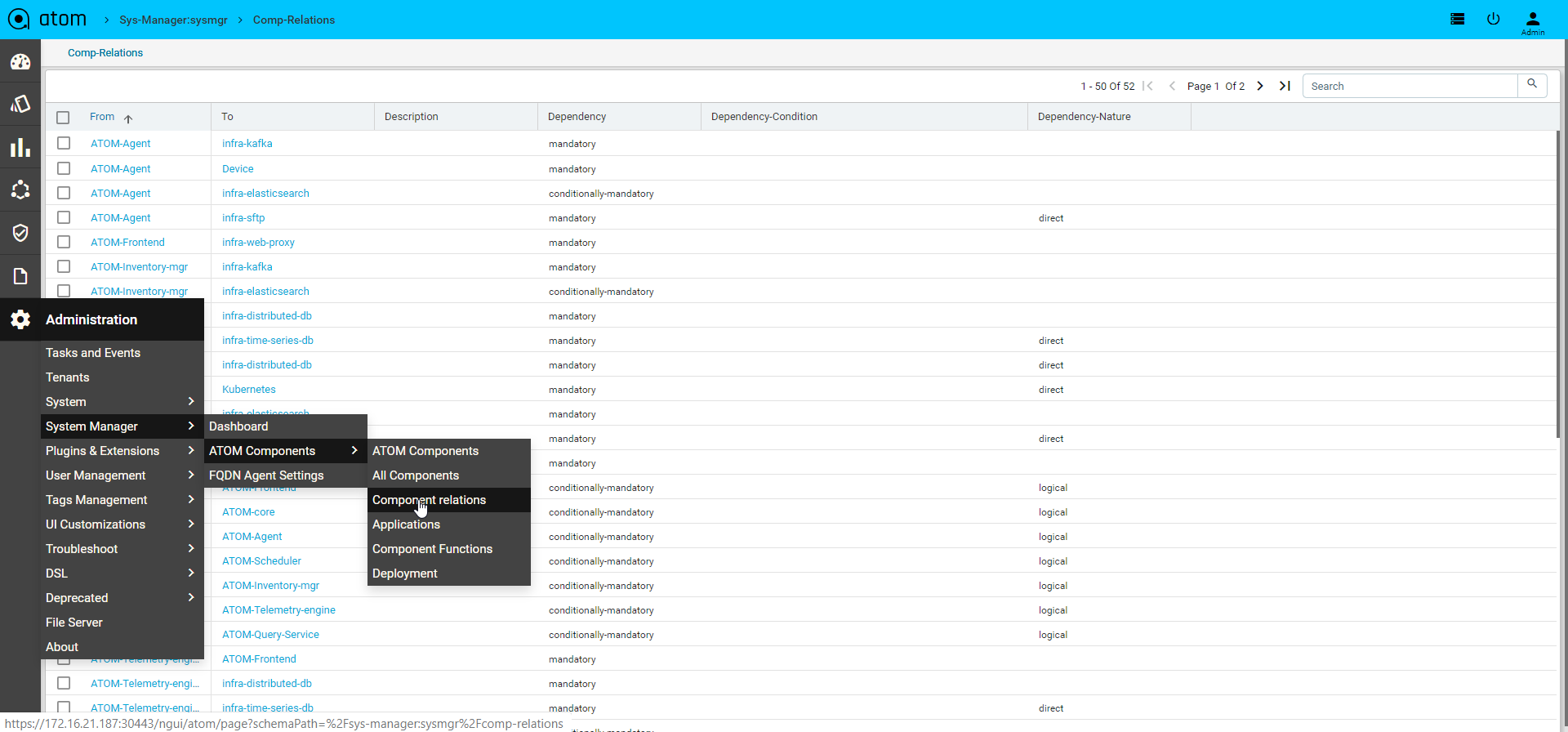

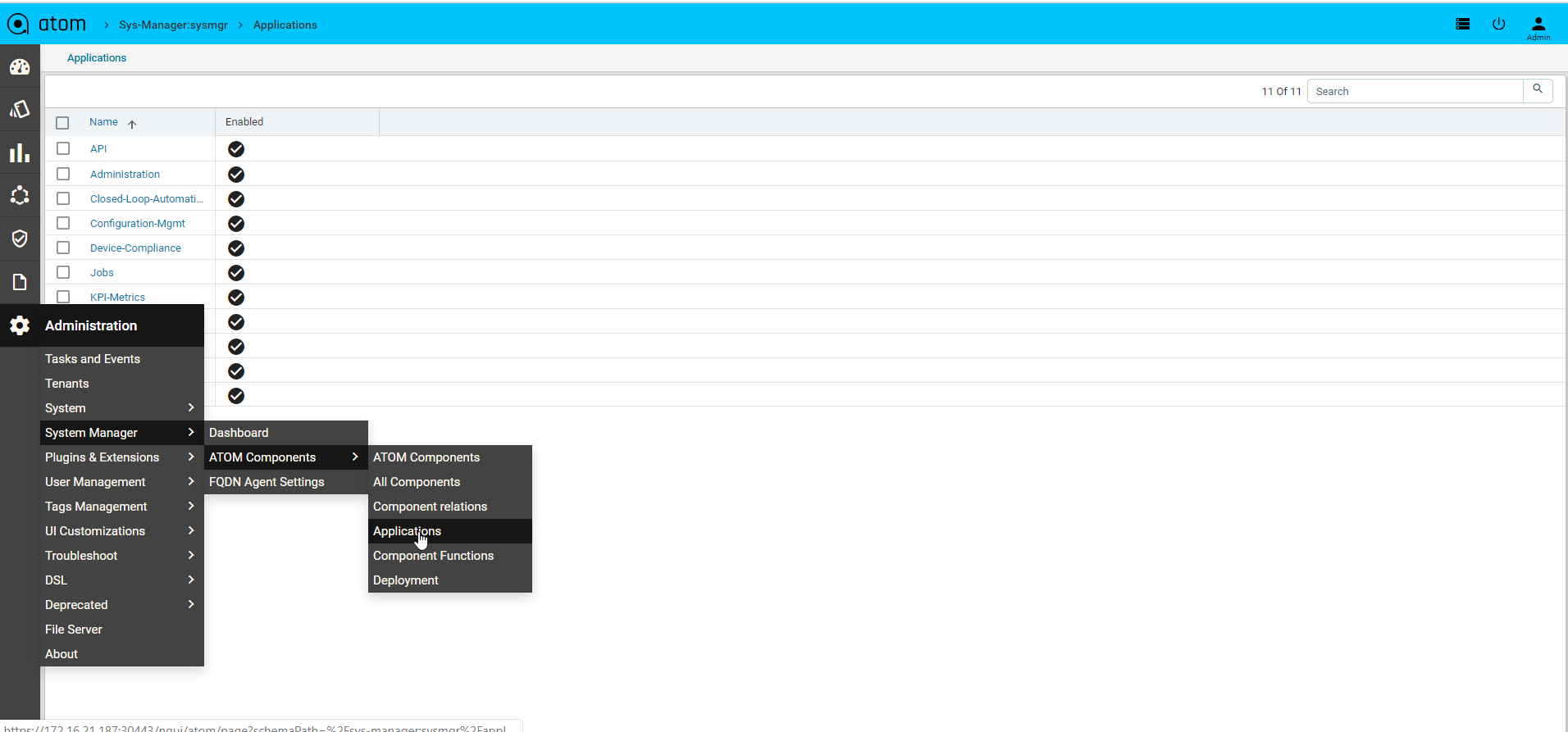

- “System Manager”

- “Plugins and Extensions”

- “User Management“

- “Tag management”

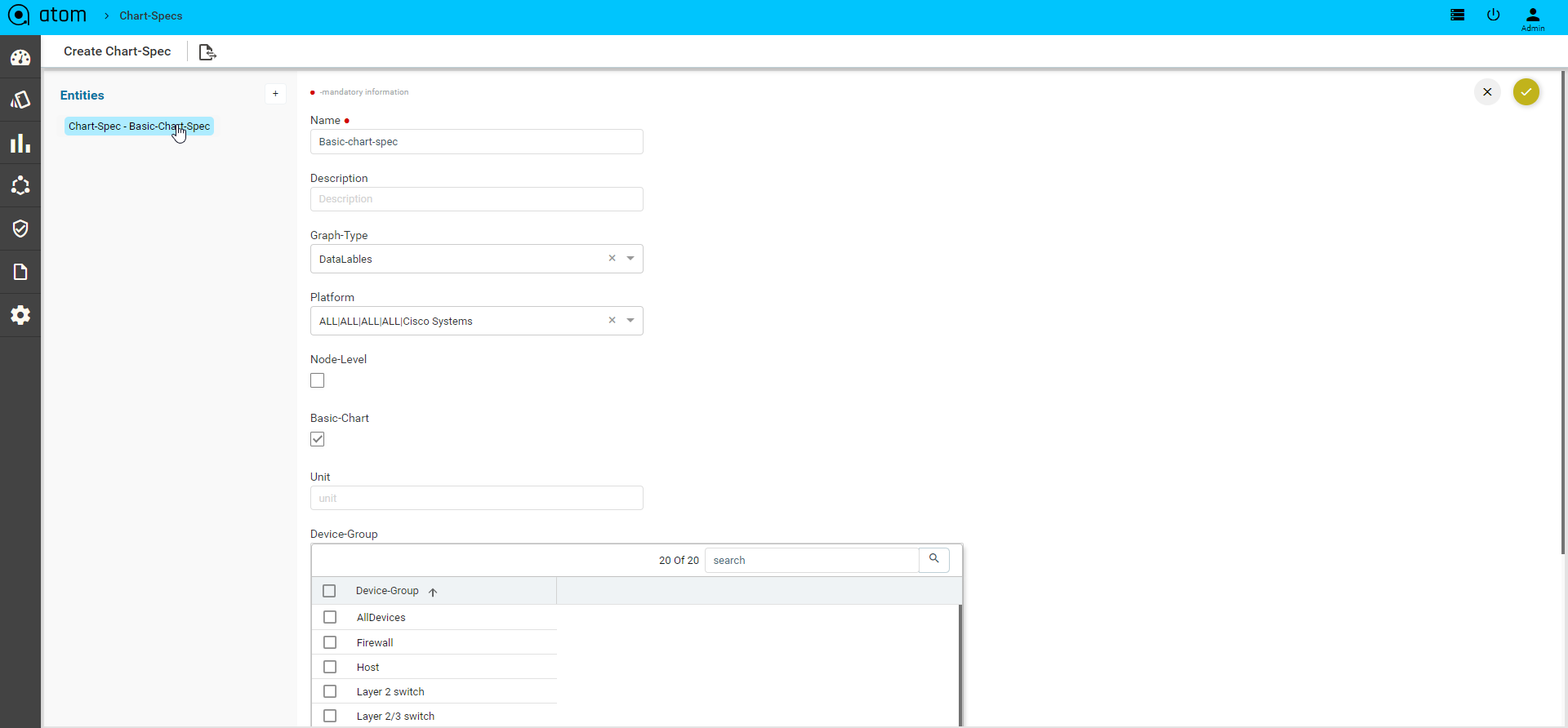

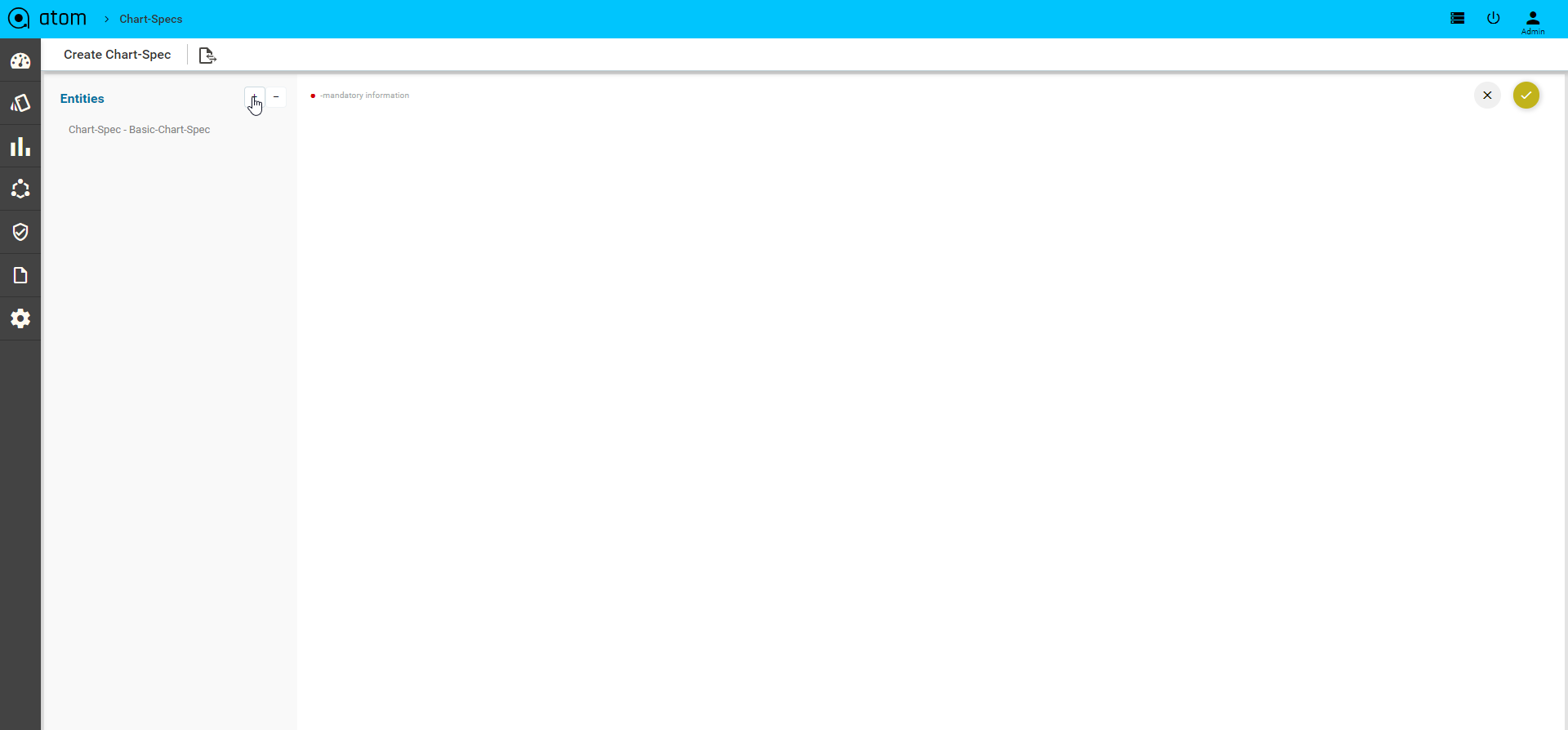

- “UI Customizations”

- “Troubleshoot”

- “DSL”

- “Deprecated”

- “File Server”

- “About”

Tasks & Events

You can view any activity (a “task”) that is executed in ATOM as a result of a user-initiated action. Tasks are generated during operations such as:

- Adding or Deleting Devices

- Executing Jobs

- Validating the resource pool and running the Inventory

- Configuration out-of-sync between the device and ATOM

- Creating or Deleting Networks

- Select any Task and click Details to view the configurations associated with that task.

- You can search for any Task by entering a query in the Search field.

- Select any task and click Cancel to cancel the task.

- Select any task and click Download Log to download the system-related logs and messages.

For example, to query all the Create operations executed so far, enter “Create” in the Search field. All the Create tasks triggered by various operations are displayed.

- Click Retry when the creation of a service fails, either because of insufficient operational resources or during provisioning.

- Click Task Log to view the system-related logs and messages.

Events

Events represent an important part of an operation or a change in the state of an object in ATOM. For example, an event is generated when a user logs in to ATOM. Login attempts to a device using any of the transport types are also displayed.

Select a task and click Details to view the schema of the service, click Commands to view the configurations associated with the service generated by ATOM.

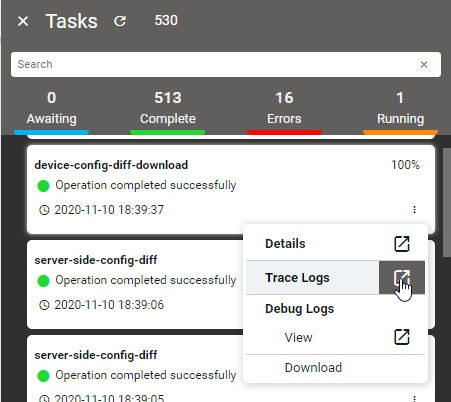

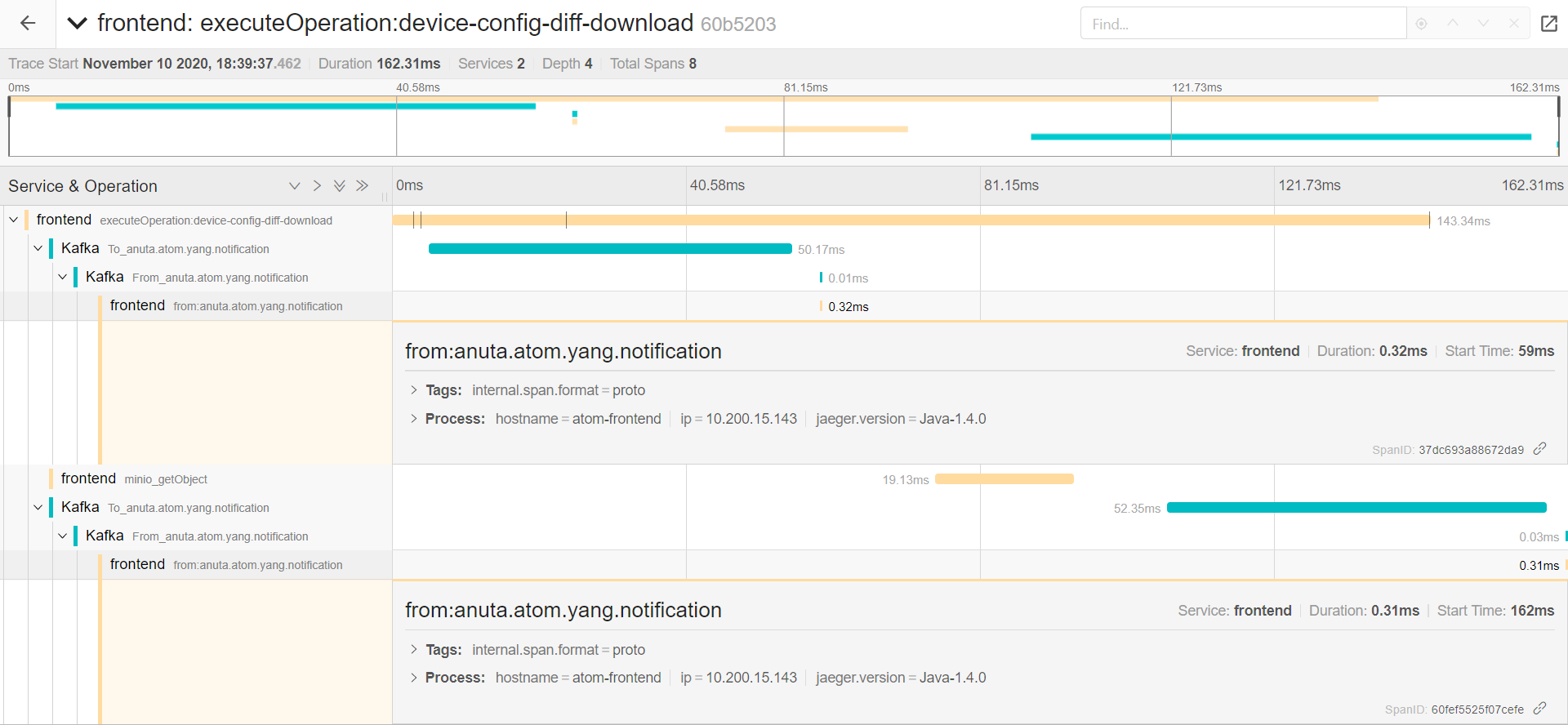

TraceLogs

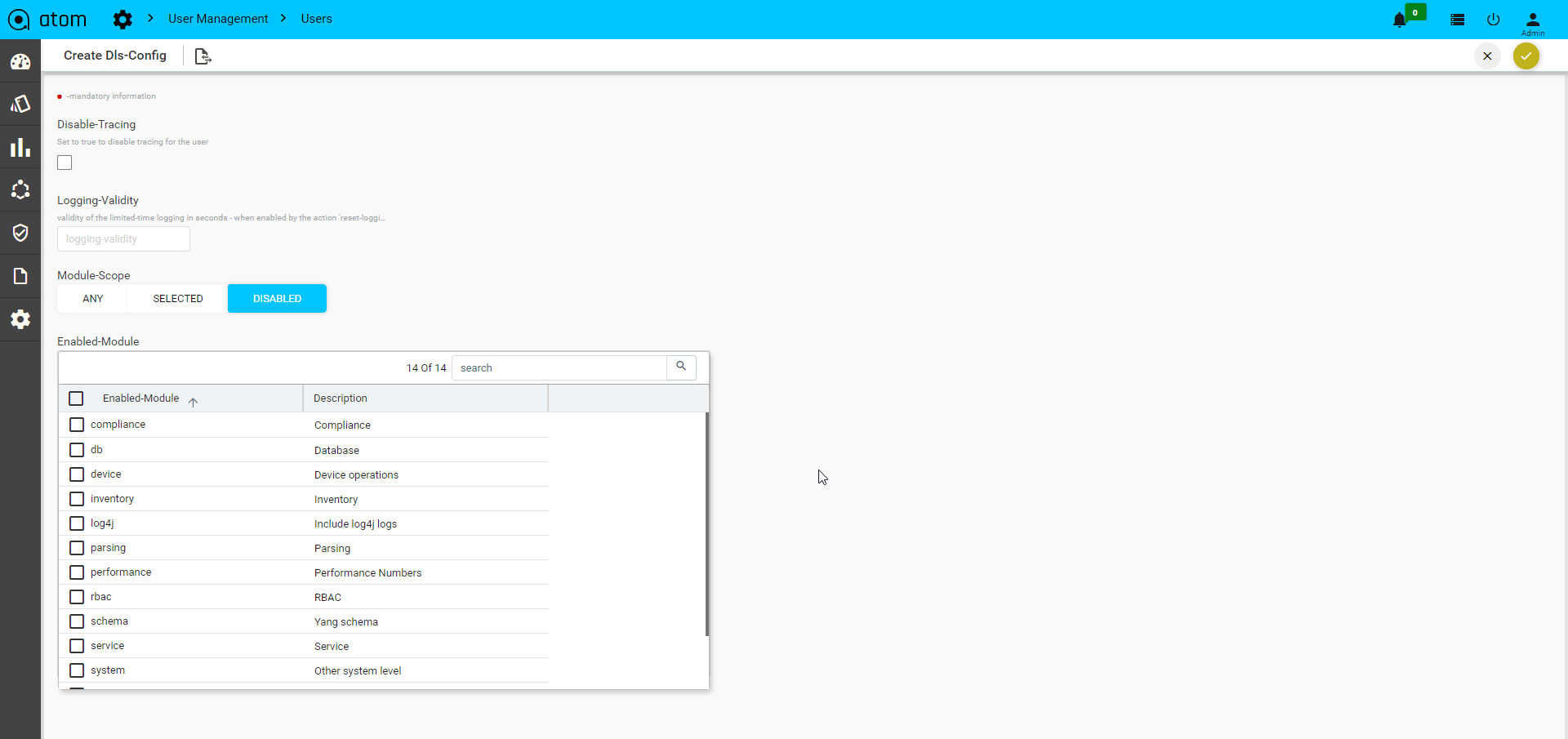

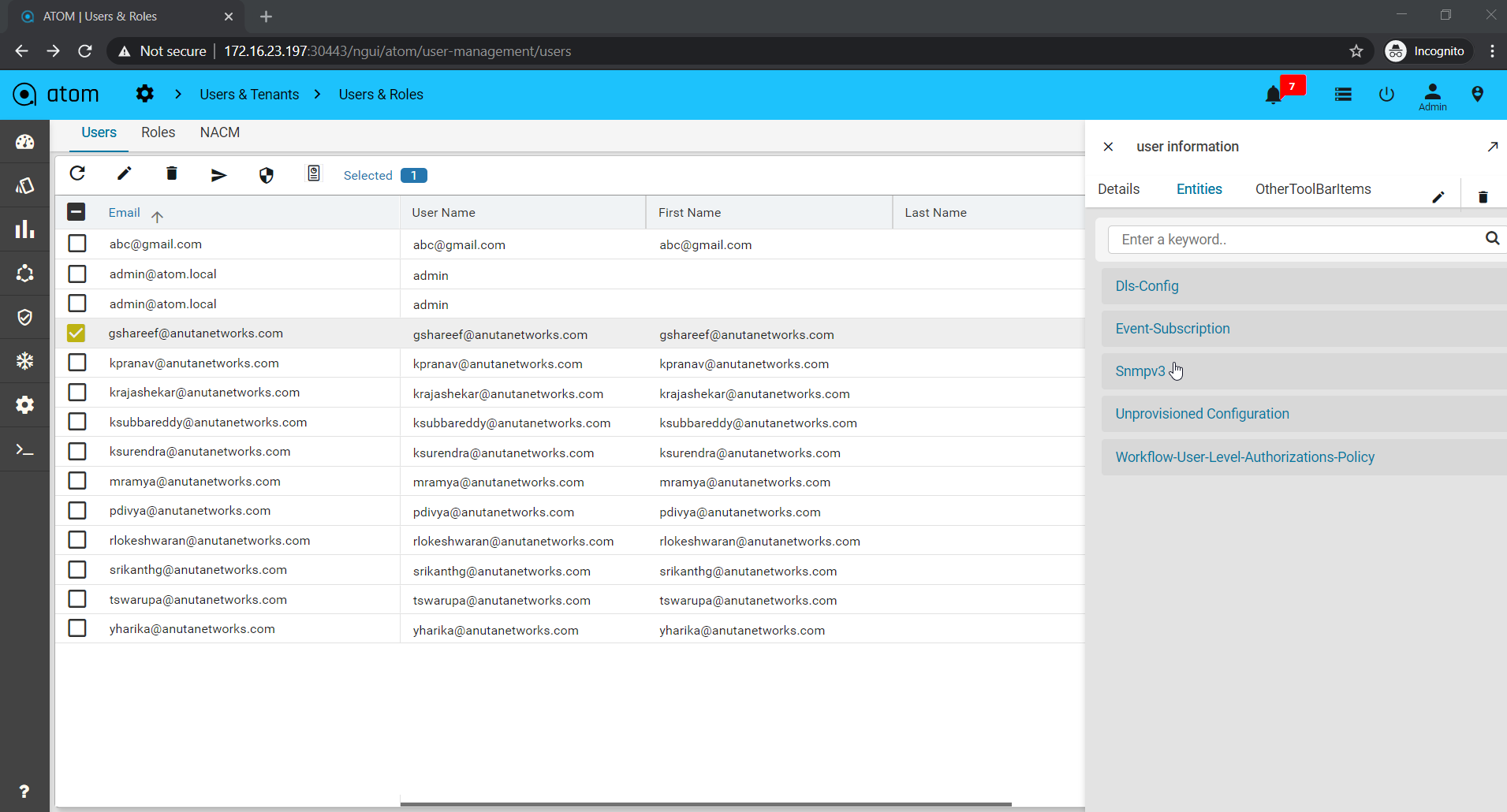

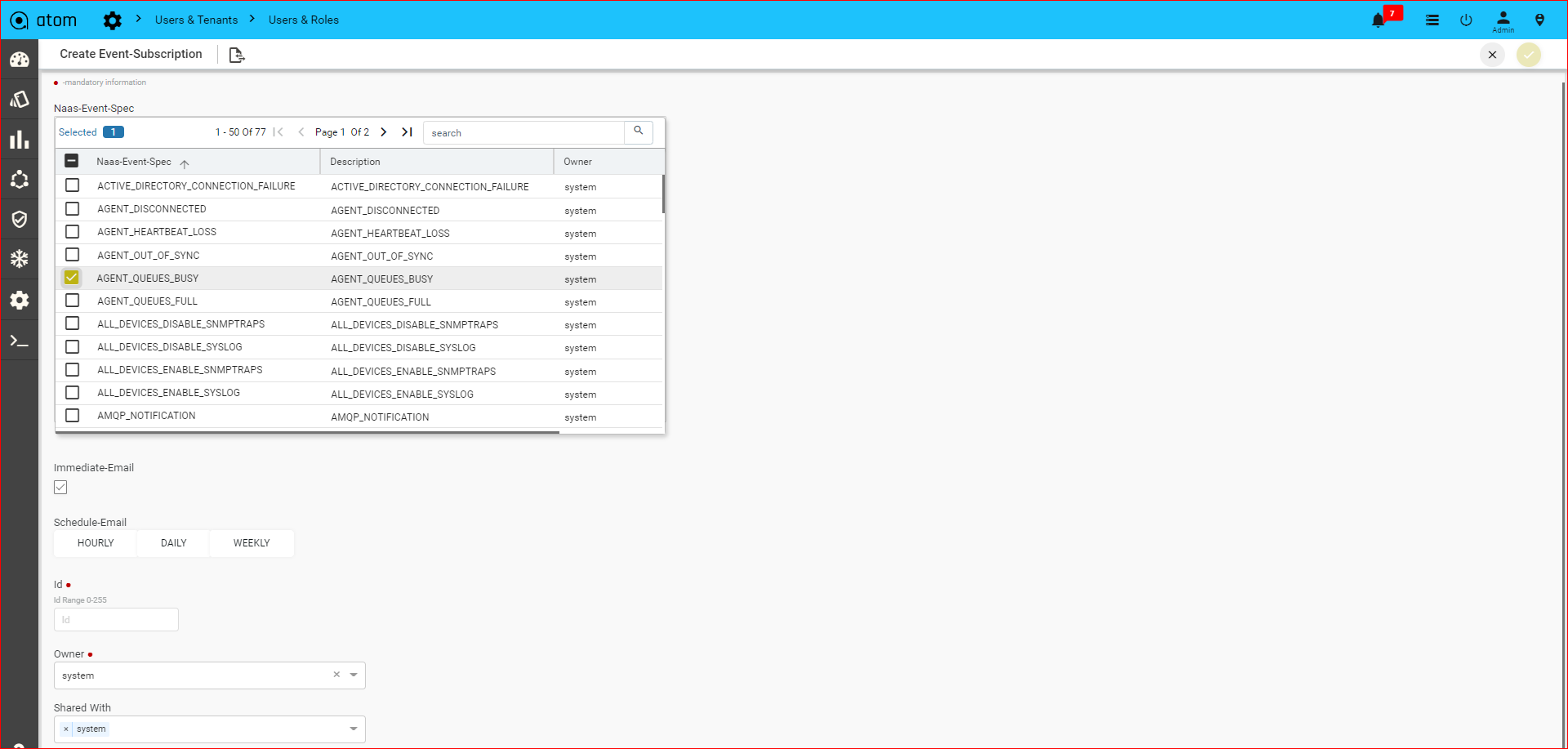

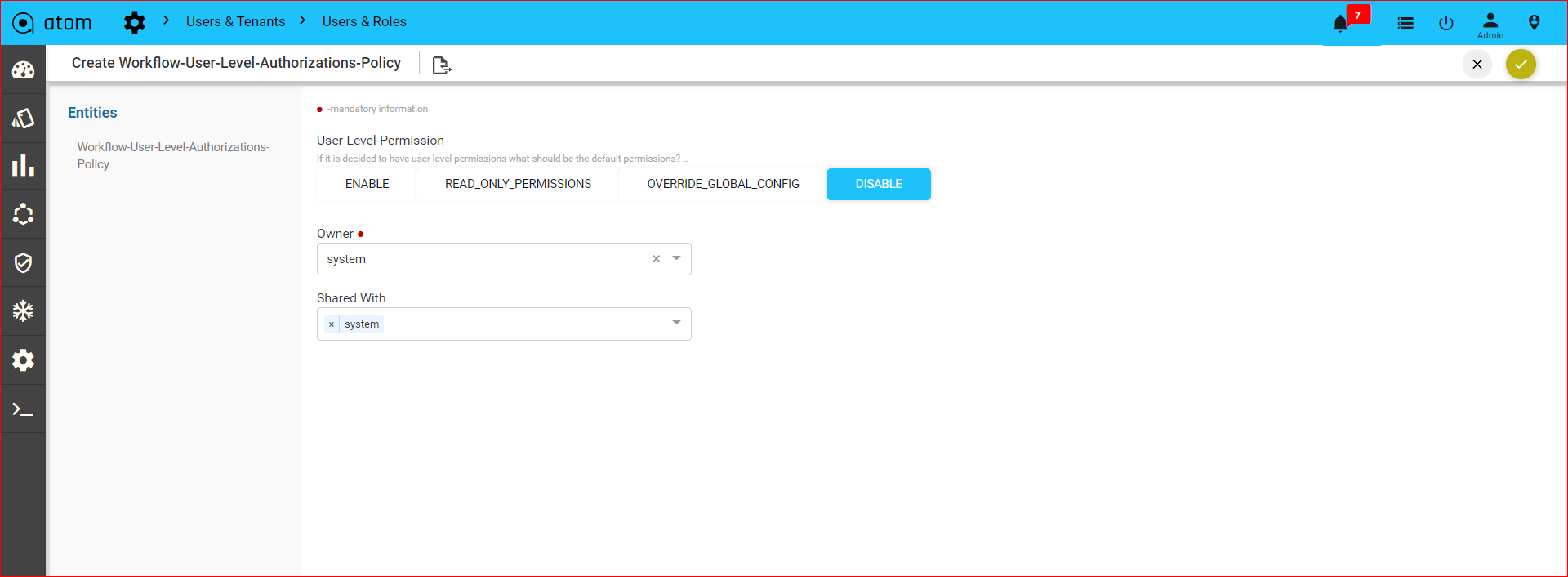

Trace Logs enable end-to-end distributed tracing of a task. Users can monitor the performance of a task, optimise its latency, and perform root cause analysis. The Trace Logs option is available in the Tasks UI and in Tasks and Events. Select any task and click Trace Logs to view the distributed trace of the task. Trace logs are visualized in the Jaeger UI, which shows the complete life cycle of the task and all the components involved in it.

To enable trace logs, navigate to Administration > User Management > Users, select a user, and then select Entities > DLS-Config:

- Disable-Tracing: Set true to disable tracing for the user.

- Logging-Validity: Validity of the limited-time logging in seconds.

- Module-Scope: The modules to which tracing applies. Any: enables tracing for any module. Selected: enables trace logs only for the selected modules. Disabled: the selected modules are excluded from tracing.

| Note: When you select Trace Logs from the Tasks UI, the trace opens in a new tab in the Jaeger UI using SSO URLs. When you select Trace Logs from Tasks and Events, it opens in a new window within the ATOM application. To enable trace logs, the jaeger-tracing pods must be up in the deployment. |
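The three DLS-Config settings can be read as a single decision: is a given module traced for this user right now? The Python sketch below is a hypothetical model, not ATOM code. It interprets Module-Scope as Any (trace every module), Selected (trace only the listed modules) and Disabled (trace everything except the listed modules), and treats a Logging-Validity of 0 as no expiry. Both interpretations are assumptions, as are the module names in the example.

```python
import time
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional, Set


class ModuleScope(Enum):
    ANY = "Any"
    SELECTED = "Selected"
    DISABLED = "Disabled"


@dataclass
class TraceConfig:
    """Hypothetical mirror of a user's DLS-Config entries."""
    disable_tracing: bool = False
    logging_validity_s: int = 0            # assumption: 0 means no expiry
    enabled_at: float = field(default_factory=time.time)
    module_scope: ModuleScope = ModuleScope.ANY
    modules: Set[str] = field(default_factory=set)

    def is_traced(self, module: str, now: Optional[float] = None) -> bool:
        if self.disable_tracing:
            return False
        now = time.time() if now is None else now
        if self.logging_validity_s and now - self.enabled_at > self.logging_validity_s:
            return False  # the limited-time logging window has expired
        if self.module_scope is ModuleScope.SELECTED:
            return module in self.modules
        if self.module_scope is ModuleScope.DISABLED:
            return module not in self.modules
        return True       # ModuleScope.ANY
```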

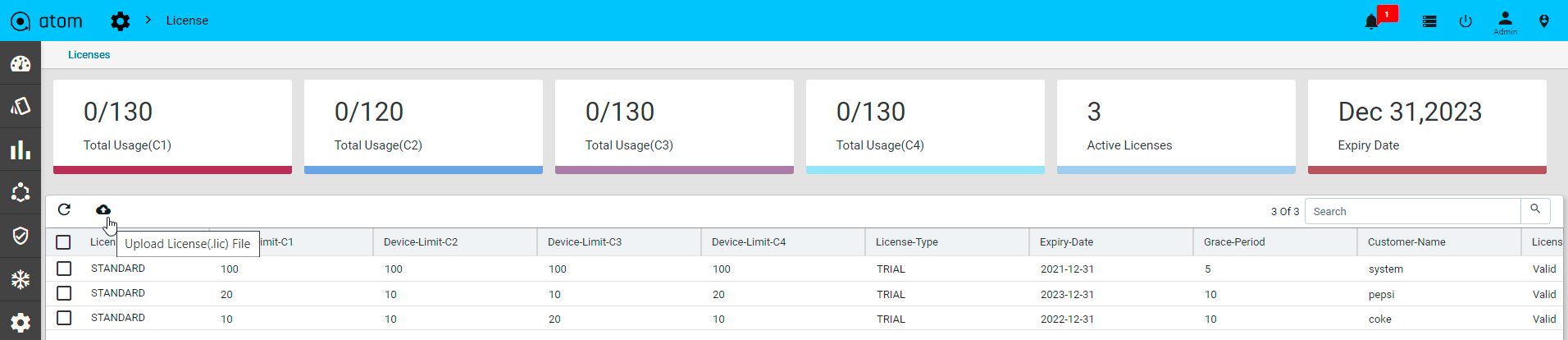

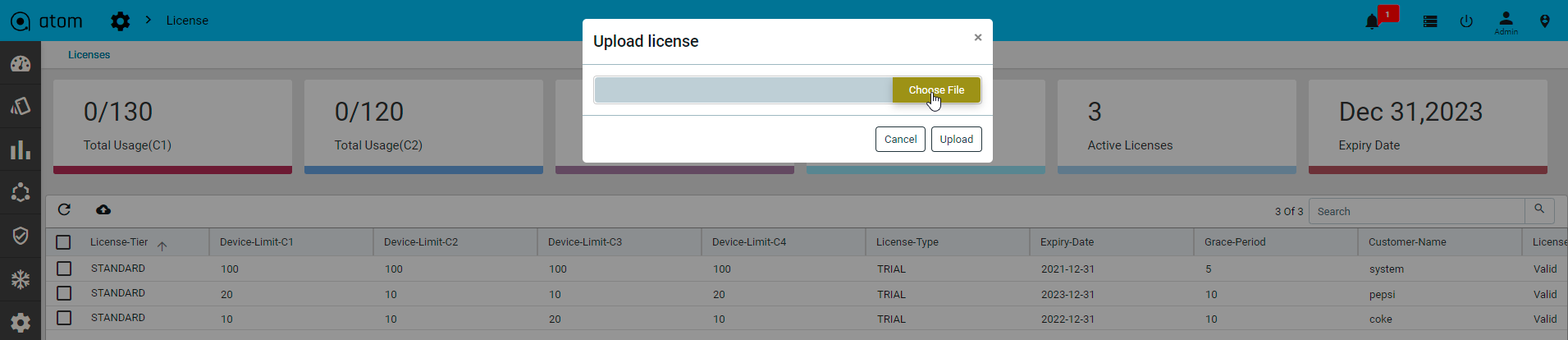

System

As an administrator, you may want to configure or modify the system settings or customize these settings after installing ATOM.

- “Rule Engine“

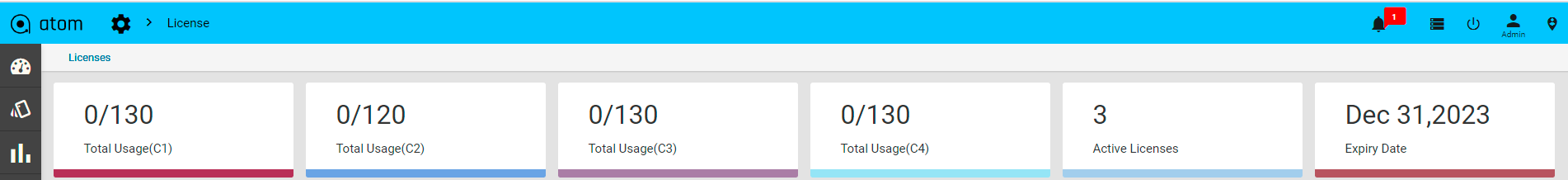

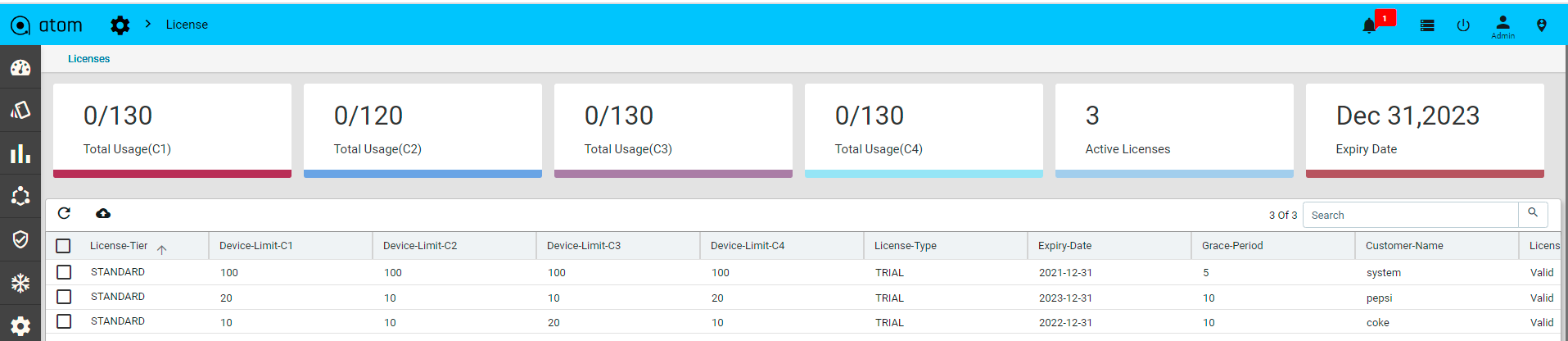

- “License“

- “General Settings“

- “Look and Feel“

- “Event Summary“

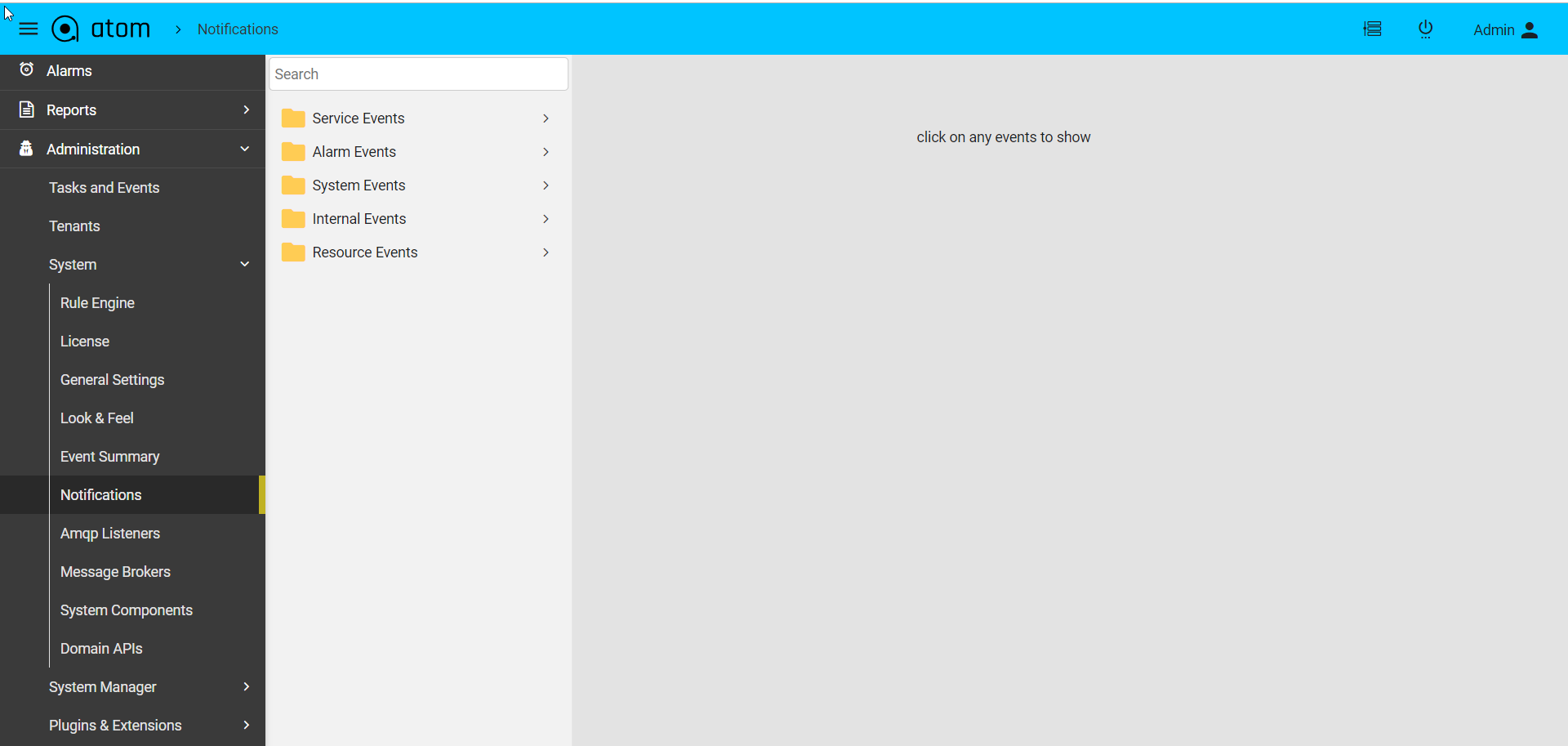

- “Notifications“

- “Message Brokers“

Rule Engine

The rule engine executes user-defined business logic to change the state of the resources managed by ATOM. The logic describes the sequence of operations performed on data in a database to carry out the rule. You can create rules in the rule engine so that ATOM handles changes in devices in a maintainable, reusable and extensible way. The rule engine supports rules, conditions, priority (based on index) and other functions. Rules can serve various functions, some of which are listed below:

- Resources Validation

- Triggering different actions based on some user defined conditions

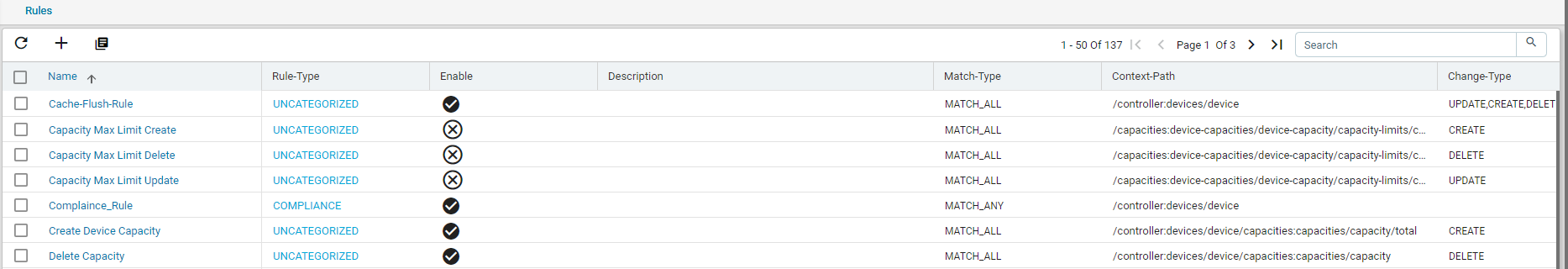

All the system-defined rules available in ATOM are shown in the following snippet:

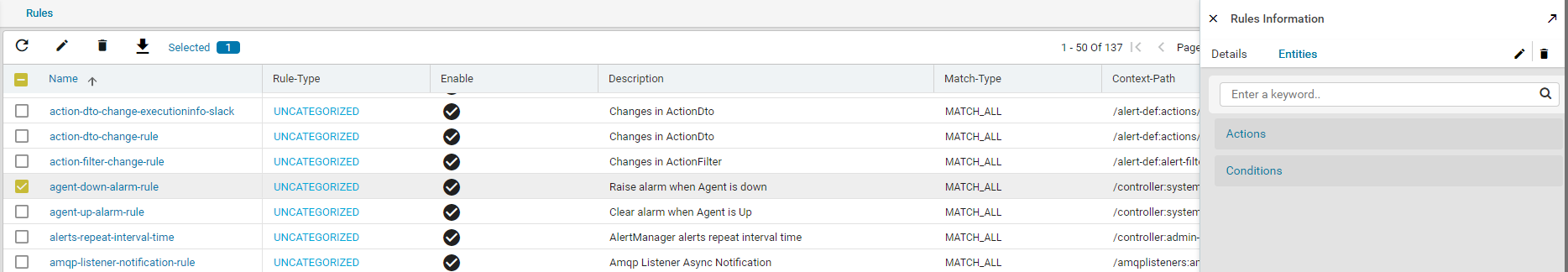

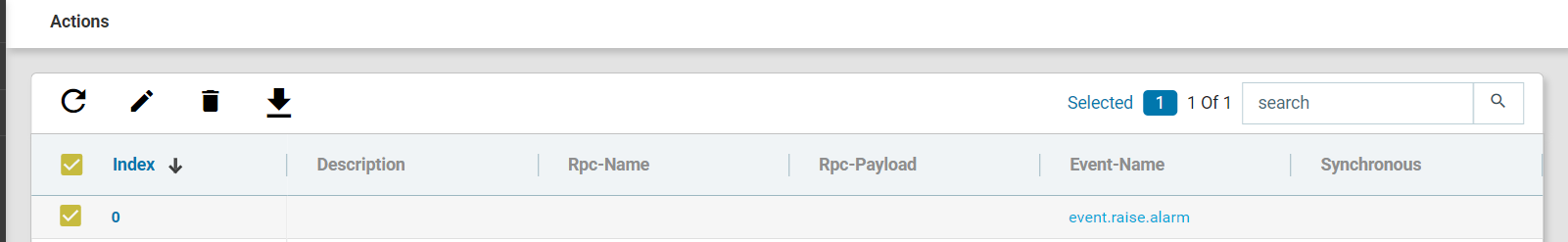

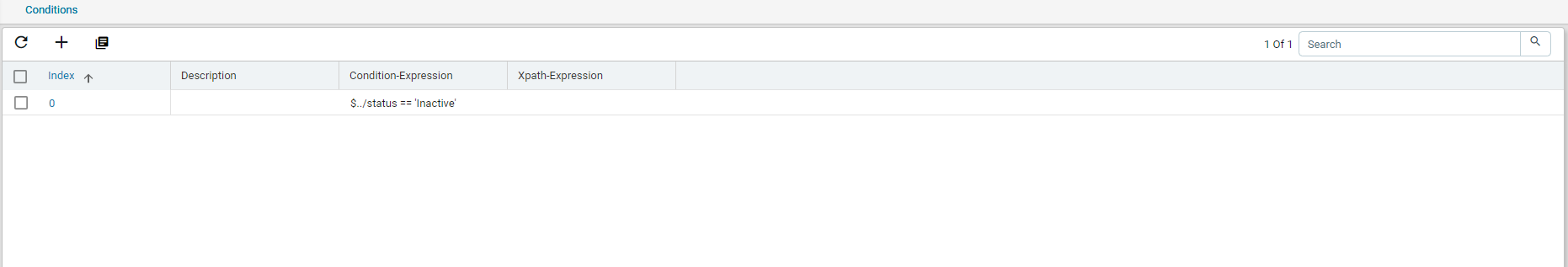

Click any rule and then the entities of interest to view the Actions and Conditions associated with that rule.

For example, double-click the ‘agent-down-alarm’ rule.

This rule takes effect when any ATOM Agent goes OFFLINE and its status is set to INACTIVE.

In addition to the rules that are available by default, you can create a custom rule as per your requirement as described in the following section.

Rule

Rules are conditional statements that govern the conduct of business processes. A rule consists of a condition and a set of actions. If the condition evaluates to true, the rule engine initiates one or more actions.

A rule is composed of three parts:

- Condition – The condition part is a logical test that, when it evaluates to true, causes the action to be carried out.

- Action – The action part consists of one or more actions to be performed when the condition is met.

- Event – The event part specifies the signal that triggers the invocation of the rule.
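The event, condition and action parts fit together as in the following Python sketch of an event-condition-action loop, using a rule modelled on `agent-down-alarm`. `Rule`, `dispatch`, the event type string and the event fields are hypothetical, not the ATOM rule-engine API. Evaluating rules in list order stands in for the index-based priority mentioned earlier.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Event = Dict[str, Any]


@dataclass
class Rule:
    """Hypothetical event-condition-action rule (not the ATOM rule-engine API)."""
    name: str
    event_type: str                         # the signal that triggers evaluation
    condition: Callable[[Event], bool]      # logical test on the event
    actions: List[Callable[[Event], None]]  # run only when the condition is true
    enabled: bool = True


def dispatch(event: Event, rules: List[Rule]) -> List[str]:
    """Evaluate enabled rules for this event in list order; return the names of rules that fired."""
    fired = []
    for rule in rules:
        if not rule.enabled or rule.event_type != event.get("type"):
            continue  # the rule is not subscribed to this signal
        if rule.condition(event):
            for action in rule.actions:
                action(event)
            fired.append(rule.name)
    return fired


alarms: List[str] = []
agent_down = Rule(
    name="agent-down-alarm",
    event_type="agent-status-change",
    condition=lambda e: e.get("status") == "INACTIVE",
    actions=[lambda e: alarms.append(e["agent"])],
)
```

Dispatching an `agent-status-change` event whose status is INACTIVE fires the rule and records an alarm; an ACTIVE status leaves the alarm list unchanged.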

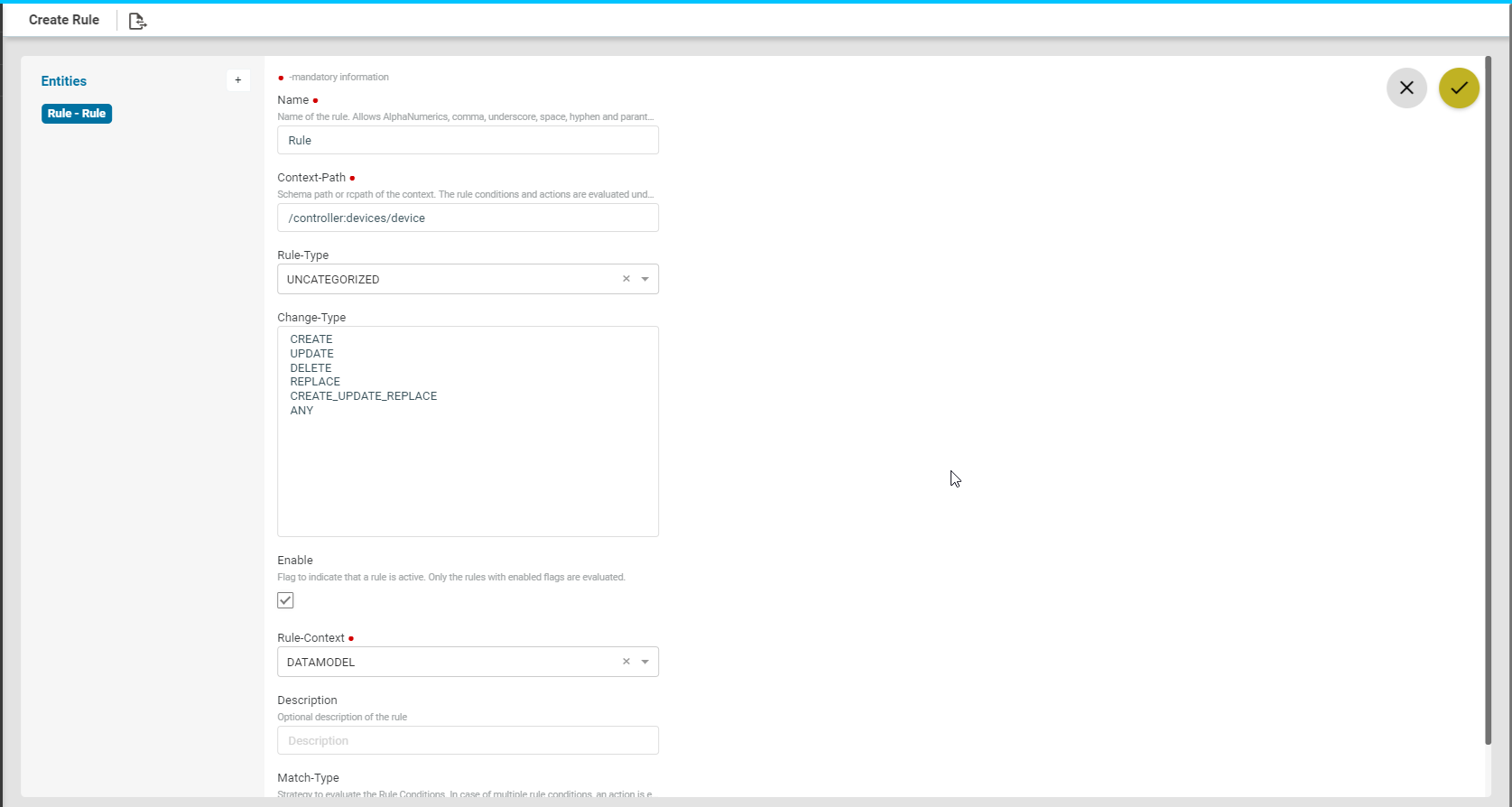

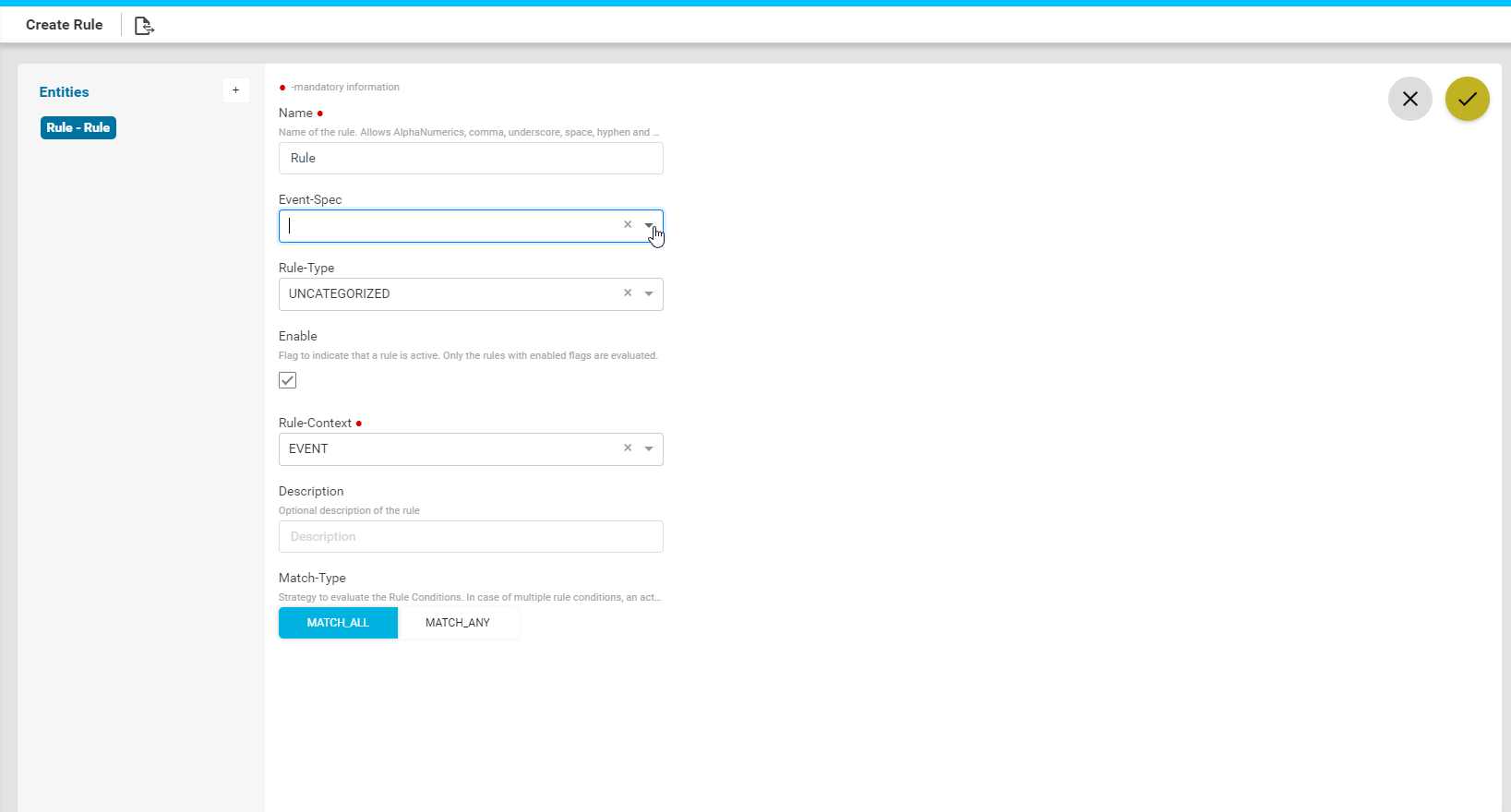

Create Rule

- Navigate to Administration > System > Rule Engine > Rules

- Click Add Rule and fill in the following fields:

- Name: Enter a string that will be used to identify the rule.

- Rule Type: Select the category that the rule should belong to. Two categories are currently available:

  - UNCATEGORIZED

  - COMPLIANCE

- Enable: Select this option if the rule should be enabled.

- Rule Context: Select the context in which the rule has to be triggered:

  - DATAMODEL: Select this option if the rule should be triggered on an ATOM-managed entity.

    - Context Path: For example, for this rule to apply to devices, enter the context path as /controller:devices

  - EVENT: Select this option if the rule should be triggered by an event generated in ATOM.

    - Event Spec: Select from the available event specs in ATOM.

- Description: Enter descriptive text for the rule.

- Change type The rule engine will check for the conditions defined in the rule when one of the following scenarios listed below:

Change -Type Description

- CREATE A component is created in ATOM

- UPDATE A component is updated in ATOM

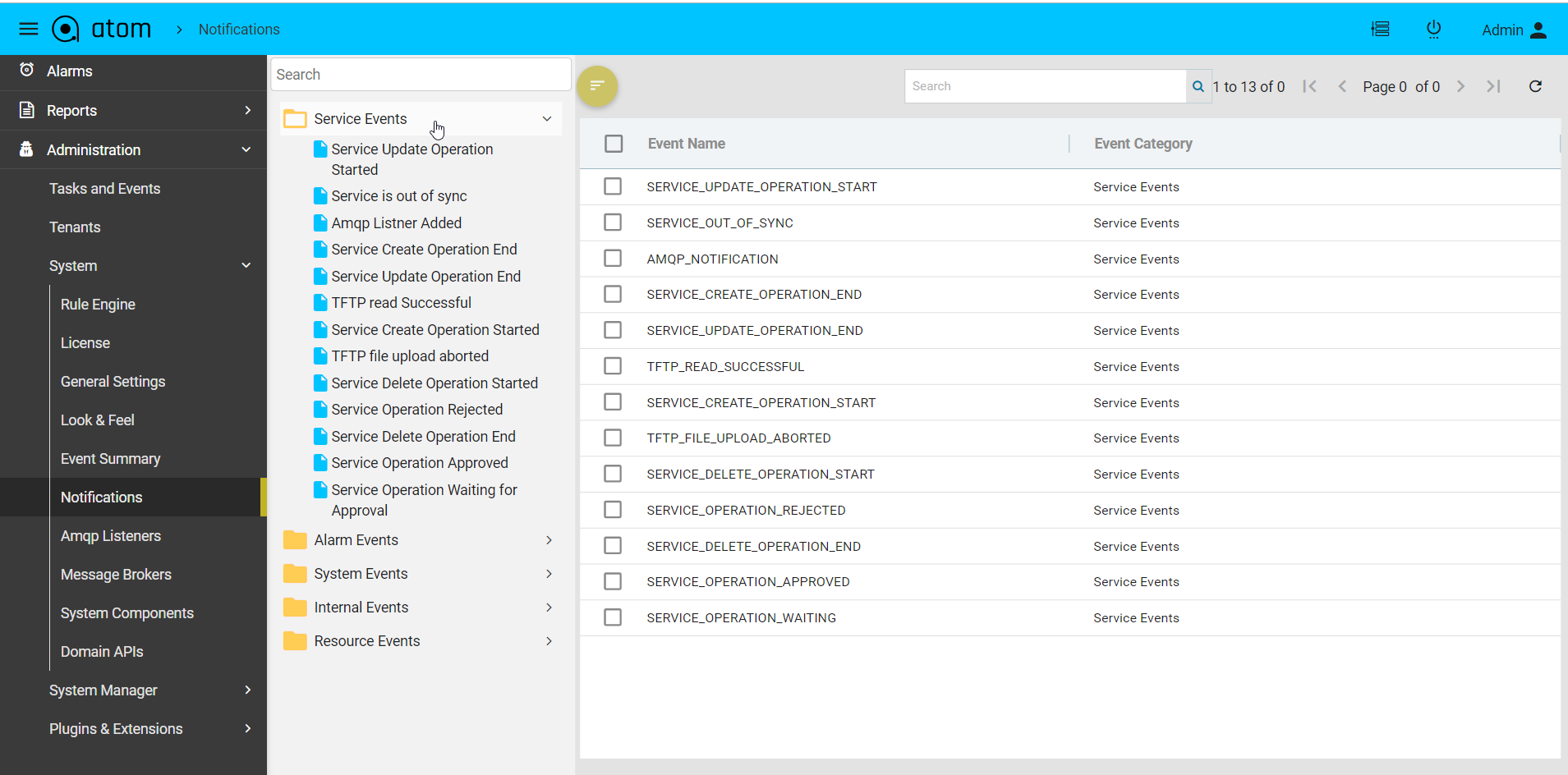

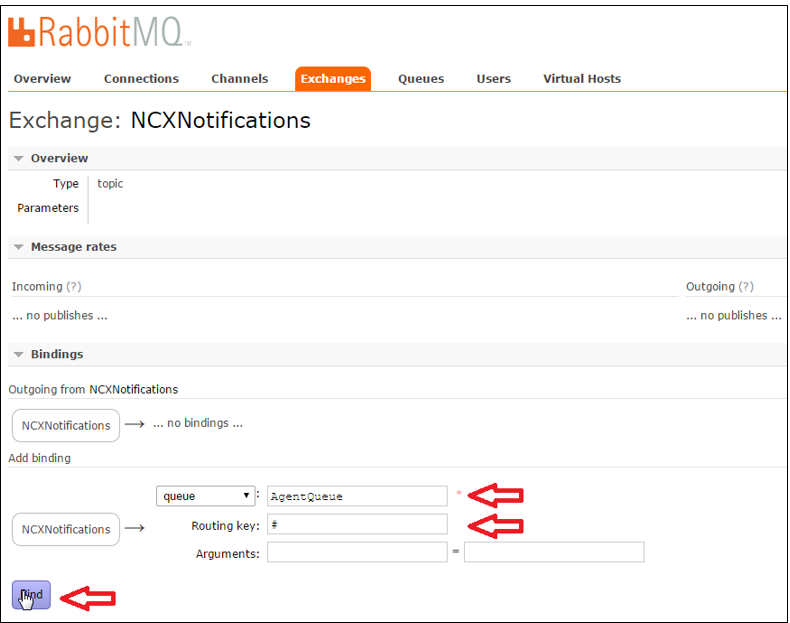

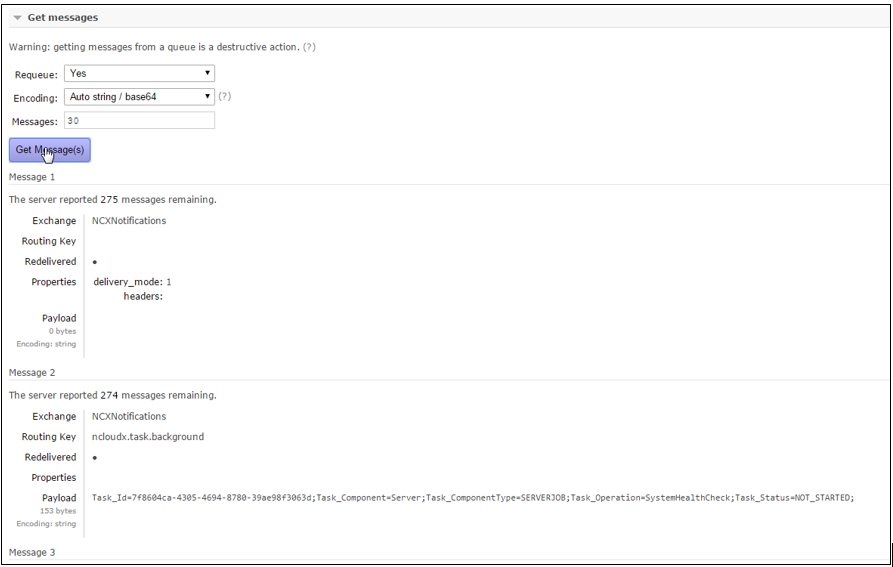

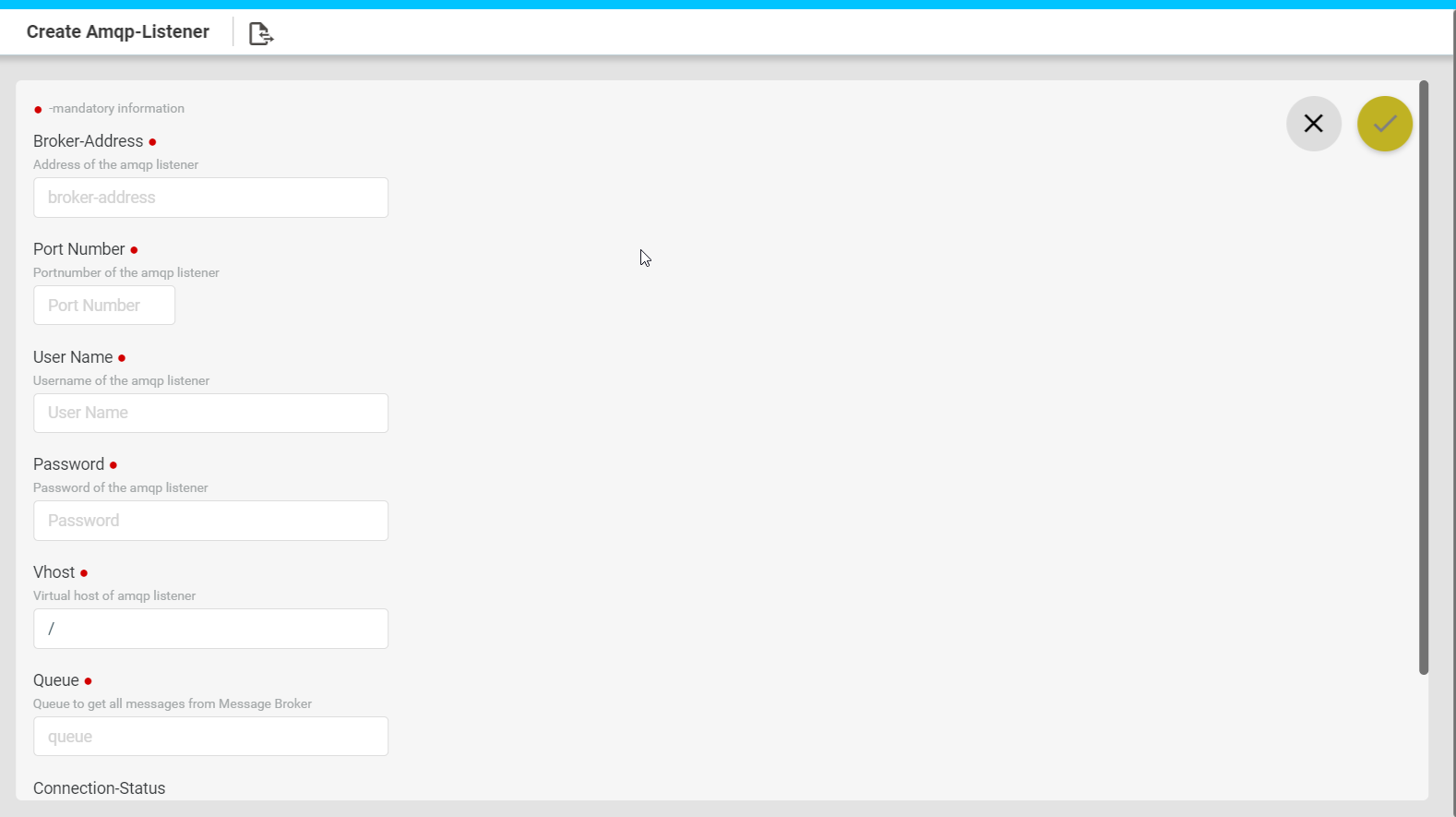

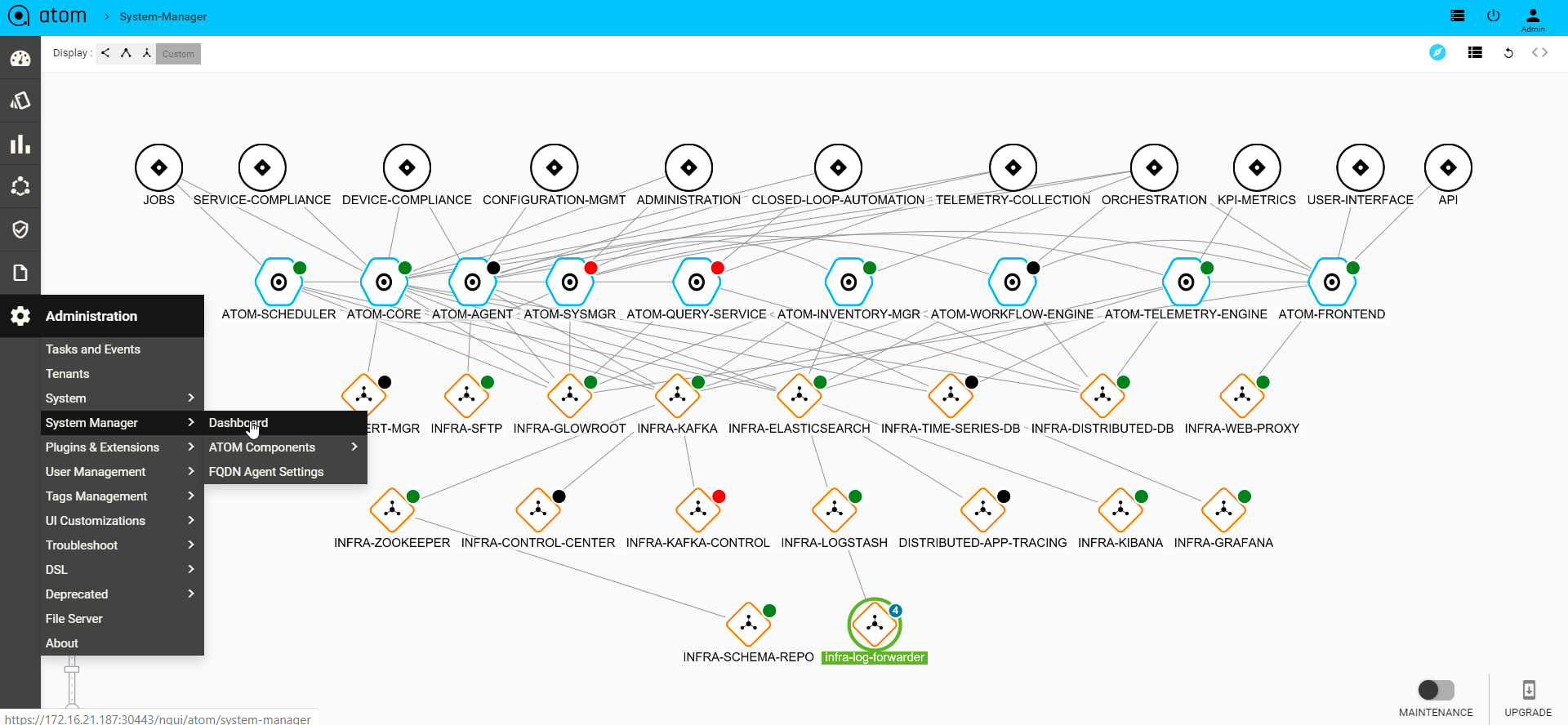

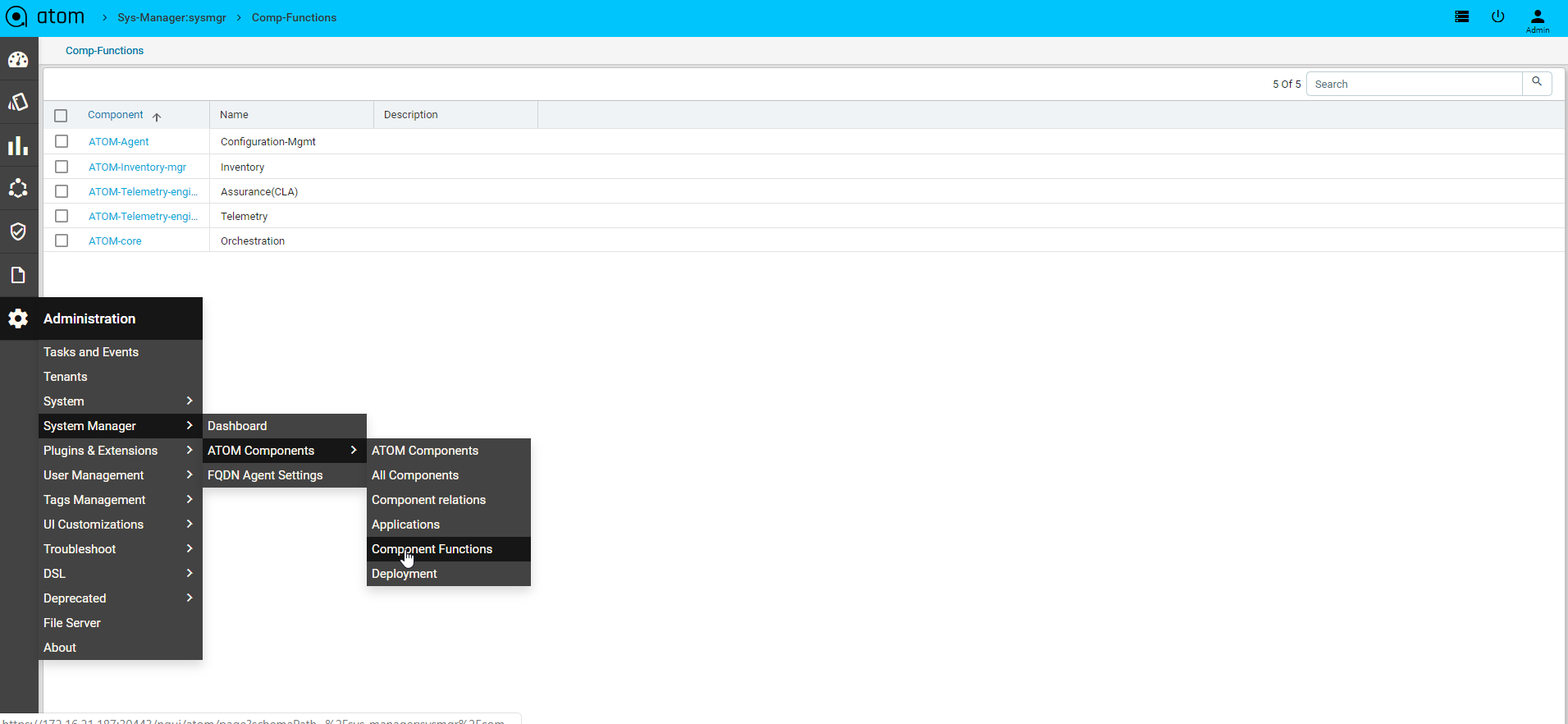

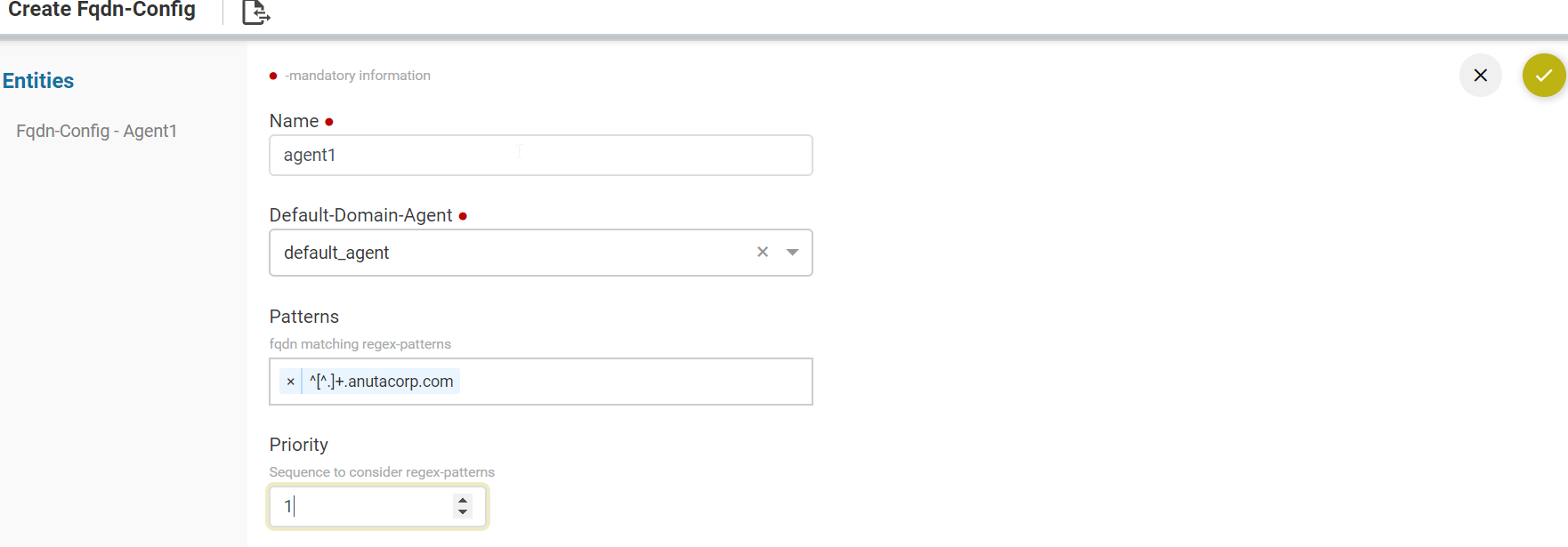

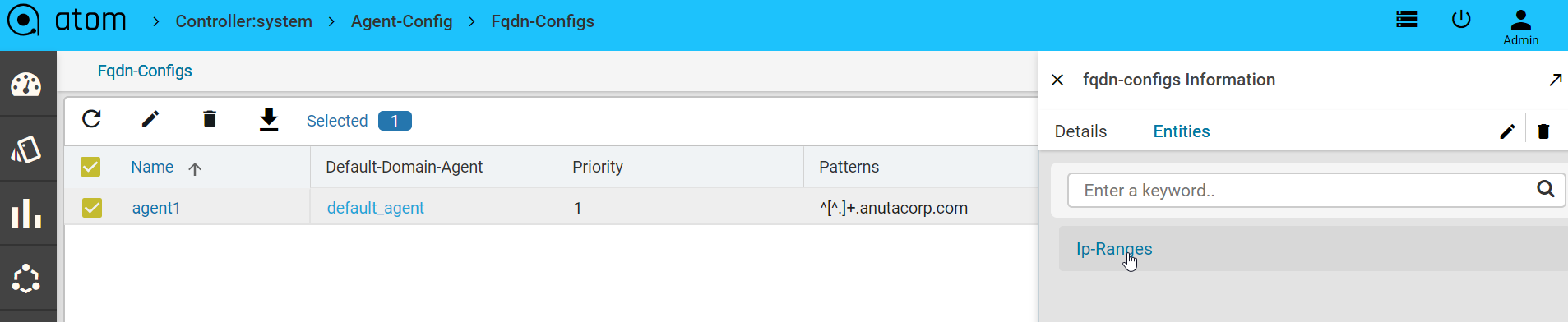

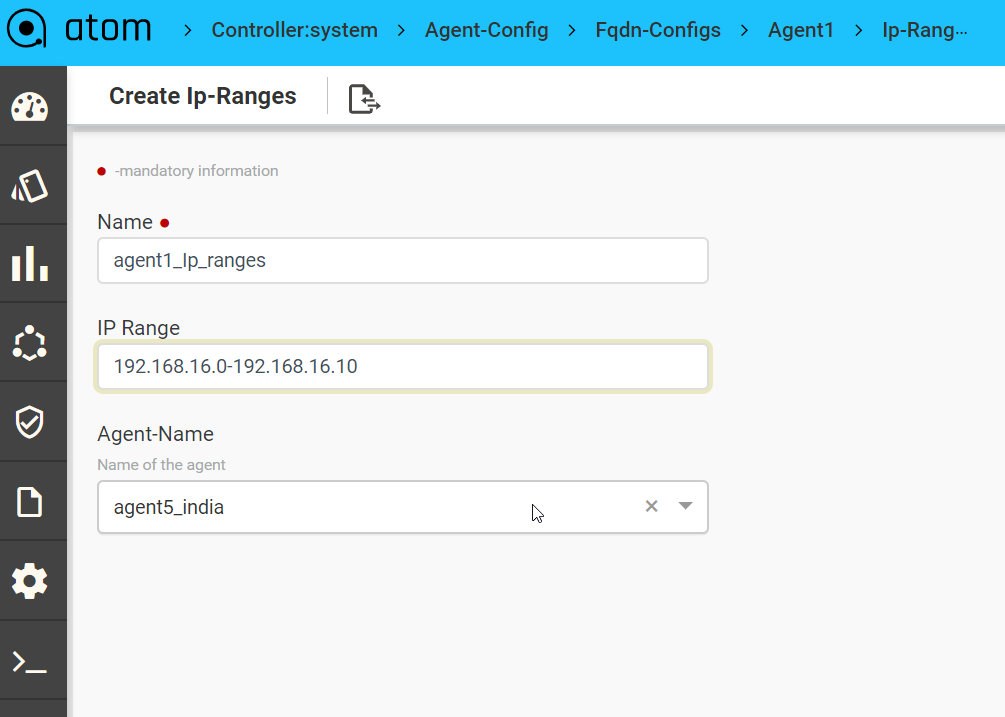

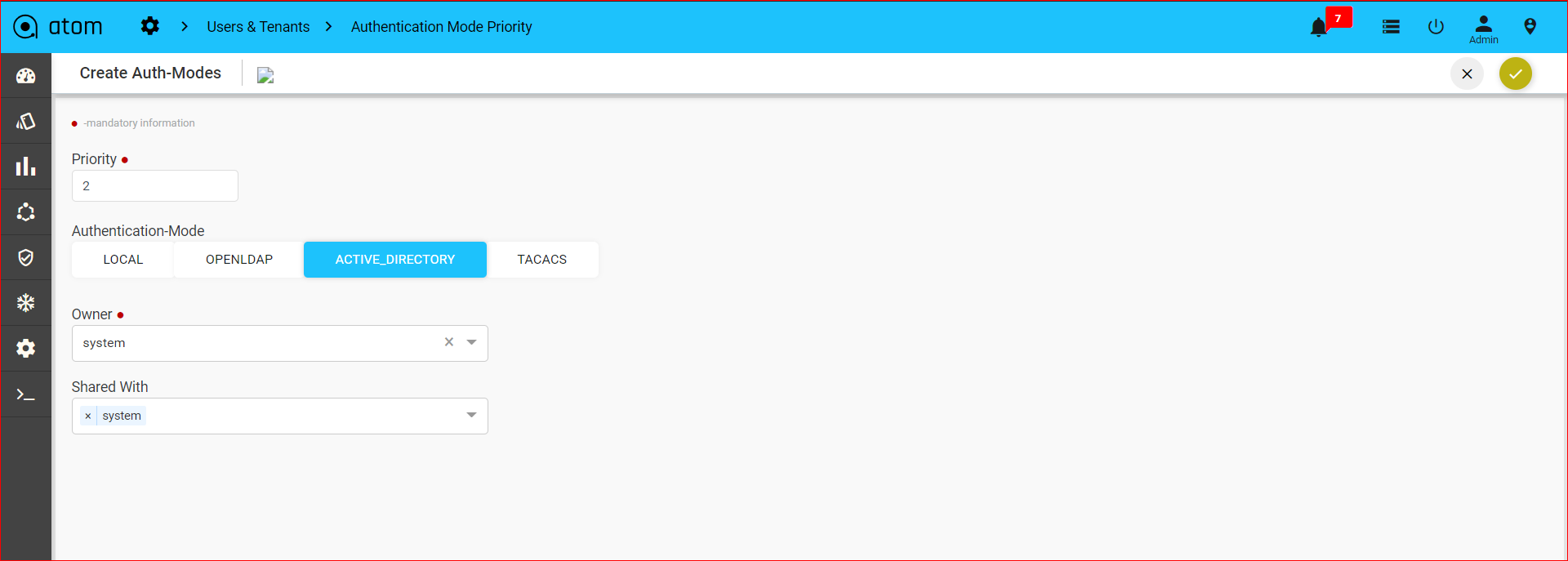

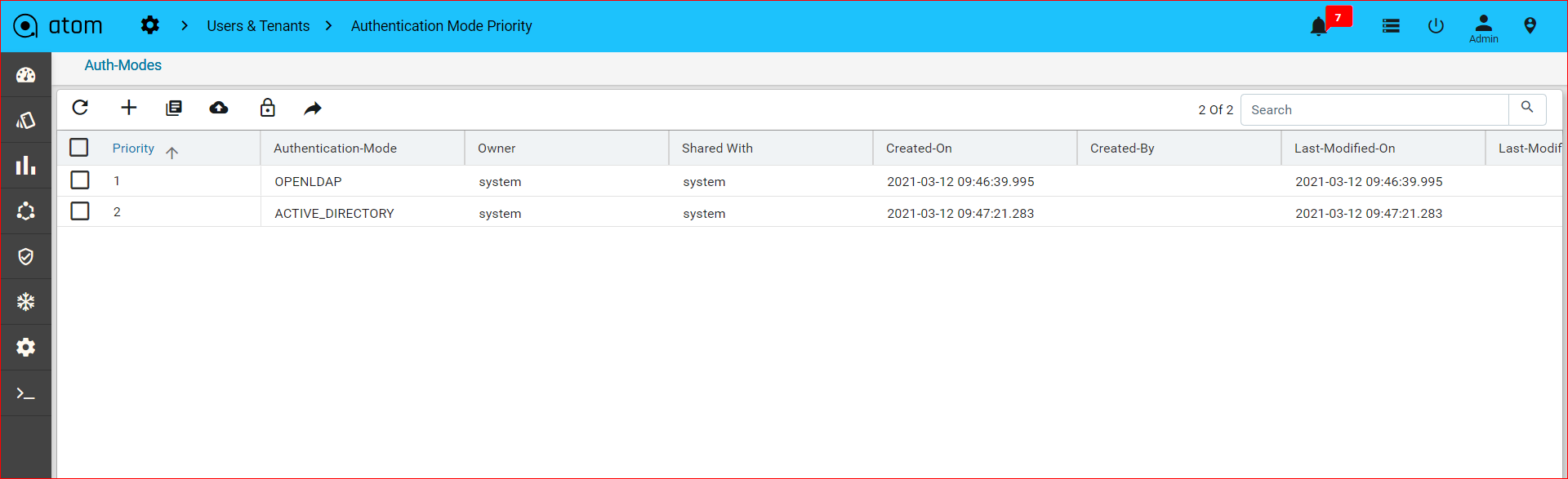

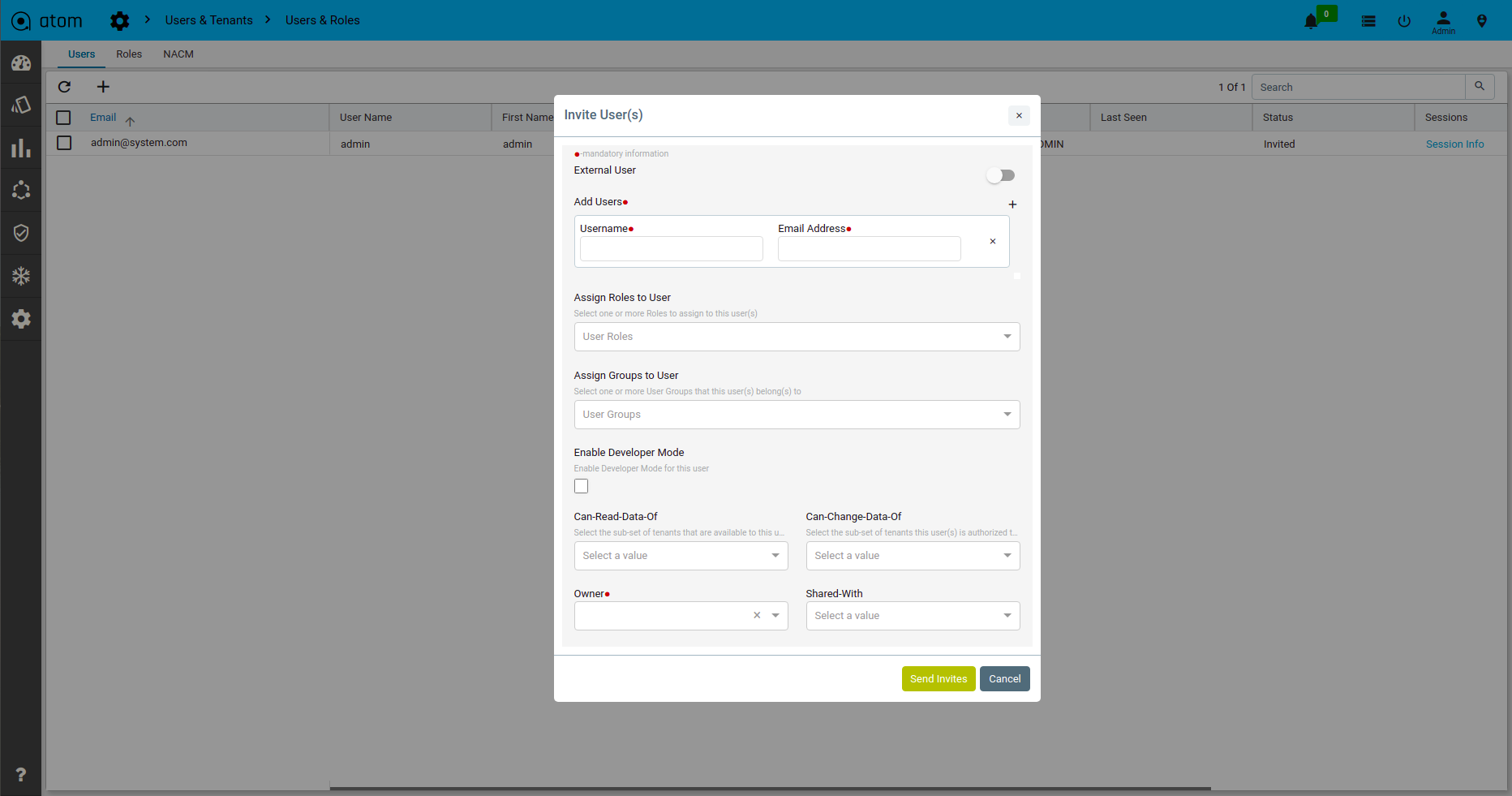

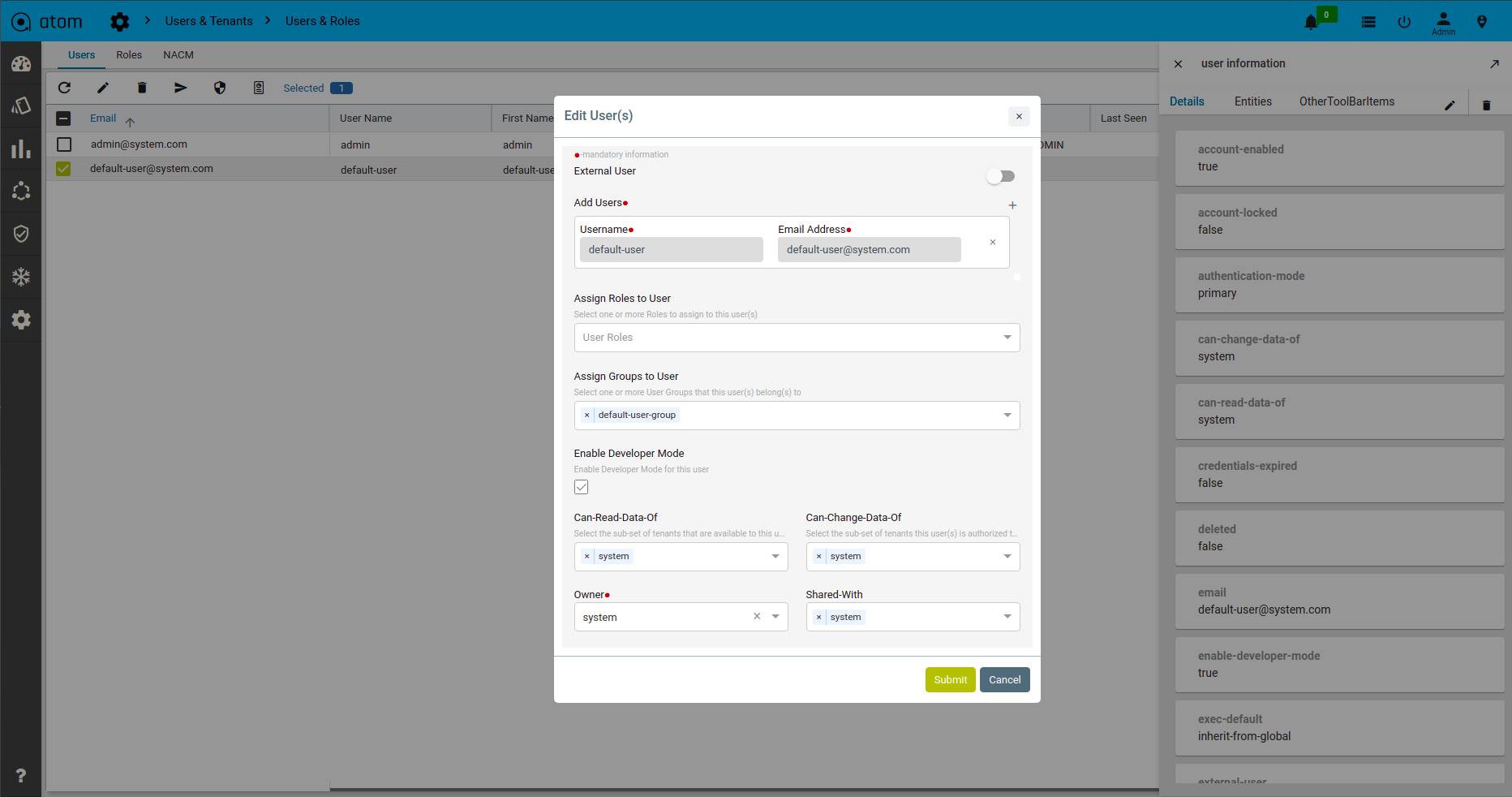

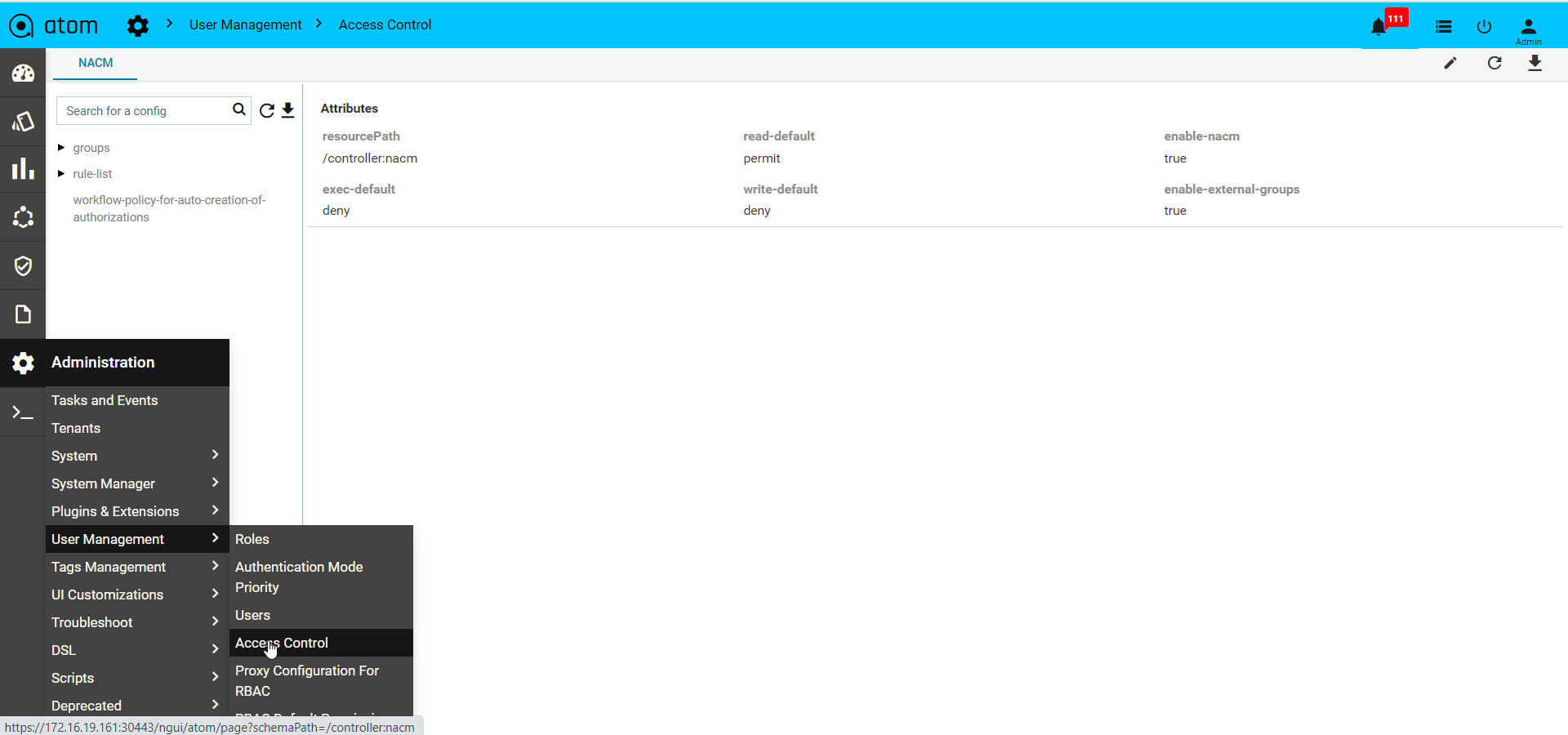

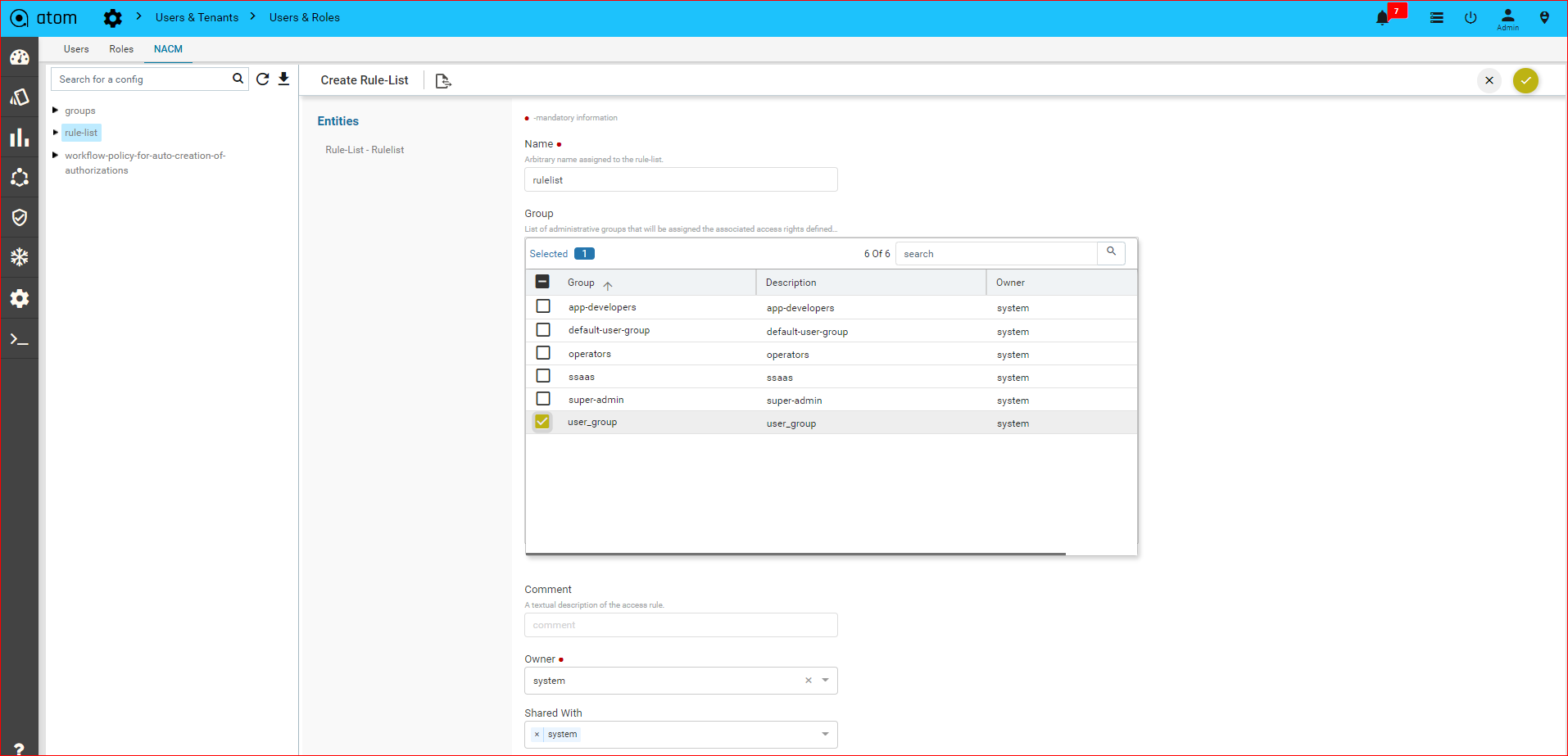

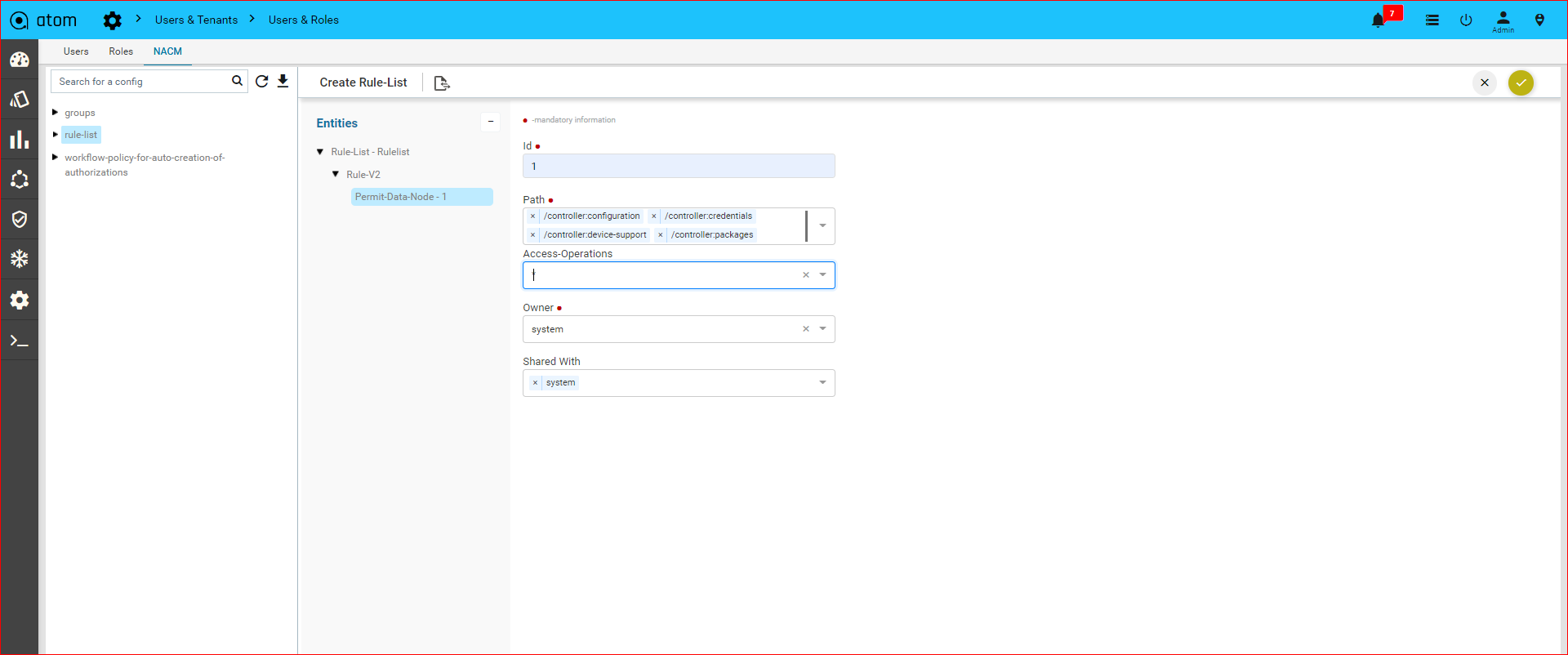

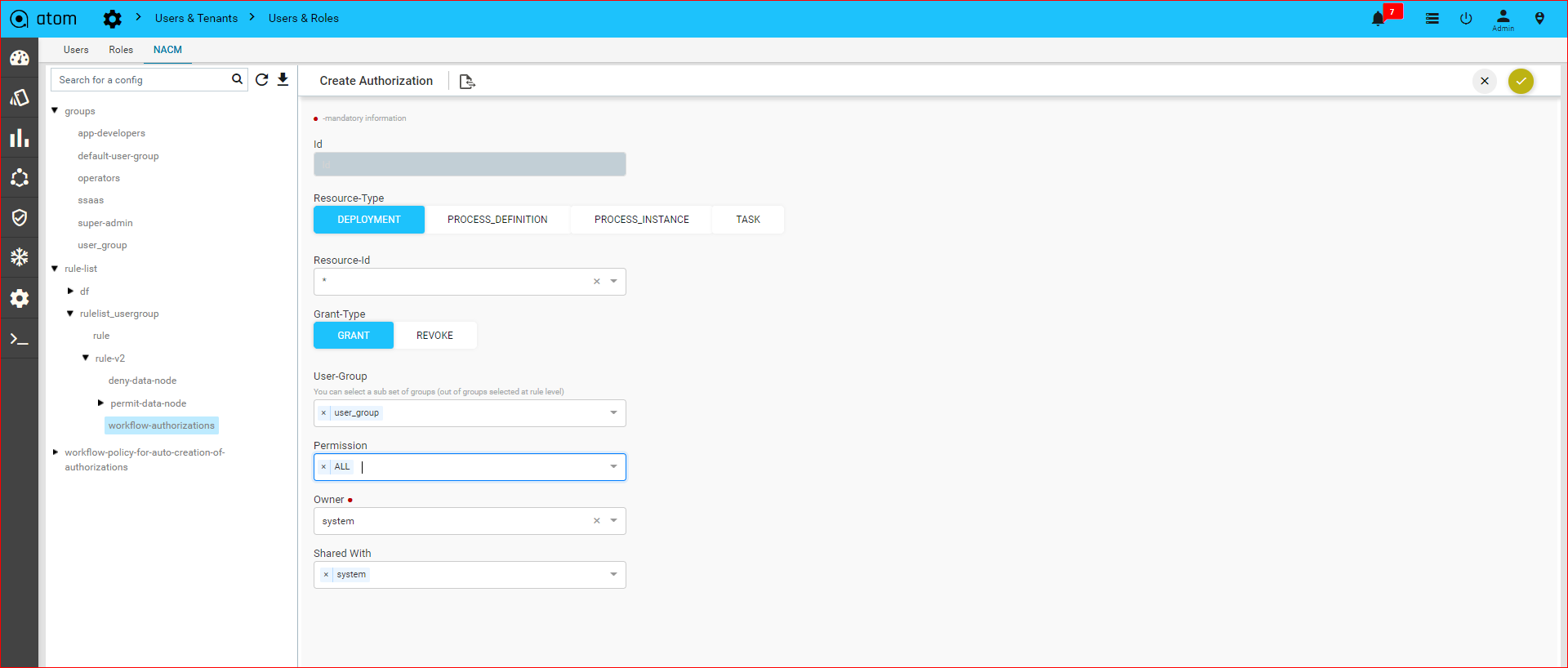

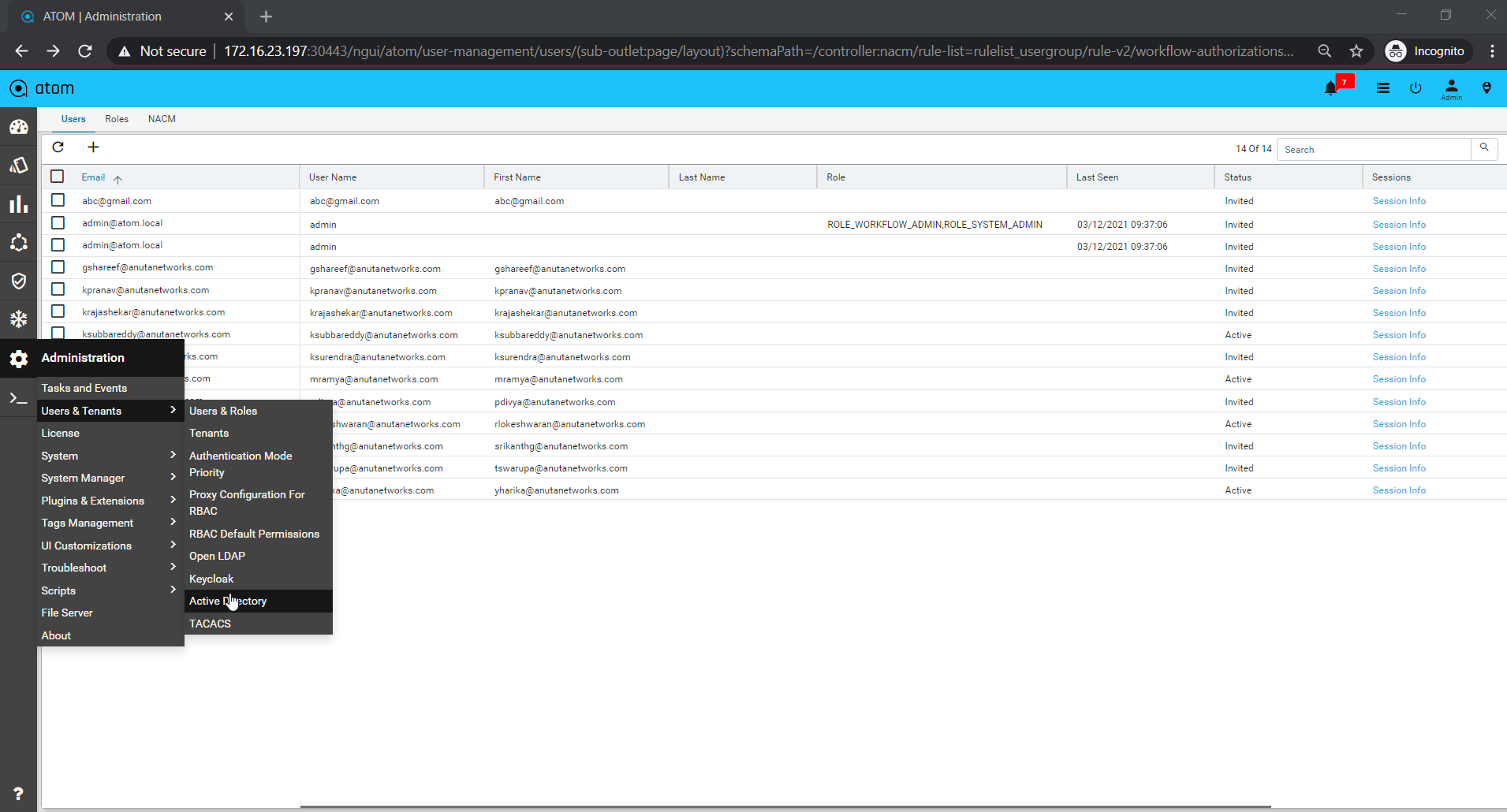

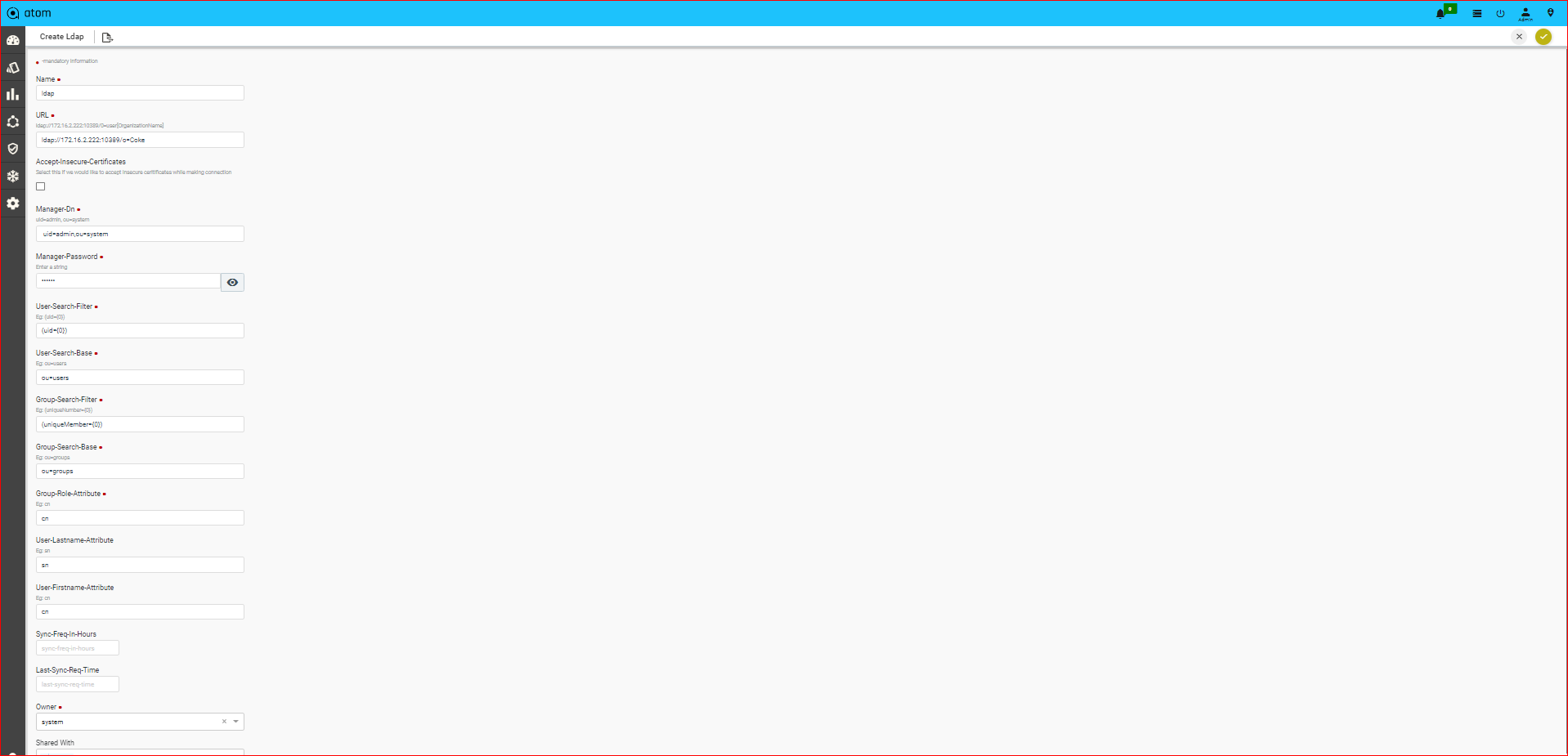

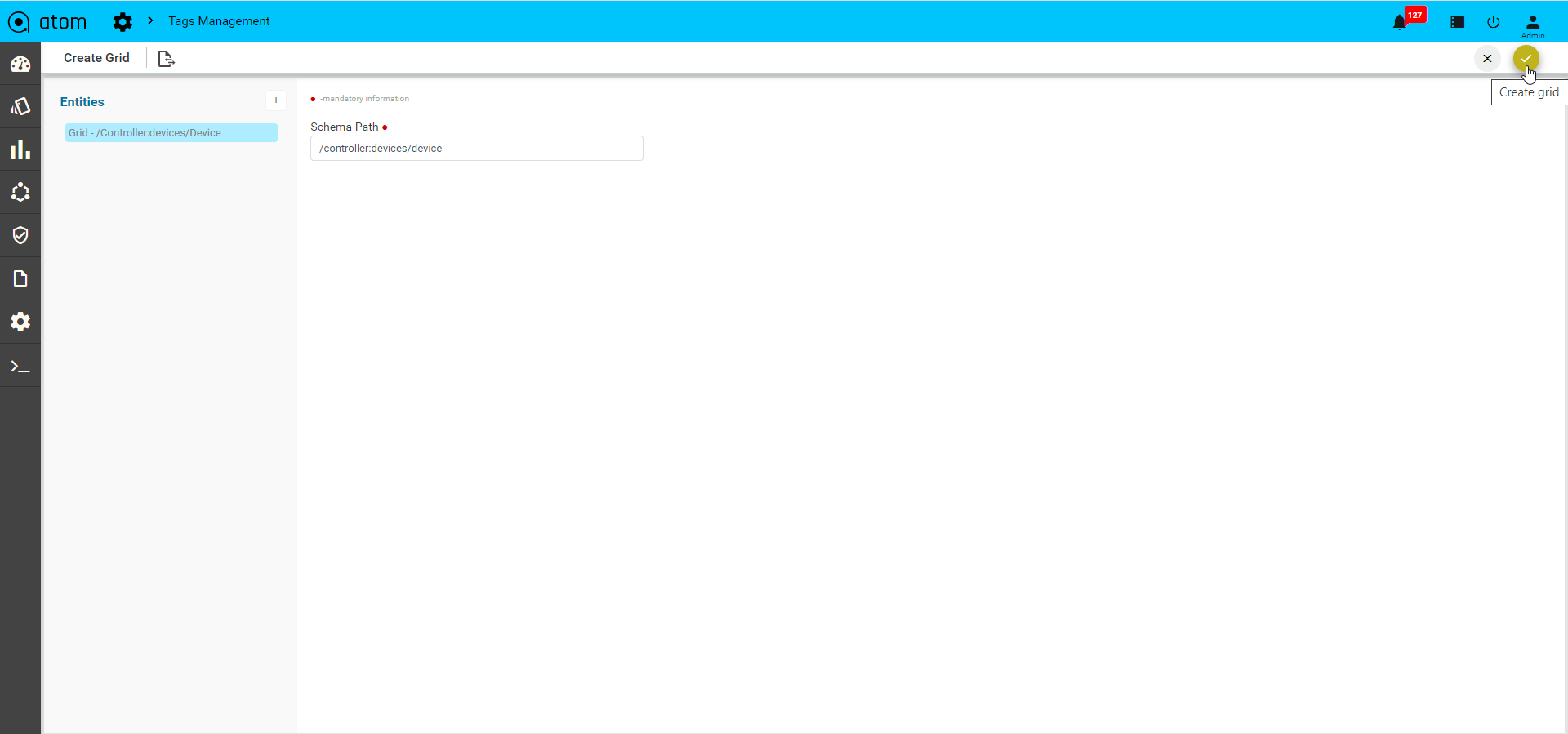

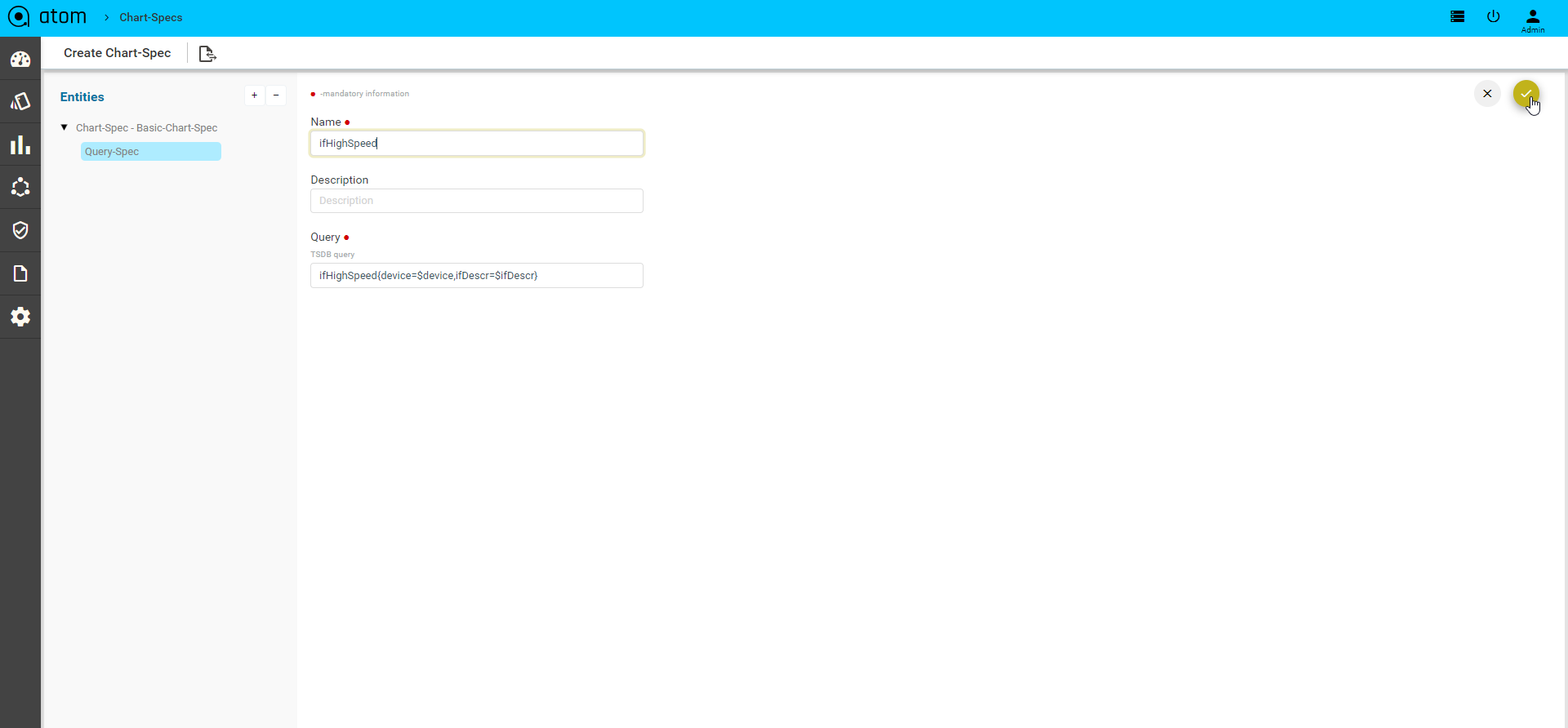

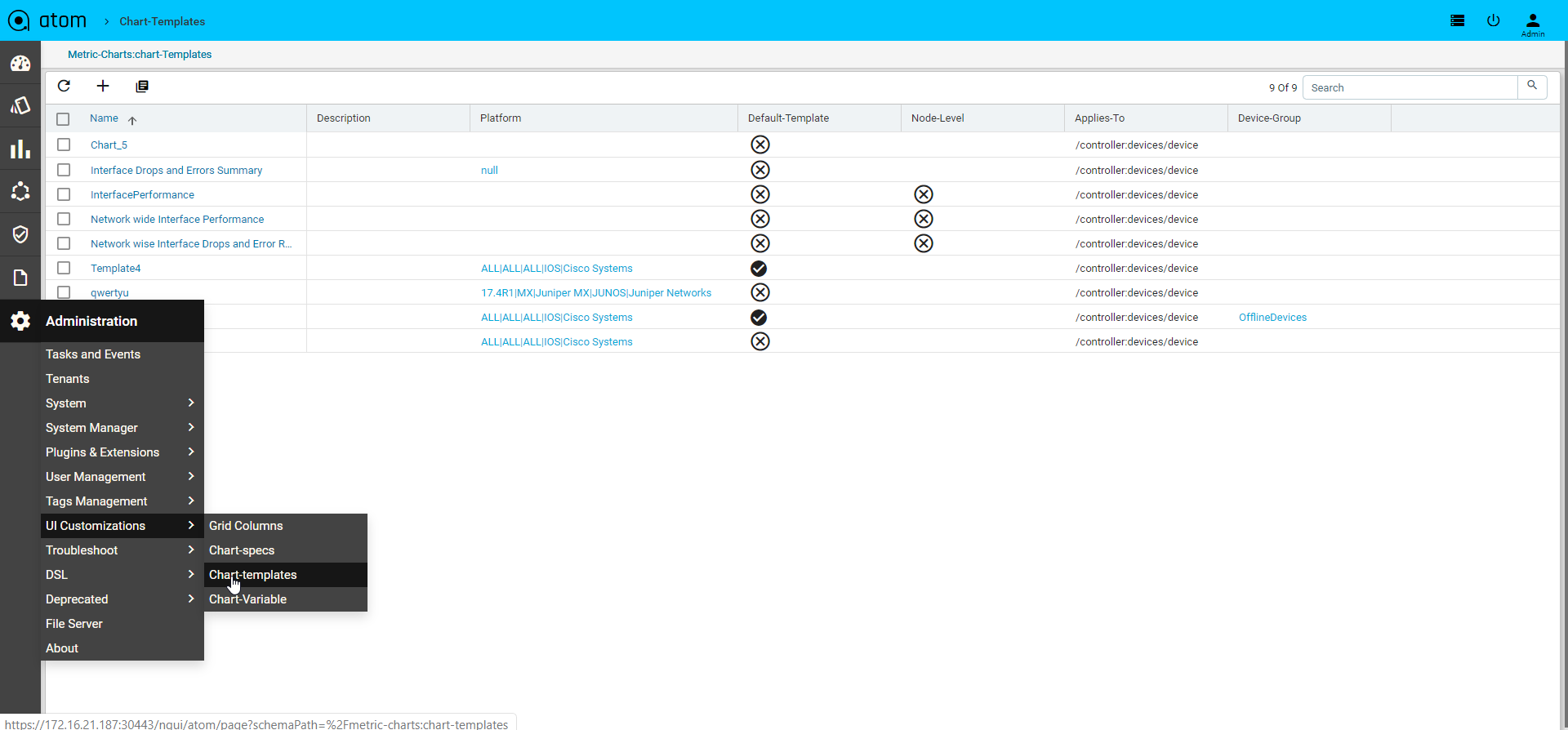

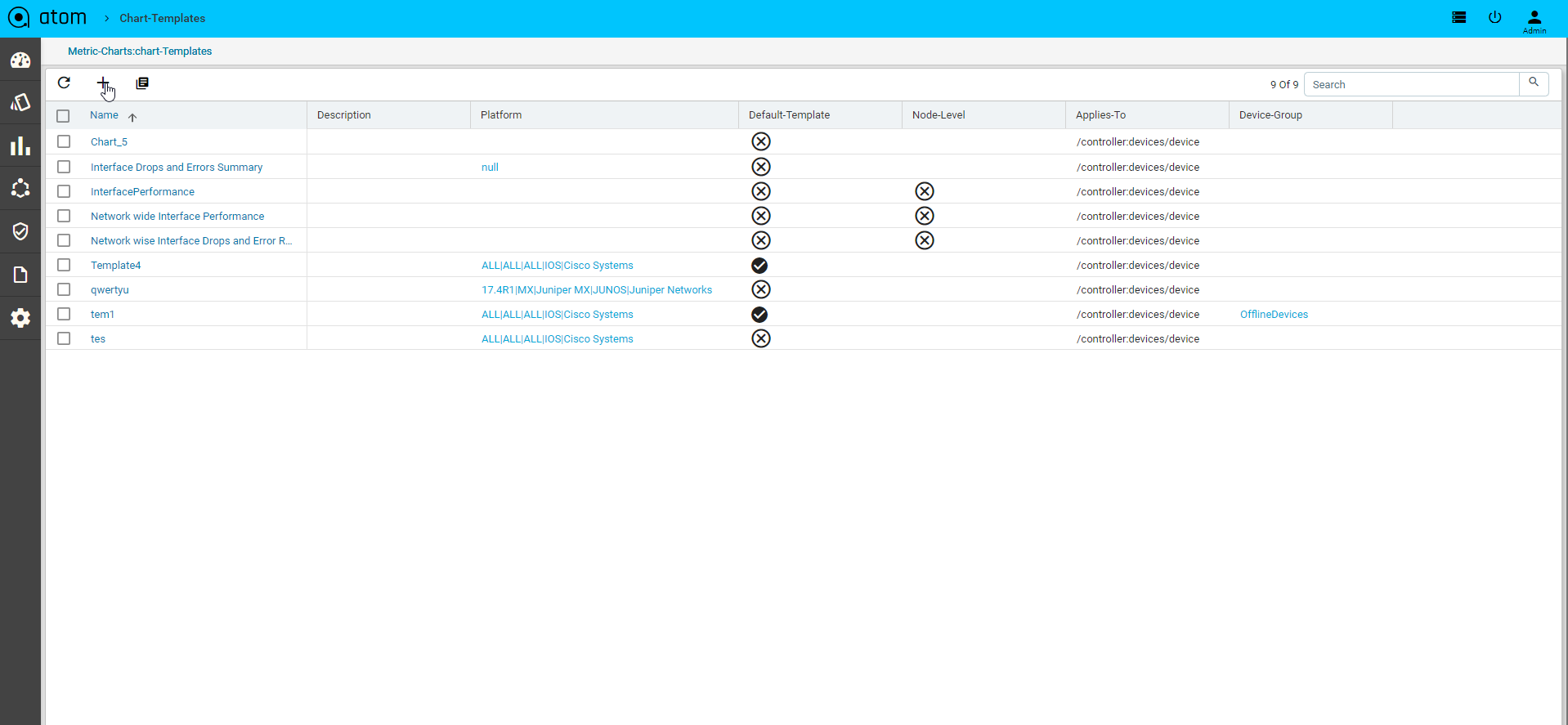

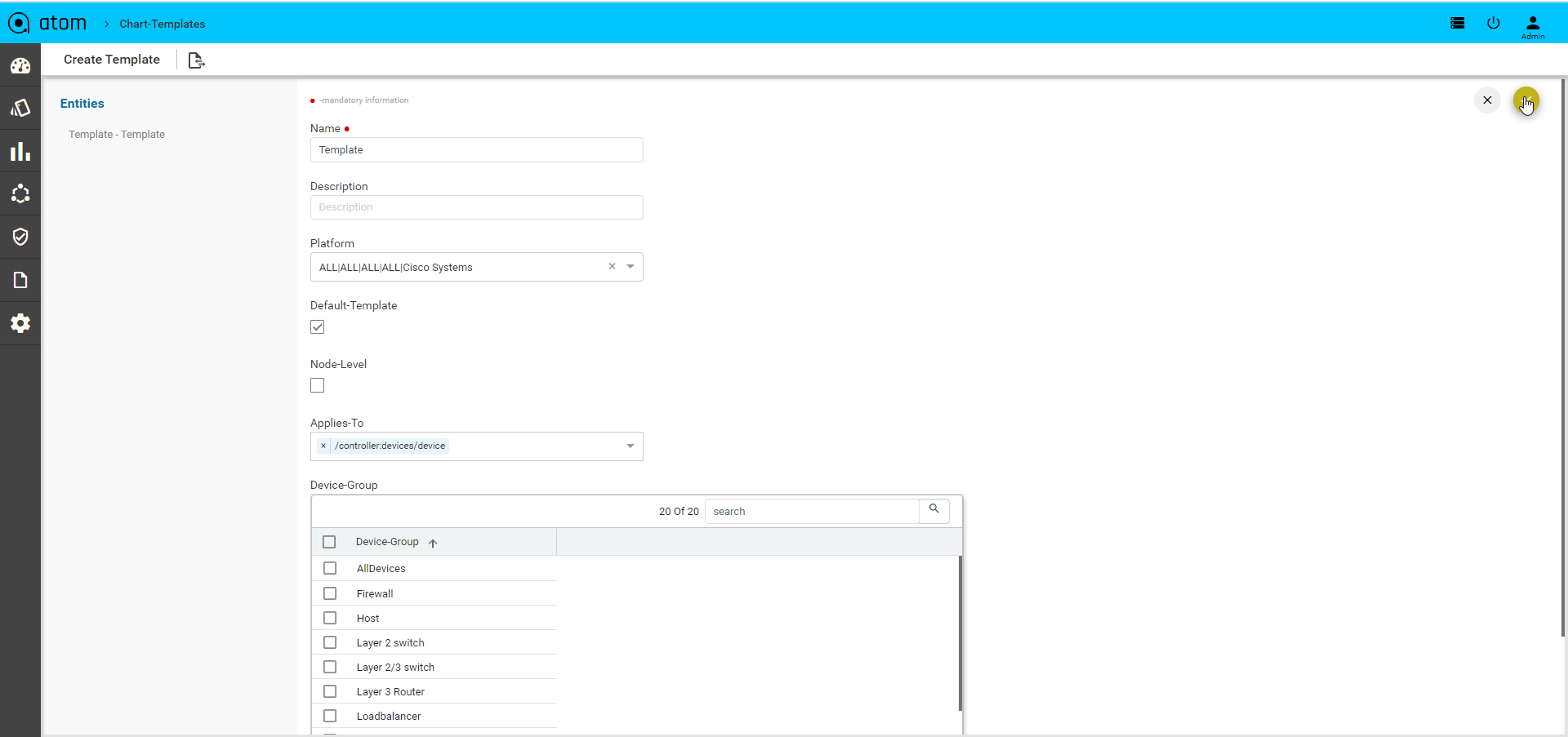

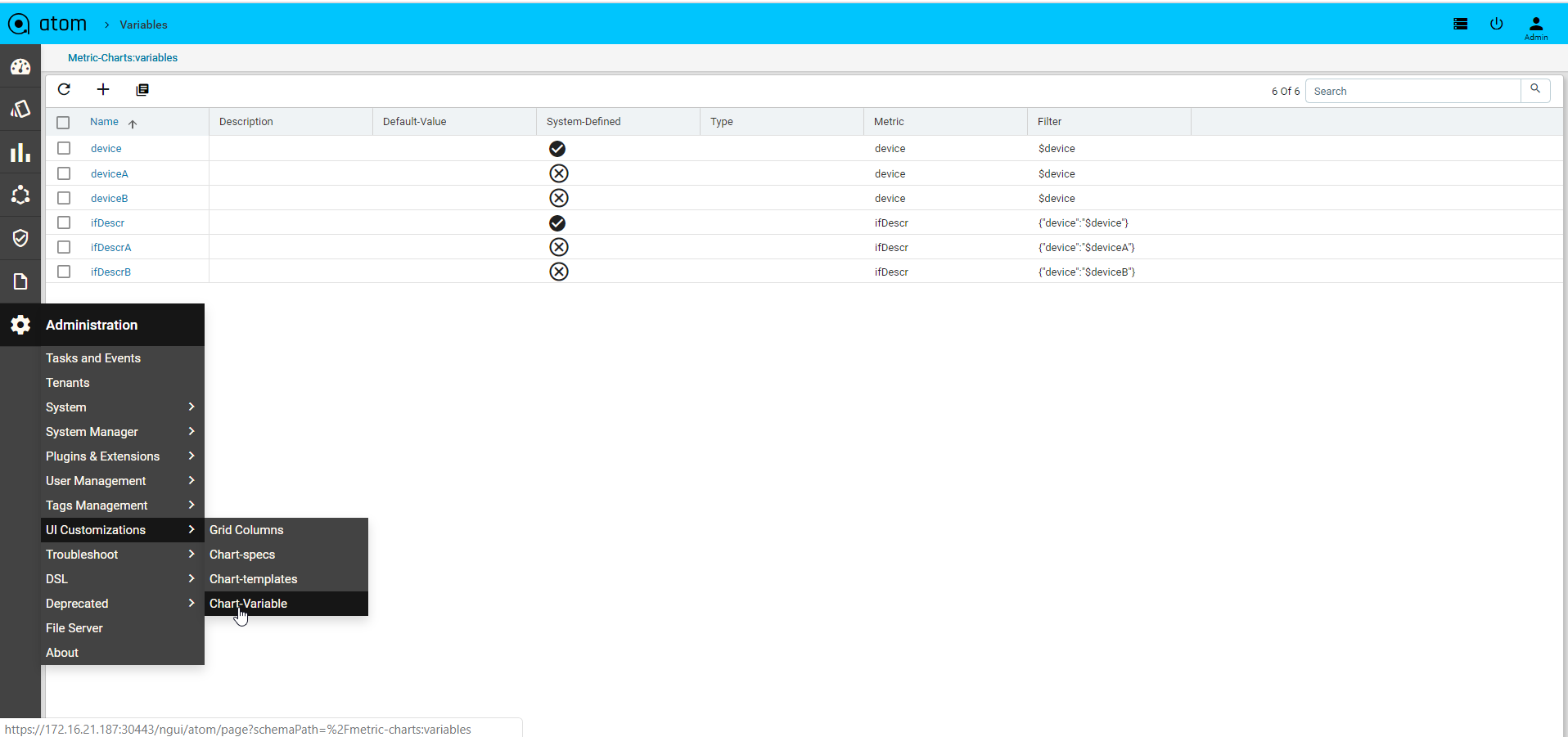

- DELETE A component is deleted from ATOM
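For DATAMODEL rules, the Rule Context path, the Change Type and the Enable flag together decide which rules the engine considers for a given change. A minimal Python sketch of that filtering step follows. `DataModelRule`, `matching_rules` and the example instance path are hypothetical, and the assumption that a context path also covers every path beneath it is not confirmed by this guide.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, List


class ChangeType(Enum):
    CREATE = "CREATE"
    UPDATE = "UPDATE"
    DELETE = "DELETE"


class RuleType(Enum):
    UNCATEGORIZED = "UNCATEGORIZED"
    COMPLIANCE = "COMPLIANCE"


@dataclass
class DataModelRule:
    """Hypothetical form values for a DATAMODEL-context rule."""
    name: str
    context_path: str                  # e.g. "/controller:devices"
    change_types: FrozenSet[ChangeType]
    rule_type: RuleType = RuleType.UNCATEGORIZED
    enable: bool = True


def matching_rules(changed_path: str, change: ChangeType,
                   rules: List[DataModelRule]) -> List[str]:
    """Names of enabled rules whose context covers the changed path and change type."""
    hits = []
    for r in rules:
        # Assumption: a context path matches itself and every path beneath it.
        in_context = changed_path == r.context_path or changed_path.startswith(r.context_path + "/")
        if r.enable and in_context and change in r.change_types:
            hits.append(r.name)
    return hits
```

Only the rules returned here would then have their conditions evaluated and, if those conditions hold, their actions run.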